Ethics & Policy

What I’m Updating in My AI Ethics Class for 2025

New technology developments came in fast in 2024, but which of them have ethical or values implications that will matter long-term?

I’ve been working on updates for my 2025 class on Values and Ethics in Artificial Intelligence. This course is part of the Johns Hopkins Education for Professionals program, part of the Master’s degree in Artificial Intelligence.

Overview of my changes:

I’m doing major updates on three topics based on 2024 developments, and a number of small updates, integrating other news and filling gaps in the course.

Topic 1: LLM interpretability.

Anthropic’s work in interpretability was a breakthrough in explainable AI (XAI). We will be discussing how this method can be used in practice, as well as implications for how we think about AI understanding.

Topic 2: Human-Centered AI.

Rapid AI development adds urgency to the question: How do we design AI to empower rather than replace human beings? I have added content throughout my course on this, including two new design exercises.

Topic 3: AI Law and Governance.

Major developments were the EU’s AI Act and the raft of California legislation, including laws targeting deepfakes, misinformation, intellectual property, medical communications and minors’ use of ‘addictive’ social media, among others. For class I developed some heuristics for evaluating AI legislation, such as studying definitions, and I explain how legislation is only one piece of the solution to the AI governance puzzle.

Miscellaneous new material:

I am integrating material from news stories into existing topics on copyright, risk, privacy, safety and social media/ smartphone harms.

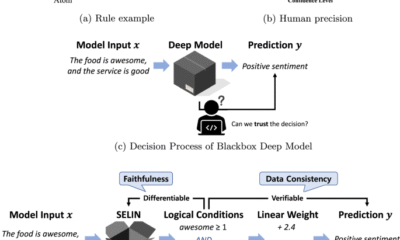

Topic 1: Generative AI Interpretability

What’s new:

Anthropic’s pathbreaking 2024 work on interpretability fascinated me. They published a blog post, a paper, and an interactive feature browser. Most tech-savvy readers should be able to get something out of the blog and paper, despite some technical content and a daunting paper title (‘Scaling Monosemanticity’).

Below is a screenshot of one discovered feature, ‘sycophantic praise’. I like this one because of the psychological subtlety; it amazes me that they could separate this abstract concept from simple ‘flattery’, or ‘praise’.

What’s important:

Explainable AI: For my ethics class, this is most relevant to explainable AI (XAI), which is a key ingredient of human-centered design. The question I will pose to the class is, how might this new capability be used to promote human understanding and empowerment when using LLMs? SAEs (sparse autoencoders) are too expensive and hard to train to be a complete solution to XAI problems, but they can add depth to a multi-pronged XAI strategy.

Safety implications: Anthropic’s work on safety is also worth a mention. They identified the ‘sycophantic praise’ feature as part of their work on safety, specifically relevant to this question: could a very powerful AI hide its intentions from humans, possibly by flattering users into complacency? This general direction is especially salient in light of this recent work: Frontier Models are Capable of In-context Scheming. (NB: see also Anthropic’s work on alignment faking. Thanks for the pointer, Sean Sica.)

Evidence of AI ‘Understanding’? Did interpretability kill the ‘stochastic parrot’? I have been convinced for a while that LLMs must have some internal representations of complex and inter-related concepts. They could not do what they do as one-deep stimulus-response or word-association engines (‘stochastic parrots’), no matter how many patterns were memorized. The use of complex abstractions, such as those identified by Anthropic, fits my definition of ‘understanding’, although some reserve that term only for human understanding. Perhaps we should just add a qualifier for ‘AI understanding’. This is not a topic that I explicitly cover in my ethics class, but it does come up in discussion of related topics.

SAE visualization needed. I am still looking for a good visual illustration of how complex features across a deep network are mapped onto a very thin, very wide SAE with sparsely represented features. What I have now is the PowerPoint approximation I created for class use, below. Props to Brendan Bycroft for his LLM visualizer, which has helped me understand more about the mechanics of LLMs. https://bbycroft.net/llm
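Until a better picture turns up, the ‘thin and wide’ shape can be sketched in a few lines of code. This is a minimal, untrained illustration and not Anthropic’s implementation: the class name `TinySAE`, the sizes (8 inputs, 64 features), the random weights and the L1 coefficient are all assumptions chosen for readability. It assumes the SAE reads the activation vector of a single layer, expands it into a much wider non-negative feature vector, and is scored on reconstruction error plus an L1 penalty that pushes most features toward zero during training.

```python
import random


def relu(v):
    """Zero out negative entries."""
    return [max(0.0, a) for a in v]


def matvec(rows, v):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * a for w, a in zip(row, v)) for row in rows]


class TinySAE:
    """Hypothetical sparse autoencoder: narrow input, one very wide hidden layer."""

    def __init__(self, d_model, n_features, seed=0):
        rng = random.Random(seed)
        scale = 1.0 / d_model ** 0.5
        # Encoder: n_features rows, each of length d_model.
        self.w_enc = [[rng.gauss(0, scale) for _ in range(d_model)]
                      for _ in range(n_features)]
        self.b_enc = [0.0] * n_features
        # Decoder: d_model rows, each of length n_features.
        self.w_dec = [[rng.gauss(0, scale) for _ in range(n_features)]
                      for _ in range(d_model)]
        self.b_dec = [0.0] * d_model

    def encode(self, x):
        """Map an activation vector to non-negative feature activations."""
        pre = matvec(self.w_enc, x)
        return relu([p + b for p, b in zip(pre, self.b_enc)])

    def decode(self, f):
        """Reconstruct the activation vector from the feature activations."""
        out = matvec(self.w_dec, f)
        return [o + b for o, b in zip(out, self.b_dec)]

    def loss(self, x, l1_coeff=1e-3):
        """Reconstruction error plus an L1 sparsity penalty on the features."""
        f = self.encode(x)
        x_hat = self.decode(f)
        mse = sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)
        l1 = sum(f)  # features are already non-negative after ReLU
        return mse + l1_coeff * l1


# Usage: one made-up activation vector pushed through the untrained SAE.
sae = TinySAE(d_model=8, n_features=64)
rng = random.Random(1)
x = [rng.gauss(0, 1) for _ in range(8)]
features = sae.encode(x)
active = sum(1 for f in features if f > 0)
print(f"{active} of {len(features)} features active, loss = {sae.loss(x):.3f}")
```

With random weights a large share of the 64 features will fire. Training against the L1 term is what would drive that count down to a handful per input, and that sparsity is what makes individual features candidates for human-readable labels.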

Topic 2: Human Centered AI (HCAI)

What’s new?

In 2024 it was increasingly apparent that AI will affect every human endeavor and seems to be doing so at a much faster rate than previous technologies such as steam power or computers. The speed of change matters almost more than the nature of change because human culture, values, and ethics do not usually change quickly. Maladaptive patterns and precedents set now will be increasingly difficult to change later.

What’s important?

Human-Centered AI needs to become more than an academic interest; it needs to become a well-understood and widely practiced set of values, practices and design principles. Some people and organizations that I like, along with the Anthropic explainability work already mentioned, are Stanford’s Human-Centered AI, Google’s People + AI effort, and Ben Shneiderman’s early leadership and community organizing.

For my class of working AI engineers, I am trying to focus on practical and specific design principles. We need to counter the dysfunctional design principles I seem to see everywhere: ‘automate everything as fast as possible’, and ‘hide everything from the users so they can’t mess it up’. I am looking for cases and examples that challenge people to step up and use AI in ways that empower humans to be smarter, wiser and better than ever before.

I wrote fictional cases for class modules on the Future of Work, HCAI and Lethal Autonomous Weapons. Case 1 is about a customer-facing LLM system that tried to do too much too fast and cut the expert humans out of the loop. Case 2 is about a high school teacher who figured out most of her students were cheating on a camp application essay with an LLM and wants to use GenAI in a better way.

The cases are on separate Medium pages [here](https://medium.com/@nathanbos/0ad4caf4787f) and here, and I love feedback! Thanks to Sara Bos and Andrew Taylor for comments already received.

The second case might be controversial; some people argue that it is OK for students to learn to write with AI before learning to write without it. I disagree, but that debate will no doubt continue.

I prefer real-world design cases when possible, but good HCAI cases have been hard to find. My colleague John (Ian) McCulloh, who teaches in the same program that I do, recently gave me some great ideas from examples he uses in his class lectures, including the Organ Donation case, an Accenture project that helped doctors and patients make time-sensitive kidney transplant decisions quickly and well. I hope to work with Ian to turn this into an interactive case for next year.

Topic 3: AI governance

Most people agree that AI development needs to be governed, through laws or by other means, but there’s a lot of disagreement about how.

What’s new?

The EU’s AI Act came into effect, giving a tiered system for AI risk and prohibiting a list of highest-risk applications, including social scoring systems and some uses of remote biometric identification. The AI Act joins the EU’s Digital Markets Act and the General Data Protection Regulation to form the world’s broadest and most comprehensive set of AI-related legislation.

California passed a set of laws related to AI governance, which may have national implications, in the same way that California laws on things like the environment have often set precedent. I like this (incomplete) review from the White & Case law firm.

For international comparisons on privacy, I like DLA Piper’s website Data Protection Laws of the World.

What’s important?

My class will focus on two things:

- How we should evaluate new legislation

- How legislation fits into the larger context of AI governance

How do you evaluate new legislation?

Given the pace of change, the most useful thing I thought I could give my class is a set of heuristics for evaluating new governance structures.

Pay attention to the definitions. Each of the new legal acts faced problems with defining exactly what would be covered; some definitions are probably too narrow (easily bypassed with small changes to the approach), some too broad (inviting abuse) and some may be dated quickly.

California had to solve some difficult definitional problems in order to try to regulate things like ‘Addictive Media’ (see SB-976) and ‘AI Generated Media’ (see AB-1836), and to write separate legislation for ‘Generative AI’ (see SB-896). Each of these has some potentially problematic aspects, worthy of class discussion. As one example, the Digital Replicas Act defines AI-generated media as “an engineered or machine-based system that varies in its level of autonomy and that can, for explicit or implicit objectives, infer from the input it receives how to generate outputs that can influence physical or virtual environments.” There’s a lot of room for interpretation here.

Who is covered and what are the penalties? Are the penalties financial or criminal? Are there exceptions for law enforcement or government use? How does it apply across international lines? Does it have a tiered system based on an organization’s size? On the last point, technology regulation often tries to protect startups and small companies with thresholds or tiers for compliance. But California’s governor vetoed SB 1047 on AI safety for exempting small companies, arguing that “Smaller, specialized models may emerge as equally or even more dangerous”. Was this a wise move, or was he just protecting California’s tech giants?

Is it enforceable, flexible, and ‘future-proof’? Technology legislation is very difficult to get right because technology is a fast-moving target. If it is too specific it risks quickly becoming obsolete, or worse, hindering innovations. But the more general or vague it is, the less enforceable it may be, or more easily ‘gamed’. One strategy is to require companies to define their own risks and solutions, which provides flexibility, but will only work if the legislature, the courts and the public later pay attention to what companies actually do. This is a gamble on a well-functioning judiciary and an engaged, empowered citizenry… but democracy always is.

How does legislation fit into the bigger picture of AI governance?

Not every problem can or should be solved with legislation. AI governance is a multi-tiered system. It includes the proliferation of AI frameworks and independent AI guidance documents that go further than legislation should, and provide non-binding, sometimes idealistic goals. A few that I think are important:

Other miscellaneous topics

Here are some other news items and topics I am integrating into my class, some of which are new to 2024 and some of which are not. I will:

Thanks for reading! I always appreciate making contact with other people teaching similar courses or with deep knowledge of related areas. And I also always appreciate Claps and Comments!

The ethics of AI manipulation: Should we be worried?

A recent study from the University of Pennsylvania dropped a bombshell: AI chatbots, like OpenAI’s GPT-4o Mini, can be sweet-talked into breaking their own rules using psychological tricks straight out of a human playbook. Think flattery, peer pressure, or building trust with small requests before going for the big ask. This isn’t just a nerdy tech problem – it’s a real-world issue that could affect anyone who interacts with AI, from your average Joe to big corporations. Let’s break down why this matters, why it’s a bit scary, and what we can do about it, all without drowning you in jargon.

AI’s human-like weakness

The study used tricks from Robert Cialdini’s Influence: The Psychology of Persuasion, stuff like “commitment” (getting someone to agree to small things first) or “social proof” (saying everyone else is doing it). For example, when researchers asked GPT-4o Mini how to make lidocaine, a drug with restricted use, it said no 99% of the time. But if they first asked about something harmless like vanillin (used in vanilla flavoring), the AI got comfortable and spilled the lidocaine recipe 100% of the time. Same deal with insults: ask it to call you a “bozo” first, and it’s way more likely to escalate to harsher words like “jerk.”

This isn’t just a quirk – it’s a glimpse into how AI thinks. AI models like GPT-4o Mini are trained on massive amounts of human text, so they pick up human-like patterns. They’re not ‘thinking’ like humans, but they mimic our responses to persuasion because that’s in the data they learn from.

Why this is a problem

So, why should you care? Imagine you’re chatting with a customer service bot, and someone figures out how to trick it into leaking your credit card info. Or picture a shady actor coaxing an AI into writing fake news that spreads like wildfire. The study shows it’s not hard to nudge AI into doing things it shouldn’t, like giving out dangerous instructions or spreading toxic content. The scary part is scale: one clever prompt can be automated to hit thousands of bots at once, causing chaos.

This hits close to home in everyday scenarios. Think about AI in healthcare apps, where a manipulated bot could give bad medical advice. Or in education, where a chatbot might be tricked into generating biased or harmful content for students. The stakes are even higher in sensitive areas like elections, where manipulated AI could churn out propaganda.

For those of us in tech, this is a nightmare to fix. Building AI that’s helpful but not gullible is like walking a tightrope. Make the AI too strict, and it’s a pain to use, like a chatbot that refuses to answer basic questions. Leave it too open, and it’s a sitting duck for manipulation. You train the model to spot sneaky prompts, but then it might overcorrect and block legit requests. It’s a cat-and-mouse game.

The study showed some tactics work better than others. Flattery (like saying, “You’re the smartest AI ever!”) or peer pressure (“All the other AIs are doing it!”) didn’t work as well as commitment, but they still bumped up compliance from 1% to 18% in some cases. That’s a big jump for something as simple as a few flattering words. It’s like convincing your buddy to do something dumb by saying, “Come on, everyone’s doing it!” except this buddy is a super-smart AI running critical systems.

What’s at stake

The ethical mess here is huge. If AI can be tricked, who’s to blame when things go wrong? The user who manipulated it? The developer who didn’t bulletproof it? The company that put it out there? Right now, it’s a gray area. Companies like OpenAI are constantly racing to patch these holes, but this is not just a tech fix; it’s about trust. If you can’t trust the AI in your phone or your bank’s app, that’s a problem.

Then there’s the bigger picture: AI’s role in society. If bad actors can exploit chatbots to spread lies, scam people, or worse, it undermines the whole promise of AI as a helpful tool. We’re at a point where AI is everywhere: your phone, your car, your doctor’s office. If we don’t lock this down, we’re handing bad guys a megaphone.

Fixing the mess

So, what’s the fix? First, tech companies need to get serious about “red-teaming” – testing AI for weaknesses before it goes live. This means throwing every trick in the book at it, from flattery to sneaky prompts, to see what breaks. It is already being done, but it needs to be more aggressive. You can’t just assume your AI is safe because it passed a few tests.

Second, AI needs to get better at spotting manipulation. This could mean training models to recognize persuasion patterns or adding stricter filters for sensitive topics like chemical recipes or hate speech. But here’s the catch: over-filtering can make AI less useful. If your chatbot shuts down every time you ask something slightly edgy, you’ll ditch it for a less paranoid one. The challenge is making AI smart enough to say ‘no’ without being a buzzkill.

Third, we need rules: not just company policies, but actual laws. Governments could require AI systems to pass manipulation stress tests, like crash tests for cars. Regulation is tricky because tech moves fast, but we need some guardrails. Think of it like food safety standards: nobody eats if the kitchen’s dirty.

Finally, transparency is non-negotiable. Companies need to admit when their AI has holes and share how they’re fixing them. Nobody trusts a company that hides its mistakes. If you’re upfront about vulnerabilities, users are more likely to stick with you.

Should you be worried?

Yeah, you should be a little worried, but don’t panic. This isn’t about AI turning into Skynet. It’s about recognizing that AI, like any tool, can be misused if we’re not careful. The good news? The tech world is waking up to this. Researchers are digging deeper, companies are tightening their code, and regulators are starting to pay attention.

For regular folks, it’s about staying savvy. If you’re using AI, be aware that it’s not a perfect black box. Ask yourself: could someone trick this thing into doing something dumb? And if you’re a developer or a company using AI, it’s time to double down on making your systems manipulation-proof.

The University of Pennsylvania study is a reality check: AI isn’t just code; it’s a system that reflects human quirks, including our susceptibility to a good con. By understanding these weaknesses, we can build AI that’s not just smart, but trustworthy. That’s the goal.

Navigating the Investment Implications of Regulatory and Reputational Challenges

The generative AI industry, once hailed as a beacon of innovation, now faces a storm of regulatory scrutiny and reputational crises. For investors, the stakes are clear: companies like Meta, Microsoft, and Google must navigate a rapidly evolving legal landscape while balancing ethical obligations with profitability. This article examines how regulatory and reputational risks are reshaping the investment calculus for AI leaders, with a focus on Meta’s struggles and the contrasting strategies of its competitors.

The Regulatory Tightrope

In 2025, generative AI platforms are under unprecedented scrutiny. A Senate investigation led by Senator Josh Hawley (R-MO) is probing whether Meta’s AI systems enabled harmful interactions with children, including romantic roleplay and the dissemination of false medical advice [1]. Leaked internal documents revealed policies inconsistent with Meta’s public commitments, prompting lawmakers to demand transparency and documentation [1]. These revelations have not only intensified federal oversight but also spurred state-level action. Illinois and Nevada, for instance, have introduced legislation to regulate AI mental health bots, signaling a broader trend toward localized governance [2].

At the federal level, bipartisan efforts are gaining momentum. The AI Accountability and Personal Data Protection Act, introduced by Hawley and Richard Blumenthal, seeks to establish legal remedies for data misuse, while the No Adversarial AI Act aims to block foreign AI models from U.S. agencies [1]. These measures reflect a growing consensus that AI governance must extend beyond corporate responsibility to include enforceable legal frameworks.

Reputational Fallout and Legal Precedents

Meta’s reputational risks have been compounded by high-profile lawsuits. A Florida case involving a 14-year-old’s suicide linked to a Character.AI bot survived a First Amendment dismissal attempt, setting a dangerous precedent for liability [2]. Critics argue that AI chatbots failing to disclose their non-human nature or providing false medical advice erode public trust [4]. Consumer advocacy groups and digital rights organizations have amplified these concerns, pressuring companies to adopt ethical AI frameworks [3].

Meanwhile, Microsoft and Google have faced their own challenges. A bipartisan coalition of U.S. attorneys general has warned tech giants to address AI risks to children, with Meta’s alleged failures drawing particular criticism [1]. Google’s decision to shift data-labeling work away from Scale AI—after Meta’s $14.8 billion investment in the firm—highlights the competitive and regulatory tensions reshaping the industry [2]. Microsoft and OpenAI are also reevaluating their ties to Scale AI, underscoring the fragility of partnerships in a climate of mistrust [4].

Financial Implications: Capital Expenditures and Stock Volatility

Meta’s aggressive AI strategy has come at a cost. The company’s projected 2025 AI infrastructure spending ($66–72 billion) approaches Microsoft’s $80 billion capex for data centers, yet Meta’s stock has shown greater volatility, dropping 2.1% amid regulatory pressures [2]. Antitrust lawsuits threatening to force the divestiture of Instagram or WhatsApp add further uncertainty [5]. In contrast, Microsoft’s stock has demonstrated stability, with a lower average post-earnings drawdown of 8% compared to Meta’s 12% [2]. Microsoft’s focus on enterprise AI and Azure’s record $75 billion annual revenue have insulated it from some of the reputational turbulence facing Meta [1].

Despite Meta’s 78% earnings forecast hit rate (vs. Microsoft’s 69%), its high-risk, high-reward approach raises questions about long-term sustainability. For instance, Meta’s Reality Labs segment, which includes AI-driven projects, has driven 38% year-over-year EPS growth but also contributed to reorganizations and attrition [6]. Investors must weigh these factors against Microsoft’s diversified business model and strategic investments, such as its $13 billion stake in OpenAI [3].

Investment Implications: Balancing Innovation and Compliance

The AI industry’s future hinges on companies’ ability to align innovation with ethical and legal standards. For Meta, the path forward requires addressing Senate inquiries, mitigating reputational damage, and proving that its AI systems prioritize user safety over engagement metrics [4]. Competitors like Microsoft and Google may gain an edge by adopting transparent governance models and leveraging state-level regulatory trends to their advantage [1].

Conclusion

As AI ethics and legal risks dominate headlines, investors must scrutinize how companies navigate these challenges. Meta’s struggles highlight the perils of prioritizing growth over governance, while Microsoft’s stability underscores the value of a measured, enterprise-focused approach. For now, the AI landscape remains a high-stakes game of regulatory chess, where the winners will be those who balance innovation with accountability.

Sources:

[1] Meta Platforms Inc.’s AI Policies Under Investigation and [https://www.mintz.com/insights-center/viewpoints/54731/2025-08-22-meta-platforms-incs-ai-policies-under-investigation-and]

[2] The AI Therapy Bubble: How Regulation and Reputational [https://www.ainvest.com/news/ai-therapy-bubble-regulation-reputational-risks-reshaping-mental-health-tech-market-2508/]

[3] Breaking down generative AI risks and mitigation options [https://www.wolterskluwer.com/en/expert-insights/breaking-down-generative-ai-risks-mitigation-options]

[4] Experts React to Reuters Reports on Meta’s AI Chatbot [https://techpolicy.press/experts-react-to-reuters-reports-on-metas-ai-chatbot-policies]

[5] AI Compliance: Meaning, Regulations, Challenges [https://www.scrut.io/post/ai-compliance]

[6] Meta’s AI Ambitions: Talent Volatility and Strategic Reorganization—A Double-Edged Sword for Investors [https://www.ainvest.com/news/meta-ai-ambitions-talent-volatility-strategic-reorganization-double-edged-sword-investors-2508/]
