Books, Courses & Certifications

Top AI Courses by NVIDIA for Free in 2025

NVIDIA is one of the most influential hardware giants in the world. Apart from its much sought-after GPUs, the company also provides free courses to help you understand more about generative AI, GPUs, robotics, chips, and more.

Most importantly, all of these are available free of cost and can be completed in less than a day. Let’s take a look at them.

1. Building RAG Agents for LLMs

The Building RAG Agents for LLMs course is available for free for a limited time. It explores the impact of large language models (LLMs), particularly retrieval-based systems, which are transforming productivity by enabling informed conversations through interaction with various tools and documents. Designed for individuals keen on harnessing these systems’ potential, the course emphasises practical deployment and efficient implementation to meet the demands of users and deep learning models. Participants will delve into advanced orchestration techniques, including internal reasoning, dialog management, and effective tooling strategies.

In this workshop, you will learn to develop an LLM system that interacts predictably with users by utilising internal and external reasoning components.

Course link: https://learn.nvidia.com/courses/course-detail?course_id=course-v1:DLI+S-FX-15+V1

2. Accelerating Data Science Workflows with Zero Code Changes

Efficient data management and analysis are crucial for companies in software, finance, and retail. Traditional CPU-driven workflows are often cumbersome, but GPUs enable faster insights, driving better business decisions.

In this workshop, one will learn to build and execute end-to-end GPU-accelerated data science workflows for rapid data exploration and production deployment. Using RAPIDS™-accelerated libraries, one can apply GPU-accelerated machine learning algorithms, including XGBoost, cuGraph’s single-source shortest path, and cuML’s KNN, DBSCAN, and logistic regression.

Course link: https://learn.nvidia.com/courses/course-detail?course_id=course-v1:DLI+T-DS-03+V1

3. Generative AI Explained

This self-paced, free online course introduces generative AI fundamentals, which involve creating new content based on different inputs. Through this course, participants will grasp the concepts, applications, challenges, and prospects of generative AI.

Learning objectives include defining generative AI and its functioning, outlining diverse applications, and discussing the associated challenges and opportunities. All you need to participate is a basic understanding of machine learning and deep learning principles.

Course link: https://learn.nvidia.com/courses/course-detail?course_id=course-v1:DLI+S-NP-01+V1

4. Digital Fingerprinting with Morpheus

This one-hour course introduces participants to developing and deploying the NVIDIA digital fingerprinting AI workflow, providing complete data visibility and significantly reducing threat detection time.

Participants will gain hands-on experience with the NVIDIA Morpheus AI Framework, designed to accelerate GPU-based AI applications for filtering, processing, and classifying large volumes of streaming cybersecurity data.

Additionally, they will learn about the NVIDIA Triton Inference Server, an open-source tool that facilitates standardised deployment and execution of AI models across various workloads. No prerequisites are needed for this tutorial, although familiarity with defensive cybersecurity concepts and the Linux command line is beneficial.

Course link: https://courses.nvidia.com/courses/course-v1:DLI+T-DS-02+V2/

5. Building A Brain in 10 Minutes

This course delves into neural networks’ foundations, drawing from biological and psychological insights. Its objectives are to elucidate how neural networks employ data for learning and to grasp the mathematical principles underlying a neuron’s functioning.

While anyone can run the provided code to see how it works, a solid grasp of fundamental Python 3 programming concepts (functions, loops, dictionaries, and arrays) is advised. Familiarity with computing regression lines is also recommended.
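To make the idea of a neuron learning from data concrete, here is a minimal sketch in plain Python. It is not taken from the course material: the function name `train_neuron`, the learning rate, and the toy data are all assumptions chosen for illustration. A single neuron with one weight and one bias fits a regression line by gradient descent on the mean squared error.

```python
def train_neuron(xs, ys, lr=0.05, epochs=2000):
    """Fit prediction = w * x + b to the data by gradient descent (hypothetical helper)."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step against the gradient to reduce the error.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy data generated from y = 2x + 1 (an assumption for this example).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = train_neuron(xs, ys)
print(round(w, 3), round(b, 3))
```

On this noise-free data the learned weight and bias settle close to the slope and intercept of the line that generated the points, which is the same result a regression-line calculation would give.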

Course link: https://courses.nvidia.com/courses/course-v1:DLI+T-FX-01+V1/

6. An Introduction to CUDA

This course delves into the fundamentals of writing highly parallel CUDA kernels designed to execute on NVIDIA GPUs.

One can gain proficiency in several key areas: launching massively parallel CUDA kernels on NVIDIA GPUs, orchestrating parallel thread execution for large dataset processing, effectively managing memory transfers between the CPU and GPU, and utilising profiling techniques to analyse and optimise the performance of CUDA code.
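As a rough illustration of how parallel threads divide up a large dataset, the sketch below emulates a CUDA-style kernel launch sequentially in plain Python. It is not CUDA code and not from the course: `launch` and `add_kernel` are hypothetical names, and real kernels are written in CUDA C++ and run concurrently on the GPU. What it does show is the usual index arithmetic, where each thread derives a global index from its block and thread position and then strides across the array.

```python
def launch(kernel, grid_dim, block_dim, *args):
    """Call `kernel` once per (block, thread) pair, emulating a 1D launch."""
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, thread_idx, grid_dim, block_dim, *args)


def add_kernel(block_idx, thread_idx, grid_dim, block_dim, a, b, out):
    # Global thread index, the analogue of blockIdx.x * blockDim.x + threadIdx.x.
    start = block_idx * block_dim + thread_idx
    # Grid-stride loop: each thread also handles elements one full grid apart,
    # so a small grid can still cover a larger array.
    stride = grid_dim * block_dim
    for i in range(start, len(out), stride):
        out[i] = a[i] + b[i]


a = list(range(10))
b = [10] * 10
out = [0] * 10
launch(add_kernel, 2, 4, a, b, out)  # 2 blocks of 4 threads cover 10 elements
print(out)
```

With 8 emulated threads and 10 elements, the first two threads each process a second element through the stride, which is why every position in `out` is filled.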

Course link: https://learn.nvidia.com/courses/course-detail?course_id=course-v1:DLI+T-AC-01+V1

7. Augment your LLM Using RAG

Retrieval Augmented Generation (RAG), devised by Facebook AI Research in 2020, offers a method to enhance an LLM’s output by incorporating real-time, domain-specific data, eliminating the need for model retraining. RAG integrates an information retrieval module with a response generator, forming an end-to-end architecture.

Drawing from NVIDIA’s internal practices, this introduction aims to provide a foundational understanding of RAG, including its retrieval mechanism and the essential components within NVIDIA’s AI Foundations framework. By grasping these fundamentals, you can initiate your exploration into LLM and RAG applications.
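The retrieve-then-generate flow described above can be sketched in a few lines of plain Python. This is a toy under stated assumptions, not NVIDIA's implementation: the documents in `DOCS` are made up, retrieval uses bag-of-words cosine similarity instead of learned embeddings, and the generator step is left out, so `build_prompt` only assembles the text that would be sent to an LLM.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base standing in for domain-specific documents.
DOCS = [
    "The Jetson Nano course uses Jupyter notebooks for image classification.",
    "The DeepStream course builds video analytics pipelines.",
    "The Morpheus course covers digital fingerprinting for cybersecurity.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, docs, k=1):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(docs, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query, docs, k=1):
    """Prepend retrieved context to the question; an LLM call would follow."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


query = "Which course covers cybersecurity?"
top = retrieve(query, DOCS)
prompt = build_prompt(query, DOCS)
print(prompt)
```

Because the retrieved context is injected at query time, the knowledge base can be updated without retraining the model, which is the property the paragraph above highlights.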

Course link: https://courses.nvidia.com/courses/course-v1:NVIDIA+S-FX-16+v1/

8. Getting Started with AI on Jetson Nano

The NVIDIA Jetson Nano Developer Kit empowers makers, self-taught developers, and embedded technology enthusiasts worldwide with the capabilities of AI.

This user-friendly, yet powerful computer facilitates the execution of multiple neural networks simultaneously, enabling various applications such as image classification, object detection, segmentation, and speech processing.

Throughout the course, participants will use Jupyter notebooks on Jetson Nano to construct a deep learning classification project employing computer vision models.

By the end of the course, individuals will possess the skills to develop their own deep learning classification and regression models leveraging the capabilities of the Jetson Nano.

Course link: https://learn.nvidia.com/courses/course-detail?course_id=course-v1:DLI+S-RX-02+V2

9. Building Video AI Applications at the Edge on Jetson Nano

This self-paced online course aims to equip learners with skills in AI-based video understanding using the NVIDIA Jetson Nano Developer Kit. Through practical exercises and Python application samples in JupyterLab notebooks, participants will explore intelligent video analytics (IVA) applications leveraging the NVIDIA DeepStream SDK.

The course covers setting up the Jetson Nano, constructing end-to-end DeepStream pipelines for video analysis, integrating various input and output sources, configuring multiple video streams, and employing alternate inference engines like YOLO.

Prerequisites include basic familiarity with the Linux command line and an understanding of Python 3 programming concepts. The course uses tools such as DeepStream and TensorRT and requires specific hardware, including the Jetson Nano Developer Kit. Assessment is conducted through multiple-choice questions, and a certificate is provided upon completion.

For this course, you will need the NVIDIA Jetson Nano Developer Kit or its 2GB version, along with a compatible power supply, microSD card, USB data cable, and USB webcam.

Course link: https://courses.nvidia.com/courses/course-v1:DLI+S-IV-02+V2/

This course offers practical guidance on extending and enhancing 3D tools using the adaptable Omniverse platform. Taught by the Omniverse developer ecosystem team, participants will gain skills to develop advanced tools for creating physically accurate virtual worlds.

Through self-paced exercises, learners will delve into Python coding to craft custom scene manipulator tools within Omniverse. Key learning objectives include launching Omniverse Code, installing/enabling extensions, navigating the USD stage hierarchy, and creating widget manipulators for scale control.

The course also covers fixing broken manipulators and building specialised scale manipulators. Required tools include Omniverse Code, Visual Studio Code, and the Python Extension. Minimum hardware requirements comprise a desktop or laptop computer equipped with an Intel i7 Gen 5 or AMD Ryzen processor, along with an NVIDIA RTX Enabled GPU with 16GB of memory.

Course link: https://courses.nvidia.com/courses/course-v1:DLI+S-OV-06+V1/

11. Getting Started with USD for Collaborative 3D Workflows

In this self-paced course, participants will delve into the creation of scenes using human-readable Universal Scene Description ASCII (USDA) files.

The programme is divided into two sections: USD Fundamentals, introducing OpenUSD without programming, and Advanced USD, using Python to generate USD files.

Participants will learn OpenUSD scene structures and gain hands-on experience with OpenUSD Composition Arcs, including overriding asset properties with Sublayers, combining assets with References, and creating diverse asset states using Variants.

Course link: https://learn.nvidia.com/courses/course-detail?course_id=course-v1:DLI+S-FX-02+V1

12. Assemble a Simple Robot in Isaac Sim

This course offers a practical tutorial on assembling a basic two-wheel mobile robot using the ‘Assemble a Simple Robot’ guide within the Isaac Sim GPU platform. The tutorial spans around 30 minutes and covers key steps such as connecting a local streaming client to an Omniverse Isaac Sim server, loading a USD mock robot into the simulation environment, and configuring joint drives and properties for the robot’s movement.

Additionally, participants will learn to add articulations to the robot. By the end of the course, attendees will gain familiarity with the Isaac Sim interface and documentation necessary to initiate their own robot simulation projects.

The prerequisites for this course include a Windows or Linux computer capable of installing Omniverse Launcher and applications, along with adequate internet bandwidth for client/server streaming. The course is free of charge, with a duration of 30 minutes, focusing on Omniverse technology.

Course link: https://courses.nvidia.com/courses/course-v1:DLI+T-OV-01+V1/

13. How to Build Open USD Applications for Industrial Twins

This course introduces the basics of the Omniverse development platform. One will learn how to get started building 3D applications and tools that deliver the functionality needed to support industrial use cases and workflows for aggregating and reviewing large facilities such as factories, warehouses, and more.

The learning objectives include building an application from a kit template, customising the application via settings, creating and modifying extensions, and expanding extension functionality with new features.

Course link: https://learn.nvidia.com/courses/course-detail?course_id=course-v1:DLI+S-OV-13+V1

14. Disaster Risk Monitoring Using Satellite Imagery

Created in collaboration with the United Nations Satellite Centre, the course focuses on disaster risk monitoring using satellite imagery, teaching participants to create and implement deep learning models for automated flood detection. The skills gained aim to reduce costs, enhance efficiency, and improve the effectiveness of disaster management efforts.

Participants will learn to execute a machine learning workflow, process large satellite imagery data using hardware-accelerated tools, and apply transfer-learning for building cost-effective deep learning models.

The course also covers deploying models for near real-time analysis and utilising deep learning-based inference for flood event detection and response. Prerequisites include proficiency in Python 3, a basic understanding of machine learning and deep learning concepts, and an interest in satellite imagery manipulation.

Course link: https://courses.nvidia.com/courses/course-v1:DLI+S-ES-01+V1/

15. Introduction to AI in the Data Center

In this course, you will learn about AI use cases, machine learning, and deep learning workflows, as well as the architecture and history of GPUs. With a beginner-friendly approach, the course also covers deployment considerations for AI workloads in data centres, including infrastructure planning and multi-system clusters.

The course is tailored for IT professionals, system and network administrators, DevOps, and data centre professionals.

Course link: https://www.coursera.org/learn/introduction-ai-data-center

16. Fundamentals of Working with Open USD

In this course, participants will explore the foundational concepts of Universal Scene Description (OpenUSD), an open framework for detailed 3D environment creation and collaboration.

Participants will learn to use USD for non-destructive processes, efficient scene assembly with layers, and data separation for optimised 3D workflows across various industries.

Also, the session will cover Layering and Composition essentials, model hierarchy principles for efficient scene structuring, and Scene Graph Instancing for improved scene performance and organisation.

Course link: https://learn.nvidia.com/courses/course-detail?course_id=course-v1:DLI+S-OV-15+V1

17. Introduction to Physics-informed Machine Learning with Modulus

High-fidelity simulations in science and engineering are hindered by computational expense and time constraints, limiting their iterative use in design and optimisation.

NVIDIA Modulus, a physics machine learning platform, tackles these challenges by creating deep learning models that outperform traditional methods by up to 100,000 times, providing fast and accurate simulation results.

One will learn how Modulus integrates with the Omniverse Platform and how to use its API for data-driven and physics-driven problems, addressing challenges from deep learning to multi-physics simulations.

Course link: https://learn.nvidia.com/courses/course-detail?course_id=course-v1:DLI+S-OV-04+V1

18. Introduction to DOCA for DPUs

The DOCA Software Framework, in partnership with BlueField DPUs, enables rapid application development, transforming networking, security, and storage performance.

This self-paced course covers DOCA fundamentals for accelerated data centre computing on DPUs, including visualising the framework paradigm, studying BlueField DPU specs, exploring sample applications, and identifying opportunities for DPU-accelerated computation.

One gains introductory knowledge to kickstart application development for enhanced data centre services.

Course link: https://learn.nvidia.com/courses/course-detail?course_id=course-v1:DLI+S-NP-01+V1

The story was updated on 2 January 2025 to reflect the latest courses and correct the URLs to them.

Student Scores in Math, Science, Reading Slide Again on Nation’s Report Card

Exasperating. Depressing. Predictable.

That’s how experts describe the latest results from the National Assessment of Educational Progress, also known as the “nation’s report card.”

Considered a highly accurate window into student performance, the assessment has become a periodic reminder of declining academic success among students in the U.S., with the last several rounds accentuating yearslong slumps in learning. In January, for instance, the previous round of NAEP results revealed the biggest share of eighth graders who did not meet basic reading proficiency in the assessment’s history.

Now, the latest results, released Tuesday after a delay, showed continued decline.

Eighth graders saw the first fall in average science scores since the assessment took its current form in 2009. The assessment looked at physical science, life science, and earth and space sciences. Thirty-eight percent of students performed below basic, a level which means these students probably don’t know that plants need sunlight to grow and reproduce, according to NAEP. In contrast, only 31 percent of students performed at proficient levels.

Twelfth graders saw a three-point fall in average math and reading scores, compared to results from 2019. The exam also shows that the achievement gap between high- and low-scoring students is swelling, a major point of concern. In math, the gap is wider than it’s ever been.

But most eye-grabbing is the fact that 45 percent of high school seniors — the highest percentage ever recorded — scored below basic in math, meaning they cannot determine probabilities of simple events from two-way tables and verbal descriptions. In contrast, just 22 percent scored at-or-above proficient. In reading, 32 percent scored below basic, and 35 percent met the proficient threshold. Twelfth grade students also reported high rates of absenteeism.

Tucked inside the report was the finding that parents’ education did not appear to hold much sway on student performance in the lower quartiles, which will bear further unpacking, according to one expert’s first analysis.

But the scores contained other glum trends, as well.

For example, the gap in science outcomes between male and female students, which had narrowed in recent years, widened again. (A similar gap in math has reappeared since the pandemic, pushing educators to get creative in trying to nourish girls’ interest in the subject.)

But with teacher shortages and schools facing enrollment declines and budget shortfalls, experts say it’s not surprising that students still struggle. Those who watch education closely describe themselves as tired, exasperated and even depressed from watching a decade’s worth of student performance declines. They also express doubt that political posturing around the scores will translate into improvements.

Political Posturing

Despite a sterling reputation, the assessment found itself snagged by federal upheaval.

NAEP is a congressionally mandated program run by the National Center for Education Statistics. Since the last round of results was released, back in January, the center and the broader U.S. Department of Education have dealt with shredded contracts, mass firings and the sudden dismissal of Peggy Carr, who’d helped burnish the assessment’s reputation and statistical rigor and whose firing delayed the release of these latest results.

The country’s education system overall has also undergone significant changes, including the introduction of a national school choice plan, meant to shift public dollars to private schools, through the Republican budget.

Declining scores provide the Trump administration a potential cudgel for its dismantling of public education, and some have seized upon it: Congressman Tim Walberg, a Republican from Michigan and chairman of the House Education and Workforce Committee, blamed the latest scores on the Democrats’ “student-last policies,” in a prepared statement.

“The lesson is clear,” argued Education Secretary Linda McMahon in her comment on the latest scores. “Success isn’t about how much money we spend, but who controls the money and where that money is invested,” she wrote, stressing that students need an approach that returns control of education to the states.

Some observers chortle at the “back to the states” analysis. After all, state and local governments already control most of the policies and spending related to public schools.

Regardless, experts suggest that just pushing more of education governance to the states will not solve the underlying causes of declining student performance. Declines in scores predate the pandemic, they also say.

No Real Progress

States have always been in charge of setting their own standards and assessments, says Latrenda Knighten, president of the National Council of Teachers of Mathematics. These national assessments are useful for comparing student performance across states, she adds.

Ultimately, in her view, the latest scores reveal the need for efforts to boost high-quality instruction and continuous professional learning for teachers to address systemic issues, a sentiment reflected in her organization’s public comment on the assessment. The results shine a spotlight on the need for greater opportunity in high school mathematics across the country, Knighten told EdSurge. She believes that means devoting more money for teacher training.

Some think that the causes of this academic slide are relatively well understood.

Teacher quality has declined, as teacher prep programs struggle to supply qualified teachers, particularly in math, and schools struggle to fill vacancies, says Robin Lake, director of the Center on Reinventing Public Education. She argues there has also been a decline in the desire to push schools to be accountable for poor student performance, and an inability to adapt.

There’s also confusion about which curriculum is best for students, she says. For instance, fierce debates continue to split teachers around “tracking,” where students are grouped into math paths based on perceived ability.

But will yet another poor national assessment spur change?

The results continue a decade-long decline in student performance, says Christy Hovanetz, a senior policy fellow for the nonprofit ExcelinEd.

Hovanetz worries that NAEP’s potential lessons will get “lost in the wash.” What’s needed is a balance between turning more authority back over to the states to operate education and a more robust requirement for accountability that allows states to do whatever they want, so long as they demonstrate it’s actually working, she says. That could mean requiring state assessments and accountability systems, she adds.

But right now, a lot of the states aren’t focusing on best practices for science and reading instruction, and they aren’t all requiring high-quality instructional materials, she says.

Worse, some are lowering the standards to meet poor student performance, she argues. For instance, Kansas recently altered its state testing. The changes, which involved changing score ranges, have drawn concerns from parents that the state is watering down standards. Hovanetz thinks that’s the case. In making the changes, the state joined Illinois, Wisconsin and Oklahoma in lowering expectations for students on state tests, she argues.

What’s uncontested from all perspectives is that the education system isn’t working.

“It’s truly the definition of insanity: to keep doing what we’re doing and hoping for better results,” says Lake, of the Center on Reinventing Public Education, adding: “We’re not getting them.”

How School-Family Partnerships Can Boost Early Literacy

When the National Assessment of Educational Progress, often called the Nation’s Report Card, was released last year, the results were sobering. Despite increased funding streams and growing momentum behind the Science of Reading, average fourth grade reading scores declined by another two points from 2022.

In a climate of growing accountability and public scrutiny, how can we do things differently — and more effectively — to ensure every child becomes a proficient reader?

The answer lies not only in what happens inside the classroom but in the connections forged between schools and families. Research shows that when families are equipped with the right tools and guidance, literacy development accelerates. For many schools, creating this home-to-school connection begins by rethinking how they communicate with and involve families from the start.

A School-Family Partnership in Practice

My own experience with my son William underscored just how impactful a strong school-family partnership can be.

When William turned four, he began asking, “When will I be able to read?” He had watched his older brother learn to read with relative ease, and, like many second children, William was eager to follow in his big brother’s footsteps. His pre-K teacher did an incredible job introducing foundational literacy skills, but for William, it wasn’t enough. He was ready to read, but we, his parents, weren’t sure how to support him.

During a parent-teacher conference, his teacher recommended a free, ad-free literacy app that she uses in her classroom. She assigned stories to read and phonics games to play that aligned with his progress at school. The characters in the app became his friends, and the activities became his favorite challenge. Before long, he was recognizing letters on street signs, rhyming in the car and asking to read his favorite stories over and over again.

William’s teacher used insights from his at-home learning to personalize his instruction in the classroom. For our family, this partnership made a real difference.

Where Literacy Begins: Bridging Home and School

Reading develops in stages, and the pre-K to kindergarten years are especially foundational. According to the National Reading Panel and the Institute of Education Sciences, five key pillars support literacy development:

- Phonemic awareness: recognizing and playing with individual sounds in spoken words

- Phonics: connecting those sounds to written letters

- Fluency: reading with ease, accuracy and expression

- Vocabulary: understanding the meaning of spoken and written words

- Comprehension: making sense of what is read

For schools, inviting families into this framework doesn’t mean making parents into teachers. It means providing simple ways for families to reinforce these pillars at home, often through everyday routines, such as reading together, playing language games or talking about daily activities.

Families are often eager to support their children’s reading, but many aren’t sure how. At the same time, educators often struggle to communicate academic goals to families in ways that are clear and approachable.

Three Ways to Strengthen School-Family Literacy Partnerships

Forging effective partnerships between schools and families can feel daunting, but small, intentional shifts can make a powerful impact. Here are three research-backed strategies that schools can use to bring families into the literacy-building process.

1. Communicate the “why” and the “how”

Families become vital partners when they understand not just what their children are learning, but why it matters. Use newsletters, family literacy nights or informal conversations to break down the five pillars in accessible terms. For example, explain that clapping out syllables at home supports phonemic awareness or that spotting road signs helps with letter recognition (phonics).

Even basic activities can reinforce classwork. Sample ideas for family newsletters:

- “This week we’re working on beginning sounds. Try playing a game where you name things in your house that start with the letter ‘B’.”

- “We’re focusing on listening to syllables in words. See if your child can clap out the beats in their name!”

Provide families with specific activities that match what is being taught at school. For example, William’s teacher used the Khan Academy Kids app to assign letter-matching games and read-aloud books that aligned with classroom learning. The connection made it easier for us to support him at home.

2. Establish everyday reading routines

Consistency builds confidence. Encourage families to create regular reading moments, such as a story before bed, a picture book over breakfast or a read-aloud during bath time. Reinforce that reading together in any language is beneficial. Oral storytelling, silly rhymes and even talking through the day’s events help develop vocabulary and comprehension.

Help parents understand that it’s okay to stop when it’s no longer fun. If a child isn’t interested, it’s better to pause and return later than to force the activity. The goal is for children to associate reading with enjoyment and a sense of connection.

3. Empower families with fun, flexible tools

Families are more likely to participate when activities are playful and accessible, not just another assignment. Suggest resources that fit different family preferences: printable activity sheets, suggested library books and no-cost, ad-free digital platforms, such as Khan Academy Kids. These give children structured ways to practice and offer families tools that are easy to use, even with limited time.

In our district, many families use technology to extend classroom skills at home. For William, a rhyming game on a literacy app made practicing phonological awareness fun and stress-free; he returned to it repeatedly, reinforcing new skills through play.

Literacy Grows Best in Partnership

School-family partnerships also offer educators valuable feedback. When families share observations about what excites or challenges their children at home, teachers gain a fuller picture of each student’s progress. Digital platforms with teacher-, school- and district-level reporting can support this feedback loop by providing teachers with real-time data on at-home practice. This two-way exchange strengthens instruction and empowers both families and educators.

While curriculum, assessment and skilled teaching are essential, literacy is most likely to flourish when nurtured by both schools and families. When educators invite families into the process — demystifying the core elements of literacy, sharing routines and providing flexible, accessible tools — they help create a culture where reading is valued everywhere.

Strong school-family partnerships don’t just address achievement gaps. They lay the groundwork for the joy, confidence and curiosity that help children become lifelong readers.

At Khan Academy Kids, we believe in the power of the school-family partnership. For free resources to help strengthen children’s literacy development, explore the Khan Academy Kids resource hub for schools.

TII Falcon-H1 models now available on Amazon Bedrock Marketplace and Amazon SageMaker JumpStart

This post was co-authored with Jingwei Zuo from TII.

We are excited to announce the availability of the Technology Innovation Institute (TII)’s Falcon-H1 models on Amazon Bedrock Marketplace and Amazon SageMaker JumpStart. With this launch, developers and data scientists can now use six instruction-tuned Falcon-H1 models (0.5B, 1.5B, 1.5B-Deep, 3B, 7B, and 34B) on AWS, and have access to a comprehensive suite of hybrid architecture models that combine traditional attention mechanisms with State Space Models (SSMs) to deliver exceptional performance with unprecedented efficiency.

In this post, we present an overview of Falcon-H1 capabilities and show how to get started with TII’s Falcon-H1 models on both Amazon Bedrock Marketplace and SageMaker JumpStart.

Overview of TII and AWS collaboration

TII is a leading research institute based in Abu Dhabi. As part of the UAE’s Advanced Technology Research Council (ATRC), TII focuses on advanced technology research and development across AI, quantum computing, autonomous robotics, cryptography, and more. TII employs international teams of scientists, researchers, and engineers in an open and agile environment, aiming to drive technological innovation and position Abu Dhabi and the UAE as a global research and development hub in alignment with the UAE National Strategy for Artificial Intelligence 2031.

TII and Amazon Web Services (AWS) are collaborating to expand access to made-in-the-UAE AI models across the globe. By combining TII’s technical expertise in building large language models (LLMs) with AWS Cloud-based AI and machine learning (ML) services, professionals worldwide can now build and scale generative AI applications using the Falcon-H1 series of models.

About Falcon-H1 models

The Falcon-H1 architecture implements a parallel hybrid design that draws on both the Mamba and Transformer architectures. It combines the faster inference and lower memory footprint of SSMs like Mamba with the strength of the Transformer attention mechanism in understanding context and generalizing. The architecture scales across multiple configurations ranging from 0.5 billion to 34 billion parameters and provides native support for 18 languages. According to TII, published metrics indicate that smaller Falcon-H1 variants achieve performance parity with larger models. Some of the benefits of the Falcon-H1 series include:

- Performance – The hybrid attention-SSM model has optimized parameters with adjustable ratios between attention and SSM heads, leading to faster inference, lower memory usage, and strong generalization capabilities. According to TII benchmarks published in Falcon-H1’s technical blog post and technical report, Falcon-H1 models demonstrate superior performance across multiple scales against other leading Transformer models of similar or larger scales. For example, Falcon-H1-0.5B delivers performance similar to typical 7B models from 2024, and Falcon-H1-1.5B-Deep rivals many of the current leading 7B-10B models.

- Wide range of model sizes – The Falcon-H1 series includes six sizes: 0.5B, 1.5B, 1.5B-Deep, 3B, 7B, and 34B, with both base and instruction-tuned variants. The Instruct models are now available in Amazon Bedrock Marketplace and SageMaker JumpStart.

- Multilingual by design – The models support 18 languages natively (Arabic, Czech, German, English, Spanish, French, Hindi, Italian, Japanese, Korean, Dutch, Polish, Portuguese, Romanian, Russian, Swedish, Urdu, and Chinese) and can scale to over 100 languages according to TII, thanks to a multilingual tokenizer trained on diverse language datasets.

- Up to 256,000 context length – The Falcon-H1 series enables applications in long-document processing, multi-turn dialogue, and long-range reasoning, showing a distinct advantage over competitors in practical long-context applications like Retrieval Augmented Generation (RAG).

- Robust data and training strategy – Training of Falcon-H1 models employs an innovative approach that introduces complex data early on, contrary to traditional curriculum learning. It also implements strategic data reuse based on careful memorization window assessment. Additionally, the training process scales smoothly across model sizes through a customized Maximal Update Parametrization (µP) recipe, specifically adapted for this novel architecture.

- Balanced performance in science and knowledge-intensive domains – Through a carefully designed data mixture and regular evaluations during training, the model achieves strong general capabilities and broad world knowledge while minimizing unintended specialization or domain-specific biases.

In line with its mission to foster AI accessibility and collaboration, TII has released the Falcon-H1 models under the Falcon LLM license. The license offers the following benefits:

- Open source nature and accessibility

- Multi-language capabilities

- Cost-effectiveness compared to proprietary models

- Energy-efficiency

About Amazon Bedrock Marketplace and SageMaker JumpStart

Amazon Bedrock Marketplace offers access to over 100 popular, emerging, specialized, and domain-specific models, so you can find the best proprietary and publicly available models for your use case based on factors such as accuracy, flexibility, and cost. On Amazon Bedrock Marketplace you can discover models in a single place and access them through unified and secure Amazon Bedrock APIs. You can also select your desired number of instances and the instance type to meet the demands of your workload and optimize your costs.

SageMaker JumpStart helps you quickly get started with machine learning. It provides access to state-of-the-art model architectures, such as language models, computer vision models, and more, without having to build them from scratch. With SageMaker JumpStart you can deploy models in a secure environment by provisioning them on SageMaker inference instances and isolating them within your virtual private cloud (VPC). You can also use Amazon SageMaker AI to further customize and fine-tune the models and streamline the entire model deployment process.

Solution overview

This post demonstrates how to deploy a Falcon-H1 model using both Amazon Bedrock Marketplace and SageMaker JumpStart. Although we use Falcon-H1-0.5B as an example, you can apply these steps to other models in the Falcon-H1 series. For help determining which deployment option—Amazon Bedrock Marketplace or SageMaker JumpStart—best suits your specific requirements, see Amazon Bedrock or Amazon SageMaker AI?

Deploy Falcon-H1-0.5B-Instruct with Amazon Bedrock Marketplace

In this section, we show how to deploy the Falcon-H1-0.5B-Instruct model in Amazon Bedrock Marketplace.

Prerequisites

To try the Falcon-H1-0.5B-Instruct model in Amazon Bedrock Marketplace, you must have access to an AWS account that will contain your AWS resources.

Prior to deploying Falcon-H1-0.5B-Instruct, verify that your AWS account has sufficient quota allocation for ml.g6.xlarge instances. The default quota for endpoints using several instance types and sizes is 0, so attempting to deploy the model without a higher quota will trigger a deployment failure.

To request a quota increase, open the AWS Service Quotas console and search for Amazon SageMaker. Locate ml.g6.xlarge for endpoint usage and choose Request quota increase, then specify your required limit value. After the request is approved, you can proceed with the deployment.
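The quota check described above can also be scripted. The following is a minimal sketch, assuming the boto3 Service Quotas client and the quota naming pattern "<instance type> for endpoint usage" mentioned in the console steps. The helper names (`find_quota`, `list_sagemaker_quotas`) and the Region you pass in are illustrative choices, not part of the original post.

```python
from typing import Iterable, Optional


def find_quota(quotas: Iterable[dict], instance_type: str) -> Optional[dict]:
    """Return the endpoint-usage quota entry for an instance type, if present."""
    wanted = f"{instance_type} for endpoint usage"
    for quota in quotas:
        if quota.get("QuotaName") == wanted:
            return quota
    return None


def list_sagemaker_quotas(region_name: str) -> list:
    """Fetch every SageMaker quota in a Region (requires AWS credentials)."""
    # Imported lazily so find_quota can be used without the AWS SDK installed.
    import boto3

    client = boto3.client("service-quotas", region_name=region_name)
    paginator = client.get_paginator("list_service_quotas")
    quotas = []
    for page in paginator.paginate(ServiceCode="sagemaker"):
        quotas.extend(page["Quotas"])
    return quotas


def report_quota(region_name: str, instance_type: str = "ml.g6.xlarge") -> None:
    """Print the current endpoint quota for the instance type."""
    quota = find_quota(list_sagemaker_quotas(region_name), instance_type)
    if quota is None:
        print(f"No endpoint quota found for {instance_type}")
    else:
        print(f"{quota['QuotaName']}: {quota['Value']}")
```

Calling `report_quota("us-east-1")` (the Region is an example) prints the current value; a value of 0 means you need to request an increase before deploying.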

Deploy the model using the Amazon Bedrock Marketplace UI

To deploy the model using Amazon Bedrock Marketplace, complete the following steps:

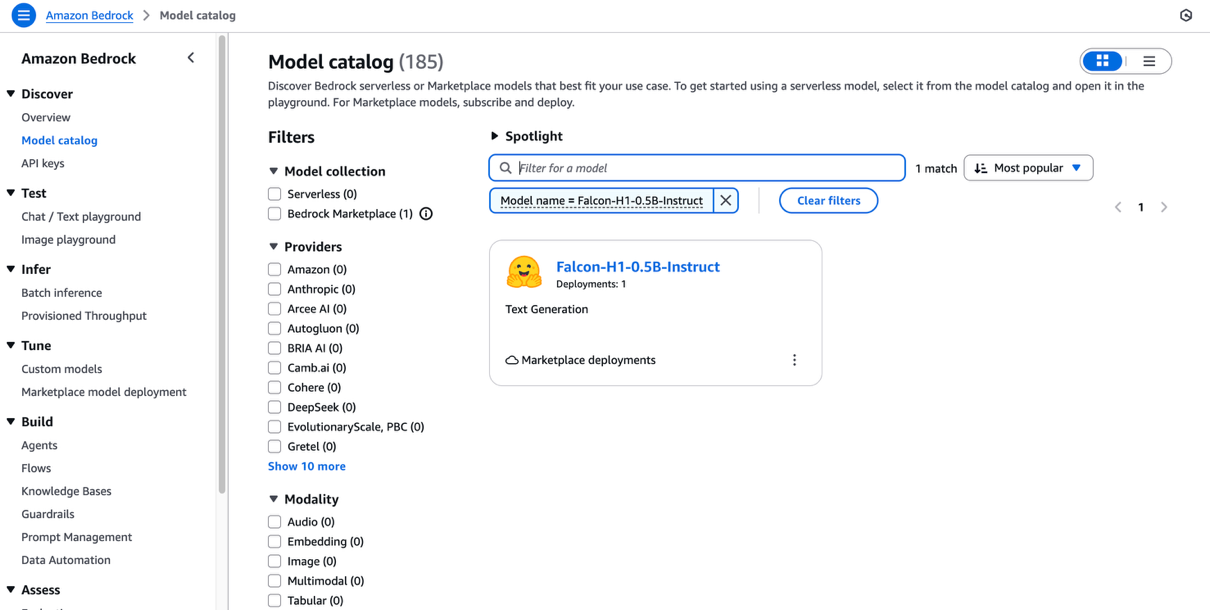

- On the Amazon Bedrock console, under Discover in the navigation pane, choose Model catalog.

- Filter for Falcon-H1 as the model name and choose Falcon-H1-0.5B-Instruct.

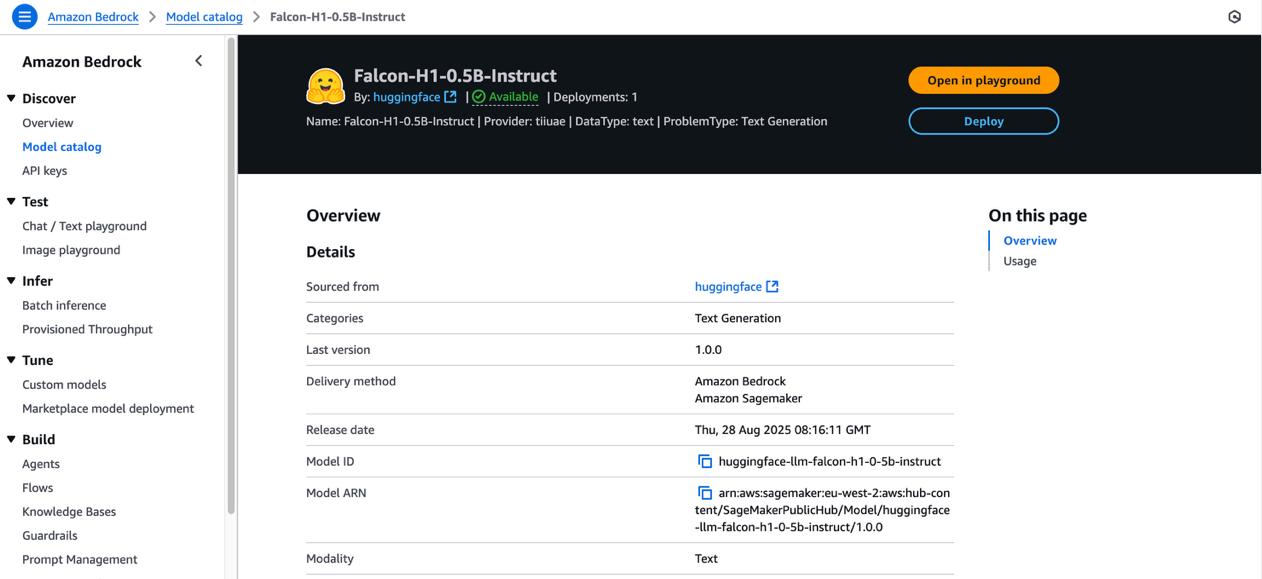

The model overview page includes information about the model’s license terms, features, setup instructions, and links to further resources.

- Review the model license terms, and if you agree with the terms, choose Deploy.

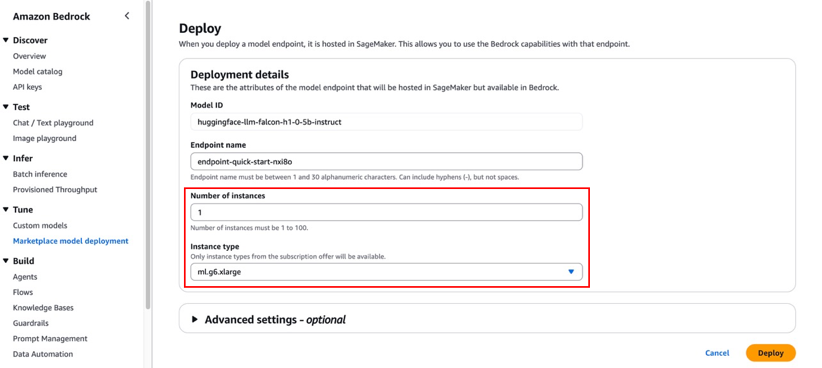

- For Endpoint name, enter an endpoint name or leave it as the default pre-populated name.

- To minimize costs while experimenting, set the Number of instances to 1.

- For Instance type, choose from the list of compatible instance types. Falcon-H1-0.5B-Instruct is an efficient model, so ml.g6.xlarge (the instance type covered in the prerequisites) is sufficient for this exercise.

Although the default configurations are typically sufficient for basic needs, you can customize advanced settings like VPC, service access permissions, encryption keys, and resource tags. These advanced settings might require adjustment for production environments to maintain compliance with your organization’s security protocols.

- Choose Deploy.

- A prompt asks you to stay on the page while the AWS Identity and Access Management (IAM) role is being created. If your AWS account lacks sufficient quota for the selected instance type, you’ll receive an error message. In this case, refer to the preceding prerequisite section to increase your quota, then try the deployment again.

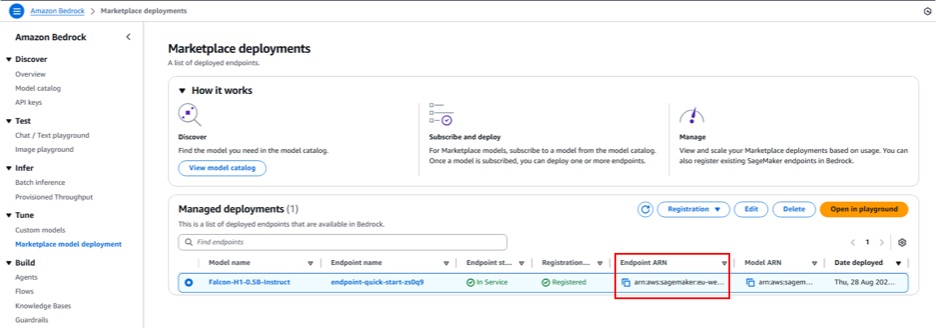

While deployment is in progress, you can choose Marketplace model deployments in the navigation pane to monitor the deployment progress in the Managed deployments section. When the deployment is complete, the endpoint status will change from Creating to In Service.

Interact with the model in the Amazon Bedrock Marketplace playground

You can now test Falcon-H1 capabilities directly in the Amazon Bedrock Marketplace playground. Select the managed deployment and choose Open in playground to interact with Falcon-H1-0.5B-Instruct.

Invoke the model using code

In this section, we demonstrate how to invoke the model using the Amazon Bedrock Converse API.

When you invoke the model, pass the endpoint’s Amazon Resource Name (ARN), which begins with arn:aws:sagemaker, as the model ID. You can find this ARN on the endpoint details page in the Managed deployments section.
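The original code listing did not survive in this copy of the post, so the following is a minimal sketch of a Converse API call rather than the authors' exact code. It assumes the boto3 `bedrock-runtime` client. The helper names, the default inference settings, and the Region are illustrative, and the ARN must be replaced with your own endpoint's ARN.

```python
def build_converse_request(
    endpoint_arn: str,
    prompt: str,
    max_tokens: int = 256,
    temperature: float = 0.1,
) -> dict:
    """Build keyword arguments for the bedrock-runtime converse() call."""
    return {
        # For Marketplace deployments, the endpoint ARN is used as the model ID.
        "modelId": endpoint_arn,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def extract_text(response: dict) -> str:
    """Concatenate the text blocks of the assistant message in a response."""
    blocks = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in blocks)


def ask(endpoint_arn: str, prompt: str, region_name: str) -> str:
    """Send one user prompt to the deployed endpoint (requires AWS credentials)."""
    # Imported lazily so the pure helpers work without the AWS SDK installed.
    import boto3

    client = boto3.client("bedrock-runtime", region_name=region_name)
    response = client.converse(**build_converse_request(endpoint_arn, prompt))
    return extract_text(response)
```

A call might look like `ask("arn:aws:sagemaker:<region>:<account-id>:endpoint/<endpoint-name>", "What is a state space model?", "<region>")`, with the placeholders filled in from the endpoint details page.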

To learn more about the detailed steps and example code for invoking the model using Amazon Bedrock APIs, refer to Submit prompts and generate response using the API.

Deploy Falcon-H1-0.5B-Instruct with SageMaker JumpStart

You can access foundation models (FMs) in SageMaker JumpStart through Amazon SageMaker Studio, the SageMaker SDK, and the AWS Management Console. In this walkthrough, we demonstrate how to deploy Falcon-H1-0.5B-Instruct using the SageMaker Python SDK. Refer to Deploy a model in Studio to learn how to deploy the model through SageMaker Studio.

Prerequisites

To deploy Falcon-H1-0.5B-Instruct with SageMaker JumpStart, you must have the following prerequisites:

- An AWS account that will contain your AWS resources.

- An IAM role to access SageMaker AI. To learn more about how IAM works with SageMaker AI, see Identity and Access Management for Amazon SageMaker AI.

- Access to SageMaker Studio with a JupyterLab space, or an interactive development environment (IDE) such as Visual Studio Code or PyCharm.

Deploy the model programmatically using the SageMaker Python SDK

Before deploying Falcon-H1-0.5B-Instruct using the SageMaker Python SDK, make sure you have installed the SDK and configured your AWS credentials and permissions.

The following code example demonstrates how to deploy the model:
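The original listing is not preserved in this copy, so what follows is a hedged sketch, assuming version 2.x of the SageMaker Python SDK and its `JumpStartModel` class. The JumpStart model ID for Falcon-H1-0.5B-Instruct is not stated in the text, so it is left as a required argument that you look up in the JumpStart catalog. The function name, the `model_cls` hook, and the `accept_eula` default are illustrative.

```python
from typing import Any, Optional


def deploy_falcon_h1(
    model_id: str,
    instance_type: str = "ml.g6.xlarge",
    accept_eula: bool = False,
    model_cls: Optional[Any] = None,
) -> Any:
    """Deploy a JumpStart model and return its predictor.

    model_id must be the JumpStart ID of the Falcon-H1 model you want;
    it is not hardcoded here because the source text does not state it.
    """
    if model_cls is None:
        # Imported lazily; requires the SageMaker Python SDK (2.x assumed).
        from sagemaker.jumpstart.model import JumpStartModel

        model_cls = JumpStartModel

    model = model_cls(model_id=model_id, instance_type=instance_type)
    # Set accept_eula=True only after reviewing the model license terms.
    predictor = model.deploy(accept_eula=accept_eula)
    print(f"Endpoint name: {predictor.endpoint_name}")
    return predictor
```

The `model_cls` parameter exists only so the function can be exercised with a stand-in class; in normal use you would omit it and let the SDK class be used.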

When the previous code segment completes successfully, the Falcon-H1-0.5B-Instruct model deployment is complete and available on a SageMaker endpoint. Note the endpoint name shown in the output; you will replace the placeholder in the following code segment with this value.

The following code demonstrates how to prepare the input data, make the inference API call, and process the model’s response:
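As the original listing is missing here, this is a minimal sketch of the three steps using the boto3 `sagemaker-runtime` client. The request body is an assumption: it uses a chat-style `messages` schema, which many LLM serving containers accept, but the schema your endpoint expects depends on its container, so check the model's documentation. The helper names and the optional `client` argument are illustrative.

```python
import json
from typing import Any, Optional


def build_payload(prompt: str, max_tokens: int = 256, temperature: float = 0.1) -> bytes:
    """Serialize a chat-style request body (assumed schema, see lead-in)."""
    body = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": temperature,
    }
    return json.dumps(body).encode("utf-8")


def invoke(
    endpoint_name: str,
    prompt: str,
    region_name: str,
    client: Optional[Any] = None,
) -> Any:
    """Call the SageMaker endpoint and return the decoded JSON response."""
    if client is None:
        # Imported lazily; requires boto3 and AWS credentials.
        import boto3

        client = boto3.client("sagemaker-runtime", region_name=region_name)

    response = client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=build_payload(prompt),
    )
    # The response body is a stream; read and decode it as JSON.
    return json.loads(response["Body"].read())
```

Pass the endpoint name noted from the deployment output as `endpoint_name`. The function returns the raw decoded JSON so you can inspect its structure before extracting the generated text.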

Clean up

To avoid ongoing charges for AWS resources used while experimenting with Falcon-H1 models, make sure to delete all deployed endpoints and their associated resources when you’re finished. To do so, complete the following steps:

- Delete Amazon Bedrock Marketplace resources:

  - On the Amazon Bedrock console, choose Marketplace model deployments in the navigation pane.

  - Under Managed deployments, choose the Falcon-H1 model endpoint you deployed earlier.

  - Choose Delete and confirm the deletion if you no longer need to use this endpoint in Amazon Bedrock Marketplace.

- Delete SageMaker endpoints:

  - On the SageMaker AI console, in the navigation pane, choose Endpoints under Inference.

  - Select the endpoint associated with the Falcon-H1 models.

  - Choose Delete and confirm the deletion. This stops the endpoint and avoids further compute charges.

- Delete SageMaker models:

  - On the SageMaker AI console, choose Models under Inference.

  - Select the model associated with your endpoint and choose Delete.

Always verify that all endpoints are deleted after experimentation to optimize costs. Refer to the Amazon SageMaker documentation for additional guidance on managing resources.
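The SageMaker part of the cleanup can also be done programmatically. The following is a minimal sketch, assuming the boto3 `sagemaker` client; the function name and the optional `client` argument are illustrative. It looks up the endpoint configuration and its models first, because that information is no longer retrievable through the endpoint once the endpoint is deleted.

```python
from typing import Any, List, Optional


def delete_endpoint_resources(
    endpoint_name: str,
    region_name: str,
    client: Optional[Any] = None,
) -> List[str]:
    """Delete an endpoint, its endpoint configuration, and its models.

    Returns the names of the models that were deleted.
    """
    if client is None:
        # Imported lazily; requires boto3 and AWS credentials.
        import boto3

        client = boto3.client("sagemaker", region_name=region_name)

    # Resolve the configuration and model names before deleting anything.
    endpoint = client.describe_endpoint(EndpointName=endpoint_name)
    config_name = endpoint["EndpointConfigName"]
    config = client.describe_endpoint_config(EndpointConfigName=config_name)
    model_names = [
        variant["ModelName"]
        for variant in config["ProductionVariants"]
        if "ModelName" in variant
    ]

    client.delete_endpoint(EndpointName=endpoint_name)
    client.delete_endpoint_config(EndpointConfigName=config_name)
    for name in model_names:
        client.delete_model(ModelName=name)
    return model_names
```

This sketch covers only resources visible through the SageMaker API; it does not replace the Amazon Bedrock Marketplace console steps listed above.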

Conclusion

The availability of Falcon-H1 models in Amazon Bedrock Marketplace and SageMaker JumpStart helps developers, researchers, and businesses build cutting-edge generative AI applications with ease. Falcon-H1 models offer multilingual support (18 languages) across various model sizes (from 0.5B to 34B parameters) and support up to 256K context length, thanks to their efficient hybrid attention-SSM architecture.

By using the seamless discovery and deployment capabilities of Amazon Bedrock Marketplace and SageMaker JumpStart, you can accelerate your AI innovation while benefiting from the secure, scalable, and cost-effective AWS Cloud infrastructure.

We encourage you to explore the Falcon-H1 models in Amazon Bedrock Marketplace or SageMaker JumpStart. You can use these models in AWS Regions where Amazon Bedrock or SageMaker JumpStart and the required instance types are available.

For further learning, explore the AWS Machine Learning Blog, SageMaker JumpStart GitHub repository, and Amazon Bedrock User Guide. Start building your next generative AI application with Falcon-H1 models and unlock new possibilities with AWS!

Special thanks to everyone who contributed to the launch: Evan Kravitz, Varun Morishetty, and Yotam Moss.

About the authors

Mehran Nikoo leads the Go-to-Market strategy for Amazon Bedrock and agentic AI in EMEA at AWS, where he has been driving the development of AI systems and cloud-native solutions over the last four years. Prior to joining AWS, Mehran held leadership and technical positions at Trainline, McLaren, and Microsoft. He holds an MBA from Warwick Business School and an MRes in Computer Science from Birkbeck, University of London.

Mustapha Tawbi is a Senior Partner Solutions Architect at AWS, specializing in generative AI and ML, with 25 years of enterprise technology experience across AWS, IBM, Sopra Group, and Capgemini. He has a PhD in Computer Science from Sorbonne and a Master’s degree in Data Science from Heriot-Watt University Dubai. Mustapha leads generative AI technical collaborations with AWS partners throughout the MENAT region.

Jingwei Zuo is a Lead Researcher at the Technology Innovation Institute (TII) in the UAE, where he leads the Falcon Foundational Models team. He received his PhD in 2022 from University of Paris-Saclay, where he was awarded the Plateau de Saclay Doctoral Prize. He holds an MSc (2018) from the University of Paris-Saclay, an Engineer degree (2017) from Sorbonne Université, and a BSc from Huazhong University of Science & Technology.

John Liu is a Principal Product Manager for Amazon Bedrock at AWS. Previously, he served as the Head of Product for AWS Web3/Blockchain. Prior to joining AWS, John held various product leadership roles at public blockchain protocols and financial technology (fintech) companies for 14 years. He also has nine years of portfolio management experience at several hedge funds.

Hamza MIMI is a Solutions Architect for partners and strategic deals in the MENAT region at AWS, where he bridges cutting-edge technology with impactful business outcomes. With expertise in AI and a passion for sustainability, he helps organizations architect innovative solutions that drive both digital transformation and environmental responsibility, transforming complex challenges into opportunities for growth and positive change.
