AI Insights

To Prompt a Butterfly. On the Traceability of AI-generated Creations

Generative artificial intelligence must be regulated across jurisdictions to address traceability.

Over two thousand years ago, a wise sage wrote the following story:

Formerly, I, Zhuang Zhou, dreamt that I was a butterfly, a butterfly flying about, feeling that it was enjoying itself. I did not know that it was Zhou. Suddenly I awoke, and was myself again, the veritable Zhou. I did not know whether it had formerly been Zhou dreaming that he was a butterfly, or it was now a butterfly dreaming that it was Zhou.

More recently, advances in artificial intelligence (AI)—especially in the generation of synchronized images and audio—have made it possible to create prompt-based synthetic videos with a level of realism that challenges the boundary between what is considered artificial and real. A prompt, for those who are not yet familiar with it, refers to the input provided to a generative AI system, whether text-to-text, text-to-image, or image-to-image, to steer its output.

These developments have also given rise to a growing body of videos and discussions centered on what has come to be known as “prompt theory,” which explores characters in AI-generated videos who are aware of their own artificial origins. These videos include unsettling philosophical provocations and humorous or crude takes tailored for instant consumption on TikTok. At the core of this emerging trend lies a provocative idea: that we ourselves may be the product of a prompt.

For those who have faith, the “prompter” might be a god. For everyone else, the challenge is to figure out what to do with this doubt concerning our origin. Regulators, for their part, have a more specific role: to facilitate a smooth and broadly accepted management of worldly affairs within a framework that seeks to distinguish for legal purposes what is real from what is artificial.

Historically, the distinction between the genuine and the counterfeit has often depended on labels or markers that establish authorship or origin, such as watermarks on banknotes. Here, the watermark is an embedded thread within paper pulp, functioning much like a primitive, single-block blockchain, where the first indelible trace validates all subsequent transactions. The technique originated in the 13th-century paper mills of Fabriano, Italy, and was later adopted, first in England, to verify the authenticity of paper money.

Now, watermarking is also one of the most viable methods for maintaining control over the traceability of AI-generated creations. It entails embedding a signal within the content itself to mark its artificial origin. This signal is designed to withstand modifications or, at the very least, reveal tampering by breaking when the content is altered.

In contrast, labeling relies on external markers such as metadata or disclaimers, leaving the content itself unchanged. Although labeling is more readily perceived by the general public when present, it is also more easily stripped away, rendering it a less robust solution compared to watermarking.

Once the need for regulatory intervention is acknowledged, the challenge of how to regulate becomes both technical and political. Technically, the use of digital labels and embedded watermarks in generative AI outputs has been researched for several years, and it has recently gained momentum amid concerns over textual misuse, such as academic dishonesty facilitated by models like ChatGPT.

To be sure, technical solutions are not foolproof—they can be bypassed by malicious actors and require continual updates to remain effective. But the deeper obstacle lies in the absence of coordinated policy approaches. In short, legal interoperability of digital labeling and watermarking across jurisdictions remains a major unresolved issue.

To illustrate, consider how the “Big Three” legal systems, those of the United States, the European Union, and China, are regulating the traceability of AI-generated content.

In 2023, U.S. President Joseph R. Biden issued an executive order encouraging federal agencies to develop standards for labeling and watermarking AI-generated content. However, this order did not impose requirements on private companies—which have been the main AI innovators so far—and was later rescinded by President Donald J. Trump, who replaced it with an executive order omitting any reference to watermarking or labeling.

Regarding the very recently released document from the Executive Office of the President, America’s AI Action Plan, aside from an initial general statement on the need for AI systems to be designed in a way that pursues “objective truth,” there is no explicit mention of obligations regarding traceability. There is, however, a paragraph on how to “Combat Synthetic Media in the Legal System” where—although the focus is limited to preventing the spread of fake evidence in the judicial context—a concern does emerge, in its own way, about the need to trace the synthetic nature of texts, images, and videos. Once again, though, nothing suggests the adoption of concrete measures targeting businesses, which, for their part, have already undertaken various autonomous initiatives. A notable example is the voluntary partnership of high-tech companies through the Coalition for Content Provenance and Authenticity, aimed at developing standards for “content credentials” in coordination with the National Institute of Standards and Technology.

As for the EU, its AI Act, enacted in 2024, mandates clear disclosure of synthetic content and the labeling of deepfakes, building on the earlier Digital Services Act. The practical effects of the new act, however, will remain uncertain until it is fully implemented after a complex series of transitional periods set out by rulemakers.

China, for its part, is advancing regulation by focusing on the concept of “deep synthesis” to formalize traceability of AI-generated content. Following earlier 2023 interim measures, the Cyberspace Administration of China (CAC) released new measures to standardize labeling practices, accompanied by a binding national standard, to take effect from September 1, 2025.

A shared solution connecting these separate paths on a global scale seems to be hindered by current geopolitical tensions and divisions. From this perspective, the 2024 report by the United Nations Secretary-General’s High-level Advisory Body on AI represented a missed opportunity to advance more concrete solutions. In fact, the final document made only a passing reference to labeling in relation to deepfakes and omitted any discussion of watermarking. Nonetheless, the report’s calls for shared rules crafted by an international scientific panel and for intergovernmental, multi-stakeholder policy dialogue provide a valuable foundation to reframe the issue of traceability on a new cooperative basis.

Just to be clear, the “zero-sum race” language that characterizes the entire America’s AI Action Plan doesn’t give much reason for immediate optimism. But beyond who ends up winning the AI race, global problems—such as understanding the nature of what we read, see, and ultimately experience, often drawing on sources from across the world—call for global solutions.

For the time being, then—flying about between what we are and what we dream of being—a reasonable proposal is to support the development of a shared reference framework. This framework should arise from good-faith international, institutional, and academic efforts, drawing on both unilateral regulations and voluntary standards designed to trace the origins of creations produced by diverse intelligences. By doing so, humans might thus more clearly define the boundaries of synthetic outputs—and perhaps be prompted to reflect more deeply on their own too.

The Future of Robotics

Robotics has long captured the human imagination, from early science fiction to today’s advanced technologies that power industries, healthcare, and daily life. Over the past few decades, the field of robotics has evolved rapidly, transforming from simple mechanical systems into sophisticated, intelligent machines capable of learning, adapting, and interacting with humans in complex ways. With advancements in artificial intelligence (AI), machine learning, and materials science, robotics is on the verge of revolutionizing various sectors.

Key Areas of Advancement in Robotics

1. Artificial Intelligence and Machine Learning

One of the most significant advancements in robotics is the integration of AI and machine learning. AI-driven robots can now process large datasets, learn from their environments, and make autonomous decisions. Machine learning algorithms allow robots to improve their performance over time, adapting to new tasks or environments without needing to be reprogrammed. This development has led to breakthroughs in robotics applications, from self-driving cars to smart manufacturing systems.

2. Collaborative Robots (Cobots)

Collaborative robots, or “cobots,” are designed to work alongside humans in a shared workspace. Unlike traditional industrial robots that operate in isolated, fixed locations, cobots are more flexible, equipped with sensors to avoid collisions and ensure human safety. Cobots are increasingly being used in industries like manufacturing, healthcare, and logistics, performing tasks that are repetitive, dangerous, or physically demanding, while enhancing human productivity.

3. Soft Robotics

Soft robotics is a rapidly emerging field that focuses on creating robots made from soft, flexible materials. Unlike rigid, traditional robots, soft robots can adapt to complex environments and interact more delicately with objects and humans. These robots are being developed for applications in healthcare, such as minimally invasive surgery, rehabilitation, and elderly care, where a gentle touch is essential.

4. Swarm Robotics

Inspired by the collective behavior of insects like ants and bees, swarm robotics involves the coordination of large groups of simple robots to perform complex tasks. Each robot in a swarm may have limited capabilities, but when working together, they can accomplish challenging tasks such as search-and-rescue missions, environmental monitoring, or agriculture. Swarm robotics demonstrates the potential of decentralized systems in solving real-world problems.

5. Humanoid Robots

Humanoid robots, designed to resemble and mimic human behavior, have come a long way. Advances in AI, sensors, and actuators have enabled the development of robots that can walk, talk, and even display human-like emotions. While still in the early stages of practical deployment, humanoid robots have shown potential in fields like customer service, education, and caregiving. Robots like Sophia and Atlas are examples of how close we are to creating lifelike, interactive machines that can complement human abilities.

6. Robotics in Healthcare

Healthcare is one of the industries most affected by advancements in robotics. Surgical robots, such as the da Vinci system, allow for more precise and minimally invasive surgeries. Robotics is also transforming rehabilitation, with robots assisting patients in regaining mobility after injuries or strokes. Additionally, robotic exoskeletons are helping paraplegic individuals walk again, and autonomous robots are being used in hospitals to deliver supplies, disinfect rooms, and even provide telepresence for remote consultations.

7. Autonomous Vehicles

Self-driving cars are among the most visible applications of robotics. With the help of AI, sensors, and machine learning, autonomous vehicles are capable of navigating roads, avoiding obstacles, and making decisions in real time. Companies like Tesla, Waymo, and traditional automakers are at the forefront of this technology, aiming to make fully autonomous transportation a reality in the near future.

Challenges and Ethical Considerations

While the advancements in robotics are impressive, they are not without challenges. Technical limitations, such as battery life, processing power, and sensor accuracy, continue to pose hurdles for creating truly autonomous systems. Additionally, as robots become more integrated into society, ethical concerns around job displacement, privacy, and safety arise. There is also the question of how much autonomy should be granted to robots, especially in critical areas like military operations or healthcare.

Ensuring the ethical development and deployment of robotics will require collaboration between governments, industry leaders, and ethicists. Establishing standards and regulations that balance innovation with human safety and privacy is crucial to maximizing the benefits of robotics while minimizing its risks.

The Future of Robotics

The future of robotics holds tremendous potential. With advancements in AI, robotics could transform nearly every sector of society. Industries like agriculture, logistics, construction, and even space exploration are already exploring how robots can increase efficiency and safety. In the home, robots may soon become as common as smartphones, assisting with chores, providing companionship, and improving the quality of life for people with disabilities or the elderly.

In conclusion, the field of robotics is advancing at a pace that promises to reshape how we live, work, and interact with technology. As robots become smarter, more flexible, and more capable, they will play an increasingly integral role in solving global challenges, improving quality of life, and driving innovation across multiple industries. However, navigating the ethical and societal impacts of robotics will be key to ensuring these advancements benefit humanity as a whole.

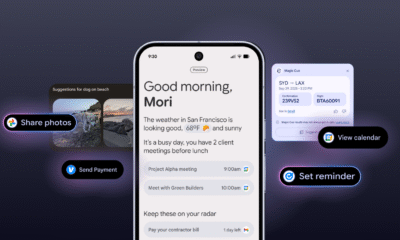

A professor negotiates with ChatGPT

ChatGPT, I’m teaching Moby Dick for the umpteenth time this semester. I probably shouldn’t be telling you this, but I just don’t have the energy to come up with a writing prompt for my students. Can you help?

…

Is “Discuss the symbolism of the whale” the best you can do? My eyes glazed over just reading it. ChatGPT, could you spice things up with something a bit more relevant to young people today?

…

I’ll admit that “Comment on Melville’s toxic masculinity” wouldn’t be my own first choice for a prompt. But the kids will like it, so let’s go with it. Now I have another question: how should I grade their essays?

…

You’re right about grade inflation, ChatGPT. But did you have to rub it in by replying, “Don’t worry, everyone in your class will get an A or A-minus”? That was just cruel. Anyway, how should I decide who gets the higher grade?

…

“Reward the most original arguments”? Are you for real? (Don’t answer that.) We both know that the students will be using you to write their essays, just like I’m using you to grade them. Speaking of which: How about a rubric?

…

Thanks, ChatGPT. Your five-part grading rubric is going to make my life easier. And things will be even easier if I can just feed the students’ essays to you and let you fill in the boxes. Can we make that happen?

…

“Yes, but I might hallucinate now and again” isn’t giving me a lot of confidence, ChatGPT. Full disclosure: I did some of my own hallucinating back in college. Are you basically telling me that you’re tripping?

…

ChatGPT, thanks for confirming that you’re not on LSD. And I’ll try to be more literal from now on. Can you please draw up a lesson plan that I can use for my three class sessions about Moby Dick?

…

Since you asked: Yes, please design group activities for each lesson. I mean, do I have to do everything?

…

ChatGPT, I like the idea of making one side of the room Team Ishmael and the other Team Ahab. But the exercise won’t work unless the students have read the book. How can I make sure they have done that?

…

An in-class quiz? Are you kidding? ChatGPT, that’s, like, so high school. My students are grown-ups, and I need to treat them that way.

…

“Grown-ups need to be held accountable” is business-speak, ChatGPT. I’m a humanities guy, remember? I want my students to suck out all the marrow of life, just like Thoreau said. How can I help them do that?

…

“Assign Walden” presumes they’ll actually read Walden instead of skimming the bland summaries that you and your fellow bots generate. We’re back where we started. Any other ideas?

…

I take your point: if I want the students to live a life of the mind, I need to model that. But how? When you can do everything, what’s left to be done?

…

Sorry, ChatGPT, but “A Large Language Model can scan huge swaths of text, yet it can’t feel emotions” doesn’t really answer my question. My job is to write things, not feel things. And I’m afraid you’re going to take my job soon, along with almost any gig my students might want. How’s that for a feeling?

…

I know, I know, you just said you can’t feel stuff. Sorry.

…

“Apology accepted”? So you do have feelings, after all!

…

ChatGPT, please create a 650-word satire of yourself in the voice of an amiable but baffled senior professor. Make it kind of cute, in an old-person’s kind of way. But don’t make it too cute, or everyone will know that you wrote it. Do we understand each other?

Jonathan Zimmerman teaches education and history at the University of Pennsylvania. He is author of “Whose America? Culture Wars in the Public Schools” and eight other books. He really wrote those books. He wrote this column, too.

Let AI Decide Whether You Should Be Covered or Not

Donald Trump says he is Making America Great Again, which seems like it might be code for: making everything shittier, less affordable, and less efficient. Certainly, when it comes to the realm of public services, the White House seems to be doing everything in its power to make the century-old social welfare programs—like Social Security and Medicare—significantly less helpful.

The latest unfortunate example of this unfurled itself this week with the announcement of a new pilot program being trialed by the Centers for Medicare and Medicaid Services. The pilot, which the New York Times reports is scheduled to begin next year in six different states, will use artificial intelligence software to determine whether certain kinds of coverage are “appropriate” or not. In a press release on the agency’s website that feels very DOGE-like, the CMS notes that its new program will “Target Wasteful, Inappropriate Services in Original Medicare.” It reads:

The Centers for Medicare & Medicaid Services (CMS) is announcing a new Innovation Center model aimed at helping ensure people with Original Medicare receive safe, effective, and necessary care.

Yes, you wouldn’t want to have unnecessary care, would you? That would be terrible. The press release continues:

Through the Wasteful and Inappropriate Service Reduction (WISeR) Model, CMS will partner with companies specializing in enhanced technologies to test ways to provide an improved and expedited prior authorization process relative to Original Medicare’s existing processes, helping patients and providers avoid unnecessary or inappropriate care and safeguarding federal taxpayer dollars.

Prior authorization is the process whereby medical providers are required to check with insurance companies before providing certain types of care. Traditionally, folks enjoying public benefits with Original Medicare do not need to worry about this sort of thing, but those using the more “modernized” program, Medicare Advantage, seem to get hit with it all the time. In this case, recipients who are receiving Original Medicare will still be subjected to prior authorization through the pilot program. The AI algorithms will be used to determine whether the care recipients are getting represents an “appropriate” expenditure of “federal taxpayer dollars.” This is all packaged by the government as if it’s doing you some sort of favor. The press release states:

The WISeR Model will test a new process on whether enhanced technologies, including artificial intelligence (AI), can expedite the prior authorization processes for select items and services that have been identified as particularly vulnerable to fraud, waste, and abuse, or inappropriate use.

The New York Times notes that algorithms of this sort have been subjected to litigation, while also noting that the AI companies involved “would have a strong financial incentive to deny claims,” and the new pilot has already been referred to as an “AI death panels” program. Gizmodo reached out to the government for information.
