AI Research

The Overlooked Climate Risks of Artificial Intelligence

Artificial Intelligence (AI) is rapidly diffusing into every sector of the economy and daily life. Its proliferation is often framed within the prevailing narrative of ‘AI for good,’ including the promise of AI as a tool to address global challenges such as climate change. Yet this optimistic framing overlooks a growing and underexamined reality: the extent to which AI itself can contribute to climate risks.

Current concerns about AI’s adverse climate impacts are largely confined to operational energy and water use by data centers. While these impacts are important, they represent only a fraction of the ways in which AI can influence climate outcomes. AI systems will reshape behavior, affect infrastructure, and alter economic and political dynamics in ways that may work against decarbonization efforts. The risks extend from individual choices and system-level efficiencies to public trust in technologies and climate governance.

Recognizing and identifying these varied risk pathways is essential if we are to ensure AI supports—rather than undermines—climate action. Drawing on a broad taxonomy of AI-related risks, our recent analysis has mapped dozens of potential linkages between AI and climate vulnerabilities, capturing key dimensions of AI-related risks including misinformation, discrimination, privacy and security, malicious use, human-computer interaction failures, and broader socioeconomic harms. These include links between AI risks and the building blocks of net-zero energy pathways, including: 1) sectoral energy demand and electricity supply networks; 2) low-carbon technology deployment and behavioural shifts; 3) climate policy; and 4) climate governance institutions (see full map here). While these links are still emerging, they highlight the urgent need to account for AI’s indirect and systemic impacts on climate goals.

This article outlines several key areas where AI contributes to climate risk, whether directly, indirectly, or through unintended consequences. Understanding these systemic risk linkages is essential to aligning AI development with climate change mitigation goals; without that alignment, those goals will be pushed out of reach.

Direct energy impacts and emissions

AI-driven efficiency gains, while potentially reducing resource use, often trigger rebound effects. These occur when improved efficiency lowers costs, which in turn spurs increased consumption, ultimately offsetting any environmental benefits. Efficiency and productivity gains from many different AI applications that reduce frictions or transaction costs can lead to a surge in energy-hungry activity. This is evident in contexts from e-commerce and advertising to freight logistics and buildings’ energy performance, as well as AI data centers themselves.

Moreover, AI-powered platforms frequently shape consumer behavior through automated nudging, prioritizing engagement, and with it the interests of AI developers and deployers, over sustainability. For instance, ChatGPT automatically provides follow-up suggestions in chats that prompt people to continue using it for more queries or more image generation, stimulating rather than managing demand. Such systemic incentivization of energy-intensive behaviour is at odds with climate objectives.

Another risk is the growing reliance on agentic AI, capable of making decisions on behalf of users. In contexts like travel, healthcare, and finance, such systems risk misalignment with users’ values. For example, an AI agent tasked with booking your next travel destination might optimize for comfort or price, rather than low-carbon options.

Cybersecurity threats to low-carbon technologies

AI significantly escalates cybersecurity risks. Researchers widely anticipate an increase in AI-based hacking and data breaches as attacks become easier to automate and propagate. This presents an obstacle to the deployment of smart, networked low-carbon technologies, including electric vehicles, smart building systems, and grid-responsive technologies such as heat pumps. These technologies need to be adopted at scale, and perceived security risks could seriously delay progress.

Furthermore, the integration of AI into increasingly decentralized and digital systems introduces vulnerabilities that could trigger cascading failures across critical infrastructure, including energy networks, industrial sectors, and agriculture. These systemic risks are often glossed over by the hype surrounding AI as a sustainability solution in the major carbon-emitting sectors.

Climate misinformation and disinformation

The expansion of generative AI has exacerbated challenges associated with online misinformation and disinformation. AI tools can produce biased, misleading, or false responses to questions about climate change, including greenwashing and other information that misrepresents fossil fuel companies’ role in the climate crisis. In the wrong hands, AI can be misused to create more convincing, personalized deepfakes on climate at a cheaper and faster rate. AI can also widen the dissemination of climate misinformation by creating bots to promote certain content.

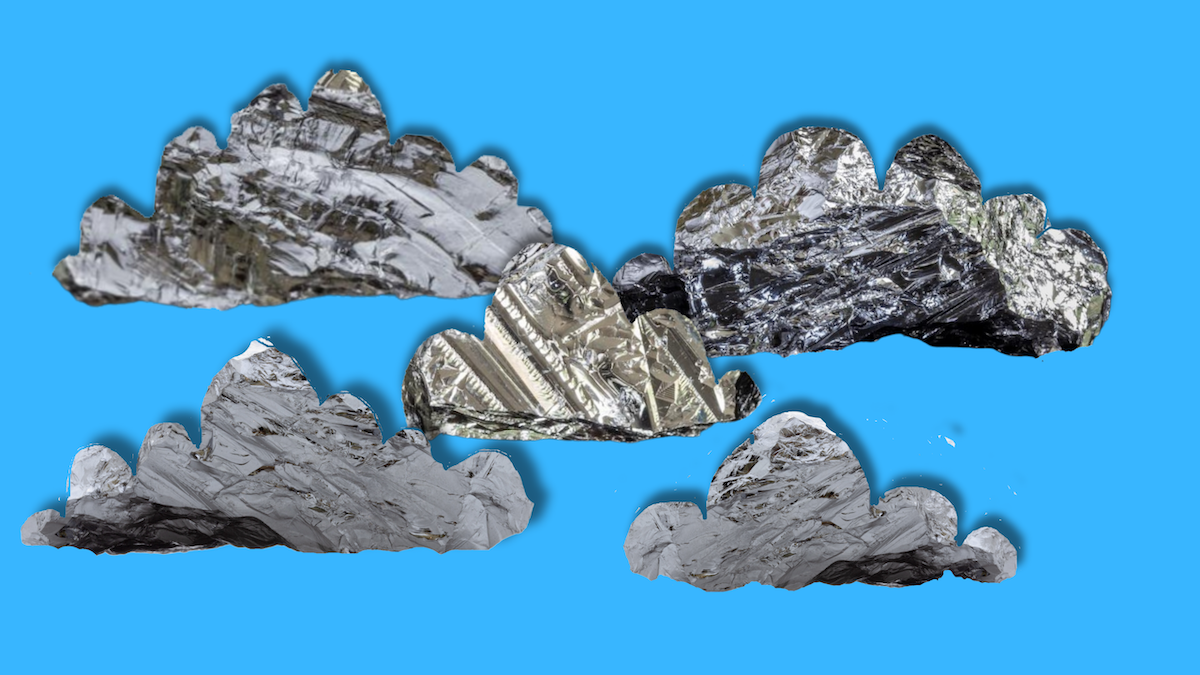

Climate mis- and disinformation may also promote climate scepticism, dissuade people from adopting low-carbon choices and behaviours, or worse, convince people to reject climate action altogether. A climate denial think tank managed to do just that by creating and spreading an image of a dead whale in front of wind turbines, claiming that offshore wind was responsible. Social media campaigns of this nature risk creating a feedback loop between scientific denialism and political inaction.

Socioeconomic disruption and indirect climate harms

As a general-purpose technology, AI’s societal impacts are transformative and diffuse, affecting employment, income distribution, and social cohesion. A great deal of research has been focused on AI’s impact on jobs, skills, wages, and widening inequalities, as well as the risks for discrimination, surveillance, and civil liberties. While such issues are widely recognized, including in legislation like the EU AI Act and governance discussions focused on AI red lines, their indirect implications for climate action remain underexplored.

AI-induced socioeconomic harms—such as inequality, reduced autonomy, and increased surveillance—can indirectly undermine climate efforts. These effects can reduce an individual’s agency and capabilities to act on climate and diminish civic engagement more broadly. At a higher level, they contribute to public distrust, weaken institutional legitimacy, and sap the social consensus and collective action necessary to pursue shared climate goals that protect the global commons.

A call for climate-responsive AI governance

The examples outlined above are only a subset of a broader landscape of climate-related AI risks, documented in our larger database. As AI becomes more embedded in daily life and critical infrastructure, so too does its influence on systems, behaviours, and policies that shape our climate outcomes. The impacts of AI on climate are dense, complex, and diffuse, extending well beyond its energy use in data centers.

Recognizing these systemic linkages is crucial if we want to align AI development with climate goals. It is also the first step towards developing a more robust regulatory and governance framework with appropriate risk mitigation strategies. A practical starting point is to identify who should take on which risks, and then how those risks should be tackled. AI and climate are both global collective problems that require collective actions; only when we acknowledge the extent of the impacts of AI on the climate can we start addressing them.


New WalkMe offering embeds training directly into apps – Computerworld

Now, said Bickley, “imagine today’s alternative: a system that sees you perform a task correctly the first time, learns from it, and memorializes those sequenced steps, mouse clicks, and keystrokes, such that when the employee gets stuck, the system can pull them through to task completion. With many end users accessing dozens or hundreds of system transactions as part of their job, this functionality is invaluable — a real efficiency driver, and also a means to reduce risk via the built-in guardrails and guidance ensuring accurate data is entered into the system.”

He said, “WalkMe tracks users’ usage of their systems — where they stop, what they do, and where they run into problems. This existing baseline of ‘user context’ is a natural jumping-off point for an AI-assisted evolution of the product. The Visual No Code Editor is where the employee guidance flows are built, and the no-code, visual point and click nature of this tool enables business teams to build the training tools and not be dependent on developers.”

‘I see this as much more than Clippy for SAP’

“[The ability] to build highly granular workflows that can discern across user group segments (for example, role, device type, geography, behavior), coupled with already existing automation for things like auto-completion of form fields as an example, provides a meaningful nudge when an employee gets stuck on a process step,” said Bickley.


Notre Dame to host summit on AI, faith and human flourishing, introducing new DELTA framework | News | Notre Dame News

Artificial intelligence is advancing at a breakneck pace, as governments and industries commit resources to its development at a scale not seen since the Space Race. These technologies have the potential to disrupt every aspect of life, including education, the economy, labor and human relationships.

“As a leading global Catholic research university, Notre Dame is uniquely positioned to help the world confront and understand AI’s benefits and risks to human flourishing,” said John T. McGreevy, the Charles and Jill Fischer Provost. “Technology ethics is a key priority for Notre Dame, and we are fully committed to bringing the wisdom of the global Church to bear on this critical theme.”

In support of this work, the Institute for Ethics and the Common Good and the Notre Dame Ethics Initiative will host the Notre Dame Summit on AI, Faith and Human Flourishing on the University’s campus from Monday, Sept. 22 through Thursday, Sept. 25. This event will draw together a dynamic, ecumenical group of educators, faith leaders, technologists, journalists, policymakers and young people who believe in the enduring relevance of Christian ethical thought in a world of powerful AI.

“As artificial intelligence becomes more powerful, the ‘ethical floor’ of safety, privacy and transparency is simply not enough,” said Meghan Sullivan, the Wilsey Family College Professor of Philosophy and the director of the Institute for Ethics and the Common Good and the Notre Dame Ethics Initiative. “This moment in time demands a response rooted in the Christian tradition — a richer, more holistic perspective that recognizes the nature of the human person as a spiritual, emotional, moral and physical being.”

Sullivan noted that a unified, faith-based response to AI is a priority of newly elected Pope Leo XIV, who has spoken publicly about the new challenges to human dignity, justice and labor posed by these technologies.

The summit will begin at 5:15 p.m. Monday with an opening Mass at the University’s Basilica of the Sacred Heart. His Eminence Cardinal Christophe Pierre, Apostolic Nuncio to the United States, will serve as primary celebrant and homilist with University President Rev. Robert A. Dowd, C.S.C., as concelebrant. All members of the campus community are invited to attend this opening Mass.

Summit speakers include Andy Crouch, Praxis; Alex Hartemink, Duke University; Molly Kinder, Brookings Institution; Andrew Schuman, Veritas Forum; Anne Snyder, Comment Magazine and Elizabeth Dias, The New York Times. Over the course of the summit, attendees will take part in use case workshops, panels and community of practice sessions focused on public engagement, ministry and education. Executives from Google, Microsoft, Apple and many other organizations are among the 200 invited guests who will attend.

At the summit, Notre Dame will launch DELTA, a new framework for guiding conversations about AI. DELTA — an acronym that stands for Dignity, Embodiment, Love, Transcendence and Agency — will serve as a practical resource across sectors that are experiencing disruption from AI, including homes, schools, churches and workplaces, while also providing a platform for credible, principled voices to promote moral clarity and human dignity in the face of advancing technology.

“Our goal is for DELTA to become a common lens through which to engage AI — a language that reflects the depth of the Christian tradition while remaining accessible to people of all faiths,” Sullivan said. “By bringing together this remarkable group of leaders here at Notre Dame, we’re launching a community that will work passionately to create — as the Vatican puts it — ‘a growth in human responsibility, values and conscience that is proportionate to the advances posed by technology.’”

Although the summit sessions are by invitation only, Sullivan’s keynote on DELTA will be livestreamed. Those interested are invited to view the livestream and learn more about DELTA at https://ethics.nd.edu/summit-livestream at 8:30 a.m. EST on Tuesday, Sept. 23.

The Notre Dame Summit on AI, Faith and Human Flourishing is supported with a grant provided by Lilly Endowment Inc.

Lilly Endowment Inc. is a private foundation created in 1937 by J.K. Lilly Sr. and his sons Eli and J.K. Jr. through gifts of stock in their pharmaceutical business, Eli Lilly and Company. While those gifts remain the financial bedrock of the Endowment, it is a separate entity from the company, with a distinct governing board, staff and location. In keeping with the founders’ wishes, the Endowment supports the causes of community development, education and religion and maintains a special commitment to its hometown, Indianapolis, and home state, Indiana. A principal aim of the Endowment’s religion grantmaking is to deepen and enrich the lives of Christians in the United States, primarily by seeking out and supporting efforts that enhance the vitality of congregations and strengthen the pastoral and lay leadership of Christian communities. The Endowment also seeks to improve public understanding of religious traditions in the United States and across the globe.

Contact: Carrie Gates, associate director of media relations, 574-993-9220, c.gates@nd.edu


New Research Reveals That “Evidence-Based Creativity” Is the Next Must-Have Skill Set for Marketers in the Age of AI

Global report from Contentful and Atlantic Insights finds nearly half of marketers rank data analysis and interpretation as a top skill; marketers must now demonstrate breakthrough creativity and validate with data

DENVER & BERLIN–(BUSINESS WIRE)–Contentful, a leading digital experience platform, in collaboration with Atlantic Insights, the marketing research division of The Atlantic, today released a new study, ‘When Machines Make Marketers More Human,’ which challenges the notion that AI will replace many marketing functions and instead demonstrates how AI can amplify marketers’ effectiveness, creativity, and impact.

The report, based on surveys and interviews with hundreds of senior marketing leaders around the world, finds that “evidence-based creativity” is emerging as the defining capability of modern marketers. Whether on skills – 46% of respondents cited data analysis and interpretation as the top skill needed in the profession today – or on measuring efficacy – 34% of successful marketers define success according to strong performance metrics or ROI – it’s clear that data-driven marketing is only accelerating in the age of AI. The ability to combine human creativity with AI-driven insights is becoming essential to producing, testing, and scaling ideas with measurable impact.

Beyond creativity, the research highlights a broader evolution of the marketing skillset. A new generation of “full-stack marketers” is taking shape. They are fluent in creating AI-enabled workflows, writing effective prompts, navigating diverse technology stacks, and embedding AI tools into daily operations. Nearly half of marketers report using both AI copilots in productivity software (49%) and generative tools for content creation (48%), underscoring how quickly these tools are becoming part of the day-to-day workflow. Combined with growing expertise in digital experience design, personalization strategy, and governance, these capabilities signal a fundamental shift in what it takes to succeed in marketing today.

“There is a growing fear that AI will erase marketing jobs, but that concern is misplaced. The real risk is failing to use AI strategically,” said Elizabeth Maxson, Chief Marketing Officer, Contentful. “When marketers invest in the right tools that support their teams’ daily work and prioritize marketing talent that blends creativity with analytics, that’s when AI stops being hype and starts delivering meaningful results.”

“Marketing is a deeply creative industry, but there is an urgent need for marketers to start thinking more like engineers in order to keep pace with the rise in AI,” said Alice McKown, Publisher, The Atlantic. “Tomorrow’s most valuable marketing leaders won’t be defined as creative or analytical. They’ll be both.”

Key Findings from the Report

Evidence-based creativity is the new marketing superpower, and organizations are investing to get their teams there.

- The marketing skills that matter most today are data analysis and interpretation (46%) and digital experience design (40%), followed by personalization strategy (37%), and writing for AI tools (37%).

- 33% of marketers rank campaign testing and optimization as a top skill — reflecting a shift toward data-informed creative instincts.

- 45% of organizations are already offering AI training, a clear marker of organizational maturity.

The “Optimism-Execution Gap” reflects the chasm between AI’s potential and reality; ROI is still a work in progress.

- AI investment is a priority for marketers, with 74% investing in the technology and 34% allocating at least $500k toward AI marketing tools or initiatives over the next 12-36 months.

- Despite the investment, two-thirds of marketers say their current marketing technology stack isn’t helping them do more with fewer resources (yet).

- 89% of marketing teams are already using AI tools, but only 18% say it has reduced their reliance on developers or data teams.

AI’s key opportunities and challenges differ by region, with Europeans prioritizing compliance and Americans focusing on rapid experimentation.

- EMEA marketers adopt a methodical, compliance-ready approach, with 58% selectively testing AI tools under a defined plan. Nearly a third (32%) emphasize governance skills such as brand voice, compliance and quality standards.

- U.S. marketers emphasize experimentation and rapid testing, with 37% focusing on campaign optimization (vs. 26% in EMEA). U.S. teams measure success by high content quality (45%) and flexibility (39%), while EMEA teams lean toward operational excellence and speed (43%).

To read the full report, visit: https://www.theatlantic.com/sponsored/contentful-2025/making-marketers-more-human/4024/

About the Report

Research from the report was conducted by Contentful in collaboration with Atlantic Insights, and included three primary components:

- Quantitative survey – 425 marketing decision-makers across industries, company sizes, and regions, executed by Cint.

- Diary studies – Ten-day live user testing with marketing professionals using AI tools in their real workflows, executed by Dscout. Participants completed eight activities, including content creation, campaign optimization, translation/localization, personalization, and A/B testing, while recording their screens and providing commentary.

- Subject matter expert (SME) interviews – In-depth interviews with Contentful executives, team leads, and partner organizations to contextualize quantitative findings and capture emerging best practices.

All survey data was collected and analyzed using advanced statistical methods to ensure reliability and significance of findings.

About Contentful

Contentful is a leading digital experience platform that helps modern businesses meet the growing demand for engaging, personalized content at scale. By blending composability with native AI capabilities, Contentful enables dynamic personalization, automated content delivery, and real-time experimentation, powering next-generation digital experiences across brands, regions, and channels for more than 4,200 organizations worldwide. For more information, visit www.contentful.com. Contentful, the Contentful logo, and other trademarks listed here are registered trademarks of Contentful Inc., Contentful GmbH and/or its affiliates in the United States and other countries. Other names may be trademarks of their respective owners.

About Atlantic Insights

Atlantic Insights is the marketing research division of The Atlantic, with custom and co-branded research experience spanning industries and sectors ranging across finance, luxury, technology, healthcare, and small business.
