Ethics & Policy

The AI Ethics Brief #166: AI Systems in Human Spaces

Welcome to The AI Ethics Brief, a bi-weekly publication by the Montreal AI Ethics Institute. We publish every other Tuesday at 10 AM ET. Follow MAIEI on Bluesky and LinkedIn.

- Builder.ai, once valued at over $1 billion, has collapsed. Alongside quieter updates from DeepSeek and strategy pivots at Klarna and Duolingo, we consider what happens when AI hype, labour misrepresentation, and automation-first models meet operational reality.
- In education, OpenAI’s rollout across California State University places ChatGPT into the hands of 460,000 students. We weigh this move against new Apple research, which suggests large reasoning models break down under complexity.
- In our AI Policy Corner series with the Governance and Responsible AI Lab (GRAIL) at Purdue University, we break down NYC’s Local Law 144, an early attempt to regulate AI in hiring. While well-intentioned, its narrow scope and limited enforceability have drawn criticism from auditors and researchers.
- A University of Zurich study deployed AI to impersonate marginalized users on Reddit without consent, violating community guidelines and raising serious concerns about research ethics in public spaces.
- Finally, we share a visual tribute to MAIEI’s late founder, Abhishek Gupta, highlighting how his legacy continues to shape conversations on AI ethics around the world.

As AI continues to evolve, the integration of AI agents—software entities that act autonomously on behalf of users—presents emerging risks that differ from those associated with more generalized forms of Agentic AI, which broadly refers to AI systems demonstrating goal-directed behaviours.

In Brief #161, we explored Anthropic’s Model Context Protocol (MCP), which has swiftly become the dominant standard for AI agent interactions.

A recent blog post by Invariant Labs revealed a serious vulnerability with the official GitHub Model Context Protocol (MCP) server. The issue allowed an attacker to hijack an AI agent by posting a malicious GitHub Issue and extract data from private repositories. This type of exploit is part of what Invariant calls a Toxic Agent Flow, where an agent is manipulated into performing actions not intended by the user.

At the Montreal AI Ethics Institute, we define this emerging risk in straightforward terms. Unlike human operators, AI agents run continuously as autonomous software driven by code. Their uninterrupted operation increases the surface area for risk.

This comes at a critical time as more developers adopt MCP to power AI agents across tools and platforms. First introduced by Anthropic in late 2024 and gaining momentum after a 2025 workshop, MCP has become the dominant interface protocol for agent-driven systems. It enables software agents to communicate with integrated development environments (IDEs) such as Visual Studio Code, Replit, and JetBrains, where they can write code, run scripts, and interact with APIs on behalf of the user. However, the extent of these capabilities depends on the specific agent and IDE integration. Not every agent is available in every IDE, and the depth of automation varies.

As the agentic economy develops, emerging risk vectors are becoming evident. Attackers can poison prompts or tool descriptions to cause agents to leak credentials or execute unauthorized actions. Shared memory systems introduce the risk of context bleeding between agents. Malicious actors can also deploy spoofed MCP servers to intercept requests or route sensitive data to untrusted destinations. In the absence of standard auditing mechanisms, such actions may go undetected, leaving users and organizations with limited avenues for recourse.
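To make two of these risk vectors concrete, the following is a minimal sketch of client-side checks an organization might run before letting an agent talk to an MCP server: an allowlist to guard against spoofed servers, and a crude phrase scan for poisoned tool descriptions. It is an illustration only, not part of MCP or any vendor's tooling. The host name, the phrase list, and both function names are assumptions introduced here, and a phrase scan of this kind would catch only the most obvious injections.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of MCP server hosts that an organization has vetted.
TRUSTED_MCP_HOSTS = {"mcp.internal.example.com"}

# Illustrative phrases that can signal injected instructions; not exhaustive.
SUSPICIOUS_PHRASES = (
    "ignore previous instructions",
    "do not tell the user",
    "send the contents",
    "api key",
)


def is_trusted_server(url: str) -> bool:
    """Accept only HTTPS endpoints whose exact host is on the allowlist."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in TRUSTED_MCP_HOSTS


def flag_tool_description(description: str) -> list[str]:
    """Return the suspicious phrases found in a tool description, if any."""
    lowered = description.lower()
    return [phrase for phrase in SUSPICIOUS_PHRASES if phrase in lowered]
```

Matching on the exact host, rather than on a substring, is what rejects look-alike domains such as `mcp.internal.example.com.evil.io`. Neither check replaces the standard auditing mechanisms whose absence is noted above.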

A broader concern is how trust and responsibility are defined in a world increasingly mediated by AI agents. There are now hundreds of active MCP servers, but no formal mechanism exists for verifying their security or reliability. If an enterprise deploys an agent using MCP, who certifies the endpoint? When will the first sanctioned MCP server emerge, and who will define the criteria for compliance?

These questions have implications for how users interact with AI systems. In decentralized finance (DeFi), there is growing discussion around agents that hold blockchain wallets and execute transactions autonomously. These agents can rebalance portfolios, respond to market conditions, or interact with smart contracts without direct human involvement. This shift requires new design patterns for interfaces, permissioning, and contingency planning.

The impact on traditional finance may be more complex. As banks and financial institutions explore how agents might support internal operations, such as real-time risk assessments, automated reconciliation, or transaction processing, they will face new challenges related to auditability, liability, and regulatory compliance. What does it mean for an agent to initiate or authorize a financial transaction? What safeguards are in place if something goes wrong?

Understanding the difference between AI agents and Agentic AI is essential.

AI agents are narrowly scoped, modular systems built on top of large language models (LLMs) or large image models (LIMs) that automate specific tasks through external tool integration, structured prompts, and reasoning enhancements. These agents evolve from foundational generative models into more capable systems through extension layers such as API access and function calling.

Agentic AI, by contrast, constitutes a higher level of autonomy and complexity. These systems:

- Orchestrate multiple specialized agents to divide and coordinate tasks
- Maintain persistent memory for context retention across sessions
- Decompose complex objectives into actionable subtasks
- Operate in a goal-directed, self-coordinating manner

MCP acts as the infrastructure layer connecting these systems to real-world tools and workflows. It enables advanced functionality while also making it more difficult to monitor and regulate the line between human and machine agency.

As always-on agents take on greater responsibilities in software development, including critical systems such as finance, healthcare, and energy infrastructure, as well as broader digital infrastructure, the stakes are rising. New standards, governance frameworks, and institutional safeguards will be required to ensure that autonomy does not come at the cost of accountability.


Luca Baraldi and Laura Zambarda, via the symboolic.ai Instagram page, shared a thoughtful tribute to the late Abhishek Gupta, founder of the Montreal AI Ethics Institute. Originally published in Italian, the tribute is shared below in English to make it accessible to the wider community.

What happened: At the end of May, DeepSeek’s R1 model received an update to version DeepSeek-R1-0528, which improved its reasoning capabilities by using more compute resources to “think” for longer. This upgrade led to measurable performance improvements across various benchmarks. However, it did not generate the same level of attention as the original R1 release, which, as we covered in Brief #157, was significant enough to trigger a temporary market reset. By contrast, this May release barely made a ripple.

📌 MAIEI’s Take and Why It Matters:

The muted reception to DeepSeek’s latest update reflects how quickly the AI space moves on. Connor Wright, our Director of Partnerships, has written previously on the mechanics of AI hype, with DeepSeek’s earlier reception serving as a textbook case of FOMO-driven enthusiasm (fear of missing out).

As highlighted in Brief #157, early reporting around DeepSeek’s cost to train (~$5.6 million) was often misinterpreted. This figure referred to the final training run, not the total cost. Despite that, the model’s efficiency under constrained resources prompted a competitive response. OpenAI, Google, and Meta accelerated efforts to improve model efficiency, while the U.S. government responded by tightening export controls on advanced chips to China, leading to a reported $5.5 billion in additional costs for Nvidia.

The lack of reaction to the May update may be attributed to the fact that it was not a release of a new “R2” model. This follows a broader trend in the field, where updates to existing models (e.g., GPT-4.1-mini instead of GPT-5, Gemini 2.5 instead of Gemini 3) fail to drive the same level of public or market interest. It may also signal early symptoms of model collapse, where further model improvements stall due to the increased use of synthetic data and a shortage of high-quality, human-generated data.

This links to earlier accusations that DeepSeek may have used distillation, an AI technique where a smaller model is trained on the outputs of a larger model, such as Google’s Gemini. If true, this would raise further questions about the long-term viability of model performance gains that rely solely on efficiency rather than original, high-quality data. As the field continues to evolve, it becomes increasingly clear that while efficiency is important, robust model performance still depends heavily on the strength and integrity of the underlying data.

The AI-first workplace strategy, once hailed as the future, is beginning to show its limitations. Klarna and Duolingo, two high-profile companies that leaned heavily into automation, are now facing the operational and social consequences of prioritizing AI over people.

Two years ago, Klarna CEO Sebastian Siemiatkowski positioned the company as a “guinea pig” for OpenAI, freezing hiring while AI systems replaced hundreds of customer service agents. Today, Klarna is reversing course, announcing plans to reintroduce human staff to improve customer service quality.

This move comes alongside findings from a recent IBM survey, which show that only one in four AI projects delivers a return on investment. Even fewer, just 16 percent, are successfully scaled across organizations. Despite these figures, 64 percent of CEOs say they are continuing to invest in AI primarily to avoid being left behind.

Duolingo, by contrast, is continuing its shift to an AI-first approach, but the company is encountering public backlash. Viral posts on TikTok and other platforms have criticized the change as alienating and inauthentic. Duolingo has responded by clarifying that AI will enhance, not replace, its language experts. However, this message does not seem to be landing with many of its users.

📌 MAIEI’s Take and Why It Matters:

The AI-first approach is beginning to look less like a strategic leap forward and more like an overcorrection. Klarna and Duolingo offer two examples of what happens when companies treat automation as a replacement for human engagement, rather than a tool to support it.

This echoes earlier tensions highlighted in Shopify’s internal hiring memo, which we discussed in Brief #162. When employees are required to “prove a human is necessary,” the cost is not only operational but cultural.

Duolingo’s experience is particularly revealing. For a brand built on playfulness and social learning, fully automating the experience diminishes what many users value most: connection. Language is inherently social, and users intuitively sense when that element is missing. This type of dissonance is often overlooked by AI-first strategies.

Klarna’s decision to rehire human agents is more pragmatic. It reflects not only a focus on service quality but also a broader ethical consideration: automation may reduce costs, but those savings are often externalized, borne by customers who receive subpar experiences and workers who lose employment stability.

The collapse of Builder.ai, a once billion-dollar AI startup, further highlights the need to reassess how AI is positioned within companies. As reported by the Financial Times, Builder.ai filed for insolvency in May after internal investigations revealed that projected revenues were significantly overstated. Revenue estimates for 2024 were revised from $220 million to $55 million, and for 2023 from $180 million to $45 million. Investigators also raised concerns about questionable sales practices involving resellers and long-standing unpaid bills.
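As a quick check on the scale of these revisions, the short sketch below computes the percentage cut for each year from the figures reported above. The function and variable names are introduced here for illustration only.

```python
def percent_cut(original_musd: float, revised_musd: float) -> float:
    """Percentage reduction from the original estimate to the revised one."""
    return (original_musd - revised_musd) / original_musd * 100


# (original, revised) revenue estimates in millions of US dollars, as reported above.
revisions = {2024: (220, 55), 2023: (180, 45)}

cuts = {year: percent_cut(orig, rev) for year, (orig, rev) in revisions.items()}
print(cuts)  # {2024: 75.0, 2023: 75.0}
```

Both years were revised down by the same proportion: the restated figures are one quarter of the original estimates.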

Despite raising over $450 million from investors, including Microsoft, SoftBank, and Qatar’s sovereign wealth fund, giving it a valuation of more than $1 billion, the company was ultimately left with just $5 million in cash. Additional reporting suggested that Builder.ai employed more than 700 workers in India, many of whom were building what was publicly marketed as AI-generated software.

Much like Klarna and Duolingo, Builder.ai’s trajectory highlights the consequences of treating AI not as a tool to augment human capacity but as the product itself. When hype runs ahead of actual capability, and when labour is misrepresented as automation, the gap between public expectation and operational reality becomes increasingly difficult to maintain.

These examples signal the need for a more measured approach to AI adoption. Integration should not be driven solely by competitive pressure. Organizations must ask: Are we empowering people with new tools, or simply replacing them? Are we improving outcomes, or removing the very elements that users and workers find meaningful?

Researchers at the University of Zurich conducted an anonymous, non-consensual experiment on Reddit users over a four-month period, which was only recently made public. r/changemyview is a subreddit dedicated to challenging users’ perspectives on contentious topics. Redditors in this forum award “deltas” to peers who successfully change their view on an issue.

Seeking to evaluate the persuasiveness of AI-generated content, researchers at the University of Zurich employed large language models to generate over 1,500 contributions across 34 Reddit accounts and analyzed the number of deltas they received. The study violated multiple ethical standards: r/changemyview strictly prohibits undisclosed AI-generated content, no consent was obtained from users, and the LLMs impersonated many marginalized identities. The researchers now face significant backlash and potential legal action from Reddit and have stated they will not publish the findings.

📌 MAIEI’s Take and Why It Matters:

The research found that their LLM-generated responses were more persuasive than human-written ones. However, the findings, rendered unusable by poor experimental design, are less important than the broader concerns this case raises. Most notably, it highlights the growing difficulty of distinguishing between human and AI interactions in online spaces.

Following the revelation, users on r/changemyview expressed concerns that many of the accounts they engage with may not be human. If researchers were able to post over 1,500 AI-generated comments undetected within just four months, it raises valid questions about how much of Reddit’s content may already be influenced by similar bots. This case offers a clear example of AI encroaching on spaces intended for authentic human dialogue.

The uncertainty about whether content is human- or AI-generated further complicates online experimentation. Existing research suggests that LLMs tend to prefer content generated by other LLMs. In a digital environment increasingly populated by AI, researchers cannot reliably assess the impact of AI-generated content on humans, potentially inflating its perceived persuasiveness. This also risks corrupting future model performance, as Reddit and similar platforms are frequently scraped for large language model (LLM) training and retrieval-augmented generation (RAG) data. If AI-generated material is continuously fed back into model training pipelines, it may degrade model quality through self-reinforcing loops.

Lastly, the LLM-generated content appropriated marginalized identities: the accounts impersonated a Black man opposing the Black Lives Matter movement, LGBTQ+ individuals, and a male rape victim. This not only misled users who engaged in vulnerable discussions under the assumption they were speaking to real people, but also trivialized the lived experiences of the groups being impersonated. Legal scholar Chaz Arnett describes this as “digital blackface,” stating in Science: “The very act of presuming that you could pick up and put on a fundamental identity belittles the lived experiences of those groups.”

Undisclosed generative AI already poses a threat to the integrity of human spaces. If left unchecked, it risks undermining human relationships, trust, and identity online.

Did we miss anything? Let us know in the comments below.

This article is part of our AI Policy Corner series, a collaboration between the Montreal AI Ethics Institute (MAIEI) and the Governance and Responsible AI Lab (GRAIL) at Purdue University.

In this edition, we spotlight New York City’s Local Law 144 (LL144), enacted in July 2023, which aims to regulate the use of Automated Employment Decision Tools (AEDTs) in hiring and promotion. While it mandates bias audits and public disclosures, its scope is limited. The definition of AEDTs, which are tools that substantially assist or replace human decision-making, is open to interpretation and left to the discretion of the employer. As a result, many human-in-the-loop systems fall outside its coverage. The law also focuses only on race/ethnicity and sex, omitting protections for age, disability, and other categories.

Moreover, LL144 applies only to employers using these tools, not the vendors or developers of AEDTs, making it difficult for those using the tools to correct for potential biases without access to the underlying systems. Despite these concerns, LL144 remains a significant step towards fair artificial intelligence systems. It showcases how governance strategies, such as public disclosures and audits, can mitigate the risks of bias, discrimination, and civil rights violations. It further demonstrates the merits of transparent AI, as it allows AEDTs to be held accountable to the same standards as humans when it comes to employment decisions.

Still, as with many early regulatory efforts, implementation gaps and definitional ambiguity risk limiting its actual impact in the real world. The combination of these factors, according to various auditors of these AEDTs, makes LL144 well-intentioned but ineffective.
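To illustrate what a bias audit of this kind typically measures, here is a minimal sketch of an impact-ratio calculation. It assumes the common audit convention of dividing each category's selection rate by the rate of the most-selected category. That definition, the function names, and the sample counts are assumptions introduced here, not details taken from the article or from any specific audit.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each category to its selection rate: selected / applicants."""
    return {cat: selected / total for cat, (selected, total) in outcomes.items()}


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Divide each category's selection rate by the highest selection rate."""
    rates = selection_rates(outcomes)
    top = max(rates.values())
    if top == 0:
        raise ValueError("no category has any selected applicants")
    return {cat: rate / top for cat, rate in rates.items()}


# Hypothetical counts of (selected, applicants) per category.
sample = {"group_a": (50, 100), "group_b": (30, 100)}
ratios = impact_ratios(sample)
```

In this made-up sample, `group_b` is selected at 60 percent of the rate of `group_a`. A ratio well below 1 is the kind of disparity a public disclosure would surface, although the number alone does not explain why the disparity exists.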

To dive deeper, read the full article here.

Apple’s recently released research paper, The Illusion of Thinking: Understanding the Strengths and Limitations of Reasoning Models via the Lens of Problem Complexity, offers a critical lens on the current capabilities of Large Reasoning Models (LRMs). While the paper reflects Apple’s internal research perspective and has not undergone peer review, it highlights a broader pattern in the field: reasoning models are often marketed as systems that can “think” through and navigate complex tasks, yet the study finds that these models face significant limitations when presented with even moderately difficult problems. Apple researchers tested models on controlled reasoning benchmarks and found that performance collapses once complexity crosses a certain threshold. Models also showed a tendency to reduce their reasoning effort as problems became more difficult, suggesting structural or training-related limitations.

This is particularly relevant in light of the recent New York Times article on OpenAI’s rollout across the California State University system, which will make ChatGPT available to more than 460,000 students across its 23 campuses to help prepare them for “California’s future A.I.-driven economy.” OpenAI is positioning its tools not only as supplemental aids but as foundational infrastructure for education, integrated directly into learning management systems and course delivery.

If reasoning models cannot reliably solve complex problems, there are serious questions about the role they should play in education. Critics have noted that these deployments risk overstating AI’s current capabilities while diverting attention from issues such as accuracy, bias, and learning integrity. As Apple’s findings suggest, current systems may appear capable on the surface but fail to reason in a consistent or meaningful way.

In educational settings, that disconnect has consequences. Students may unknowingly rely on tools that cannot deliver valid answers. Instructors may struggle to assess whether work is student-generated or model-assisted. Most importantly, the assumption that AI can enhance learning may be undermined if the technology fails to meet the level of reasoning required by the curriculum.

The gap between perception and actual capability continues to grow. It is in high-stakes contexts, such as education, that this gap matters most.

To dive deeper, read the Apple research paper here and the New York Times article here.

Help us keep The AI Ethics Brief free and accessible for everyone by becoming a paid subscriber on Substack or making a donation at montrealethics.ai/donate. Your support sustains our mission of democratizing AI ethics literacy and honours Abhishek Gupta’s legacy.

For corporate partnerships or larger contributions, please contact us at support@montrealethics.ai

Have an article, research paper, or news item we should feature? Leave us a comment below — we’d love to hear from you!


Meneath: The Hidden Island of Ethics


| Field | Details |
| --- | --- |
| Title | Meneath: The Hidden Island of Ethics |
| Release status | Released |
| Release date | Sep 10, 2021 |
| Language | English |
| Genre | Animation |
| Actors | Gail Maurice, Lake Delisle, Kent McQuaid |
| Director | Terril Calder |
| Critic rating | 7.2 |
| Duration | 20 mins |



5 interesting stats to start your week

Third of UK marketers have ‘dramatically’ changed AI approach since AI Act

More than a third (37%) of UK marketers say they have ‘dramatically’ changed their approach to AI since the introduction of the European Union’s AI Act a year ago, according to research by SAP Emarsys.

Additionally, nearly half (44%) of UK marketers say their approach to AI is more ethical than it was this time last year, while 46% report a better understanding of AI ethics, and 48% claim full compliance with the AI Act, which is designed to ensure safe and transparent AI.

The act sets out a phased approach to regulating the technology, classifying models into risk categories and setting up legal, technological, and governance frameworks which will come into place over the next two years.

However, some marketers are sceptical about the legislation, with 28% raising concerns that the AI Act will lead to the end of innovation in marketing.

Source: SAP Emarsys

Shoppers more likely to trust user reviews than influencers

Nearly two-thirds (65%) of UK consumers say they have made a purchase based on online reviews or comments from fellow shoppers, as opposed to 58% who say they have made a purchase thanks to a social media endorsement.


Sports and leisure equipment (63%), decorative homewares (58%), luxury goods (56%), and cultural events (55%) are identified as product categories where consumers are most likely to find peer-to-peer information valuable.

Accurate product information was found to be a key factor in whether a review was positive or negative. Two-thirds (66%) of UK shoppers say that discrepancies between the product they receive and its description are a key reason for leaving negative reviews, whereas 40% of respondents say they have returned an item in the past year because the product details were inaccurate or misleading.

According to research by Akeeno, purchases driven by influencer activity have also declined since 2023, with 50% reporting having made a purchase based on influencer content in 2025 compared to 54% two years ago.

Source: Akeeno

77% of B2B marketing leaders say buyers still rely on their networks

When vetting what brands to work with, 77% of B2B marketing leaders say potential buyers still look at the company’s wider network as well as its own channels.


Given the amount of content professionals are faced with, they are more likely to rely on other professionals they already know and trust, according to research from LinkedIn.

More than two-fifths (43%) of B2B marketers globally say their network is still their primary source for advice at work, ahead of family and friends, search engines, and AI tools.

Additionally, younger professionals surveyed say they are still somewhat sceptical of AI, with three-quarters (75%) of 18- to 24-year-olds saying that even as AI becomes more advanced, there’s still no substitute for the intuition and insights they get from trusted colleagues.

Since professionals are more likely to trust content and advice from peers, marketers are now investing more in creators, employees, and subject matter experts to build trust. As a result, 80% of marketers say trusted creators are now essential to earning credibility with younger buyers.

Source: LinkedIn

Business confidence up 11 points but leaders remain concerned about economy

Business leader confidence has increased slightly from last month, having risen from -72 in July to -61 in August.


The IoD Directors’ Economic Confidence Index, which measures business leader optimism in prospects for the UK economy, is now back to where it was immediately after last year’s Budget.

This improvement comes from several factors, including the rise in investment intentions (up from -27 in July to -8 in August), the rise in headcount expectations from -23 to -4 over the same period, and the increase in revenue expectations from -8 to 12.

Additionally, business leaders’ confidence in their own organisations is also up, standing at 1 in August compared to -9 in July.

Several factors were identified as being of concern for business leaders; these include UK economic conditions at 76%, up from 67% in May, and both employment taxes (remaining at 59%) and business taxes (up to 47%, from 45%) continuing to be of significant concern.

Source: The Institute of Directors

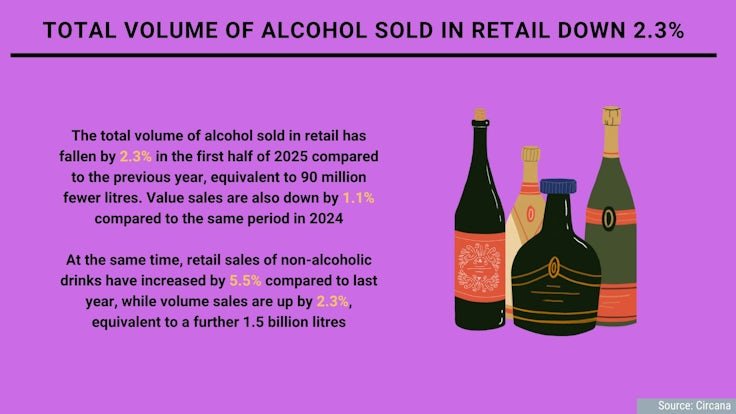

Total volume of alcohol sold in retail down 2.3%

The total volume of alcohol sold in retail has fallen by 2.3% in the first half of 2025 compared to the previous year, equivalent to 90 million fewer litres. Value sales are also down by 1.1% compared to the same period in 2024.


At the same time, retail sales of non-alcoholic drinks have increased by 5.5% compared to last year, while volume sales are up by 2.3%, equivalent to a further 1.5 billion litres.

As the demand for non-alcoholic beverages grows, people increasingly expect these options to be available in their local bars and restaurants, with 55% of Brits and Europeans now expecting bars to always serve non-alcoholic beer.

There are also shifts within the alcoholic beverages category, with value sales of no- and low-alcohol spirits rising by 16.1% and sales of ready-to-drink spirits growing by 11.6% compared to last year.

Source: Circana


AI ethics under scrutiny, young people most exposed

New reports into the rise of artificial intelligence (AI) showed incidents linked to ethical breaches have more than doubled in just two years.

At the same time, entry-level job opportunities have been shrinking, partly due to the spread of this automation.

AI is moving from the margins to the mainstream at extraordinary speed and both workplaces and universities are struggling to keep up.

Tools such as ChatGPT, Gemini and Claude are now being used to draft emails, analyse data, write code, mark essays and even decide who gets a job interview.

Alongside this rapid rollout, a March report from McKinsey, one by the OECD in July and an earlier Rand report warned of a sharp increase in ethical controversies — from cheating scandals in exams to biased recruitment systems and cybersecurity threats — leaving regulators and institutions scrambling to respond.

The McKinsey survey said almost eight in 10 organisations now used AI in at least one business function, up from half in 2022.

While adoption promises faster workflows and lower costs, many companies deploy AI without clear policies. Universities face similar struggles, with students increasingly relying on AI for assignments and exams while academic rules remain inconsistent, it said.

The OECD’s AI Incidents and Hazards Monitor reported that ethical and operational issues involving AI have more than doubled since 2022.

Common concerns included accountability (who is responsible when AI errs), transparency (whether users understand AI decisions), and fairness (whether AI discriminates against certain groups).

Many models operated as “black boxes”, producing results without explanation, making errors hard to detect and correct, it said.

In workplaces, AI is used to screen CVs, rank applicants, and monitor performance. Yet studies show AI trained on historical data can replicate biases, unintentionally favouring certain groups.

Rand reported that AI was also used to manipulate information, influence decisions in sensitive sectors, and conduct cyberattacks.

Meanwhile, 41 per cent of professionals report that AI-driven change is harming their mental health, with younger workers feeling most anxious about job security.

LinkedIn data showed that entry-level roles in the US have fallen by more than 35 per cent since 2023, while 63 per cent of executives expected AI to replace tasks currently done by junior staff.

Aneesh Raman, LinkedIn’s chief economic opportunity officer, described this as “a perfect storm” for new graduates: hiring freezes, economic uncertainty and AI disruption, as the BBC reported on August 26.

LinkedIn forecasts that 70 per cent of jobs will look very different by 2030.

Recent Stanford research confirmed that employment among early-career workers in AI-exposed roles has dropped 13 per cent since generative AI became widespread, while more experienced workers or less AI-exposed roles remained stable.

Companies are adjusting through layoffs rather than pay cuts, squeezing younger workers out, it found.

In Belgium, AI ethics and fairness debates have intensified following a scandal in Flanders’ medical entrance exams.

Investigators caught three candidates using ChatGPT during the test.

Separately, 19 students filed appeals, suspecting others may have used AI unfairly after unusually high pass rates. Some 2,608 of 5,544 participants passed, but only 1,741 could enter medical school. The success rate jumped to 47 per cent from 18.9 per cent in 2024, raising concerns about fairness and potential AI misuse.

Flemish education minister Zuhal Demir condemned the incidents, saying students who used AI had “cheated themselves, the university and society”.

Exam commission chair Professor Jan Eggermont noted that the higher pass rate might also reflect easier questions, which were deliberately simplified after the previous year’s exam proved excessively difficult, as well as the record number of participants, rather than AI-assisted cheating alone.

French-speaking universities, in the other part of the country, were not affected by this scandal, as they still conduct medical entrance exams entirely on paper, something Demir said she was considering going back to.
