Artificial Intelligence, which was once science fiction, has effortlessly integrated itself into the landscape of everyday life, quietly driving the intelligent assistants in our homes, propelling global financial markets to their optimal performance, and revolutionising the healthcare environment.

This all-encompassing integration ushers in a new epoch, one characterised by the deep capabilities of advanced algorithms and large-scale data processing. But this technological wonder also contains a core paradox: while AI has enormous potential to accelerate human advancement and address some of the world’s most intractable challenges, it can also destabilise societies, remake economies, and raise fundamental ethical questions that test our definitions of fairness, privacy, and accountability.

The ubiquitous integration of AI is not merely a series of technological advancements; it represents a fundamental societal shift, echoing the transformative scale of previous industrial revolutions. The presence of AI in diverse sectors, from enhancing medical diagnostics to powering complex financial operations, signifies a foundational change in how industries operate and how individuals interact with technology daily.

This deep integration demands a holistic re-evaluation of human roles, societal structures, and even our collective values. It necessitates proactive adaptation and thoughtful governance, rather than merely reactive problem-solving, to navigate the significant societal friction that could arise from such a profound reordering of human activity and interaction.

Understanding the AI landscape

Artificial Intelligence, as it exists today, refers to advanced programs and computing systems that can carry out tasks that would usually require human intelligence, including learning, problem-solving, perception, and decision-making. It has moved beyond science fiction and now appears in a wide range of applications that touch almost every facet of contemporary life.

The swift pace of AI progress is fueled mainly by exponential growth in computing power, the widespread availability of big data, and advances in machine learning methods, which enable systems to learn from huge amounts of data with unprecedented speed and precision. AI is not a monolithic field but a multifaceted discipline with many sub-fields and applications, ranging from highly specialised tools for narrowly defined tasks to more general-purpose systems.

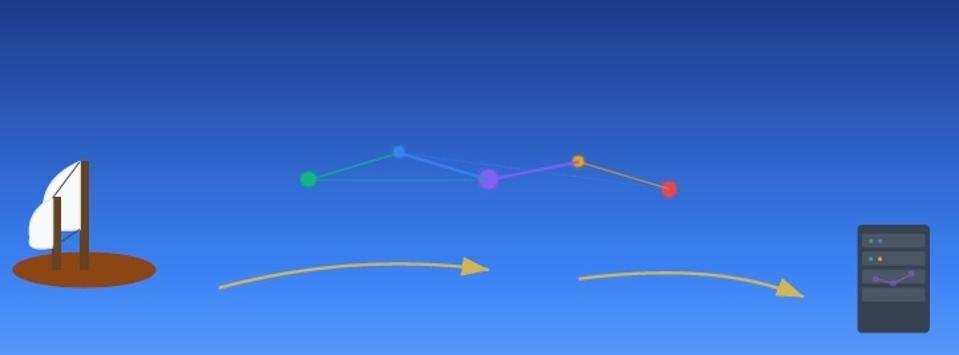

The rapid, exponential expansion of AI capability, driven by increasingly abundant data and computing power, presents a huge challenge for public understanding and governance. Technological development typically proceeds at a pace that outstrips conventional regulatory cycles and societal adaptation. This regulatory lag means that by the time frameworks are being considered or put in place, the technology has already changed, perhaps creating new challenges or reinforcing old ones, such as gaps in accountability or the erosion of public trust.

The interdisciplinary nature of AI systems and this fast-paced development make adaptive, forward-looking governance models absolutely imperative. Without such foresight and agility, there is a risk of unmanageable societal disruption or an undue concentration of power, as the technology’s evolution outpaces our collective ability to understand, manage, and guide its trajectory responsibly.

AI as a Catalyst for Progress

The revolutionary positive contributions of AI are emerging in many key sectors, making it a potent driver of human development. Its capacity to process and analyse enormous sets of data at rates inconceivable for humans is opening up solutions to age-old problems and generating whole new opportunities.

Transforming Healthcare

In medicine, AI is dramatically enhancing the precision and speed of diagnosis, accelerating drug development, and making personalised medicine possible. AI platforms can scan enormous sets of genomic, clinical, and research information much more quickly than human scientists. This makes it possible to detect hidden patterns that signal disease at an early stage, predict how effective a treatment will be for an individual patient, and design new drug compounds with greater accuracy. The result is more accurate, efficient, and individualised patient care, pushing healthcare towards being more preventive and personalised. The capacity of AI to process such intricate medical and biological data is not merely an incremental innovation; it changes the paradigm of medical research and patient care, with the potential for a future where treatments are customised to the individual.

Powering a sustainable future

AI is also driving the global push towards a sustainable future. Its uses extend to optimizing energy grids, predicting climate patterns more accurately, and designing new sustainable approaches. By using real-time data on energy consumption and production, AI can manage resources more efficiently, minimize waste, and improve the predictability of renewable sources.

Additionally, AI’s ability to handle intricate environmental data enables more advanced climate modeling, providing important information for mitigation efforts and adaptation strategies. This allows for smarter infrastructure planning and better-informed decision-making on environmental issues, furthering the cause of global sustainability.

Improving accessibility and inclusion

Perhaps the most human-oriented use of AI is its ability to improve accessibility and inclusion. AI gives people with disabilities greater capability through a variety of innovative technologies, including sophisticated speech-to-text conversion, smart navigation systems, and tailored assistive devices.

These technologies break down old barriers, giving marginalized communities more independence and inclusion. For instance, AI-powered visual recognition can describe surroundings for blind people, and advanced language processing can help people with speech disorders to communicate. This use of AI highlights its ability to empower people and make the world more accessible and fairer for all.

Boosting economic growth and innovation

Economically, AI is a major productivity driver, giving rise to new industries and increasing efficiency in existing ones. In the financial industry, for example, AI has become essential for advanced fraud detection, the optimisation of algorithmic trading strategies, and effective risk analysis. Its capability to scan large financial data sets at high speed enables anomalies that indicate fraud to be detected and trading decisions to be made faster and more accurately.

Aside from finance, AI-powered automation and analytics raise output across industries, open wholly new market opportunities, and contribute to a stronger and more efficient global economic system. The economic effect goes beyond efficiency, radically changing industries and creating new classes of jobs and services.

Enhancing human creativity and learning

Contrary to fears that AI will replace human creativity, the technology is increasingly a versatile tool for artists, designers, and teachers, working as a co-creator and learning facilitator. AI can complement, not replace, human imagination, acting as a collaborator in the act of creation.

For example, AI algorithms can generate new musical pieces, visual artwork, or literary works that challenge human creators to venture in new directions. In education, AI enables personalised learning experiences and adaptive content delivery, aligning curricula with individual students’ needs and learning speeds. This makes education more efficient and engaging, and prepares people for a future with AI by emphasizing problem-solving and critical thinking. This collaborative model reflects a vision in which AI maximises human capabilities and potential rather than curtailing them.

The breadth of AI’s beneficial applications across seemingly disparate industries, from medicine and environmental conservation to economic growth and education, suggests a fundamental change.

AI is more than a set of evolving tools; it is a systemic facilitator. Its real value lies not solely in individual tools that perform specific tasks but in its cross-industry synergy and its capacity to make complex systems work better. This ubiquitous optimization suggests that AI is slowly becoming a foundational layer of infrastructure, one that can reach across and enhance formerly isolated systems.

In the future, societal advancement will rely more and more on the ability to integrate and utilize these cross-domain capabilities, potentially achieving levels of human well-being and problem-solving never before seen. But realizing these benefits in full also requires unprecedented levels of interdisciplinary collaboration and data sharing.

Navigating AI’s ethical minefield

While the potential of AI is enormous, its accelerated deployment also raises serious challenges and ethical issues that must be carefully examined and proactively addressed. These are not just technical issues but fundamental societal and ethical concerns rooted in human values and governance.

Displacement and reskilling

Perhaps the most immediate and hotly debated issue is the future of work. AI automation is set to render many jobs redundant, especially those involving repetitive and routine duties. This economic anxiety is felt in many sectors and calls for a paradigm change in how workforce capabilities are built.

While AI will certainly generate new jobs, specifically for AI professionals and those in positions demanding uniquely human capabilities, there is a need for aggressive education and training programs to enable the current workforce to occupy these jobs.

Without inclusive reskilling initiatives and flexible education systems, the social costs of displacement could be high, even worsening economic inequalities and social instability.

Bias and discrimination

One of the most important ethical challenges arises from bias in AI systems. These systems are trained on huge datasets, and if the datasets reflect historical or societal biases, the AI will learn and replicate those inequalities. This can produce discriminatory results in sensitive fields like hiring, loan approval, criminal justice, and even medical diagnosis.

For example, an AI trained on biased past hiring data could inadvertently discriminate against certain groups, perpetuating existing biases. Solving this involves not just technical fixes for detecting and mitigating bias, but a societal effort to identify and correct the underlying human biases that shape these systems.

Privacy and data security

The data-intensive nature of AI raises fundamental privacy issues. The amount and level of detail of the data needed to train and run sophisticated AI systems can encroach on personal privacy rights. This calls for strong data protection laws, such as the General Data Protection Regulation (GDPR), and transparent data governance structures.

In addition, AI plays a dual role in cybersecurity. It can be an effective instrument for detecting threats and strengthening security measures, but AI systems themselves may be targeted by malicious actors, and their capabilities may be turned to advanced surveillance or cyberattacks, leading to an ongoing AI-powered arms race between offense and defense.

Accountability and control

The “black box” nature of sophisticated AI systems presents a major challenge to accountability. If an AI system makes a mistake or causes harm, attributing responsibility to anyone in particular (the developer, the deployer, the user, or the AI itself) is extremely difficult given the absence of precise legal frameworks. This uncertainty undermines trust and makes legal action difficult.

Furthermore, although AI can support decision-making, it still does not possess genuine emotional intelligence, empathy, or critical thinking skills. These uniquely human qualities remain necessary for balanced judgment, particularly when the stakes are high. The challenge is to ensure that AI remains a tool for enhancing human decision-making, with final responsibility resting with human oversight, rather than an autonomous system that humans can no longer control.

The danger of misinformation

The ability of AI to create and disseminate misinformation poses a great danger to democratic systems and public dialogue. AI’s capability to generate highly realistic ‘deepfakes’, artificial media that convincingly present people saying or doing things they never did, can be leveraged for propaganda, disinformation campaigns, and character assassination.

This capability can erode public trust in information, blur the line between reality and fabrication, and present an immediate challenge to critical thinking and media literacy. The speed at which AI-generated content spreads makes it increasingly difficult for people to separate fact from fiction, and is likely to fuel social polarization and instability.

The central problems of AI (job displacement, bias, privacy, accountability, and misinformation) are not simply technical glitches to be patched; they are inherently societal and ethical problems grounded in values and governance frameworks.

For example, algorithmic bias tends to reflect and amplify pre-existing biases in the data at hand rather than introduce something entirely novel. Likewise, the accountability gap points to a lack of legal foresight and of adequate frameworks for addressing autonomous systems.

Thus, solutions to these issues demand more than enhanced AI algorithms or new software. They require a deep societal reckoning with data ethics, a redefinition of work and social safety nets, new legal precedents for AI liability, and a concerted international effort to fight digital manipulation.

This means that the “peril” of AI is less technological than human: it lies in our ability to adapt our institutions, values, and collective behavior to an evolving technology.

Towards responsible AI governance

It will take a concerted, multi-pronged effort involving responsible governance, ethics-driven development, and human-centered approaches to navigate the double-edged future of AI. The way ahead goes beyond mitigating risks; it is about shaping an AI future in line with humanity’s highest aspirations.

The imperative for global governance

The global and borderless character of AI development and use makes global cooperation imperative. The fast pace of AI evolution tends to outstrip the capacity of national laws, creating a regulatory vacuum.

Adding to this, countries are making significant investments in AI for economic and strategic purposes, resulting in a “race to the bottom” where ethical issues are given lower priority than gaining a technological edge over rivals.

To avoid a fragmented and potentially dangerous AI environment, global cooperation is necessary to create shared ethical standards and policies. This entails dialogue, the sharing of best practices, and coordination towards harmonized standards that emphasise safety, equity, and accountability across borders.

Constructing ethical frameworks and principles

At the heart of safe AI development is the sustained effort to build strong ethical frameworks and principles. Governments, global organisations, and technology firms are increasingly involved in creating guidelines based on principles such as fairness, transparency, and accountability.

Transparency and explainability are especially important for building public acceptance of and trust in AI systems. This means creating AI that is able to explain its decision-making process so that it can be audited and understood, particularly in high-stakes applications such as healthcare or finance.

These ethical frameworks act as a moral compass, advising developers and policymakers so that AI systems are designed and deployed in such a way that they are aligned with human values and societal welfare.

Investment in reskilling and lifelong learning

With AI revolutionising the world of work, significant investment in reskilling and lifelong learning programs becomes a top priority. The focus needs to move towards developing adaptive skills, emphasising uniquely human strengths like creativity, critical thinking, emotional intelligence, and complex problem-solving.

Education systems, including elementary schools, vocational training schemes, and corporate upskilling programs, need to change to prepare the workforce for new jobs and the changing needs of an AI-based economy. This proactive investment in human capital is needed to mitigate job displacement, build economic resilience, and make sure that people are able to thrive in an increasingly technologically advanced world.

Encouraging Human-AI cooperation

A visionary approach to AI focuses on cooperation, not replacement. AI should be seen as a collaborator rather than merely a tool, one that supports human contributions and produces synergy. This perspective acknowledges that although AI is superior at data processing and pattern discovery, human beings contribute unique qualities like intuition, empathy, subtle judgment, and innovative ideation.

Through human-AI collaboration, new types of productivity and innovation can be unleashed, resulting in outcomes that neither humans nor AI could produce on their own. This collaborative intelligence approach ensures that technology is used to augment human potential, not diminish it.

The present scattered and frequently reactive style of AI regulation, combined with a growing global AI arms race, threatens to give rise to a future where AI’s enormous value is disproportionately concentrated in a few hands, and its damage is disproportionately borne by the most vulnerable populations. If countries prioritize competitive gain over all other considerations, they could forgo essential ethical safeguards or create incompatible regulatory environments, resulting in a disorganized and potentially disastrous global AI environment. This fragmentation and competitive pressure would result in a future where powerful players capture most of the benefits of AI, while the dangers (such as the broad displacement of jobs, extensive surveillance, or uncontrolled disinformation) are externalised onto the rest of the world’s population, especially weaker nations and marginalised groups.

Thus, “the path forward” is not merely the passage of a bill; it requires a paradigm shift to proactive, inclusive, and globally networked models of governance. These need to actively place human welfare, fair access, and strong protections above mere technological progress or strategic control, establishing a genuinely collaborative global architecture for AI development.

Human Element in the Age of AI

The emergence of Artificial Intelligence is a potent, transformative force that is neither inherently good nor evil. Its eventual influence on humanity will be largely determined by the collective decisions we make today about its ethics, its regulation, education, and the nurturing of human-AI collaboration.

The future of AI is not an inevitable fate but something malleable, which humanity must both safeguard and shape.

The final test of the AI revolution goes beyond mere technological achievement; it is humanity’s ability to exercise moral vision, act collectively, and fundamentally reinterpret its own purpose in an era in which intelligence is no longer just augmented and concentrated but also collectively distributed. This will require a profound philosophical and societal reexamination, not just a series of policy refinements. It involves preserving and promoting distinctly human values like empathy, critical thinking, and collective intelligence as the guiding principles for AI development.

By keeping these human aspects at the core of each decision, human civilization can chart AI’s course towards a future that is inclusive, sustainable, and genuinely helps all to thrive, rather than one that merely improves technology for technology’s sake. The responsibility to harness this revolution for the common good rests firmly on our shoulders.