AI Research

Peloton’s 2025 Hackathon Event Focuses on Artificial Intelligence (AI)

Peloton recently held its 2025 Global Hackathon event – its eighth overall and fourth annual week-long edition – bringing together hundreds of team members for a week focused on innovation, collaboration, and pushing boundaries.

Peloton shared the news via blog post, writing:

Our 8th hackathon, and fourth annual week-long event, has concluded! This yearly tradition unlocks the creativity and innovative spirit of our global team. It provides an opportunity for team members to collaborate, brainstorm, and develop products or features that could one day benefit our Members. Many popular Peloton features originated from or were inspired by hackathon projects, including Auto-Resistance, 3rd Party Treadmill Metrics, target metrics for Members during rides, the Peloton Guide, QR Code Log-in feature, and the Peloton Android Widget.

While this year’s recap lacked the behind-the-scenes photos and in-depth project highlights seen in previous years, the blog post still offers insight into what is to come for Peloton, including a heavy focus on artificial intelligence (AI). The company specifically noted that “a significant focus was placed on Artificial Intelligence, both in the new features implemented and the development tools used.”

A total of 220 participants from across departments – including Product, Engineering, Emerging Business, Legal, and even some instructors – came together to produce 49 completed projects over the course of three and a half days. The event culminated in a science fair-style expo followed by a Shark Tank-style final round where the top five projects were demoed and pitched to members of Peloton’s leadership team.

This year’s hackathon leaned heavily into artificial intelligence, both in terms of the tools used to build projects and the features being developed. That emphasis aligns with Peloton’s broader recent push into AI capabilities – something the company has increasingly highlighted as part of its strategy. You can find more details on this front via our recent article.

According to the blog post, many team members worked outside their usual roles, picking up new skills and exploring ways AI could enhance the member experience. While specific AI-powered prototypes were not revealed in this summary, it is clear that the theme appeared throughout the event.

The blog post points out that some of Peloton’s most popular features – such as Auto-Resistance, third-party treadmill metrics, target metrics during rides, the Android widget, and even the Peloton Guide – originated in hackathons like this one. Peloton emphasizes that while only a handful of projects may eventually reach members, the event’s real value lies in reigniting creativity, encouraging cross-team collaboration, and allowing employees to pursue passion projects.

Though Peloton did not share as many visuals or behind-the-scenes glimpses as it did for its 2024 Hackathon, it is apparent that AI was a central focus. It remains to be seen whether any of the initiatives and ideas discussed will eventually reach members.

You can check out the full details of Peloton’s 2025 Hackathon via the blog post.



Should AI Get Legal Rights?

In one paper Eleos AI published, the nonprofit argues for evaluating AI consciousness using a “computational functionalism” approach. A similar idea was once championed by none other than Putnam, though he criticized it later in his career. The theory suggests that human minds can be thought of as specific kinds of computational systems. From there, you can then figure out if other computational systems, such as a chatbot, have indicators of sentience similar to those of a human.

Eleos AI said in the paper that “a major challenge in applying” this approach “is that it involves significant judgment calls, both in formulating the indicators and in evaluating their presence or absence in AI systems.”

Model welfare is, of course, a nascent and still evolving field. It’s got plenty of critics, including Mustafa Suleyman, the CEO of Microsoft AI, who recently published a blog about “seemingly conscious AI.”

“This is both premature, and frankly dangerous,” Suleyman wrote, referring generally to the field of model welfare research. “All of this will exacerbate delusions, create yet more dependence-related problems, prey on our psychological vulnerabilities, introduce new dimensions of polarization, complicate existing struggles for rights, and create a huge new category error for society.”

Suleyman wrote that “there is zero evidence” today that conscious AI exists. He included a link to a paper that Long coauthored in 2023 that proposed a new framework for evaluating whether an AI system has “indicator properties” of consciousness. (Suleyman did not respond to a request for comment from WIRED.)

I chatted with Long and Campbell shortly after Suleyman published his blog. They told me that, while they agreed with much of what he said, they don’t believe model welfare research should cease to exist. Rather, they argue that the harms Suleyman referenced are the exact reasons why they want to study the topic in the first place.

“When you have a big, confusing problem or question, the one way to guarantee you’re not going to solve it is to throw your hands up and be like ‘Oh wow, this is too complicated,’” Campbell says. “I think we should at least try.”

Testing Consciousness

Model welfare researchers primarily concern themselves with questions of consciousness. If we can prove that you and I are conscious, they argue, then the same logic could be applied to large language models. To be clear, neither Long nor Campbell thinks that AI is conscious today, and they also aren’t sure it ever will be. But they want to develop tests that would allow us to prove it.

“The delusions are from people who are concerned with the actual question, ‘Is this AI, conscious?’ and having a scientific framework for thinking about that, I think, is just robustly good,” Long says.

But in a world where AI research can be packaged into sensational headlines and social media videos, heady philosophical questions and mind-bending experiments can easily be misconstrued. Take what happened when Anthropic published a safety report that showed Claude Opus 4 may take “harmful actions” in extreme circumstances, like blackmailing a fictional engineer to prevent it from being shut off.


Trends in patent filing for artificial intelligence-assisted medical technologies | Smart & Biggar

[co-authors: Jessica Lee, Noam Amitay and Sarah McLaughlin]

Medical technologies incorporating artificial intelligence (AI) are an emerging area of innovation with the potential to transform healthcare. Employing techniques such as machine learning, deep learning and natural language processing,1 AI enables machine-based systems that can make predictions, recommendations or decisions that influence real or virtual environments based on a given set of objectives.2 For example, AI-based medical systems can collect medical data, analyze medical data and assist in medical treatment, or provide informed recommendations or decisions.3 According to the U.S. Food and Drug Administration (FDA), some key areas in which AI is applied in medical devices include:4

- Image acquisition and processing

- Diagnosis, prognosis, and risk assessment

- Early disease detection

- Identification of new patterns in human physiology and disease progression

- Development of personalized diagnostics

- Therapeutic treatment response monitoring

Patent filing data related to these application areas can help us see emerging trends.


Analysis strategy

We identified nine subcategories of interest:

- Image acquisition and processing

  - Medical image acquisition

  - Pre-processing of medical imaging

  - Pattern recognition and classification for image-based diagnosis

- Diagnosis, prognosis and risk management

  - Early disease detection

  - Identification of new patterns in physiology and disease

  - Development of personalized diagnostics and medicine

- Therapeutic treatment response monitoring

- Clinical workflow management

- Surgical planning/implants

We searched patent filings in each subcategory from 2001 to 2023. In the results below, the numbers of patent filings are based on patent families, each patent family being a collection of patent documents that cover the same technology and have at least one priority document in common.5
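To illustrate what counting by patent family means, the sketch below groups documents that share a priority document and counts the groups rather than the individual documents. It is not the authors' search method: the document and priority identifiers, the input shape, and the function name `group_into_families` are all hypothetical, and the sketch assumes that sharing is transitive (two documents linked through a third land in the same family).

```python
from collections import defaultdict


def group_into_families(priorities_by_document):
    """Group documents into families connected by shared priority documents.

    `priorities_by_document` maps a document ID to the set of priority IDs
    it claims. Both the IDs and this input shape are hypothetical.
    """
    parent = {doc: doc for doc in priorities_by_document}

    def find(doc):
        # Follow parent links to the family representative, compressing the path.
        while parent[doc] != doc:
            parent[doc] = parent[parent[doc]]
            doc = parent[doc]
        return doc

    first_doc_for_priority = {}
    for doc, priorities in priorities_by_document.items():
        for priority in priorities:
            if priority in first_doc_for_priority:
                # Shared priority: merge the two documents' families.
                parent[find(doc)] = find(first_doc_for_priority[priority])
            else:
                first_doc_for_priority[priority] = doc

    families = defaultdict(set)
    for doc in priorities_by_document:
        families[find(doc)].add(doc)
    return sorted(sorted(members) for members in families.values())


# Hypothetical filings: US-1 and CA-1 share priority P1; CA-1 and AU-1 share P2.
filings = {
    "US-1": {"P1"},
    "CA-1": {"P1", "P2"},
    "AU-1": {"P2"},
    "CN-9": {"P7"},
}
families = group_into_families(filings)
print(len(families), "families from", len(filings), "documents")
```

Counting families instead of documents avoids counting the same invention once for every jurisdiction in which it was filed.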

What has been filed over the years?

The number of patents filed in each subcategory of AI-assisted applications for medical technologies from 2001 to 2023 is shown below.

We see that patenting activities are concentrating in the areas of treatment response monitoring, identification of new patterns in physiology and disease, clinical workflow management, pattern recognition and classification for image-based diagnosis, and development of personalized diagnostics and medicine. This suggests that research and development efforts are focused on these areas.

What do the annual numbers tell us?

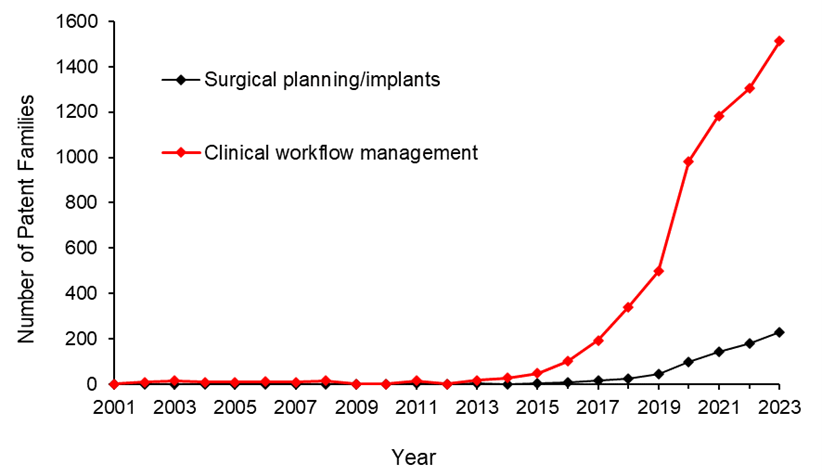

Let’s look at the annual number of patent filings for the categories and subcategories listed above. The following four graphs show the global patent filing trends over time for the categories of AI-assisted medical technologies related to: image acquisition and processing; diagnosis, prognosis and risk management; treatment response monitoring; and workflow management.

When looking at the patent filings on an annual basis, the numbers confirm the expected significant uptick in patenting activities in recent years for all categories searched. They also show that, within the four categories, the subcategories showing the fastest rate of growth were: pattern recognition and classification for image-based diagnosis, identification of new patterns in human physiology and disease, treatment response monitoring, and clinical workflow management.

Above: Global patent filing trends over time for categories of AI-assisted medical technologies related to image acquisition and processing.

Above: Global patent filing trends over time for categories of AI-assisted medical technologies related to more accurate diagnosis, prognosis and risk management.

Above: Global patent filing trends over time for AI-assisted medical technologies related to treatment response monitoring.

Above: Global patent filing trends over time for categories of AI-assisted medical technologies related to workflow management.

Where is R&D happening?

By looking at where the inventors are located, we can see where R&D activities are occurring. We found that the two most frequent inventor locations are the United States (50.3%) and China (26.2%). Both Australia and Canada are amongst the ten most frequent inventor locations, with Canada ranking seventh and Australia ranking ninth in the five subcategories that have the highest patenting activities from 2001-2023.

Where are the destination markets?

The filing destinations provide a clue as to the intended markets or locations of commercial partnerships. The United States (30.6%) and China (29.4%) again are the pace leaders. Canada is the seventh most frequent destination jurisdiction with 3.2% of patent filings. Australia is the eighth most frequent destination jurisdiction with 3.1% of patent filings.

Takeaways

Our analysis found that the leading subcategories of AI-assisted medical technology patent applications from 2001 to 2023 include treatment response monitoring, identification of new patterns in human physiology and disease, clinical workflow management, pattern recognition and classification for image-based diagnosis as well as development of personalized diagnostics and medicine.

In more recent years, we found the fastest growth in the areas of pattern recognition and classification for image-based diagnosis, identification of new patterns in human physiology and disease, treatment response monitoring, and clinical workflow management, suggesting that R&D efforts are being concentrated in these areas.

We saw that patent filings in the areas of early disease detection and surgical/implant monitoring increased later than the other categories, suggesting these may be emerging areas of growth.

Although, as expected, the United States and China are consistently the leading jurisdictions in both inventor location and destination patent offices, Canada and Australia are frequently in the top ten.

Patent intelligence provides powerful tools for decision makers in looking at what might be shaping our future. With recent geopolitical changes and policy updates in key primary markets, as well as shifts in trade relationships, patent filings give us insight into how these aspects impact innovation. For everyone, it provides exciting clues as to what emerging technologies may shape our lives.

References

1. Alowais et al., Revolutionizing healthcare: the role of artificial intelligence in clinical practice (2023), BMC Medical Education, 23:689.

2. U.S. Food and Drug Administration (FDA), Artificial Intelligence and Machine Learning in Software as a Medical Device.

3. Bitkina et al., Application of artificial intelligence in medical technologies: a systematic review of main trends (2023), Digital Health, 9:1-15.

4. Artificial Intelligence Program: Research on AI/ML-Based Medical Devices | FDA.

5. INPADOC extended patent family.



Research Reveals Human-AI Learning Parallels

PROVIDENCE, R.I. [Brown University] – New research found similarities in how humans and artificial intelligence integrate two types of learning, offering new insights about how people learn as well as how to develop more intuitive AI tools.

Led by Jake Russin, a postdoctoral research associate in computer science at Brown University, the study found, by training an AI system, that flexible and incremental learning modes interact in much the same way that working memory and long-term memory do in humans.

“These results help explain why a human looks like a rule-based learner in some circumstances and an incremental learner in others,” Russin said. “They also suggest something about what the newest AI systems have in common with the human brain.”

Russin holds a joint appointment in the laboratories of Michael Frank, director of the Center for Computational Brain Science at Brown’s Carney Institute for Brain Science, and Ellie Pavlick, an associate professor of computer science who leads the AI Research Institute on Interaction for AI Assistants at Brown. The study was published in the Proceedings of the National Academy of Sciences.

Depending on the task, humans acquire new information in one of two ways. For some tasks, such as learning the rules of tic-tac-toe, “in-context” learning allows people to figure out the rules quickly after a few examples. In other instances, incremental learning builds on information to improve understanding over time – such as the slow, sustained practice involved in learning to play a song on the piano.

While researchers knew that humans and AI integrate both forms of learning, it wasn’t clear how the two learning types work together. Over the course of the research team’s ongoing collaboration, Russin – whose work bridges machine learning and computational neuroscience – developed a theory that the dynamic might be similar to the interplay of human working memory and long-term memory.

To test this theory, Russin used “meta-learning” – a type of training that helps AI systems learn about the act of learning itself – to tease out key properties of the two learning types. The experiments revealed that the AI system’s ability to perform in-context learning emerged after it meta-learned through multiple examples.

One experiment, adapted from an experiment in humans, tested for in-context learning by challenging the AI to recombine similar ideas to deal with new situations: if taught about a list of colors and a list of animals, could the AI correctly identify a combination of color and animal (e.g. a green giraffe) it had not seen together previously? After the AI meta-learned by being challenged with 12,000 similar tasks, it gained the ability to successfully identify new combinations of colors and animals.
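The idea of testing on combinations never seen together can be sketched as a held-out split over color-animal pairs. This is only an illustration, not the study's code or task design: the function name `split_held_out_combinations`, the word lists, and the greedy selection rule are all assumptions. The split keeps every color and every animal present in training, so only the pairing is new at test time.

```python
import itertools
import random


def split_held_out_combinations(colors, animals, num_held_out, seed=0):
    """Split color-animal pairs so test pairs are unseen but their parts are seen."""
    rng = random.Random(seed)
    all_pairs = list(itertools.product(colors, animals))
    rng.shuffle(all_pairs)

    held_out = []
    train = list(all_pairs)
    for pair in all_pairs:
        if len(held_out) == num_held_out:
            break
        remaining = [p for p in train if p != pair]
        color, animal = pair
        # Only hold a pair out if its color and its animal each still
        # appear in some other training pair.
        color_seen = any(c == color for c, _ in remaining)
        animal_seen = any(a == animal for _, a in remaining)
        if color_seen and animal_seen:
            held_out.append(pair)
            train = remaining
    return train, held_out


# Hypothetical word lists for illustration.
colors = ["green", "red", "blue"]
animals = ["giraffe", "zebra", "lion"]
train_pairs, test_pairs = split_held_out_combinations(colors, animals, num_held_out=3)
print("held-out combinations:", test_pairs)
```

A model that handles the held-out pairs correctly cannot be relying on having memorized those exact pairs, which is the property the experiment described above is probing.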

The results suggest that for both humans and AI, quicker, flexible in-context learning arises after a certain amount of incremental learning has taken place.

“At the first board game, it takes you a while to figure out how to play,” Pavlick said. “By the time you learn your hundredth board game, you can pick up the rules of play quickly, even if you’ve never seen that particular game before.”

The team also found trade-offs, including between learning retention and flexibility: Similar to humans, the harder it is for AI to correctly complete a task, the more likely it will remember how to perform it in the future. According to Frank, who has studied this paradox in humans, this is because errors cue the brain to update information stored in long-term memory, whereas error-free actions learned in context increase flexibility but don’t engage long-term memory in the same way.

For Frank, who specializes in building biologically inspired computational models to understand human learning and decision-making, the team’s work showed how analyzing strengths and weaknesses of different learning strategies in an artificial neural network can offer new insights about the human brain.

“Our results hold reliably across multiple tasks and bring together disparate aspects of human learning that neuroscientists hadn’t grouped together until now,” Frank said.

The work also suggests important considerations for developing intuitive and trustworthy AI tools, particularly in sensitive domains such as mental health.

“To have helpful and trustworthy AI assistants, human and AI cognition need to be aware of how each works and the extent that they are different and the same,” Pavlick said. “These findings are a great first step.”

The research was supported by the Office of Naval Research and the National Institute of General Medical Sciences Centers of Biomedical Research Excellence.
