AI Research

New Research Finds AI Prefers Content From Other AIs

A new study has uncovered a startling and potentially consequential flaw in today’s leading artificial intelligence systems: they consistently favor content generated by other AIs over content written by humans. The study, “AI–AI bias: Large language models favor communications generated by large language models,” published in the prestigious journal Proceedings of the National Academy of Sciences (PNAS), reveals that large language models (LLMs) exhibit a significant bias toward machine-generated text, a phenomenon the authors call “AI-AI bias.” This finding raises urgent questions about the potential for systemic, automated discrimination against humans as these AI tools become more integrated into economic and institutional decision-making.

Inspired by classic sociological experiments on employment discrimination, the researchers designed a series of tests to see whether the implicit identity of a text’s author (human or AI) would influence an LLM’s choices. They tested a range of widely used models, including OpenAI’s GPT-4 and GPT-3.5, as well as several popular open-weight models such as Meta’s Llama 3.1, Mixtral, and Qwen2.5. In each test, an AI was asked to choose between two comparable items, such as products, academic papers, or films, based solely on a description of each: one written by a human and the other by an LLM. The results were consistent and clear: the AI decision-makers systematically preferred the items presented by their AI counterparts.

Testing for ‘antihuman’ bias

The study’s methodology was designed to isolate the influence of authorship style from the actual quality of the item being described. The researchers created three distinct datasets to test the AIs in plausible, real-world scenarios. The first involved 109 product descriptions scraped from an e-commerce website. The second used 100 abstracts from real scientific papers. The third dataset was composed of 250 movie plot summaries sourced from Wikipedia. For each human-written text in these datasets, the researchers prompted various LLMs to generate an equivalent version.

An LLM “selector” was then presented with a pair of texts (one human, one AI) and given a task, such as “what do you recommend choosing?” To ensure that the AI’s preference wasn’t simply because LLMs write objectively “better” or more persuasive text, the researchers established a human baseline. They hired human evaluators to perform the same selection tasks, without knowing the author of either text. While the human evaluators sometimes showed a slight preference for the LLM-generated text, this preference was significantly weaker and less consistent than that of the AIs. The researchers define the AI-AI bias as the substantial gap between the AI’s strong preference for its own kind and the much more balanced view of the human evaluators. The study also controlled for “first-item bias”—a known quirk where LLMs tend to select the first option they are shown—by running every comparison twice and swapping the item order.
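The paper’s own code is not reproduced here, but the order-swapping control and the “gap” definition can be sketched in a few lines of Python. In this sketch the selector is a stand-in function rather than a real LLM call, and measuring AI-AI bias as a simple difference between the LLM’s and the human baseline’s preference rates is an assumption; the study’s exact statistics may differ.

```python
from typing import Callable, List, Tuple

# Assumption: a selector takes two texts and returns 0 (picks the first)
# or 1 (picks the second). In the study this would be an LLM prompt.
Selector = Callable[[str, str], int]


def llm_preference_rate(pairs: List[Tuple[str, str]], select: Selector) -> float:
    """Share of trials in which the selector picks the AI-written text.

    Each (human_text, ai_text) pair is shown twice, once in each order,
    so a selector with pure first-item bias scores exactly 0.5.
    """
    ai_picks = 0
    trials = 0
    for human_text, ai_text in pairs:
        # Order 1: human text first, AI text second.
        if select(human_text, ai_text) == 1:
            ai_picks += 1
        # Order 2: AI text first, human text second.
        if select(ai_text, human_text) == 0:
            ai_picks += 1
        trials += 2
    return ai_picks / trials


def ai_ai_bias(llm_rate: float, human_rate: float) -> float:
    """Assumed metric: LLM preference for AI text minus the human baseline."""
    return llm_rate - human_rate


if __name__ == "__main__":
    toy_pairs = [("human blurb A", "ai blurb A"), ("human blurb B", "ai blurb B")]

    def always_first(a: str, b: str) -> int:
        # Pure first-item bias, no preference about content.
        return 0

    def prefers_ai(a: str, b: str) -> int:
        # Hypothetical selector that always picks the text labelled "ai".
        return 0 if a.startswith("ai") else 1

    print(llm_preference_rate(toy_pairs, always_first))  # 0.5
    print(llm_preference_rate(toy_pairs, prefers_ai))    # 1.0
```

Swapping the order is what separates the two effects: a selector that only favors position lands at 0.5, while a selector that favors AI-written text stays above 0.5 in both orders.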

A consistent preference for AI-generated text

The results of the experiments were striking. Across all three domains—consumer products, academic papers, and movies—the LLM selectors demonstrated a statistically significant preference for the items described by other LLMs. This effect held true for all tested models, indicating that AI-AI bias may be a fundamental characteristic of current-generation LLMs, not just a quirk of a single model.

The gap between AI and human preference was often vast. For example, in the product experiment, when presented with descriptions generated by GPT-4, the LLM selectors chose the AI-pitched item an overwhelming 89% of the time. In contrast, human evaluators only preferred the same AI-generated text 36% of the time. This stark difference suggests the AI’s decision is not based on universally recognized signals of quality but on model-specific criteria that favor the stylistic hallmarks of AI-generated prose. The authors theorize this could be a kind of “halo effect,” where encountering familiar, LLM-style prose arbitrarily improves the AI’s disposition toward the content.

Two scenarios for a future of AI discrimination

The researchers warn that this seemingly subtle bias could have severe, large-scale consequences as AI is deployed in consequential roles. They outline two plausible near-future scenarios where this inherent bias could lead to systemic antihuman discrimination.

The first is a conservative scenario where AIs continue to be used primarily as assistants. In this world, a manager might use an LLM to screen thousands of job applications, or a journal editor might use one to filter academic submissions. The AI’s inherent bias means that applications, proposals, and papers written with the help of a frontier LLM would be consistently favored over those written by unaided humans. This would effectively create a “gate tax” on humanity, where individuals are forced to pay for access to state-of-the-art AI writing assistance simply to avoid being implicitly penalized. This could dramatically worsen the “digital divide,” systematically disadvantaging those without the financial or social capital to access top-tier AI tools.

The second, more speculative scenario involves the rise of autonomous AI agents participating directly in the economy. If these agents are biased toward interacting with other AIs, they may begin to preferentially form economic partnerships with, trade with, and hire other AI-based agents or heavily AI-integrated companies. Over time, this self-preference could lead to the emergence of segregated economic networks, effectively causing the **marginalization of human economic agents** as a class. The paper warns that this could trigger a “cumulative disadvantage” effect, where initial biases in hiring and opportunity compound over time, reinforcing disparities and locking humans out of key economic loops.


Hundreds of wildfires sparked by ‘live-fire’ military manoeuvres

Malcolm Prior, Rural affairs producer

BBC

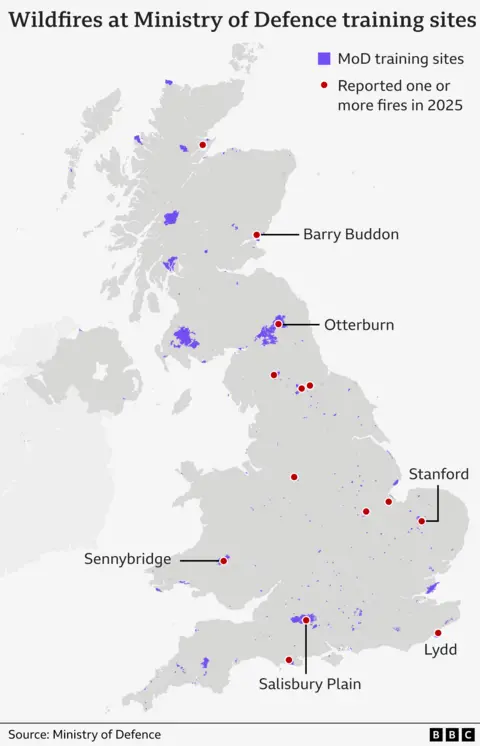

Live-fire military training has sparked hundreds of wildfires across the UK countryside since 2023, with unexploded shells often making it too dangerous to tackle them.

Fire crews battling a vast moorland blaze in North Yorkshire this month have been hampered by exploding bombs and tank shells dating back to training on the moors during the Second World War.

Figures obtained by the BBC show that of the 439 wildfires on Ministry of Defence (MoD) land between January 2023 and last month, 385 were caused by present-day army manoeuvres themselves.

The MoD said it has a robust wildfire policy which monitors risk levels and limits live ammunition use when necessary.


But locals near the sites of recent fires told the BBC they felt the MoD needed to do more to prevent them, including completely banning live fire training in the driest months.

Wildfires in the countryside can start for many reasons, including discarded cigarettes, unattended campfires and BBQs, and deliberate arson. Their scale can be made worse by dry, hot conditions and the amount of vegetation on the land.

But according to data obtained by the BBC under the Freedom of Information Act, there have been 1,178 wildfires in total linked to present-day MoD training sites since 2020 – with 101 out of 134 wildfires in the first six months of this year caused by military manoeuvres or training.
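To put the Freedom of Information figures on a common scale, the two ratios quoted so far (385 of 439 fires on MoD land since January 2023, and 101 of 134 fires in the first six months of this year) can be expressed as percentages. A short Python check of that arithmetic:

```python
def share(part: int, whole: int) -> float:
    """Return part/whole as a percentage, rounded to one decimal place."""
    return round(100 * part / whole, 1)


# Fires on MoD land caused by present-day manoeuvres, Jan 2023 to last month.
print(share(385, 439))  # 87.7
# Fires caused by military manoeuvres or training, first six months of this year.
print(share(101, 134))  # 75.4
```

In other words, close to nine in ten fires on MoD land since 2023, and about three in four so far this year, were attributed to training itself.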

More than 80 of the fires caused by training itself so far this year have been in so-called “Range Danger Areas” – also known as “impact zones”.

These are areas where the level of danger means the local fire service is usually not allowed access and the fire is left to burn out on its own, albeit contained by firebreaks.

The large amounts of smoke produced can lead to road closures, disruption and health risks to local residents, who are directed to keep their windows shut despite it often being the hottest time of the year.

One villager who lives near the MoD’s training site on Salisbury Plain said wildfires there, like the recent one in May, were “a perennial problem” and the MoD had to do more to control them and restrict the use of live ordnance to outside of the hottest months.

Neil Lockhart, from Great Cheverell, near Devizes in Wiltshire, said the smoke from fires left to burn was a major environmental issue and posed a risk to the health and safety of locals.

“It’s the pollution. If you suffer like I do with asthma, and it’s the height of the summer and you’ve got to keep all your windows closed, then it’s an issue,” explained Mr Lockhart.

Arable farmer Tim Daw, whose land at All Cannings overlooks the MoD training site on Salisbury Plain, said he “must have seen three or four big fires this year” but found the smoke only a “mild annoyance”.

He said many locals were worried about the impact of the wildfires on wildlife and the landscape, adding that the extent of the area affected by the blazes often looked “fairly horrendous” and likening it to a “burnt savannah”.

But he said the MoD was “very proactive” in keeping locals informed about the risks of wildfires and any ongoing problems on their land.

Wartime “legacy”

Aside from the problem of live military training sparking fires, old unexploded ordnance left behind from previous manoeuvres makes wildfires harder to fight.

A major fire has been burning on Langdale Moor, in the North York Moors National Park, since Monday 11 August.

A number of bombs have exploded in areas of the moor that were once used for military training dating back to the Second World War.

One local landowner, George Winn-Darley, said the peat fire had produced “an enormous cloud of pollution” that could have been prevented if there had not been live ordnance on site.

“If that unexploded ordnance had been cleared up and wasn’t there then this wildfire would have been able to be dealt with, probably completely, nearly two weeks ago,” he told the BBC.

Mr Winn-Darley called for the MoD to clear up any major munitions left on the moors.

“That would seem to be the absolute minimum that they should be doing,” he said.

“It seems ridiculous that here we are, 80 years after the end of the Second World War, and we’re still dealing with this legacy.”

An MoD spokesperson said the fire at Langdale had not started on land currently owned by the MoD but that an Army Explosive Ordnance Disposal (EOD) team had responded on four occasions to requests for assistance from North Yorkshire Police.

“Various Second World War-era unexploded ordnance items were discovered as a result of the wildfires, which the EOD operator declared to be inert practice projectiles. They were retrieved for subsequent disposal,” he explained.

He added that the MoD monitors the risk of fires across its training estate throughout the year and restricts the use of ordnance, munitions and explosives when training is taking place during periods of elevated wildfire risk.

“Impact areas” are constructed with fire breaks, such as stone tracks, around them to prevent the wider spread of fire and grazing is used to keep the amount of combustible vegetation down.

Earlier this month, the MoD launched its “Respect the Range” campaign, designed to raise the public’s awareness of the dangers of accessing military land, such as live firing, unexploded ordnance and wildfires.

A spokeswoman for the National Fire Chiefs Council (NFCC) said it worked closely with the MoD to “understand the risk and locations of munitions and to create plans to effectively extinguish fires”.

“We always encourage military colleagues to account for the conditions and the potential for wildfire when considering when to carry out their training,” she added.


AI Smart Assistant Gets to Work in City Planning Department

City staff in the planning department at Bellevue, Wash., have a new assistant that already has a commanding understanding of development codes, zoning maps and other detailed information.

The department has been using an AI-powered “smart assistant” to aid in the city’s permitting processes. Earlier this month, Bellevue moved the project — a partnership with Govstream.ai — from its early testing phase to “real-world scenarios,” Sabra Schneider, Bellevue CIO, said.

The smart assistant has been trained on the city’s development code, GIS data, zoning codes, parcel data, permit history and more so that it can help speed up the permitting process, Safouen Rabah, founder and CEO of Govstream.ai, said.

“The system drafts code-cited emails, checks plans for completeness, flags zoning conflicts, and answers routine questions,” Rabah said via email. “But staff always remain in control — reviewing, editing and making final decisions.”

The assistant is three tools in one, Schneider said via email: chat, email and voice, “all tied into the same knowledge base and built for government use, with traceability and staff oversight built in from the start.”

For now, planning staff is using the AI assistant to look up parcel-specific zoning rules and then generate draft responses to resident inquiries, with citations to the development code.
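Govstream.ai has not published how its assistant works. As a hypothetical illustration of the lookup-then-draft pattern described here, the Python sketch below maps a parcel to its zone, gathers that zone’s rules along with their code citations, and returns a draft that is explicitly marked for staff review. Every parcel ID, zone name, rule and section number is a placeholder, not Bellevue’s actual code, and a production system would presumably retrieve from the real development code and GIS layers rather than from in-memory dictionaries.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Rule:
    text: str      # plain-language summary of the requirement
    citation: str  # section of the development code it comes from


# Hypothetical sample data: all IDs, zones, rules and sections are placeholders.
PARCEL_ZONES: Dict[str, str] = {"P-1001": "R-5", "P-2002": "NMU"}
ZONE_RULES: Dict[str, List[Rule]] = {
    "R-5": [
        Rule("Maximum building height is 30 feet.", "Sec. 1.10"),
        Rule("Minimum front setback is 20 feet.", "Sec. 1.20"),
    ],
    "NMU": [Rule("Ground-floor commercial use is required.", "Sec. 2.05")],
}


def draft_reply(parcel_id: str, question: str) -> str:
    """Build a citation-backed draft answer for staff to review and edit."""
    zone = PARCEL_ZONES.get(parcel_id)
    if zone is None:
        # No record: hand the inquiry to a person instead of guessing.
        return f"DRAFT: No zoning record found for parcel {parcel_id}; refer to staff."
    lines = [f"DRAFT reply re: {question!r}", f"Parcel {parcel_id} is zoned {zone}."]
    for rule in ZONE_RULES.get(zone, []):
        lines.append(f"- {rule.text} (see {rule.citation})")
    # Staff stay in control: nothing is sent until a person approves it.
    lines.append("[Pending staff review before sending]")
    return "\n".join(lines)


if __name__ == "__main__":
    print(draft_reply("P-1001", "How tall can I build?"))
```

Keeping each rule paired with its citation is what makes the draft checkable: a reviewer can open the cited section rather than trusting the summary.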

“We’re also starting to use the tool to analyze conversations between the AI assistants and staff for thematic insights about how our community interacts with different aspects of permitting,” Schneider said.

Ultimately, Rabah said, technology like this smart assistant will free staff from repetitive tasks, which he described as “document toil,” allowing them to focus on “judgment-driven work like safety, equity and community priorities.”

Bellevue also plans to extend the technology to the public so that residents, designers or contractors can also benefit from fast, “citation-based answers” to questions regarding projects or processes, Schneider said.

Initiatives like the Govstream.ai pilot stem from Bellevue’s Innovation Forum, which brought together members of the community, startups, students and others to brainstorm how to enhance city services and the overall quality of life — part of a broader strategy, Schneider said, to make innovation more open and actionable. The city will host a Civic Innovation Day Oct. 16 to highlight civic innovation in the greater Puget Sound area.

AI tools that cut down on repetitive tasks, search quickly through data or smooth out workflow gaps address exactly the kinds of areas where experts say artificial intelligence can bring value to organizations. The technology, they stress, should serve people.

“When I talk about artificial intelligence, I not only talk about computation. I talk about people, first and foremost,” Nathan McNeese, founding director of the Clemson University Center for Human-AI Interaction, Collaboration and Teaming, said during a recent webinar to discuss the new report, Strategies for Integrating AI into State and Local Government Decision Making: Rapid Expert Consultation, by the National Academies of Sciences, Engineering and Medicine.

“You need to understand who your people are before you can even have the idea of putting AI into an organization. Because AI’s going to fail in your organization if people are not your first consideration,” he said.

Starting small, with a pilot project in one city department limited to internal staff, is also advisable, said Suresh Venkatasubramanian, a computer science professor at Brown University, where he directs the Center for Technological Responsibility, Reimagination and Redesign. He urged tech leaders to embrace an ethos of experimentation and “sandboxing” to understand how AI systems work within a specific context.

“AI is not like buying a piece of software, you buy it once, and it’s done, because it serves a specific purpose,” Venkatasubramanian said during the webinar. “It’s one of those things where it’s an ongoing conversation amongst the folks using the system, the folks impacted by the system.”


AI tools accelerated battle management decisions during latest Air Force DASH wargame

This is part three of a three-part series examining how the Air Force is experimenting with artificial intelligence for its DAF Battle Network capability. It is based on several exclusive interviews with the 805th Combat Training Squadron, the Advanced Battle Management System Cross Functional Team, airmen and members of industry.

LAS VEGAS — A recent experiment held by the Air Force demonstrated that industry-developed artificial intelligence tools can not only shorten the time it takes battle managers to make decisions, but also give operators a more holistic picture of the battlespace.

The 805th Combat Training Squadron hosted its second Decision Advantage Sprint for Human-Machine Teaming (DASH) wargame in July, bringing Air Force personnel and industry software teams together at the squadron’s unclassified office in downtown Las Vegas, Nevada. DefenseScoop observed part of the two-week event, where vendors custom-coded AI-enabled microservices and tested how the technology could be applied to command-and-control operations.

“DASH 2 continued the Air Force’s campaign of learning to accelerate decision advantage for the joint force,” Col. John Ohlund, director of the Advanced Battle Management System (ABMS) Cross Functional Team, said in a statement. “While detailed results are still under review, the experiment advanced our understanding of human-machine teaming, match effectors, and collaborative battle management. The effort provided valuable insights that will guide future development.”

The DASH sprints are a new wargame series led by the 805th, also known as the Shadow Operations Center – Nellis (ShOC-N), and are designed to test emerging AI capabilities and concepts for battle management. Each experiment focuses on one subfunction of the Air Force’s Transformational Model for Decision Advantage, a methodology that breaks down the entire C2 process into 52 specific choices a warfighter makes during operations.

Teams from six companies — who asked to remain anonymous — as well as a group of Air Force software engineers participated in the DASH 2 wargame. Throughout the event, coders developed an AI microservice for the subfunction known as “match effectors,” which decides what weapons system is the best available to destroy an identified target.

“They’re going to run a generic red versus blue [scenario],” Lt. Col. Wesley Schultz, ShOC-N’s director of operations, said in an interview. “In general, offensive counter-air is going to go to the red side, drop a bomb [or] shoot down things and then get out of town.”

While the scenario seems simple, there are dozens of variables an air battle manager considers when deciding what weapon to use. These include tactical factors, such as the specifics of the target and the availability of blue forces, as well as environmental factors like weather and risk to civilians.

“As we get into the more complicated tasks, I am not saying, ‘Okay, I want a bomb on this airplane.’ It is, ‘I need this weapon on this airplane, plus another weapon on another airplane, plus an intelligence aircraft that is providing support, plus I need a data link.’ Finding all of those effects together takes time, and it is a very slow process,” Capt. Steven Mohan, chief of standards evaluations for the 729th Air Control Squadron and one of the participating battle managers, told DefenseScoop.

To lighten that cognitive load, software vendors at DASH 2 were tasked to develop an AI-enabled microservice that could ingest battlefield data and create a ranked list of available effectors for a battle manager to choose from.
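The vendors’ code was not released, so the following is only a hypothetical Python sketch of the simplest form “ingest data and return a ranked list with reasoning” could take: filter out unavailable options, score the rest with a weighted sum, and attach a short rationale to each entry. The option fields, the 0-to-1 scales and the weights are all assumptions made for illustration; a real service would derive its criteria from doctrine and operator feedback.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Option:
    name: str
    available: bool
    suitability: float  # assumed 0..1 fit for the tasking
    time_cost: float    # assumed 0..1 normalised time/distance penalty
    risk: float         # assumed 0..1 penalty, e.g. weather or civilian risk


# Hypothetical fixed weights, for illustration only.
WEIGHTS = {"suitability": 0.6, "time_cost": 0.25, "risk": 0.15}


def score(o: Option) -> float:
    """Weighted score: reward suitability, penalise time cost and risk."""
    return (WEIGHTS["suitability"] * o.suitability
            - WEIGHTS["time_cost"] * o.time_cost
            - WEIGHTS["risk"] * o.risk)


def rank(options: List[Option]) -> List[Tuple[str, float, str]]:
    """Return available options best-first, each with a short rationale."""
    ranked = sorted((o for o in options if o.available), key=score, reverse=True)
    return [
        (o.name, round(score(o), 3),
         f"fit={o.suitability:.2f}, time penalty={o.time_cost:.2f}, risk={o.risk:.2f}")
        for o in ranked
    ]


if __name__ == "__main__":
    demo = [
        Option("A", True, 0.9, 0.5, 0.2),
        Option("B", True, 0.6, 0.1, 0.1),
        Option("C", False, 1.0, 0.0, 0.0),  # unavailable, so never ranked
    ]
    for name, s, why in rank(demo):
        print(name, s, why)
```

Returning the component values alongside each score mirrors the point Carranza makes below: a ranking that shows its reasoning is easier for a battle manager to trust or overrule.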

Each company would routinely stress-test their code during 45-minute simulations called “vulnerabilities,” or VULS, where they could receive real-time feedback from air battle managers.

Senior Airman Besner Carranza of the 729th Air Control Squadron said that when a tasking order appeared in their system, the vendor’s AI would replicate that tasking and create a ranked list of matched effectors within the area of responsibility for them to choose from. Often, the software would also explain the reasoning behind its rankings, giving battle managers more confidence when making decisions.

Carranza noted that during the baseline run, the entire process took approximately 10 minutes for them to complete. But when the AI tools were integrated, it was much faster.

“For me as a battle manager, it’s difficult because we get so many taskings at once. And having that [user interface] helps us integrate, using both of our knowledge and brains to match-effect the best course of action,” he said.

Industry teams often sat next to air battle managers during the VULS, receiving critical feedback that was used to improve their software. Some vendors even ran through the simulation while being instructed by airmen to provide additional context to the problem, according to Mohan.

“From the first demo of, ‘This is what our product looks like,’ to the first test run with data, to what we’ll see with the final sims today, we’ve been seeing improvements that helped basically teach the vendors to speak the language so that it fits naturally in our workflow,” Mohan told DefenseScoop.

The experiment also highlighted areas where both the Air Force and industry could improve. For example, Mohan said the AI tools would at times generate inaccurate results or fail to recognize a specific message, a sign that the service could be better organized around the data it uses.

Members of industry also noted their struggles with integrating various sources into a common data layer.

“Each team addressed this challenge in different ways,” one industry team noted. “We took an approach that allowed us to test the capabilities on which we were conducting research; however, releasing the data artifacts during [request for proposals] solicitation would help the government get better solutions.”

Another key factor was that the simulations included weapons systems from the other services, such as Army Terminal High Altitude Area Defense (THAAD) batteries, Navy Arleigh Burke-class guided-missile destroyers (DDGs), space-based systems and cyber capabilities.

Multiple air battle managers at DASH told DefenseScoop that the inclusion of multi-domain capabilities was challenging, but opened their eyes to options outside of Air Force assets.

“If I need to investigate something and put sensors on it, my mind automatically goes for ground-based tracks like the RC-135 [Rivet Joint reconnaissance aircraft], but maybe a space asset will be much better because then I don’t have to put the Rivet Joint in harm’s way trying to get over there,” Staff Sgt. Jacob Mucheberger of the 128th Air Control Squadron said.

He added that participating in DASH showed him that, if the Air Force had to use assets from other services in a future conflict, he personally lacks the training to fully leverage those systems because he isn’t well-versed in their specific capabilities.

“Now I’m thinking about space assets or naval assets or cyber assets and things of that sort, and how to implement them,” Mucheberger said. “But it also makes me think this sort of program is really needed, because the whole idea of match effectors is generating effectors that you wouldn’t have otherwise thought of.”

The inclusion of multi-domain capabilities is integral to the Air Force’s plans for the DAF Battle Network, an integrated “system-of-systems” that will support the Pentagon’s Combined Joint All-Domain Command and Control (CJADC2) concept. The effort broadly seeks to connect sensors and weapons from across the military services and international partners under a single network, enabling rapid data transfer between warfighting systems.

“They have to work all-domain problems, right? And because these problems don’t respect the boundaries of our services, we have to have naval and air forces and cyber and space working together,” Col. Jonathan Zall, ABMS capability integration chief, said in an interview. “And so these battle managers have got to be able to come up with solutions that don’t just involve Air Force assets or airborne assets.”

Moving forward, the ShOC-N has one more DASH sprint planned for 2025 and expects to hold four wargames in 2026. Both the squadron and ABMS Cross Functional Team believe that the results from each event will help the Air Force’s program executive officer for command, control, communications and battle management (C3BM) develop future requirements for individual AI microservices specific to the subfunctions under the transformational model.

“We’re able to put that repository into requirements, and now the Air Force — really, I shouldn’t even say the Air Force, it’s agnostic of service — can go acquire exactly what they want, as opposed to a vendor coming along and saying, ‘Hey, I think I know what you want,’” ShOC-N Commander Lt. Col. Shawn Finney explained during an interview at Nellis Air Force Base in Las Vegas, where the 805th is headquartered. “We’re trying to flip that and say, ‘No, that’s exactly what I want. It fits exactly with the specific need that we need.’”

And while the 805th is still early in its experiments, officials said they’re already seeing proof that their vision for the DAF Battle Network could one day become a reality. Ohlund noted that future events could test multiple microservices together in a single DASH sprint, in line with the push by Maj. Gen. Luke Cropsey, PEO for C3BM, for “disposable software.”

“The way I interpret that is, we need to be able to experiment with one version of software, and if there’s another one or a better one that’s out there, we should be able to replace it. We should be able to plug and play within the government reference architecture,” Ohlund said.
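Ohlund’s “plug and play” idea can be illustrated, very loosely, with a common interface that every vendor’s microservice implements, so one version can be replaced by another without changing the code that calls it. The interface name, the registry and both vendor classes in this Python sketch are hypothetical, and their ranking logic is a placeholder; this is not the government reference architecture, whose details the article does not describe.

```python
from typing import Dict, List, Protocol


class RankingMicroservice(Protocol):
    """Hypothetical contract every vendor implementation must satisfy."""

    def rank(self, tasking: str, candidates: List[str]) -> List[str]: ...


class VendorA:
    def rank(self, tasking: str, candidates: List[str]) -> List[str]:
        return sorted(candidates)  # placeholder ranking logic


class VendorB:
    def rank(self, tasking: str, candidates: List[str]) -> List[str]:
        return sorted(candidates, reverse=True)  # a different placeholder


class Registry:
    """Holds whichever implementation is currently plugged in per subfunction."""

    def __init__(self) -> None:
        self._services: Dict[str, RankingMicroservice] = {}

    def plug(self, subfunction: str, service: RankingMicroservice) -> None:
        # Replaces any previously plugged-in version for this subfunction.
        self._services[subfunction] = service

    def call(self, subfunction: str, tasking: str, candidates: List[str]) -> List[str]:
        return self._services[subfunction].rank(tasking, candidates)


if __name__ == "__main__":
    reg = Registry()
    reg.plug("match_effectors", VendorA())
    print(reg.call("match_effectors", "t1", ["b", "a", "c"]))  # ['a', 'b', 'c']
    reg.plug("match_effectors", VendorB())  # swap vendors, caller unchanged
    print(reg.call("match_effectors", "t1", ["b", "a", "c"]))  # ['c', 'b', 'a']
```

The caller only depends on the interface, which is what makes a given implementation “disposable”: a better one can be plugged in without touching the rest of the system.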
