AI Research

Nebius Stock Has Made a Big Move. This Artificial Intelligence (AI) Stock Could Be Next.

Nebius shot up 50% in a day thanks to its Microsoft deal, but investors who missed its impressive spike have a solid alternative to buy right now.

Nebius Holdings (NBIS) stock took off on Sept. 9, rising almost 50% in a single day after it emerged that the cloud artificial intelligence (AI) infrastructure provider has landed a massive contract with Microsoft (MSFT) that’s going to supercharge its growth for years to come.

For a company that has clocked $250 million in revenue in the past four quarters, the $19.4 billion contract from Microsoft that will run until 2031 is definitely a big deal. The tech giant has given Nebius such a lucrative deal to gain access to the latter’s New Jersey data center, a dedicated AI facility powered by graphics processing units (GPUs) that Microsoft will use to handle AI workloads.

However, Nebius is not the only dedicated AI cloud infrastructure company out there. There is another company that’s been in fine form on the stock market this year, is growing at a solid pace, has a terrific backlog, and is cheaper than Nebius from a valuation perspective.

Let’s take a closer look at that name and why it will be a good idea to buy it right away.


Nebius’ surge has rubbed off positively on this cloud infrastructure provider

CoreWeave (CRWV) stock jumped more than 7% in the wake of the Nebius-Microsoft deal. It is easy to see why that was the case. Just like Nebius, CoreWeave also rents out its dedicated, GPU-powered data centers to customers looking to run AI and machine learning (ML) workloads.

The company points out that its AI infrastructure is purpose-built for AI, allowing its customers to reduce the time to market and improve efficiency and speed significantly. Not surprisingly, CoreWeave has witnessed remarkably solid demand for its AI infrastructure offerings, as is evident from the rapid growth in the company’s revenue and backlog.

CoreWeave’s top line jumped 207% in the second quarter of 2025 to $1.2 billion. But what’s more impressive is its backlog worth $30.1 billion, which increased by nearly $14 billion from the year-ago quarter. CoreWeave’s revenue backlog has doubled in the first half of 2025, thanks to the sizable contracts it has won from the likes of OpenAI and Google Cloud.
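The growth figures above imply a year-ago baseline that the article does not state directly. The short sketch below backs it out from the quoted numbers; the variable names are illustrative, and the backlog increase is treated as approximate because the article says "nearly $14 billion".

```python
def value_before_growth(current: float, growth_pct: float) -> float:
    """Return the starting value that grows by growth_pct percent to reach current."""
    return current / (1 + growth_pct / 100)


# Figures quoted in the article, in billions of dollars.
q2_2025_revenue_bn = 1.2
revenue_growth_pct = 207
backlog_bn = 30.1
backlog_increase_bn = 14.0  # "nearly $14 billion" in the article, so approximate

year_ago_revenue_bn = value_before_growth(q2_2025_revenue_bn, revenue_growth_pct)
year_ago_backlog_bn = backlog_bn - backlog_increase_bn

print(f"Implied year-ago quarterly revenue: ${year_ago_revenue_bn:.2f}B")
print(f"Implied year-ago backlog: roughly ${year_ago_backlog_bn:.1f}B")
```

On these inputs, the implied year-ago quarterly revenue is about $0.39 billion and the implied year-ago backlog is roughly $16 billion.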

OpenAI has awarded CoreWeave contracts totaling $15.9 billion this year. CoreWeave has won business from new hyperscale and enterprise customers as well. Looking ahead, it won’t be surprising to see CoreWeave’s revenue backlog getting fatter. That’s because major cloud computing providers are witnessing massive growth in their contractual obligations, and that’s why they are tapping the likes of Nebius and CoreWeave to get their hands on more AI data center capacity.

Microsoft, for instance, saw its contracted remaining performance obligations (RPO) jump by 35% year over year in the previous quarter to a whopping $368 billion. The company credited this massive backlog to the robust demand for its cloud services. This makes it clear why the tech giant decided to turn to Nebius to unlock more capacity so that it can fulfill the heavy AI computing demand that it is witnessing.

An important thing worth noting here is that Microsoft is CoreWeave’s largest customer, accounting for 62% of its top line in 2024. Meta Platforms and IBM are some of its other customers, and CoreWeave has also brought OpenAI on board this year. Given that the company sees its addressable market hitting $400 billion by 2028, investors can expect it to sustain its healthy growth levels in the long run, especially considering its focus on bringing more capacity online.

CoreWeave operated 33 AI data centers in North America and Europe at the end of the previous quarter with an active power capacity of 470 megawatts (MW). The company plans to end the year with 900 MW of active data center capacity. What’s more, its contracted data center capacity of 2.2 gigawatts (GW) indicates that it can substantially ramp up its capacity in the long run.

Contracted power refers to the data center capacity that CoreWeave can bring online by deploying compute, networking, and storage equipment, which will turn the contracted capacity into active capacity. What’s worth noting here is that CoreWeave’s active capacity was well above Nebius’ 220 MW at the end of the previous quarter. Additionally, its contracted capacity is much higher than that of Nebius, which expects to have 1 GW of contracted capacity by the end of next year.
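To put the capacity comparison in proportion, the ratios can be worked out from the figures quoted above. This is a rough sketch using only the article's numbers; the variable names are illustrative, and the Nebius contracted figure is a target for the end of next year rather than a current value.

```python
# Capacity figures quoted in the article, in megawatts (1 GW = 1,000 MW).
coreweave_active_mw = 470
coreweave_year_end_target_mw = 900
coreweave_contracted_mw = 2200
nebius_active_mw = 220
nebius_contracted_target_mw = 1000  # expected by the end of next year

# How much larger CoreWeave's active capacity is than Nebius'.
active_ratio = coreweave_active_mw / nebius_active_mw
# Growth in active capacity needed to reach the year-end plan.
ramp_ratio = coreweave_year_end_target_mw / coreweave_active_mw
# Headroom between contracted and currently active capacity.
headroom_ratio = coreweave_contracted_mw / coreweave_active_mw
# CoreWeave's contracted capacity relative to Nebius' target.
contracted_ratio = coreweave_contracted_mw / nebius_contracted_target_mw

print(f"Active capacity vs. Nebius: {active_ratio:.1f}x")
print(f"Ramp needed to hit year-end plan: {ramp_ratio:.1f}x")
print(f"Contracted vs. active capacity: {headroom_ratio:.1f}x")
print(f"Contracted capacity vs. Nebius target: {contracted_ratio:.1f}x")
```

In other words, CoreWeave's active capacity is a little over twice that of Nebius, and its contracted capacity is more than four times what it currently has online.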

So, CoreWeave is ahead of Nebius as far as capacity is concerned. Throw in a more attractive valuation, and it’s easy to see why CoreWeave has the potential to fly higher in the future.

CoreWeave’s solid growth potential can send the stock flying

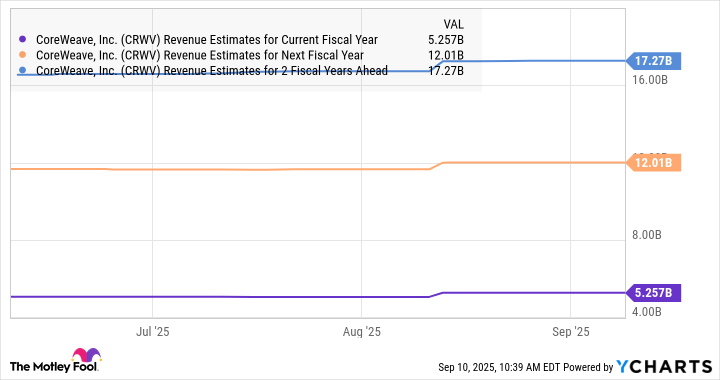

Consensus estimates are projecting red-hot growth in CoreWeave’s revenue.

CRWV Revenue Estimates for Current Fiscal Year data by YCharts

Its outstanding backlog and the size of its total addressable market can indeed allow it to more than triple its top line in the space of just two years, as seen in the chart above. This makes CoreWeave an attractive buy since it is trading at less than 14 times sales, a massive discount to Nebius’ price-to-sales ratio of 91.

Even if CoreWeave trades at 5 times sales (in line with the Nasdaq Composite index’s multiple) after three years and achieves $17 billion in revenue, its market cap could jump to $85 billion. That points toward a 73% jump from current levels, though stronger gains cannot be ruled out.
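The arithmetic behind that scenario can be checked in a few lines. The sketch below uses only the article's inputs (a 5x sales multiple, $17 billion in revenue, and a 73% gain); the function names are illustrative, and the current market cap is inferred from the stated upside rather than quoted in the article.

```python
def implied_market_cap(revenue_bn: float, price_to_sales: float) -> float:
    """Market cap implied by a revenue figure and a price-to-sales multiple."""
    return revenue_bn * price_to_sales


def implied_starting_cap(target_cap_bn: float, upside_pct: float) -> float:
    """Starting market cap that would rise by upside_pct percent to reach the target."""
    return target_cap_bn / (1 + upside_pct / 100)


target_cap_bn = implied_market_cap(revenue_bn=17.0, price_to_sales=5.0)
current_cap_bn = implied_starting_cap(target_cap_bn, upside_pct=73.0)

print(f"Target market cap: ${target_cap_bn:.0f}B")
print(f"Implied current market cap: ${current_cap_bn:.1f}B")
```

The $85 billion target follows directly from 5 times $17 billion, and a 73% gain to that level implies a current market cap of roughly $49 billion.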

Harsh Chauhan has no position in any of the stocks mentioned. The Motley Fool has positions in and recommends International Business Machines, Meta Platforms, and Microsoft. The Motley Fool recommends Nebius Group and recommends the following options: long January 2026 $395 calls on Microsoft and short January 2026 $405 calls on Microsoft. The Motley Fool has a disclosure policy.


University Spinout TransHumanity secures £400k

TransHumanity Ltd., a spinout from Loughborough University, has secured approximately £400,000 in pre-seed investment. The round was led by SFC Capital, the UK’s most active seed-stage investor, with additional investment from Silicon Valley-based Plug and Play.

TransHumanity’s vision is to empower faster, smarter human decisions by transforming data into accessible intelligence using large language model-based agentic AI.

Agentic AI refers to artificial intelligence systems that collaborate with people to reach specific goals, understanding and responding in plain English. These systems use AI “agents” — models that can gather information, make suggestions, and carry out tasks in real time — helping people solve problems more quickly and effectively.

TransHumanity’s first product, AptIq, is designed to help transport authorities quickly analyse transport data and models, turning days of analysis into seconds.

By simply asking questions in plain English, users can gain instant insights to support key initiatives like congestion reduction, road safety, creation of business cases and net-zero targets.

Dr Haitao He, Co-founder and Director of TransHumanity, said: “I am proud to see my rigorous research translated into trusted real-world AI innovation for the transport sector. With this investment, we can now realise my Future Leaders Fellowship vision, scaling a technology that empowers authorities across the UK to deliver integrated, net-zero transport.”

Developed from rigorous research by Dr Haitao He, a UKRI Future Leaders Fellow in Transport AI at Loughborough University, AptIq, previously known as TraffEase, has already garnered significant recognition.

The technology was named a Top 10 finalist for the 2024 Manchester Prize for AI innovation and was recently highlighted as one of the Top 40 UK tech start-ups at London Tech Week by the UK Department for Business and Trade.

Adam Beveridge, Investment Principal at SFC Capital, said: “We are excited to back TransHumanity. The combination of cutting-edge research, a proven founding team, clear market demand, and positive societal impact makes this exactly the kind of high-growth venture we are committed to supporting.”

AptIq is currently in a test deployment with Nottingham City Council and Transport for Greater Manchester, with plans to expand to other city, regional, and national authorities across the UK within the next 12 months.

With a product roadmap that includes diverse data sources, advanced analytics and giving the user full control over the AI tool when required, interest from the transport sector is already high.

Professor Nick Jennings, Vice-Chancellor and President of Loughborough University, noted: “I am delighted to see TransHumanity fast-tracked from lab to investment-ready spinout. This journey was accelerated by TransHumanity’s selection as a finalist in the prestigious Manchester Prize and shows what’s possible when the University’s ambition aligns with national innovation policy.”


Legal-Ready AI: 7 Tips for Engineers Who Don’t Want to Be Caught Flat-Footed

One oversimplified way I have explained wisdom in the past is to say, “We don’t know what we don’t know until we know it.” This absolutely applies to the fast-moving AI space, where unknowingly introducing legal and compliance risk through an organization’s use of AI is a top concern among IT leaders.

We’re now building systems that learn and evolve on their own, and that raises new questions along with new kinds of risk affecting contracts, compliance, and brand trust.

At Broadcom, we’ve adopted what I’d call a thoughtful ‘move smart and then fast’ approach. Every AI use case requires sign-off from both our legal and information security teams. Some folks may complain, saying it slows them down. But if you’re moving fast with AI and putting sensitive data at risk, you’re inviting trouble unless you also move smart.

Here are seven things I’ve learned about collaborating with legal teams on AI projects.

1. Partner with Legal Early On

Don’t wait until the AI service is built to bring legal in. There’s always the risk that choices you make about data, architecture, and system behavior can create regulatory headaches or break contracts later on.

Besides, legal doesn’t need every answer on day one. What they do need is visibility into the gray areas. What data are you using and producing? How does the model make decisions? Could those decisions shift over time? Walk them through what you’re building and flag the parts that still need figuring out.

2. Document Your Decisions as You Go

AI projects move fast, with teams making dozens of early decisions on everything from data sources to training logic. So it’s only natural that a few months later, no one remembers why those choices were made. Then someone from compliance shows up with questions about those choices, and you’ve got nothing to point to.

To avoid that situation, keep a simple log as you work. Then, should a subsequent audit or inquiry occur, you’ll have something solid to help answer any questions.
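A decision log does not need special tooling. The sketch below shows one possible shape, assuming an append-only list of timestamped entries serialized as JSON Lines; the class names, field names, and the sample entry are all hypothetical, not a format the author prescribes.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class Decision:
    """One recorded project decision (field names are illustrative)."""
    summary: str
    rationale: str
    owner: str
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class DecisionLog:
    """Append-only, in-memory log that can be dumped as JSON Lines."""

    def __init__(self) -> None:
        self.entries: list[Decision] = []

    def record(self, summary: str, rationale: str, owner: str) -> Decision:
        decision = Decision(summary=summary, rationale=rationale, owner=owner)
        self.entries.append(decision)
        return decision

    def to_jsonl(self) -> str:
        # One JSON object per line, oldest entry first.
        return "\n".join(json.dumps(asdict(entry)) for entry in self.entries)


log = DecisionLog()
log.record(
    summary="Exclude support tickets from the training set",
    rationale="Tickets may contain customer private data",
    owner="ml-platform-team",
)
print(log.to_jsonl())
```

Writing the output of `to_jsonl()` to a file kept alongside the project code gives compliance reviewers a dated record of what was decided and why.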

3. Build Systems You Can Explain

Legal teams need to understand your system so they can explain it to regulators, procurement officers, or internal risk reviewers. If they can’t, there’s the risk that your project could stall or even fail after it ships.

I’ve seen teams consume SaaS-based AI services without realizing the provider could swap out a backend AI model without their knowledge. If that leads to changes in the system’s behavior behind the scenes, it could redirect your data in ways you didn’t intend. That’s one reason why you’ve got to know your AI supply chain, top to bottom. Ensure that services you build or consume have end-to-end auditability of the AI software supply chain. Legal can’t defend a system if they don’t understand how it works.
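One lightweight guard against a silent backend swap is to pin the model identifier you validated and compare it with whatever the service reports on each call. This is only a sketch: it assumes the provider returns a model identifier in its response metadata under a key such as `model`, which varies by vendor, and every name below is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelCheck:
    """Result of comparing the pinned model against the reported one."""
    expected: str
    reported: str

    @property
    def changed(self) -> bool:
        return self.expected != self.reported


def check_backend_model(
    response_metadata: dict, pinned_model: str, key: str = "model"
) -> ModelCheck:
    """Compare the model named in response metadata with the pinned identifier.

    A missing key is reported as "unknown", which also counts as a change,
    since the backend can no longer be confirmed.
    """
    reported = str(response_metadata.get(key, "unknown"))
    return ModelCheck(expected=pinned_model, reported=reported)


# Hypothetical metadata, standing in for a real provider response.
result = check_backend_model({"model": "vendor-model-v2"}, "vendor-model-v1")
if result.changed:
    print(f"Backend model changed: {result.expected} -> {result.reported}")
```

A check like this only detects swaps the provider discloses in its metadata, so it complements contractual notification terms rather than replacing them.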

4. Watch Out for Shadow AI

Any engineer can subscribe to an AI service and accept the provider’s terms without knowing they don’t have the authority to do that on behalf of the company.

That exposes the organization to major risk. An engineer might accidentally agree to data-sharing terms that violate regulatory restrictions or expose sensitive customer data to a third party.

And it’s not just deliberate use anymore. Run a search in Google and you’re already getting AI output. It’s everywhere. The best way to avoid this is by building a culture where employees are aware of the legal boundaries. You can give teams a safe place to experiment, but at the same time, make sure you know what tools they’re using and what data they’re touching.
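Knowing which tools are in use and what data they touch can start as a simple registry that teams check before sending anything to a service. The sketch below assumes a hand-maintained mapping from tool names to the data classifications that legal and security have approved; the tool names and classification labels are invented for illustration.

```python
# Hypothetical registry: tool name -> data classifications approved for that tool.
APPROVED_TOOLS: dict[str, set[str]] = {
    "internal-sandbox-llm": {"public", "internal"},
    "vendor-chat-assistant": {"public"},
}


def is_use_permitted(tool: str, data_class: str, registry=None) -> bool:
    """Return True only if the tool is registered and approved for this data class."""
    registry = APPROVED_TOOLS if registry is None else registry
    # Unregistered tools fall through to an empty set, so they are denied by default.
    return data_class in registry.get(tool, set())


print(is_use_permitted("internal-sandbox-llm", "internal"))
print(is_use_permitted("vendor-chat-assistant", "internal"))
print(is_use_permitted("unreviewed-browser-plugin", "public"))
```

Denying unregistered tools by default means a new subscription has to pass through review before it can be used with company data.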

5. Help Legal Navigate Contract Language

AI systems get tangled in contract language; there are ownership rights, retraining rules, model drift, and more. Most engineers aren’t trained to spot those issues, but we’re the ones who understand how the systems behave.

That’s another reason why you’ve got to know your AI supply chain, top to bottom. In this case, legal needs our help in reviewing vendor or customer agreements to put the contractual language into the appropriate technical context. What happens when the model changes? How are sensitive data sets safeguarded from being indexed or accessed via AI agents such as those that use Model Context Protocol (MCP)? We can translate the technical behavior into simple English, and that goes a long way toward helping the lawyers write better contracts.

6. Design with Auditability in Mind

AI is developing rapidly, with legal frameworks, regulatory requirements, and customer expectations evolving to keep pace. You need to be prepared for what might come next.

Can you explain where your training data came from? Can you show how the model was tested for bias? Can you justify how it works? If someone from a regulatory body walked in tomorrow, would you be ready?

Design with auditability in mind. Especially when AI agents are chained together, you need to be able to prove that identity and access controls are enforced end-to-end.
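One way to make a chain of agent actions provable after the fact is an audit trail in which each entry records who acted, on whose behalf, and whether access was allowed, and also carries a hash of the previous entry. The hash chaining is an assumption added here for tamper evidence, not something the article prescribes, and the agent and principal names are hypothetical.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the event body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_audit_event(
    trail: list, agent: str, principal: str, action: str, allowed: bool
) -> dict:
    """Append an event that links to the previous event's hash."""
    body = {
        "agent": agent,          # which agent acted
        "principal": principal,  # the identity it acted on behalf of
        "action": action,
        "allowed": allowed,      # outcome of the access-control check
        "prev_hash": trail[-1]["hash"] if trail else "",
    }
    event = {**body, "hash": _digest(body)}
    trail.append(event)
    return event


def verify_trail(trail: list) -> bool:
    """Recompute every hash and check that each event links to its predecessor."""
    prev_hash = ""
    for event in trail:
        body = {k: v for k, v in event.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != event["hash"]:
            return False
        prev_hash = event["hash"]
    return True


trail: list = []
append_audit_event(trail, "retrieval-agent", "alice", "read:tickets", True)
append_audit_event(trail, "summary-agent", "alice", "read:billing", False)
print(verify_trail(trail))
```

Because every entry depends on the one before it, editing or removing an earlier event causes `verify_trail` to fail, which gives reviewers some assurance that the recorded chain is complete.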

7. Handle Customer Data with Care

We don’t get to make decisions on behalf of our customers about how their data gets used. It’s their data. And when it’s private, it shouldn’t be fed to a model. Period.

You’ve got to be disciplined about what data gets ingested. If your AI tool indexes everything by default, that can get messy fast. Are you touching private logs or passing anything to a hosted model without realizing it? Support teams might need access to diagnostic logs, but that doesn’t mean third-party models should touch them. Tools are rapidly emerging that can generate comparable synthetic data free of any private customer data, which could help with support use cases, for example. But these tools and techniques should be fully vetted with your legal and CISO organizations before you use them.
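As one illustration of that discipline, obvious identifiers can be scrubbed from log lines before anything leaves your boundary. The sketch below is deliberately narrow: it assumes email addresses and IPv4 addresses are the only identifiers of interest, uses simple regular expressions that will miss other kinds of private data, and is no substitute for a review by legal and security.

```python
import re

# Simple patterns for illustration only; real logs contain many other identifiers.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def redact(text: str) -> str:
    """Replace email addresses and IPv4 addresses with fixed placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return IPV4_RE.sub("[IP]", text)


line = "user alice@example.com from 10.0.0.5 failed login"
print(redact(line))
# prints: user [EMAIL] from [IP] failed login
```

Pattern-based redaction reduces accidental exposure, but it does not make private data safe to share, so the default should remain keeping such logs away from third-party models.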

The Reality

The engineering ethos is to move fast. But since safety and trust are on the line, you need to move smart, which means it’s okay if things take a little longer. The extra steps are worth it when they help protect your customers and your company.

Nobody has this all figured out. So ask questions of people who’ve handled this kind of work before. The goal isn’t perfection; it’s to make smart, careful progress. For enterprises, the AI race isn’t a question of “Who’s best?” but rather “Who’s leveraging AI safely to drive the best business outcomes?”


Co-Inventors of Random Contrast Learning Rejoin Lumina to Accelerate Research and Development

As Random Contrast Learning™ enters a new chapter of growth and adoption, Lumina AI announces the return of co-inventors, Ben and Sam Martin, to lead groundbreaking research and unlock new frontiers in machine learning.

TAMPA, Fla., Sept. 16, 2025 /PRNewswire/ — Lumina AI is proud to announce that Ben Martin and Sam Martin, co-inventors of RCL with Dr. Morten Middelfart, are rejoining the company as AI Research Scientists to support its next phase of growth.

The brothers bring distinct but complementary perspectives to RCL’s continued development. Ben, whose academic background is in philosophy and Husserl’s phenomenology, brings a foundational lens to RCL, supporting ongoing research into the algorithm’s theoretical structure and how the development of machine learning can draw upon models of human consciousness. Sam, whose technical background is in applied machine learning and algorithm performance, will drive research on algorithmic scalability and comparative performance against state-of-the-art machine learning methods.

“In machine learning, simplicity scales,” said Dr. Morten Middelfart, Chief Data Scientist of Lumina AI. “Ben and Sam understood that from day one, and their return marks a renewed focus on delivering clear, fast, and reliable models that work without unnecessary complexity.”

The Martins will contribute to Lumina’s expanding research footprint, including initiatives around hybrid model architectures, alternative learning systems, and long-term theoretical implications of machine intelligence. Their work will guide both internal development and external co-innovation partnerships.

“Welcoming Ben and Sam back to Lumina is both personally meaningful and strategically aligned with our mission,” said Allan Martin, CEO of Lumina AI. “As two of the three original minds behind RCL, their vision has shaped our algorithm from inception. Their return ensures that the future of RCL will proceed with both conceptual rigor and innovation.”

About Lumina AI

Lumina AI is redefining machine learning with Random Contrast Learning™ (RCL), a novel algorithm that achieves state-of-the-art accuracy while training rapidly on standard CPU hardware. By eliminating the need for GPUs, Lumina makes advanced AI more accessible, cost-effective, and sustainable.

Media Contact

[email protected] | +1 (813) 443 0745

SOURCE Lumina AI
