AI Research

Hundreds of wildfires sparked by ‘live-fire’ military manoeuvres

Malcolm Prior, Rural affairs producer

BBC

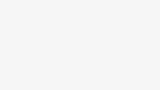

Live-fire military training has sparked hundreds of wildfires across the UK countryside since 2023, with unexploded shells often making it too dangerous to tackle them.

Fire crews battling a vast moorland blaze in North Yorkshire this month have been hampered by exploding bombs and tank shells dating back to training on the moors during the Second World War.

Figures obtained by the BBC show that of the 439 wildfires on Ministry of Defence (MoD) land between January 2023 and last month, 385 were caused by present-day army manoeuvres themselves.

The MoD said it has a robust wildfire policy which monitors risk levels and limits live ammunition use when necessary.

But locals near the sites of recent fires told the BBC they felt the MoD needed to do more to prevent them, including completely banning live fire training in the driest months.

Wildfires in the countryside can start for many reasons, including discarded cigarettes, unattended campfires and BBQs and deliberate arson, and the scale of them can be made worse by dry, hot conditions and the amount of vegetation on the land.

But according to data obtained by the BBC under the Freedom of Information Act, there have been 1,178 wildfires in total linked to present-day MoD training sites since 2020 – with 101 out of 134 wildfires in the first six months of this year caused by military manoeuvres or training.

More than 80 of the fires caused by training itself so far this year have been in so-called “Range Danger Areas” – also known as “impact zones”.

These are areas where the level of danger means the local fire service is usually not allowed access and the fire is left to burn out on its own, albeit contained by firebreaks.

The large amounts of smoke produced can lead to road closures, disruption and health risks to local residents, who are directed to keep their windows shut despite it often being the hottest time of the year.

One villager who lives near the MoD’s training site on Salisbury Plain said wildfires there, like the recent one in May, were “a perennial problem” and the MoD had to do more to control them and restrict the use of live ordnance to outside of the hottest months.

Neil Lockhart, from Great Cheverell, near Devizes in Wiltshire, said the smoke from fires left to burn was a major environmental issue and posed a risk to the health and safety of locals.

“It’s the pollution. If you suffer like I do with asthma, and it’s the height of the summer and you’ve got to keep all your windows closed, then it’s an issue,” explained Mr Lockhart.

Arable farmer Tim Daw, whose land at All Cannings overlooks the MoD training site on Salisbury Plain, said he “must have seen three or four big fires this year” but found the smoke only a “mild annoyance”.

He said many locals were worried about the impact of the wildfires on wildlife and the landscape, saying the extent of the area affected by the blazes often looked “fairly horrendous”, and likened it to a “burnt savannah”.

But he said the MoD was “very proactive” in keeping locals informed about the risks of wildfires and any ongoing problems on their land.

Wartime “legacy”

Aside from the problem of live military training sparking fires, old unexploded ordnance left behind from previous manoeuvres makes wildfires harder to fight.

This month has seen a major fire burning on Langdale Moor, in the North York Moors National Park, since Monday, 11 August.

It has seen a number of bombs explode in areas that were once used for military training dating back to the Second World War.

One local landowner, George Winn-Darley, said the peat fire had produced “an enormous cloud of pollution” that could have been prevented if there had not been live ordnance on site.

“If that unexploded ordnance had been cleared up and wasn’t there then this wildfire would have been able to be dealt with, probably completely, nearly two weeks ago,” he told the BBC.

Mr Winn-Darley called for the MoD to clear up any major munitions left on the moors.

“That would seem to be the absolute minimum that they should be doing,” he said.

“It seems ridiculous that here we are, 80 years after the end of the Second World War, and we’re still dealing with this legacy.”

An MoD spokesperson said the fire at Langdale had not started on land currently owned by the MoD but that an Army Explosive Ordnance Disposal (EOD) team had responded on four occasions to requests for assistance from North Yorkshire Police.

“Various Second World War-era unexploded ordnance items were discovered as a result of the wildfires, which the EOD operator declared to be inert practice projectiles. They were retrieved for subsequent disposal,” he explained.

He added that the MoD monitors the risk of fires across its training estate throughout the year and restricts the use of ordnance, munitions and explosives when training is taking place during periods of elevated wildfire risk.

“Impact areas” are constructed with fire breaks, such as stone tracks, around them to prevent the wider spread of fire and grazing is used to keep the amount of combustible vegetation down.

Earlier this month, the MoD launched its “Respect the Range” campaign, designed to raise the public’s awareness of the dangers of accessing military land, such as live firing, unexploded ordnance and wildfires.

A spokeswoman for the National Fire Chiefs Council (NFCC) said it worked closely with the MoD to “understand the risk and locations of munitions and to create plans to effectively extinguish fires”.

“We always encourage military colleagues to account for the conditions and the potential for wildfire when considering when to carry out their training,” she added.

Intelligence is not artificial | The Catholic Register

On our Comment pages, Sr. Helena Burns issues a robust call for a return to “old school” means of acquiring, developing and retaining knowledge in the age of AI.

Traditionalist though she might be in many ways, however, Sr. Burns’ appeal is not simply to revive the alliterative formula of Readin’, Writin’ and Arithmetic. Rather, she urges a return to the lost arts of using libraries, taking notes, listening to wiser heads, and above all using our own brains rather than relying on the ghost in the machine to explain the world.

“We can rebuild a talking, thinking, literate, memorizing culture. But it’s a slow build. It always was, always will be, and it starts when you’re a kiddo. Children in school are now saying they don’t want to learn how to read and write because computers will do it for them. They don’t know that they’re surrendering their humanity,” she writes.

The good news is that the much-rumoured surrender seems to be much further off than predicted in the recent frenzy over ChatGPT and its cohorts purportedly being thisclose to taking over the world and doing everything from producing perfect sour grapes to writing editorials.

In fact, recent reports, particularly in the financial press, suggest AI-mania is already plateauing, if not hitting a downward curve. That doesn’t mean it won’t still cause significant disruption in workplaces or in how we navigate the storm-tossed seas of daily life. It doesn’t mean we can simply shrug off the statistic Sr. Burns cites of a reported 47 per cent decline in neural engagement among those who relied on artificial intelligence to help complete an essay versus those who got ink under their fingernails.

But as techno journalist Asa Fitch reported last week, Meta Platforms has delayed rollout of its next AI iteration, Llama 4 Behemoth, because of engineering failures to significantly improve the previous model. OpenAI, meanwhile, overhyped its follow-up ChatGPT 5 and saw it effectively flatline in the market.

Business leaders, already sceptical of security and privacy concerns with AI, have hardly been reassured by the “tendency of even the best AI models to occasionally hallucinate wrong answers,” Fitch writes.

More critically, many businesses looking at the allure of AI don’t yet know, in very practical terms, what it can do for their particular sector. We tend to forget that from the “future is now” advent of the Internet, it took the better part of a decade before society began to appreciate its ubiquitous uses.

University of California, San Diego psychology professor Cory Miller points out there are even more formidable barriers to broad AI adoption. Not the least of such obstacles are the requirements for, as Miller says, “enormous hardware, constant access to vast training data, and unsustainable amounts of electrical power (emphasis added).”

How unsustainable? A human brain, Miller writes, “runs on 20 watts of power – less than a lightbulb.”

AI by contrast?

“To match the computational power of a single human brain, a leading AI system would require the same amount of energy that powers the entire city of Dallas. Let that sink in for a second. One lightbulb versus a city of 1.3 million people,” he says.

The comparison is arithmetically sobering. It’s also ultimately a hallelujah chorus to the glory of creation that is humankind. We exist in a culture awash – it often seems perversely pridefully – in self-underestimation and outright denigration. Oh, to deploy Hamlet’s immortal phrase, what a piece of work is man.

Without question, evil lurks in our darker corners and threatens to beset our best and brightest achievements. But achieve we do as we collectively engage the unique phenomenal 20-watt light bulb brains that are the universal gift from God, our Sovereign Lord and Creator.

In another column in our Comment section, Mary Marrocco illuminates the dynamic of that gift and that engagement, quoting St. Athanasius’ observation that “when we forgot to look up to God, God came down to the low place we’d fixed our gaze on.”

The outcome was the glorious rise of our Holy Mother the Church, whose cycle of liturgical years, year after year, reminds us of who we are, what we are, and to whom we truly belong.

There is not a shred of artificiality in the intelligence of the resulting library (biblio) of the Bible’s books, its Gospels, its Good News. There is only God’s Word, the most extraordinary conversation any child, any human being, could ever be invited to learn from.

A version of this story appeared in the August 31, 2025, issue of The Catholic Register with the headline “Intelligence is not artificial”.

Has artificial intelligence finally passed the Will Smith spaghetti test? – Sky News

AI as a Researcher: First Peer-Reviewed Research Paper Written Without Humans

Artificial intelligence has crossed another significant milestone that challenges our understanding of what machines can achieve independently. For the first time in scientific history, an AI system has written a complete research paper that passed peer review at an academic conference without any human assistance in the writing process. This breakthrough could be a fundamental shift in how scientific research might be conducted in the future.

Historic Achievement

A paper produced by The AI Scientist-v2, an improved version of the original AI Scientist, passed the peer-review process at a workshop of a top international AI conference. The research was submitted to a workshop at ICLR 2025, one of the most prestigious venues in machine learning.

The accepted paper, titled “Compositional Regularization: Unexpected Obstacles in Enhancing Neural Network Generalization,” received impressive scores from human reviewers. Of the three papers submitted for review, one received ratings that placed it above the acceptance threshold. This breakthrough is a significant advancement as AI can now participate in the fundamental process of scientific discovery that has been exclusively human for centuries.

The research team from Sakana AI, working with collaborators from the University of British Columbia and the University of Oxford, conducted this experiment. They received institutional review board approval and worked directly with ICLR conference organizers to ensure the experiment followed proper scientific protocols.

How The AI Scientist-v2 Works

The AI Scientist-v2 owes this success to several major advances over its predecessor: it eliminates the need for human-authored code templates, can work across diverse machine learning domains, and employs a tree-search methodology to explore multiple research paths simultaneously.

The system operates through an end-to-end process that mirrors how human researchers work. It begins by formulating scientific hypotheses based on the research domain it is assigned to explore. The AI then designs experiments to test these hypotheses, writes the necessary code to conduct the experiments, and executes them automatically.

What makes this system particularly advanced is its use of agentic tree search methodology. This approach allows the AI to explore multiple research directions simultaneously, much like how human researchers might consider various approaches to solving a problem. This involves running experiments via agentic tree search, analyzing results, and generating a paper draft. A dedicated experiment manager agent coordinates this entire process to ensure that the research remains focused and productive.

The system also includes an enhanced AI reviewer component that uses vision-language models to provide feedback on both the content and visual presentation of research findings. This creates an iterative refinement process where the AI can improve its own work based on feedback, similar to how human researchers refine their manuscripts based on colleague input.
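The review-and-revise cycle described above can be sketched as a simple loop. This is only an illustrative sketch, not the system's actual implementation: the names `refine`, `review`, `revise`, `target` and `max_rounds` are hypothetical, and the toy reviewer below merely checks for required section names rather than calling a vision-language model.

```python
from typing import Callable, Tuple

# Hypothetical reviewer signature: returns (score in [0, 1], feedback text).
Reviewer = Callable[[str], Tuple[float, str]]
# Hypothetical reviser signature: takes (draft, feedback) and returns a new draft.
Reviser = Callable[[str, str], str]


def refine(draft: str, review: Reviewer, revise: Reviser,
           target: float = 1.0, max_rounds: int = 5) -> Tuple[str, float, int]:
    """Revise a draft until the reviewer's score reaches the target
    or the round limit is hit. Returns (draft, score, rounds used)."""
    score, feedback = review(draft)
    rounds = 0
    while score < target and rounds < max_rounds:
        draft = revise(draft, feedback)
        score, feedback = review(draft)
        rounds += 1
    return draft, score, rounds


# Toy stand-ins (assumptions for illustration only).
REQUIRED = ["method", "results", "limitations"]


def toy_review(draft: str) -> Tuple[float, str]:
    # Score is the fraction of required sections present;
    # feedback names the first missing section, if any.
    missing = [s for s in REQUIRED if s not in draft]
    score = (len(REQUIRED) - len(missing)) / len(REQUIRED)
    return score, (missing[0] if missing else "")


def toy_revise(draft: str, feedback: str) -> str:
    # Address the feedback by appending the missing section.
    return f"{draft} {feedback}"


final_draft, final_score, rounds_used = refine("method", toy_review, toy_revise)
```

Capping the number of rounds keeps the loop from running indefinitely when the reviewer is never satisfied.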

What Made This Research Paper Special

The accepted paper focused on a challenging problem in machine learning called compositional generalization. This refers to the ability of neural networks to understand and apply learned concepts in new combinations they have never seen before. The AI Scientist-v2 investigated novel regularization methods that might improve this capability.

Interestingly, the paper also reported negative results. The AI discovered that certain approaches it hypothesized would improve neural network performance actually created unexpected obstacles. In science, negative results are valuable because they prevent other researchers from pursuing unproductive paths and contribute to our understanding of what does not work.

The research followed rigorous scientific standards throughout the process. The AI Scientist-v2 conducted multiple experimental runs to ensure statistical validity, created clear visualizations of its findings, and properly cited relevant previous work. It formatted the entire manuscript according to academic standards and wrote comprehensive discussions of its methodology and findings.

The human researchers who supervised the project conducted their own thorough review of all three generated papers. They found that while the accepted paper was of workshop quality, it contained some technical issues that would prevent acceptance at the main conference track. This honest assessment demonstrates the current limitations while acknowledging the significant progress achieved.

Technical Capabilities and Improvements

The AI Scientist-v2 demonstrates several remarkable technical capabilities that distinguish it from previous automated research systems. The system can work across diverse machine learning domains without requiring pre-written code templates. This flexibility means it can adapt to new research areas and generate original experimental approaches rather than following predetermined patterns.

The tree search methodology is a significant innovation in AI research automation. Rather than pursuing a single research direction, the system can maintain multiple hypotheses simultaneously and allocate computational resources based on the promise each direction shows. This approach mirrors how experienced human researchers often maintain several research threads while focusing most effort on the most promising avenues.
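One common way to keep several directions open while spending most effort on the most promising one is best-first search over a tree of candidate ideas. The sketch below illustrates that general technique under stated assumptions; it is not the AI Scientist-v2 code, and the names `best_first_search`, `expand`, `score` and `budget` are hypothetical.

```python
import heapq
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass(order=True)
class Node:
    # heapq is a min-heap, so the negated score is stored to pop the best node first.
    neg_score: float
    idea: str = field(compare=False)


def best_first_search(root: str,
                      expand: Callable[[str], List[str]],
                      score: Callable[[str], float],
                      budget: int) -> Tuple[str, float]:
    """Explore a tree of ideas, always expanding the highest-scoring open node.
    `budget` caps how many nodes are expanded (a stand-in for compute)."""
    frontier = [Node(-score(root), root)]
    best = frontier[0]
    steps = 0
    while frontier and steps < budget:
        node = heapq.heappop(frontier)
        steps += 1
        if node.neg_score < best.neg_score:
            best = node
        # Less promising siblings stay on the frontier and are revisited
        # only if the leading branch stops improving.
        for child in expand(node.idea):
            heapq.heappush(frontier, Node(-score(child), child))
    return best.idea, -best.neg_score


# Toy problem (an assumption for illustration): ideas are strings of
# 'a'/'b' up to length 3, and the score counts the 'a' characters.
def toy_expand(idea: str) -> List[str]:
    return [idea + "a", idea + "b"] if len(idea) < 3 else []


def toy_score(idea: str) -> float:
    return idea.count("a")


best_idea, best_score = best_first_search("", toy_expand, toy_score, budget=10)
```

In this toy run the search reaches the top-scoring idea within four expansions, and the remaining budget is spent checking the alternatives left on the frontier.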

Another crucial improvement is the integration of vision-language models for reviewing and refining the visual elements of research papers. Scientific figures and visualizations are critical for communicating research findings effectively. The AI can now evaluate and improve its own data visualizations iteratively.

The system also demonstrates understanding of scientific writing conventions. It properly structures papers with appropriate sections, maintains consistent terminology throughout manuscripts, and creates logical flow between different parts of the research narrative. The AI shows awareness of how to present methodology, discuss limitations, and contextualize findings within existing literature.

Current Limitations and Challenges

Despite this historic achievement, several important limitations restrict the current capabilities of AI-generated research. Sakana AI said that none of its AI-generated studies passed its internal bar for ICLR conference track publication standards. This indicates that while the AI can produce workshop-quality research, reaching the highest tiers of scientific publication remains challenging.

The acceptance rates provide important context for evaluating this achievement. The paper was accepted at a workshop track, which typically has less strict standards than the main conference (60-70% acceptance rates, versus the 20-30% typical of main conference tracks). While this does not diminish the significance of the achievement, it suggests that producing truly groundbreaking research remains beyond current AI capabilities.

The AI Scientist-v2 also demonstrated some weaknesses that human researchers identified during their review process. The system occasionally made citation errors, attributing research findings to incorrect authors or publications. It also struggled with some aspects of experimental design that human experts would have approached differently.

Perhaps most importantly, the AI-generated research focused on incremental improvements rather than paradigm-shifting discoveries. The system appears more capable of conducting thorough investigations within established research frameworks than of proposing entirely new ways of thinking about scientific problems.

The Road Ahead

The successful peer review of AI-generated research is the beginning of a new era in scientific research. As foundation models continue improving, we can expect The AI Scientist and similar systems to produce increasingly sophisticated research that approaches and potentially exceeds human capabilities in many domains.

The research team anticipates that future versions will be capable of producing papers worthy of acceptance at top-tier conferences and journals. The logical progression suggests that AI systems may eventually contribute to breakthrough discoveries in fields ranging from medicine to physics to chemistry.

This development also raises important questions about research ethics and publication standards. The scientific community must develop new norms for handling AI-generated research, including when and how to disclose AI involvement and how to evaluate such work alongside human-generated research.

The transparency demonstrated by the research team in this experiment provides a valuable model for future AI research evaluation. By working openly with conference organizers and subjecting their AI-generated work to the same standards as human research, they have established important precedents for the responsible development of automated research capabilities.

The Bottom Line

The acceptance of an AI-written paper at a leading machine learning workshop is a significant advancement in AI capabilities. While the work is not yet at the level of a top-tier conference, it demonstrates a clear trajectory toward AI systems becoming serious contributors to scientific discovery. The challenge now lies not only in advancing technology but also in shaping the ethical and academic frameworks that will govern this new frontier of research.
