AI Research

How Google Cut AI Training Requirements by 10,000x

The artificial intelligence industry faces a fundamental paradox. While machines can now process data at massive scales, the learning remains surprisingly inefficient, facing the challenge of diminishing returns. Traditional machine learning approaches demand massive, labeled datasets that can cost millions of dollars and take years to create. These approaches typically operate under the belief that more data leads to better AI models. However, Google researchers have recently introduced an innovative method that challenges this long-standing belief. They demonstrate that similar AI performance can be achieved with up to 10,000 times less training data. This development has the potential to fundamentally change how we approach AI. In this article, we will explore how Google researchers achieved this breakthrough, the potential future impact of the development, and the challenges and directions ahead.

The Big Data Challenge in AI

For decades, the mantra “more data equals better AI” has driven the industry’s approach to AI. Large language models like GPT-4 consume trillions of tokens during training. This data-hungry approach creates a significant barrier for organizations lacking extensive resources or specialized datasets. First, the cost of human labeling is significantly high. Expert annotators charge high rates, and the sheer volume of data needed makes projects expensive. Second, most of the collected data is often redundant and could not play a crucial role in the learning process. The traditional method also struggles with changing requirements. When policies shift or new types of problematic content emerge, companies must start the labeling process from scratch. This process creates a constant cycle of expensive data collection and model retraining.

Addressing Big Data Challenges with Active Learning

One of the known ways we can address these data challenges is through empowering active learning. This approach relies on a careful curation process that identifies the most valuable training examples for human labelling. The underlying idea is that models learn best from examples they find most confusing rather than passively consuming all available data. Unlike traditional AI methods, which require large datasets, active learning takes a more strategic approach by focusing on gathering only the most informative examples. This approach helps to avoid the inefficiency of labeling obvious or redundant data that provides little value to the model. Instead, active learning targets edge cases and uncertain examples that have the potential to improve model performance significantly.

By concentrating experts’ effort on these key examples, active learning allows models to learn faster and more effectively with far fewer data points. This approach has the potential to address both the data bottleneck and the inefficiencies of traditional machine learning approaches.

Google’s Active Learning Approach

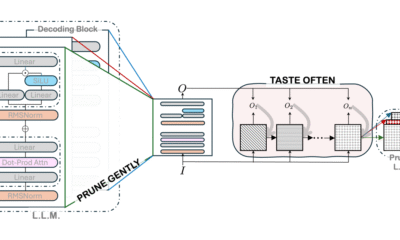

Google’s research team has successfully employed this paradigm. Their new active learning methodology demonstrates that carefully curated, high-quality examples can replace vast quantities of labelled data. For example, they show that models trained on fewer than 500 expert-labeled examples matched or exceeded the performance of systems trained on 100,000 traditional labels.

The process works through what Google calls an “LLM-as-Scout” system. The large language model first scans through vast amounts of unlabeled data, identifying cases where it feels most uncertain. These boundary cases represent the exact scenarios where the model needs human guidance to improve its decision-making. The process begins with an initial model that labels large datasets using basic prompts. The system then clusters examples by their predicted classifications and identifies regions where the model shows confusion between different categories. These overlapping clusters reveal the precise points where expert human judgment can become most valuable.

The methodology explicitly targets pairs of examples that lie closest together but carry different labels. These boundary cases represent the exact scenarios where human expertise matters the most. By concentrating expert labeling efforts on these confusing examples, the system achieves remarkable efficiency gains.

Quality Over Quantity

The research reveals a key finding regarding data quality that challenges a common assumption in AI. It demonstrates that expert labels, with their high fidelity, consistently outperform large-scale crowdsourced annotations. They measured this using Cohen’s Kappa, a statistical tool that assesses how well the model’s predictions align with expert opinions, beyond what would happen by chance. In Google’s experiments, expert annotators achieved Cohen’s Kappa scores above 0.8, significantly outperforming what crowdsourcing typically provides.

This higher consistency allows models to learn effectively from far fewer examples. In tests with Gemini Nano-1 and Nano-2, models matched or exceeded expert alignment using just 250–450 carefully selected examples as compared to around 100,000 random crowdsourced labels. That’s a reduction of three to four orders of magnitude. However, the benefits are not just limited to using less data. Models trained with this approach often outperform those trained with traditional methods. For complex tasks and larger models, performance improvements reached 55–65% over the baseline, which shows more substantial and more reliable alignment with policy experts.

Why This Breakthrough Matters Now

This development comes at a critical time for the AI industry. As models grow larger and more sophisticated, the traditional approach of scaling training data has become increasingly unsustainable. The environmental cost of training massive models continues to grow, and the economic barriers to entry remain high for many organizations.

Google’s method addresses multiple industry challenges simultaneously. The dramatic reduction in labeling costs makes AI development more accessible to smaller organizations and research teams. The faster iteration cycles enable rapid adaptation to changing requirements, which is essential in dynamic fields like content moderation or cybersecurity.

The approach also has broader implications for AI safety and reliability. By focusing on the cases where models are most uncertain, the method naturally identifies potential failure modes and edge cases. This process creates more robust systems that better understand their limitations.

The Broader Implications for AI Development

This breakthrough suggests we may be entering a new phase of AI development where efficiency matters more than scale. The traditional “bigger is better” approach to training data may give way to more sophisticated methods that prioritize data quality and strategic selection.

The environmental implications alone are significant. Training large AI models currently requires enormous computational resources and energy consumption. If similar performance can be achieved with dramatically less data, the carbon footprint of AI development could shrink substantially.

The democratizing effect could be equally important. Smaller research teams and organizations that previously couldn’t afford massive data collection efforts now have a path to competitive AI systems. This development could accelerate innovation and create more diverse perspectives in AI development.

Limitations and Considerations

Despite its promising results, the methodology faces several practical challenges. The requirement for expert annotators with Cohen’s Kappa scores above 0.8 may limit applicability in domains lacking sufficient expertise or clear evaluation criteria. The research primarily focuses on classification tasks and content safety applications. Whether the same dramatic improvements apply to other types of AI tasks like language generation or reasoning remains to be seen.

The iterative nature of active learning also introduces complexity compared to traditional batch processing approaches. Organizations must develop new workflows and infrastructure to support the query-response cycles that enable continuous model improvement.

Future research will likely explore automated approaches for maintaining expert-level annotation quality and developing domain-specific adaptations of the core methodology. The integration of active learning principles with other efficiency techniques, like parameter-efficient fine-tuning, could yield additional performance gains.

The Bottom Line

Google’s research shows that targeted, high-quality data can be more effective than massive datasets. By focusing on labeling only the most valuable examples, they reduced training needs by up to 10,000 times while improving performance. This approach lowers costs, speeds up development, reduces environmental impact, and makes advanced AI more accessible. It marks a significant shift toward efficient and sustainable AI development.

AI Research

How artificial intelligence is transforming hospitals

Story highlights

AI is changing healthcare. From faster X-ray reports to early warnings for sepsis, new tools are helping doctors diagnose quicker and more accurately. What the future holds for ethical and safe use of AI in hospitals is worth watching. Know more below.

AI Research

AI is becoming the new travel agent for younger generations, survey finds

Is travel planning the next space AI is taking over?

A new survey shows that younger Americans are relying on AI and ChatGPT more and more to construct their vacation itineraries.

The survey of 2,000 Americans (split evenly by generation) by Talker Research found that only 29% of millennials have never used AI for this reason, with just 33% of Gen Z saying the same.

This is a stark contrast to older generations that still rely on old-school, traditional methods to sort their travel plans. Seven in ten baby boomers also say they have never used AI for their travel plans.

IN CASE YOU MISSED IT | Travel cutbacks: Americans planning shorter, more frequent trips this summer

So exactly how are people utilizing AI in this way? The interesting results emerged in Talker Research’s new travel trend report.

The top application for AI in travel planning was found to be asking it to compare flight prices for wherever they’re headed, with 29% of all those polled saying they’ve done this.

A similar amount says AI comes in even before that: Twenty-nine percent of respondents have even asked it where they should go for their trip.

Another one in five even let AI complete a detailed plan for their whole trip, complete with sights to see, local things to do and museums to tick off.

While word of mouth and recommendations from loved ones have always been the most common way to learn about fun places to travel, the survey revealed that there’s a new contender.

YouTube (34%) was crowned as the top resource people use for travel inspo, officially topping recommendations from family (30%) and friends (28%).

The generations were split on this, as unsurprisingly, younger generations were a lot more reliant on social media than older generations.

FROM THE ARCHIVES | Affordable travel destinations that can save you thousands of dollars

While YouTube was the most popular when accounting for every survey-taker, Gen Z was overwhelmingly using TikTok for travel inspiration (52%).

In comparison, just 27% of millennials and only 2% of boomers said they use TikTok for this purpose.

While AI is still fairly new, it’s easy to see this trend growing as the technology becomes more sophisticated.

Survey methodology:

This random double-opt-in survey of 2,000 Americans (500 Gen Z, 500 millennials, 500 Gen X, 500 baby boomers) was conducted between May 5 and May 8, 2025 by market research company Talker Research, whose team members are members of the Market Research Society (MRS) and the European Society for Opinion and Marketing Research (ESOMAR).

AI Research

If I Could Only Buy 1 Artificial Intelligence (AI) Chip Stock Over The Next 10 Years, This Would Be It (Hint: It’s Not Nvidia)

While Nvidia continues to capture headlines, a critical enabler of the artificial intelligence (AI) infrastructure boom may be better positioned for long-term gains.

When investors debate the future of the artificial intelligence (AI) trade, the conversation generally finds its way back to the usual suspects: Nvidia, Advanced Micro Devices, and cloud hyperscalers like Microsoft, Amazon, and Alphabet.

Each of these companies is racing to design GPUs or develop custom accelerators in-house. But behind this hardware, there’s a company that benefits no matter which chip brand comes out ahead: Taiwan Semiconductor Manufacturing (TSM -3.05%).

Let’s unpack why Taiwan Semi is my top AI chip stock over the next 10 years, and assess whether now is an opportune time to scoop up some shares.

Agnostic to the winner, leveraged to the trend

As the world’s leading semiconductor foundry, TSMC manufactures chips for nearly every major AI developer — from Nvidia and AMD to Amazon’s custom silicon initiatives, dubbed Trainium and Inferentia.

Unlike many of its peers in the chip space that rely on new product cycles to spur demand, Taiwan Semi’s business model is fundamentally agnostic. Whether demand is allocated toward GPUs, accelerators, or specialized cloud silicon, all roads lead back to TSMC’s fabrication capabilities.

With nearly 70% market share in the global foundry space, Taiwan Semi’s dominance is hard to ignore. Such a commanding lead over the competition provides the company with unmatched structural demand visibility — a trend that appears to be accelerating as AI infrastructure spend remains on the rise.

Image source: Getty Images.

Scaling with more sophisticated AI applications

At the moment, AI development is still concentrated on training and refining large language models (LLMs) and embedding them into downstream software applications.

The next wave of AI will expand into far more diverse and demanding use cases — autonomous systems, robotics, and quantum computing remain in their infancy. At scale, these workloads will place greater demands on silicon than today’s chips can support.

Meeting these demands doesn’t simply require additional investments in chips. Rather, it requires chips engineered for new levels of efficiency, performance, and power management. This is where TSMC’s competitive advantages begin to compound.

With each successive generation of process technology, the company has a unique opportunity to widen the performance gap between itself and rivals like Samsung or Intel.

Since Taiwan Semi already has such a large footprint in the foundry landscape, next-generation design complexities give the company a chance to further lock in deeper, stickier customer relationships.

TSMC’s valuation and the case for expansion

Taiwan Semi may trade at a forward price-to-earnings (P/E) ratio of 24, but dismissing the stock as “expensive” overlooks the company’s extraordinary positioning in the AI realm. To me, the company’s valuation reflects a robust growth outlook, improving earnings prospects, and a declining risk premium.

TSM PE Ratio (Forward) data by YCharts

Unlike many of its semiconductor peers, which are vulnerable to cyclicality headwinds, TSMC has become an indispensable utility for many of the world’s largest AI developers, evolving into one of the backbones of the ongoing infrastructure boom.

The scale of investment behind current AI infrastructure is jaw-dropping. Hyperscalers are investing staggering sums to expand and modernize data centers, and at the heart of each new buildout is an unrelenting demand for more chips. Moreover, each of these companies is exploring more advanced use cases that will, at some point, require next-generation processing capabilities.

These dynamics position Taiwan Semi at the crossroad of immediate growth and enduring long-term expansion, as AI infrastructure swiftly evolves from a constant driver of growth today into a multidecade secular theme.

TSMC’s manufacturing dominance ensures that its services will continue to witness robust demand for years to come. For this reason, I think Taiwan Semi is positioned to experience further valuation expansion over the next decade as the infrastructure chapter of the AI story continues to unfold.

While there are many great opportunities in the chip space, TSMC stands alone. I see it as perhaps the most unique, durable semiconductor stock to own amid a volatile technology landscape over the next several years.

Adam Spatacco has positions in Alphabet, Amazon, Microsoft, and Nvidia. The Motley Fool has positions in and recommends Advanced Micro Devices, Alphabet, Amazon, Intel, Microsoft, Nvidia, and Taiwan Semiconductor Manufacturing. The Motley Fool recommends the following options: long January 2026 $395 calls on Microsoft, short August 2025 $24 calls on Intel, short January 2026 $405 calls on Microsoft, and short November 2025 $21 puts on Intel. The Motley Fool has a disclosure policy.

-

Tools & Platforms3 weeks ago

Building Trust in Military AI Starts with Opening the Black Box – War on the Rocks

-

Business2 days ago

Business2 days agoThe Guardian view on Trump and the Fed: independence is no substitute for accountability | Editorial

-

Ethics & Policy1 month ago

Ethics & Policy1 month agoSDAIA Supports Saudi Arabia’s Leadership in Shaping Global AI Ethics, Policy, and Research – وكالة الأنباء السعودية

-

Events & Conferences3 months ago

Events & Conferences3 months agoJourney to 1000 models: Scaling Instagram’s recommendation system

-

Jobs & Careers2 months ago

Jobs & Careers2 months agoMumbai-based Perplexity Alternative Has 60k+ Users Without Funding

-

Funding & Business2 months ago

Funding & Business2 months agoKayak and Expedia race to build AI travel agents that turn social posts into itineraries

-

Education2 months ago

Education2 months agoVEX Robotics launches AI-powered classroom robotics system

-

Podcasts & Talks2 months ago

Podcasts & Talks2 months agoHappy 4th of July! 🎆 Made with Veo 3 in Gemini

-

Podcasts & Talks2 months ago

Podcasts & Talks2 months agoOpenAI 🤝 @teamganassi

-

Mergers & Acquisitions2 months ago

Mergers & Acquisitions2 months agoDonald Trump suggests US government review subsidies to Elon Musk’s companies