Ethics & Policy

Goodbye Goodhart, zombie policies, FeedbackLogs, Pope@G7 on AI ++

Welcome to another edition of the Montreal AI Ethics Institute’s weekly AI Ethics Brief that will help you keep up with the fast-changing world of AI Ethics! Every week, we summarize the best of AI Ethics research and reporting, along with some commentary. More about us at montrealethics.ai/about.

💖 To keep our content free for everyone, we ask those who can, to support us: become a paying subscriber for the price of a couple of ☕.

If you’d prefer to make a one-time donation, visit our donation page. We use this Wikipedia-style tipping model to support our mission of Democratizing AI Ethics Literacy and to ensure we can continue to serve our community.

- Demystifying Local and Global Fairness Trade-offs in Federated Learning Using Partial Information Decomposition
- FeedbackLogs: Recording and Incorporating Stakeholder Feedback into Machine Learning Pipelines
- The path toward equal performance in medical machine learning
- How to Lead an Army of Digital Sleuths in the Age of AI | WIRED
- This Is What It Looks Like When AI Eats the World – The Atlantic
- Why the Pope has the ears of G7 leaders on the ethics of AI

With the recent release of the Luma Dream Machine and its video generation capabilities (something that OpenAI’s Sora had demonstrated several months ago), concerns were immediately raised about how it might be used to generate photorealistic NSFW and non-consensual content. The team at Luma mentioned that they have guardrails in place to prevent that very kind of interaction. Yet some creative, or rather malicious, users online tried to “jailbreak” the system and succeeded in generating some of that prohibited content.

Techniques such as word order alteration and code-switching between languages can fool the system into generating inappropriate content, such as nudity or unauthorized depictions of celebrities.

This vulnerability underscores a significant issue: the current safeguards are reactive rather than proactive. They are often designed to catch known threats, which means they can be easily circumvented by novel and creative methods. This is particularly concerning given the potential for these tools to be used in harmful ways, including:

- Misinformation and Deepfakes: Generative AI can create convincing fake videos that can be used to spread misinformation, manipulate public opinion, and defame individuals.
- Privacy Violations: The ability to generate realistic images and videos of individuals without their consent raises significant privacy concerns.
- Psychological Impact: Exposure to AI-generated content that appears real can have psychological effects, from trust erosion to emotional distress.

Did we miss anything?

Every week, we’ll feature a question from the MAIEI community and share our thinking here. We invite you to ask yours, and we’ll answer it in the upcoming editions.

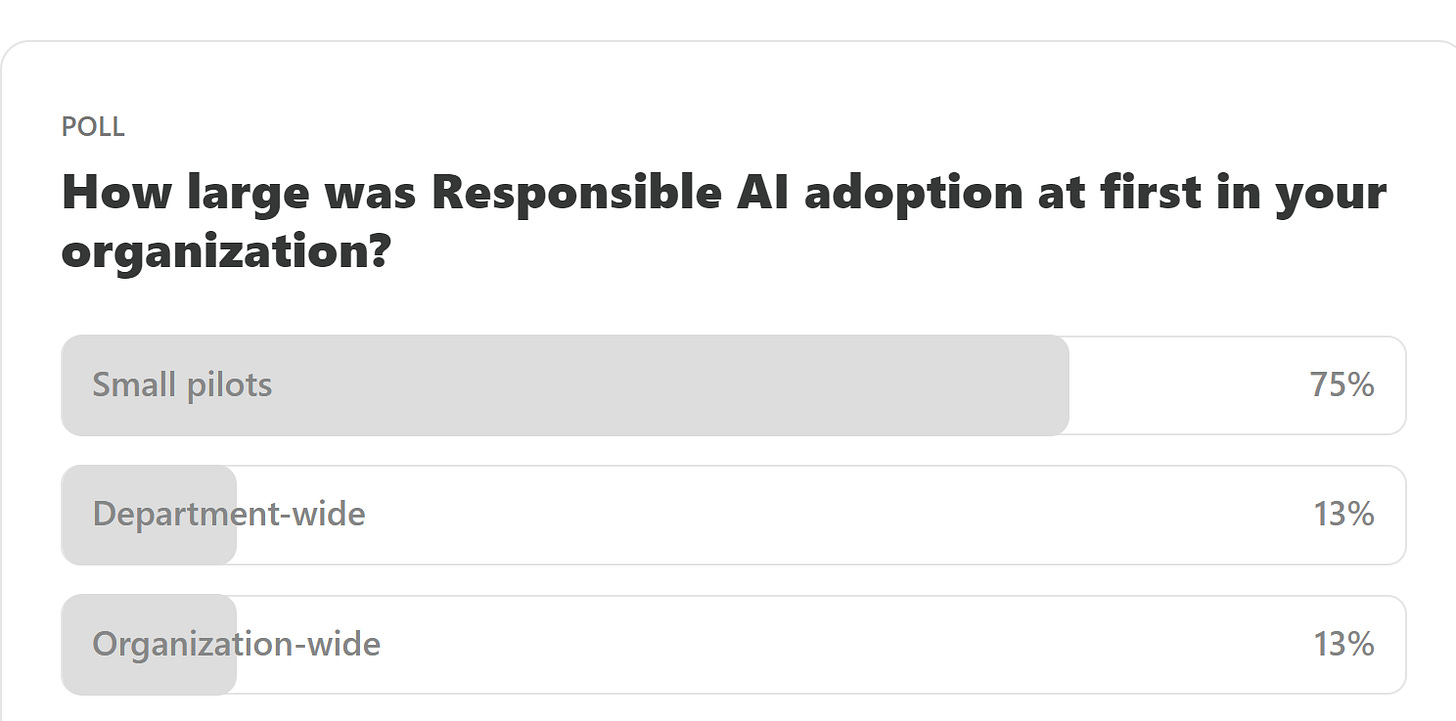

Here are the results from the previous edition for this segment:

The results here are not that surprising given that most new programs, especially something as experimental as Responsible AI, will be rolled out in terms of small pilots to give staff enough time to familiarize themselves and offer those managing the program enough breathing room to course-correct as they learn what works and what doesn’t.

This past week, we had Samaira P. write to us and ask about what they’ve seen within their organization when it comes to people trying to “hack the system” to go about doing what they want despite policies and tracking in place, i.e., they follow the “law” in letter but not in spirit. This is a classic case of gaming the system, something we write about in Goodbye Goodhart: Escaping metrics and policy gaming in Responsible AI.

As Responsible AI (RAI) program implementations mature over time, they often introduce metrics to track how well the organization is doing in terms of its RAI posture. Additionally, to motivate adoption by staff, there are often incentives that are either introduced or altered within existing functions to ensure that there is alignment towards the program’s goals and progress is made steadily towards achieving those goals. Yet, there is a common pitfall that any such approach is susceptible to: Goodhart’s Law, i.e., “When a measure becomes a target, it ceases to be a good measure.”

What are some approaches that you’ve seen that work particularly well to combat the problems we discussed in this section? Please let us know! Share your thoughts with the MAIEI community:

Three Strategies for Responsible AI Practitioners to Avoid Zombie Policies

In an age in which technology, societal norms, and regulations are evolving rapidly, outdated or ineffective governance policies, often called “zombie policies,” risk plaguing Responsible AI program implementations in organizations. By understanding the characteristics and persistence of these policies, we can uncover why addressing them is crucial for fostering innovation, trust, and efficacy in AI deployment and governance.

As AI technologies become increasingly integrated into critical aspects of organizations, the persistence of outdated policies can lead to significant resource drains, hinder progress, and damage institutional credibility. In particular, it can dampen enthusiasm and investments from senior leadership in nascent programs, a death knell for Responsible AI, which requires tremendous support to overcome organizational inertia.

Additionally, these zombie policies not only stifle innovation but also perpetuate inefficiencies with far-reaching consequences: they encourage the ‘gaming-the-system’ behavior that arises when staff follow policies in letter but not in spirit. By adopting continuous policy review and evidence-based decision-making, and by cultivating an agile organizational culture, Responsible AI practitioners can dismantle obsolete structures, paving the way for more effective, ethical, and responsive governance and policies.

To delve deeper, read the full article here.

Given the above article about zombie policies, we’ve been noticing them more and more, not just in AI governance, but in other cases as well, such as filing expense reports and other administrative policies. While we concern ourselves here specifically with things that affect AI, it might be worth examining what structural and process flaws exist within an organization that allow these zombie policies to persist.

We’d love to hear from you and share your thoughts with everyone in the next edition:

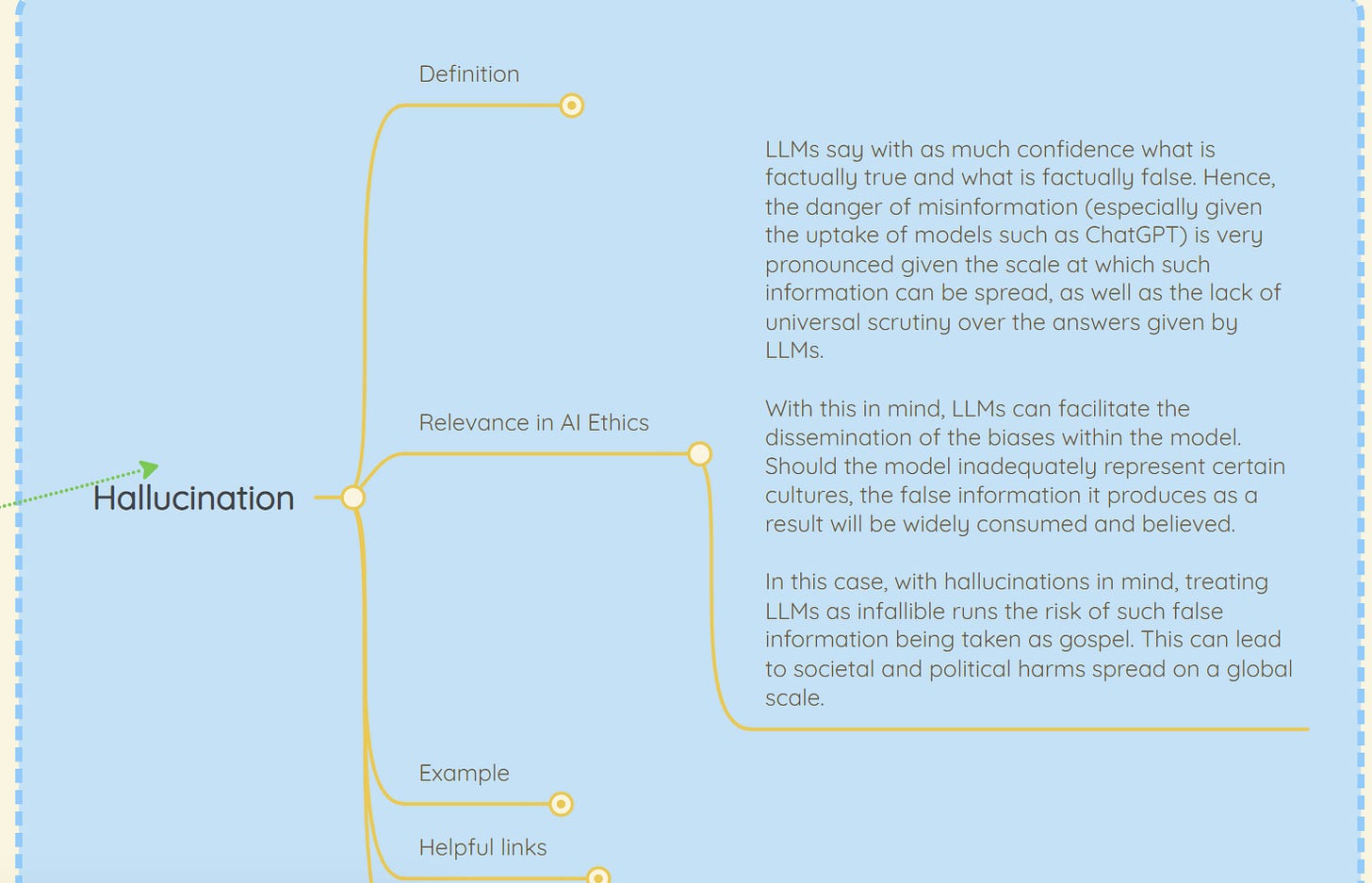

As we now get Apple Intelligence (yes, the other AI :D) embedded into the (latest) Apple devices, we’re about to see very broad-based usage of and exposure to Generative AI, bringing it even to many people who have had no experience with it so far. This, along with Microsoft Copilot+ PCs, is really pushing the boundaries of how pervasive Generative AI is going to become. While a lot of it is going to be grounded in RAG-based approaches to minimize hallucinations, it is worthwhile talking about Hallucinating and moving fast as adoption and feature rollouts increase over the coming months.

WHAT’S HAPPENING: “Move fast and break things” is broken. But we’ve all said that many times before. Instead, I believe we need to adopt the “Move fast and fix things” approach. Given the rapid pace of innovation and its distributed nature across many diverse actors in the ecosystem building new capabilities, realistically, it is infeasible to hope to course-correct at the same pace. Because course correction is a much harder and slow-yielding activity, this ends up amplifying the magnitude of the impact of negative consequences.

FOG OF WAR: What we need to do instead is to think ahead of how the landscape of problems and solutions is going to evolve. For example, when thinking about the problem of hallucinations in GenAI systems, it is unclear at the moment where and how they will manifest. This hinders the adoption of GenAI-powered systems by companies that seek to offer safe and reliable outcomes to their customers, e.g., in customer-service chatbots in financial services or other high-stakes scenarios.

You can either click the “Leave a comment” button below or send us an email! We’ll feature the best response next week in this section.

This research presents an information-theoretic formalization of group fairness trade-offs in federated learning (FL) where multiple clients come together to collectively train a model while keeping their own data private. A critical issue that arises is the disagreement between global fairness (overall disparity of the model across all clients) and local fairness (disparity of the model at each client), particularly when the data distributions across each client are different with respect to sensitive attributes such as gender, race, age, etc. Our work identifies three types of disparities—Unique, Redundant, and Masked—contributing to these disagreements. It presents theoretical and experimental insights into their interplay, answering pertinent questions like when global and local fairness agree and disagree.
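To make the disagreement between local and global fairness concrete, here is a minimal sketch in Python. It does not reproduce the paper's partial information decomposition. As a simpler stand-in, it assumes the statistical parity gap (the difference in positive-prediction rates between two groups) as the disparity measure. The two clients, the group labels `A` and `B`, and all counts are hypothetical and chosen only for illustration.

```python
from typing import List, Tuple

# One record is (sensitive group, binary model prediction).
Record = Tuple[str, int]


def make_records(a_pos: int, a_total: int, b_pos: int, b_total: int) -> List[Record]:
    """Build a toy client dataset from positive/total counts per group."""
    records: List[Record] = []
    records += [("A", 1)] * a_pos + [("A", 0)] * (a_total - a_pos)
    records += [("B", 1)] * b_pos + [("B", 0)] * (b_total - b_pos)
    return records


def positive_rate(records: List[Record], group: str) -> float:
    """Fraction of positive predictions within one group."""
    preds = [pred for g, pred in records if g == group]
    return sum(preds) / len(preds)


def parity_gap(records: List[Record]) -> float:
    """Statistical parity gap: rate for group A minus rate for group B."""
    return positive_rate(records, "A") - positive_rate(records, "B")


# Hypothetical clients with different group mixes and base rates.
client_1 = make_records(a_pos=6, a_total=8, b_pos=3, b_total=4)  # mostly group A
client_2 = make_records(a_pos=1, a_total=4, b_pos=2, b_total=8)  # mostly group B

# Local fairness: the gap measured separately at each client.
local_gaps = [parity_gap(client_1), parity_gap(client_2)]

# Global fairness: the gap measured over the pooled data of all clients.
global_gap = parity_gap(client_1 + client_2)

print("local gaps:", local_gaps)
print("global gap:", round(global_gap, 4))
```

In this toy setup each client treats both groups identically, yet the pooled data shows a gap, because the groups are distributed unevenly across clients. It illustrates only that local and global measures can disagree when client data distributions differ. It makes no claim about which of the paper's disparity types is involved.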

To delve deeper, read the full summary here.

FeedbackLogs: Recording and Incorporating Stakeholder Feedback into Machine Learning Pipelines

As machine learning (ML) pipelines affect an increasing array of stakeholders, there is a growing need to document how input from stakeholders is recorded and incorporated. We propose FeedbackLogs, an addendum to existing documentation of ML pipelines, to track the feedback collection process from multiple stakeholders. Our online tool for creating FeedbackLogs and examples can be found here.

To delve deeper, read the full summary here.

The path toward equal performance in medical machine learning

Medical machine learning models are often better at predicting outcomes or diagnosing diseases in some patient groups than others. This paper asks why such performance differences occur and what it would take to build models that perform equally well for all patients.

To delve deeper, read the full summary here.

How to Lead an Army of Digital Sleuths in the Age of AI | WIRED

- What happened: Bellingcat, a leading open-source intelligence agency, is directed by Eliot Higgins from the UK, overseeing around 40 employees. They utilize online forensic techniques to investigate events ranging from the 2014 downing of Malaysia Airlines Flight 17 to assassination attempts on Russian dissident Alexei Navalny. Bellingcat’s work highlights the complexities of truth in the digital age, where factual information often loses value amidst competing narratives. Their mission includes not only uncovering the truth but also seeking platforms where truth holds significance, empowering the weak, and holding wrongdoers accountable.
- Why it matters: Bellingcat’s role has expanded with the increasing frequency of conflicts and the rise of falsified digital content. They are currently focusing on conflicts in Ukraine and Gaza and monitoring election-related misinformation globally. The advent of AI-generated content presents a significant challenge, as it can easily deceive the public and be used to dismiss genuine information. This scenario poses a threat to informed public discourse and democratic processes, as it can lead to widespread confusion and mistrust in verified facts.
- Between the lines: Bellingcat’s verification process involves rigorous analysis of various data points, including geo-location, shadows for time determination, and metadata. Despite their expertise, the proliferation of AI-generated imagery could eventually complicate their work. Coordinated disinformation campaigns using AI could create significant disruptions, such as influencing stock markets or misleading news organizations. To combat this, Higgins advocates for legislative measures requiring social media companies to implement AI detection systems, stressing that voluntary efforts are insufficient to prevent potential crises.

This Is What It Looks Like When AI Eats the World – The Atlantic

- What happened: Recent weeks have highlighted the significant integration of AI into everyday online life. Major tech companies have introduced various AI-driven features: Meta added an AI chatbot to Instagram and Facebook, OpenAI released GPT-4o, and Google experimented with “AI Overviews” in its search engine. Additionally, the reported record earnings propelled some companies into a market capitalization exceeding $3 trillion. The tech industry’s rapid AI advancements, coupled with strategic media partnerships, underscore the escalating presence of AI in numerous domains, often leaving users with little choice but to adapt.
- Why it matters: These developments illustrate a power dynamic where tech companies dominate by utilizing media content without explicit permission, leveraging it to train their AI models. Media companies are often left with limited choices: accept financial partnerships with AI companies or risk having their data used without compensation. Legal battles, such as those initiated by The New York Times against OpenAI and Microsoft, are costly and uncertain, making it challenging for smaller organizations to pursue this route. The pervasive use of AI, coupled with minimal oversight, threatens to destabilize traditional business models for media and creative industries, creating an environment of ambiguity and inconsistency.
- Between the lines: Despite the challenges, there is a potential opportunity for media organizations to negotiate better terms with tech companies, setting precedents for compensation for their content. Access to real-time news data is crucial for AI companies aiming to enhance their search tools, giving media organizations some leverage in these negotiations. However, the shift towards AI-driven information dissemination raises concerns about the future of creative work and the broader information ecosystem. The disruption caused by transitioning from traditional search engines to AI chatbots could undermine the sustainability of creative industries, as the foundational business models that support these sectors may become obsolete.

Why the Pope has the ears of G7 leaders on the ethics of AI

- What happened: After discussing how to finance a prolonged war, G7 leaders in Puglia turned to Pope Francis for advice. Invited by Italian Prime Minister Giorgia Meloni, the Pope attended the summit. This marked the first time a religious leader participated in such an event, where the Pope offered his insights on the future, including a focus on AI. The Pope’s presence was a significant departure from the usual economic and political experts typically consulted by the G7.
- Why it matters: The Pope’s participation highlights the increasing significance of ethical and moral considerations in global governance, especially concerning AI. The G7 leaders are grappling with how to regulate AI, following Japan’s Hiroshima Process International Guiding Principles, which, despite lacking legal standing, emphasize the need for ethical oversight. The EU and Canada are leading in regulation, while the UK and the US are less prescriptive. Pope Francis, influenced by Franciscan Friar Paolo Benanti, underscores the anthropological and societal challenges posed by AI, stressing the importance of maintaining human decision-making authority.
- Between the lines: AI’s impact extends beyond replacing physical labor to potentially supplanting human intellect, raising ethical concerns about its unchecked development. Friar Benanti, a key advisor to Meloni and the Pope, emphasizes the concept of “algor-ethics,” advocating for a balanced approach where technology enhances rather than replaces human capabilities. Meloni’s alignment with the Pope’s views on AI indicates a shift toward integrating ethical considerations into technological advancements. The Pope’s engagement with contemporary issues like AI reflects his broader mission to address humanity’s frontier challenges, making his guidance sought after by world leaders.

What is the relevance to AI ethics of hallucinations in LLMs?

👇 Learn more about why it matters in AI Ethics via our Living Dictionary.

MAIEI co-hosted an evening on Responsible AI at Collision Conf 2024 with IBM and Meta!

Here’s what Deb Pimentel, President and GM Technology, IBM Canada, had to say about the event:

Just wrapped up an incredible evening to kick off Collision Conf this year with our AI Alliance counterparts including Meta and the Montreal AI Ethics Institute. We discussed the value of open source innovation, responsible AI adoption and the bright future for #GenerativeAI leadership in Canada. The energy in the room was electric, and I’m more confident than ever that Canada is poised well to take the lead in this space.

As AI systems are deployed across all domains of daily life, harmful incidents involving the technology become more frequent. This paper presents a framework for defining, identifying, classifying, and understanding harms from AI systems to facilitate effective monitoring and risk mitigation. Understanding whom, how, and when AI systems cause harm is critical for AI’s responsible design and use.

To delve deeper, read the full article here.

We’d love to hear from you, our readers, on what recent research papers caught your attention. We’re looking for ones that have been published in journals or as a part of conference proceedings.


AI and ethics – what is originality? Maybe we’re just not that special when it comes to creativity?

I don’t trust AI, but I use it all the time.

Let’s face it, that’s a sentiment that many of us can buy into if we’re honest about it. It comes from Paul Mallaghan, Head of Creative Strategy at We Are Tilt, a creative transformation content and campaign agency whose clients include the likes of Diageo, KPMG and Barclays.

Taking part in a panel debate on AI ethics at the recent Evolve conference in Brighton, UK, he made another highly pertinent point when he said of people in general:

We know that we are quite susceptible to confident bullshitters. Basically, that is what ChatGPT [is] right now. There’s something [that] reminds me of the illusory truth effect, where if you hear something a few times, or you hear it said confidently, then you are much more likely to believe it, regardless of the source. I might refer to a certain President who uses that technique fairly regularly, but I think we’re so susceptible to that that we are quite vulnerable.

And, yes, it’s you he’s talking about:

I mean all of us, no matter how intelligent we think we are or how smart over the machines we think we are. When I think about trust – and I’m coming at this very much from the perspective of someone who runs a creative agency – we’re not involved in building a Large Language Model (LLM); we’re involved in using it, understanding it, and thinking about what the implications [are] if we get this wrong. What does it mean to be creative in the world of LLMs?

Genuine

Being genuine is vital, he argues, as is being human: where does Human Intelligence come into the picture, particularly in relation to creativity? His argument:

There’s a certain parasitic quality to what’s being created. We make films, we’re designers, we’re creators, we’re all those sort of things in the company that I run. We have had to just face the fact that we’re using tools that have hoovered up the work of others and then regenerate it and spit it out. There is an ethical dilemma that we face every day when we use those tools.

His firm has come to the conclusion that it has to be responsible for imposing its own guidelines here to some degree, because there’s not a lot happening elsewhere:

To some extent, we are always ahead of regulation, because the nature of being creative is that you’re always going to be experimenting and trying things, and you want to see what the next big thing is. It’s actually very exciting. So that’s all cool, but we’ve realized that if we want to try and do this ethically, we have to establish some of our own ground rules, even if they’re really basic. Like, let’s try and not prompt with the name of an illustrator that we know, because that’s stealing their intellectual property, or the labor of their creative brains.

I’m not a regulatory expert by any means, but I can say that a lot of the clients we work with, to be fair to them, are also trying to get ahead of where I think we are probably at government level, and they’re creating their own frameworks, their own trust frameworks, to try and address some of these things. Everyone is starting to ask questions, and you don’t want to be the person that’s accidentally created a system where everything is then suable because of what you’ve made or what you’ve generated.

Originality

That’s not necessarily an easy ask, of course. What, for example, do we mean by originality? Mallaghan suggests:

Anyone who’s ever tried to create anything knows you’re trying to break patterns. You’re trying to find or re-mix or mash up something that hasn’t happened before. To some extent, that is a good thing that really we’re talking about pattern matching tools. So generally speaking, it’s used in every part of the creative process now. Most agencies, certainly the big ones, certainly anyone that’s working on a lot of marketing stuff, they’re using it to try and drive efficiencies and get incredible margins. They’re going to be on the race to the bottom.

But originality is hard to quantify. I think that actually it doesn’t happen as much as people think anyway, that originality. When you look at ChatGPT or any of these tools, there’s a lot of interesting new tools that are out there that purport to help you in the quest to come up with ideas, and they can be useful. Quite often, we’ll use them to sift out the crappy ideas, because if ChatGPT or an AI tool can come up with it, it’s probably something that’s happened before, something you probably don’t want to use.

More Human Intelligence is needed, it seems:

What I think any creative needs to understand now is you’re going to have to be extremely interesting, and you’re going to have to push even more humanity into what you do, or you’re going to be easily replaced by these tools that probably shouldn’t be doing all the fun stuff that we want to do. [In terms of ethical questions] there’s a bunch, including the copyright thing, but there’s partly just [questions] around purpose and fun. Like, why do we even do this stuff? Why do we do it? There’s a whole industry that exists for people with wonderful brains, and there’s lots of different types of industries [where you] see different types of brains. But why are we trying to do away with something that allows people to get up in the morning and have a reason to live? That is a big question.

My second ethical thing is, what do we do with the next generation who don’t learn craft and quality, and they don’t go through the same hurdles? They may find ways to use [AI] in ways that we can’t imagine, because that’s what young people do, and I have faith in that. But I also think, how are you going to learn the language that helps you interface with, say, a video model, and know what a camera does, and how to ask for the right things, how to tell a story, and what’s right? All that is an ethical issue, like we might be taking that away from an entire generation.

And there’s one last ‘tough love’ question to be posed:

What if we’re not special? Basically, what if all the patterns that are part of us aren’t that special? The only reason I bring that up is that I think that in every career, you associate your identity with what you do. Maybe we shouldn’t, maybe that’s a bad thing, but I know that creatives really associate with what they do. Their identity is tied up in what it is that they actually do, whether they’re an illustrator or whatever. It is a proper existential crisis to look at it and go, ‘Oh, the thing that I thought was special can be regurgitated pretty easily’… It’s a terrifying thing to stare into the Gorgon and look back at it and think, ‘Where are we going with this?’ By the way, I do think we’re special, but maybe we’re not as special as we think we are. A lot of these patterns can be matched.

My take

This was a candid worldview that raised a number of tough questions – and questions are often so much more interesting than answers, aren’t they? The subject of creativity and copyright has been handled at length on diginomica by Chris Middleton and I think Mallaghan’s comments pretty much chime with most of that.

I was particularly taken by the point about the impact on the younger generation of having at their fingertips AI tools that can ‘do everything, until they can’t’. I recall being horrified a good few years ago when doing a shift in a newsroom of a major tech title and noticing that the flow of copy had suddenly dried up. ‘Where are the stories?’, I shouted. Back came the reply, ‘Oh, the Internet’s gone down’. ‘Then pick up the phone and call people, find some stories,’ I snapped. A sad, baffled young face looked back at me and asked, ‘Who should we call?’. Now apart from suddenly feeling about 103, I was shaken by the fact that as soon as the umbilical cord of the Internet was cut, everyone was rendered helpless.

Take that idea and multiply it a billion-fold when it comes to AI dependency and the future looks scary. Human Intelligence matters.


Preparing Timor-Leste to embrace Artificial Intelligence

UNESCO, in collaboration with the Ministry of Transport and Communications, Catalpa International and the national lead consultant, conducted consultative and validation workshops as part of the AI Readiness assessment implementation in Timor-Leste. Held on 8–9 April and 27 May respectively, the workshops convened representatives from government ministries, academia, international organisations and development partners, the Timor-Leste National Commission for UNESCO, civil society, and the private sector for a multi-stakeholder consultation to unpack the current stage of AI adoption and development in the country, guided by UNESCO’s AI Readiness Assessment Methodology (RAM).

In response to growing concerns about the rapid rise of AI, the UNESCO Recommendation on the Ethics of Artificial Intelligence was adopted by 194 Member States in 2021, including Timor-Leste, to ensure ethical governance of AI. To support Member States in implementing this Recommendation, the RAM was developed by UNESCO’s AI experts without borders. It includes a range of quantitative and qualitative questions designed to gather information across different dimensions of a country’s AI ecosystem, including legal and regulatory, social and cultural, economic, scientific and educational, technological and infrastructural aspects.

By compiling comprehensive insights into these areas, the final RAM report helps identify institutional and regulatory gaps, which can assist the government with the necessary AI governance and enable UNESCO to provide tailored support that promotes an ethical AI ecosystem aligned with the Recommendation.

The first day of the workshop was opened by Timor-Leste’s Minister of Transport and Communication, H.E. Miguel Marques Gonçalves Manetelu. In his opening remarks, Minister Manetelu highlighted the pivotal role of AI in shaping the future. He emphasised that the current global trajectory is not only driving the digitalisation of work but also enabling more effective and productive outcomes.


Experts gather to discuss ethics, AI and the future of publishing

Publishing stands at a pivotal juncture, said Jeremy North, president of Global Book Business at Taylor & Francis Group, addressing delegates at the 3rd International Conference on Publishing Education in Beijing. Digital intelligence is fundamentally transforming the sector — and this revolution will inevitably create “AI winners and losers”.

True winners, he argued, will be those who embrace AI not as a replacement for human insight but as a tool that strengthens publishing’s core mission: connecting people through knowledge. The key is balance, North said, using AI to enhance creativity without diminishing human judgment or critical thinking.

This vision set the tone for the event where the Association for International Publishing Education was officially launched — the world’s first global alliance dedicated to advancing publishing education through international collaboration.

Unveiled at the conference cohosted by the Beijing Institute of Graphic Communication and the Publishers Association of China, the AIPE brings together nearly 50 member organizations with a mission to foster joint research, training, and innovation in publishing education.

Tian Zhongli, president of BIGC, stressed the need to anchor publishing education in ethics and humanistic values and reaffirmed BIGC’s commitment to building a global talent platform through AIPE.

BIGC will deepen academic-industry collaboration through AIPE to provide a premium platform for nurturing high-level, holistic, and internationally competent publishing talent, he added.

Zhang Xin, secretary of the CPC Committee at BIGC, emphasized that AIPE is expected to help globalize Chinese publishing scholarship, contribute new ideas to the industry, and cultivate a new generation of publishing professionals for the digital era.

Themed “Mutual Learning and Cooperation: New Ecology of International Publishing Education in the Digital Intelligence Era”, the conference also tackled a wide range of challenges and opportunities brought on by AI — from ethical concerns and content ownership to protecting human creativity and rethinking publishing values in higher education.

Wu Shulin, president of the Publishers Association of China, cautioned that while AI brings major opportunities, “we must not overlook the ethical and security problems it introduces”.

Catriona Stevenson, deputy CEO of the UK Publishers Association, echoed this sentiment. She highlighted how British publishers are adopting AI to amplify human creativity and productivity, while calling for global cooperation to protect intellectual property and combat AI tool infringement.

The conference aims to explore innovative pathways for the publishing industry and education reform, discuss emerging technological trends, advance higher education philosophies and talent development models, promote global academic exchange and collaboration, and empower knowledge production and dissemination through publishing education in the digital intelligence era.

yangyangs@chinadaily.com.cn
