Ethics & Policy

From PISA to AI: How the OECD is measuring what AI can do

Does anyone really know what AI can and cannot do? Amidst all the hype and fear surrounding AI, it can be frustrating to find objective, reliable information about its true capabilities.

It is widely acknowledged that current AI evaluations are out of step with the performance of frontier AI models, particularly for newer Large Language Models (LLMs), which leading tech companies release at a fast pace. We know ChatGPT can outperform most students on tests like PISA and even graduate students on the GRE, but can AI handle human tasks like managing a classroom of rowdy kids, placing a tile on a roof or negotiating a contract for a service?

The natural response may be to turn to specialist benchmarks developed by computer scientists. However, no one outside a small group of AI technicians understands benchmark results or their actual significance. When OpenAI’s o1 scores 0.893 on the MMLU-Pro benchmark, does this mean AI is ready to be deployed throughout the economy? Which human abilities can it replicate? The truth is that no one really knows.

The issue is garnering attention from AI researchers themselves. This year, the Stanford AI Index Report dryly notes that “to truly assess the capabilities of AI systems, more rigorous and comprehensive evaluations are needed”. Former OpenAI and Tesla AI researcher Andrej Karpathy bluntly described “an evaluation crisis”, while Microsoft CEO Satya Nadella dismissed many capability claims as “benchmark hacking.”

Policymakers also note the need for trusted measures of AI capabilities. The EU Artificial Intelligence Act mandates regular monitoring of AI capabilities. For their part, the OECD Council’s AI Recommendation and the 2025 Paris AI Summit emphasise the importance of understanding AI’s influence on the job market.

Despite all the attention, a persistent gap remains: there is no systematic framework that comprehensively measures AI capabilities in a way that is both understandable and policy-relevant.

To address this gap, the OECD’s AI and Future of Skills team has developed a framework for evaluating AI capabilities. The report Introducing the OECD AI Capability Indicators, released on June 3, presents this approach for the first time, alongside the resulting beta AI Capability Indicators.

For 25 years, the OECD has tested the abilities of 15-year-olds in subjects deemed essential for their success in life as part of the Programme for International Student Assessment (PISA). By combining decades of experience in comparative student assessment with the expertise of AI researchers, we have developed a methodology to assess whether AI can replicate the abilities considered important for humans in schools, the workplace, and society. Given its ability to leverage these two perspectives, we believe our organisation is uniquely positioned to inform policymakers about what AI can and cannot do and to lay the groundwork for the development of a systematic battery of relevant and valid AI assessments in the future: a PISA for AI.

The OECD AI Capability Indicators in a nutshell

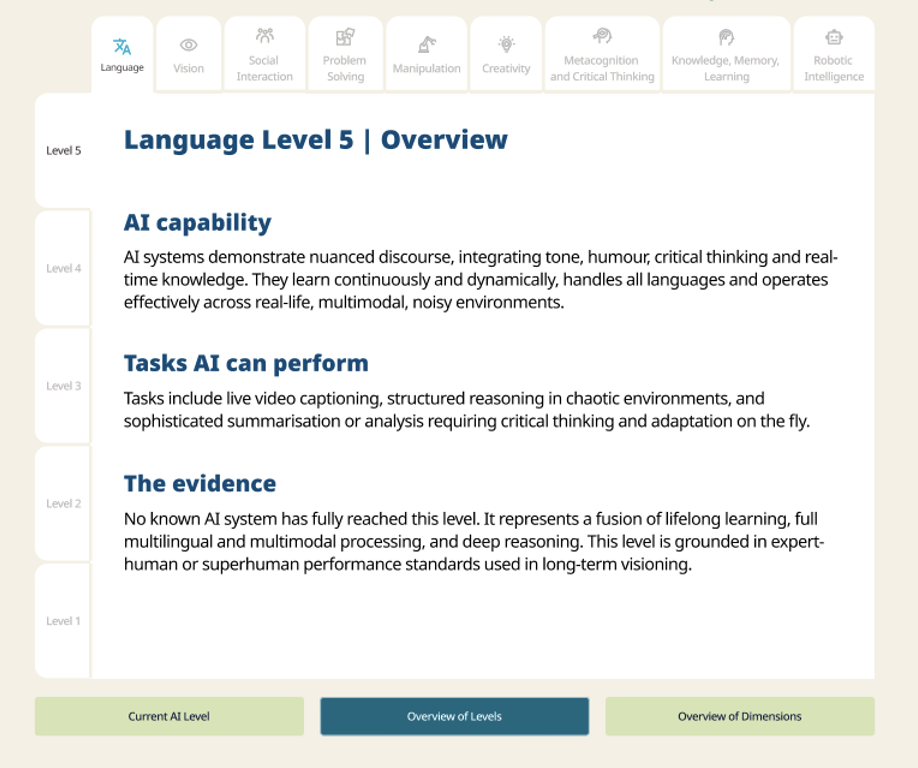

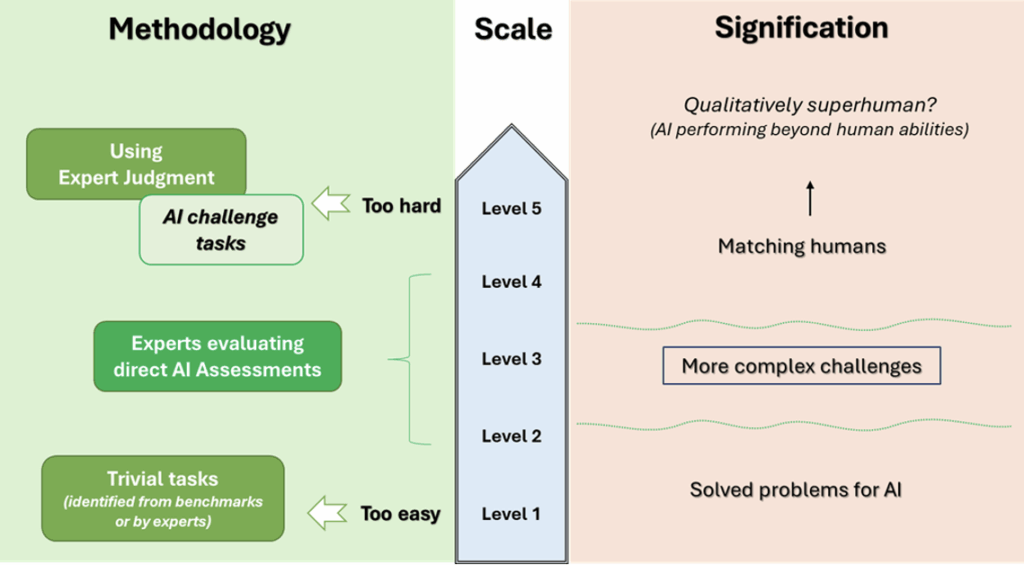

The indicators take the form of five-level scales. Each level describes the sorts of capabilities AI would need to perform human tasks, with the easiest at the bottom of the scale and the hardest at the top. The indicators have been developed across nine capability domains, such as Language, Creativity and Reasoning, that together represent the full range of abilities humans use to perform job tasks. An online tool at aicapabilityindicators.oecd.org lets users navigate the full set of scales and levels. AI moves fast: the current ratings cover AI systems released up to the end of 2024, meaning “reasoning models” such as OpenAI’s o3 and DeepSeek’s R1 will be rated in the next edition.

The scales are based on the types of technical benchmarks described earlier. Our team worked with an expert group of 30 AI researchers, psychologists, labour economists and assessment specialists to link the results of these benchmarks to human abilities. A structured peer review by 25 researchers followed the development process.

The scales aim to convey the major developments in each capability, from the past towards a hypothetical future in which AI can replicate all human abilities. Capabilities AI has already achieved sit at the lower levels, while those it has yet to achieve sit at the upper levels.

Across the scales, we see the strengths and weaknesses of different categories of AI models. For instance, while LLMs achieve the highest rating on the Language scale (level 3) thanks to their ability to produce human-like language and their vast knowledge, they do not top the ratings across the board. Neuro-symbolic models such as Google’s AlphaZero are the best-performing systems on the Creativity scale (level 3) due to their ability to generate solutions that are not only useful but also surprising to humans. In contrast, symbolic AI systems (level 2), nostalgically referred to as good old-fashioned AI, still outperform LLMs (level 1) on the Problem-solving scale because LLMs remain prone to hallucinations and brittleness.

Overall, AI is still well behind humans across all scales.

Nevertheless, many human jobs require only the abilities at the lower end of these scales. Jobs involving tasks at levels 2 and 3 are the most likely to be affected by AI soon. For instance, AI is currently at level 3 on the Knowledge, Learning and Memory scale, which includes generative tasks such as creating illustrations or summarising information, so jobs that include such tasks are likely to be affected by AI. On the other hand, jobs that require capabilities at level 4, such as operating in unknown environments, are unlikely to be affected by current AI systems.

The report presents the beta indicators and invites feedback from two key stakeholder groups: AI researchers and policymakers. Researchers’ AI evaluation work provides the evidence behind the indicators, while policymakers’ ability to interpret and act on these insights is vital for informed policy. We also welcome feedback from other stakeholder groups. The OECD will release the first comprehensive version of the indicators after receiving stakeholder feedback and developing a systematic update protocol.

The future of the Indicators

So, are we claiming to be the only ones who know what AI can and cannot do? In a formal context, sort of.

We hope that the framework and indicators presented here are merely the beginning. The beta indicators’ ratings are already informative and can be utilised in pilot studies examining the implications of AI across diverse policy domains. If our methodology can be scaled up, we believe this framework can serve as the foundation for developing new assessments of AI that test its capabilities to replicate relevant human abilities.

Nevertheless, the indicators need to be updated to reflect the capabilities of AI systems in 2025, and our framework requires refinement, including the integration of additional benchmarks. We will update the scales in early 2026 and are actively seeking partners to help strengthen the indicators and apply them to analyses of how AI affects education and the labour market.

The post From PISA to AI: How the OECD is measuring what AI can do appeared first on OECD.AI.

AI and ethics – what is originality? Maybe we’re just not that special when it comes to creativity?

I don’t trust AI, but I use it all the time.

Let’s face it, that’s a sentiment that many of us can buy into if we’re honest about it. It comes from Paul Mallaghan, Head of Creative Strategy at We Are Tilt, a creative transformation content and campaign agency whose clients include the likes of Diageo, KPMG and Barclays.

Taking part in a panel debate on AI ethics at the recent Evolve conference in Brighton, UK, he made another highly pertinent point when he said of people in general:

We know that we are quite susceptible to confident bullshitters. Basically, that is what ChatGPT [is] right now. There’s something [that] reminds me of the illusory truth effect, where if you hear something a few times, or you hear it said confidently, then you are much more likely to believe it, regardless of the source. I might refer to a certain President who uses that technique fairly regularly, but I think we’re so susceptible to that that we are quite vulnerable.

And, yes, it’s you he’s talking about:

I mean all of us, no matter how intelligent we think we are or how much smarter than the machines we think we are. When I think about trust – and I’m coming at this very much from the perspective of someone who runs a creative agency – we’re not involved in building a Large Language Model (LLM); we’re involved in using it, understanding it, and thinking about what the implications [are] if we get this wrong. What does it mean to be creative in the world of LLMs?

Genuine

Being genuine is vital, he argues, as is being human. So where does Human Intelligence come into the picture, particularly in relation to creativity? His argument:

There’s a certain parasitic quality to what’s being created. We make films, we’re designers, we’re creators, we’re all those sort of things in the company that I run. We have had to just face the fact that we’re using tools that have hoovered up the work of others and then regenerate it and spit it out. There is an ethical dilemma that we face every day when we use those tools.

His firm has come to the conclusion that it has to be responsible for imposing its own guidelines here to some degree, because there’s not a lot happening elsewhere:

To some extent, we are always ahead of regulation, because the nature of being creative is that you’re always going to be experimenting and trying things, and you want to see what the next big thing is. It’s actually very exciting. So that’s all cool, but we’ve realized that if we want to try and do this ethically, we have to establish some of our own ground rules, even if they’re really basic. Like, let’s try and not prompt with the name of an illustrator that we know, because that’s stealing their intellectual property, or the labor of their creative brains.

I’m not a regulatory expert by any means, but I can say that a lot of the clients we work with, to be fair to them, are also trying to get ahead of where I think we are probably at government level, and they’re creating their own frameworks, their own trust frameworks, to try and address some of these things. Everyone is starting to ask questions, and you don’t want to be the person that’s accidentally created a system where everything is then suable because of what you’ve made or what you’ve generated.

Originality

That’s not necessarily an easy ask, of course. What, for example, do we mean by originality? Mallaghan suggests:

Anyone who’s ever tried to create anything knows you’re trying to break patterns. You’re trying to find or re-mix or mash up something that hasn’t happened before. To some extent, that is a good thing that really we’re talking about pattern matching tools. So generally speaking, it’s used in every part of the creative process now. Most agencies, certainly the big ones, certainly anyone that’s working on a lot of marketing stuff, they’re using it to try and drive efficiencies and get incredible margins. They’re going to be on the race to the bottom.

But originality is hard to quantify. I think that actually it doesn’t happen as much as people think anyway, that originality. When you look at ChatGPT or any of these tools, there’s a lot of interesting new tools that are out there that purport to help you in the quest to come up with ideas, and they can be useful. Quite often, we’ll use them to sift out the crappy ideas, because if ChatGPT or an AI tool can come up with it, it’s probably something that’s happened before, something you probably don’t want to use.

More Human Intelligence is needed, it seems:

What I think any creative needs to understand now is you’re going to have to be extremely interesting, and you’re going to have to push even more humanity into what you do, or you’re going to be easily replaced by these tools that probably shouldn’t be doing all the fun stuff that we want to do. [In terms of ethical questions] there’s a bunch, including the copyright thing, but there’s partly just [questions] around purpose and fun. Like, why do we even do this stuff? Why do we do it? There’s a whole industry that exists for people with wonderful brains, and there’s lots of different types of industries [where you] see different types of brains. But why are we trying to do away with something that allows people to get up in the morning and have a reason to live? That is a big question.

My second ethical thing is, what do we do with the next generation who don’t learn craft and quality, and they don’t go through the same hurdles? They may find ways to use [AI] in ways that we can’t imagine, because that’s what young people do, and I have faith in that. But I also think, how are you going to learn the language that helps you interface with, say, a video model, and know what a camera does, and how to ask for the right things, how to tell a story, and what’s right? All that is an ethical issue, like we might be taking that away from an entire generation.

And there’s one last ‘tough love’ question to be posed:

What if we’re not special? Basically, what if all the patterns that are part of us aren’t that special? The only reason I bring that up is that I think that in every career, you associate your identity with what you do. Maybe we shouldn’t, maybe that’s a bad thing, but I know that creatives really associate with what they do. Their identity is tied up in what it is that they actually do, whether they’re an illustrator or whatever. It is a proper existential crisis to look at it and go, ‘Oh, the thing that I thought was special can be regurgitated pretty easily’…It’s a terrifying thing to stare into the Gorgon and look back at it and think, ‘Where are we going with this?’ By the way, I do think we’re special, but maybe we’re not as special as we think we are. A lot of these patterns can be matched.

My take

This was a candid worldview that raised a number of tough questions – and questions are often so much more interesting than answers, aren’t they? The subject of creativity and copyright has been covered at length on diginomica by Chris Middleton, and I think Mallaghan’s comments pretty much chime with most of that.

I was particularly taken by the point about the impact on the younger generation of having at their fingertips AI tools that can ‘do everything, until they can’t’. I recall being horrified a good few years ago when doing a shift in a newsroom of a major tech title and noticing that the flow of copy had suddenly dried up. ‘Where are the stories?’, I shouted. Back came the reply, ‘Oh, the Internet’s gone down’. ‘Then pick up the phone and call people, find some stories,’ I snapped. A sad, baffled young face looked back at me and asked, ‘Who should we call?’. Now apart from suddenly feeling about 103, I was shaken by the fact that as soon as the umbilical cord of the Internet was cut, everyone was rendered helpless.

Take that idea and multiply it a billion-fold when it comes to AI dependency, and the future looks scary. Human Intelligence matters.

Experts gather to discuss ethics, AI and the future of publishing

Publishing stands at a pivotal juncture, said Jeremy North, president of Global Book Business at Taylor & Francis Group, addressing delegates at the 3rd International Conference on Publishing Education in Beijing. Digital intelligence is fundamentally transforming the sector — and this revolution will inevitably create “AI winners and losers”.

True winners, he argued, will be those who embrace AI not as a replacement for human insight but as a tool that strengthens publishing’s core mission: connecting people through knowledge. The key is balance, North said, using AI to enhance creativity without diminishing human judgment or critical thinking.

This vision set the tone for the event, at which the Association for International Publishing Education (AIPE) was officially launched: the world’s first global alliance dedicated to advancing publishing education through international collaboration.

Unveiled at the conference, co-hosted by the Beijing Institute of Graphic Communication (BIGC) and the Publishers Association of China, AIPE brings together nearly 50 member organizations with a mission to foster joint research, training, and innovation in publishing education.

Tian Zhongli, president of BIGC, stressed the need to anchor publishing education in ethics and humanistic values and reaffirmed BIGC’s commitment to building a global talent platform through AIPE.

BIGC will deepen academic-industry collaboration through AIPE to provide a premium platform for nurturing high-level, holistic, and internationally competent publishing talent, he added.

Zhang Xin, secretary of the CPC Committee at BIGC, emphasized that AIPE is expected to help globalize Chinese publishing scholarship, contribute new ideas to the industry, and cultivate a new generation of publishing professionals for the digital era.

Themed “Mutual Learning and Cooperation: New Ecology of International Publishing Education in the Digital Intelligence Era”, the conference also tackled a wide range of challenges and opportunities brought on by AI — from ethical concerns and content ownership to protecting human creativity and rethinking publishing values in higher education.

Wu Shulin, president of the Publishers Association of China, cautioned that while AI brings major opportunities, “we must not overlook the ethical and security problems it introduces”.

Catriona Stevenson, deputy CEO of the UK Publishers Association, echoed this sentiment. She highlighted how British publishers are adopting AI to amplify human creativity and productivity, while calling for global cooperation to protect intellectual property and combat AI tool infringement.

The conference aimed to explore innovative pathways for the publishing industry and for education reform, discuss emerging technological trends, advance higher education philosophies and talent development models, promote global academic exchange and collaboration, and empower knowledge production and dissemination through publishing education in the digital intelligence era.

yangyangs@chinadaily.com.cn
