Funding & Business

From chatbots to collaborators: How AI agents are reshaping enterprise work

Scott White still marvels at how quickly artificial intelligence has transformed from a novelty into a true work partner. Just over a year ago, the product lead for Claude AI at Anthropic watched as early AI coding tools could barely complete a single line of code. Today, he’s building production-ready software features himself — despite not being a professional programmer.

“I no longer think about my job as writing a PRD and trying to convince someone to do something,” White said during a fireside chat at VB Transform 2025, VentureBeat’s annual enterprise AI summit in San Francisco. “The first thing I do is, can I build a workable prototype of this on our staging server and then share a demo of it actually working.”

This shift represents a broader transformation in how enterprises are adopting AI, moving beyond simple chatbots that answer questions to sophisticated “agentic” systems capable of autonomous work. White’s experience offers a glimpse into what may be coming for millions of other knowledge workers.

From code completion to autonomous programming: AI’s breakneck evolution

The evolution has been remarkably swift. When White joined Anthropic, the company’s Claude 2 model could handle basic text completion. The release of Claude 3.5 Sonnet enabled the creation of entire applications, leading to features like Artifacts that let users generate custom interfaces. Now, with Claude 4 achieving a 72.5% score on the SWE-bench coding benchmark, the model can function as what White calls “a fully remote agentic software engineer.”

Claude Code, the company’s latest coding tool, can analyze entire codebases, search the internet for API documentation, issue pull requests, respond to code review comments, and iterate on solutions — all while working asynchronously for hours. White noted that 90% of Claude Code itself was written by the AI system.

“That is like an entire agentic process in the background that was not possible six months ago,” White explained.

Enterprise giants slash work time from weeks to minutes with AI agents

The implications extend far beyond software development. Novo Nordisk, the Danish pharmaceutical giant, has integrated Claude into its clinical reporting workflows, cutting work that previously took 10 weeks down to 10 minutes. GitLab uses the technology for everything from sales proposals to technical documentation. Intuit deploys Claude to provide tax advice directly to consumers.

White distinguishes between different levels of AI integration: simple language models that answer questions, models enhanced with tools like web search, structured workflows that incorporate AI into business processes, and full agents that can pursue goals autonomously using multiple tools and iterative reasoning.

“I think about an agent as something that has a goal, and then it can just do many things to accomplish that goal,” White said. The key enabler has been what he calls the “inexorable” relationship between model intelligence and new product capabilities.

The infrastructure revolution: Building networks of AI collaborators

A critical infrastructure development has been Anthropic’s Model Context Protocol (MCP), which White describes as “the USB-C for integrations.” Rather than companies building separate connections to each data source or tool, MCP provides a standardized way for AI systems to access enterprise software, from Salesforce to internal knowledge repositories.

“It’s really democratizing access to data,” White said, noting that integrations built by one company can be shared and reused by others through the open-source protocol.

For organizations looking to implement AI agents, White recommends starting small and building incrementally. “Don’t try to build an entire agentic system from scratch,” he advised. “Build the component of it, make sure that component works, then build a next component.”

He also emphasized the importance of evaluation systems to ensure AI agents perform as intended. “Evals are the new PRD,” White said, referring to product requirement documents, highlighting how companies must develop new methods to assess AI performance on specific business tasks.
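The idea of evals standing in for a requirements document can be illustrated with a minimal sketch. Everything in it is hypothetical: `run_evals`, `toy_system`, the three cases, and the 0.9 threshold are illustrative stand-ins, not anything White or Anthropic described. Each case pairs an input with a check on the output, and the pass rate is compared against a release threshold.

```python
def run_evals(system, cases, threshold):
    """Run every case through `system` and compare the pass rate to `threshold`.

    `cases` is a list of (name, prompt, check) tuples, where `check` is a
    function that returns True when the output is acceptable.
    """
    results = [(name, bool(check(system(prompt)))) for name, prompt, check in cases]
    pass_rate = sum(ok for _, ok in results) / len(results)
    return pass_rate, pass_rate >= threshold, results


def toy_system(prompt):
    # Hypothetical stand-in for a call to a model or agent.
    return prompt.strip().upper()


# Hypothetical requirements, written as checks rather than prose.
cases = [
    ("uppercases", "hello", lambda out: out == "HELLO"),
    ("strips whitespace", "  hi  ", lambda out: out == "HI"),
    ("adds punctuation", "done", lambda out: out.endswith(".")),
]

pass_rate, ready, results = run_evals(toy_system, cases, threshold=0.9)
print(f"pass rate: {pass_rate:.2f}, ready to ship: {ready}")
```

Here the toy system fails the third case, so it falls below the threshold. In practice the checks would encode the specific business task the agent is meant to perform.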

From AI assistants to AI organizations: The next workforce frontier

Looking ahead, White envisions AI development becoming accessible to non-technical workers, similar to how coding capabilities have advanced. He imagines a future where individuals manage not just one AI agent but entire organizations of specialized AI systems.

“How can everyone be their own mini CPO or CEO?” White asked. “I don’t exactly know what that looks like, but that’s the kind of thing that I wake up and want to get there.”

The transformation White describes reflects broader industry trends as companies grapple with AI’s expanding capabilities. While early adoption focused on experimental use cases, enterprises are increasingly integrating AI into core business processes, fundamentally changing how work gets done.

As AI agents become more autonomous and capable, the challenge shifts from teaching machines to perform tasks to managing AI collaborators that can work independently for extended periods. For White, this future is already arriving — one production feature at a time.

US Tariffs: Swiss Gold Industry Lobby Rejects Idea of US Relocation

Switzerland’s trade group for gold refiners opposes a relocation of some operations to the US to ease a trade imbalance between the countries and help tariff negotiations, newspaper Neue Zuercher Zeitung reported.

The government is trying to find ways to get President Donald Trump to lower his 39% tariff on Switzerland, which is damaging companies and the economy. There have been suggestions that moving gold refining capacity to the US would be an option to appease Trump.

Software commands 40% of cybersecurity budgets as gen AI attacks execute in milliseconds

Software spending now makes up 40% of cybersecurity budgets, with investment expected to grow as CISOs prioritize real-time AI defenses.

How Sakana AI’s new evolutionary algorithm builds powerful AI models without expensive retraining

A new evolutionary technique from Japan-based AI lab Sakana AI enables developers to augment the capabilities of AI models without costly training and fine-tuning processes. The technique, called Model Merging of Natural Niches (M2N2), overcomes the limitations of other model merging methods and can even evolve new models entirely from scratch.

M2N2 can be applied to different types of machine learning models, including large language models (LLMs) and text-to-image generators. For enterprises looking to build custom AI solutions, the approach offers a powerful and efficient way to create specialized models by combining the strengths of existing open-source variants.

What is model merging?

Model merging is a technique for integrating the knowledge of multiple specialized AI models into a single, more capable model. Instead of fine-tuning, which refines a single pre-trained model using new data, merging combines the parameters of several models simultaneously. This process can consolidate a wealth of knowledge into one asset without requiring expensive, gradient-based training or access to the original training data.

For enterprise teams, this offers several practical advantages over traditional fine-tuning. In comments to VentureBeat, the paper’s authors said model merging is a gradient-free process that only requires forward passes, making it computationally cheaper than fine-tuning, which involves costly gradient updates. Merging also sidesteps the need for carefully balanced training data and mitigates the risk of “catastrophic forgetting,” where a model loses its original capabilities after learning a new task. The technique is especially powerful when the training data for specialist models isn’t available, as merging only requires the model weights themselves.
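As a rough illustration of what "combining the parameters" can mean, the sketch below blends two models' weights by plain linear interpolation. This is the simplest form of merging and is an assumption made for illustration; the article does not say which blending function the researchers use. The function name `merge_linear` and the toy weight lists are hypothetical.

```python
def merge_linear(weights_a, weights_b, ratio):
    """Blend two flat parameter lists as ratio * a + (1 - ratio) * b.

    No gradients or training data are involved; only the weights are needed.
    """
    if len(weights_a) != len(weights_b):
        raise ValueError("models must have the same number of parameters")
    if not 0.0 <= ratio <= 1.0:
        raise ValueError("ratio must be in [0, 1]")
    return [ratio * a + (1.0 - ratio) * b for a, b in zip(weights_a, weights_b)]


# Toy "models" with four parameters each (hypothetical values).
model_a = [1.0, 2.0, 3.0, 4.0]
model_b = [3.0, 2.0, 1.0, 0.0]

merged = merge_linear(model_a, model_b, 0.5)
print(merged)
```

Choosing the ratio is the part that earlier approaches tuned by hand and that evolutionary methods search for automatically.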

Early approaches to model merging required significant manual effort, as developers adjusted coefficients through trial and error to find the optimal blend. More recently, evolutionary algorithms have helped automate this process by searching for the optimal combination of parameters. However, a significant manual step remains: developers must still define fixed groups of mergeable parameters, such as layers, in advance. This restriction limits the search space and can prevent the discovery of more powerful combinations.

How M2N2 works

M2N2 addresses these limitations by drawing inspiration from evolutionary principles in nature. The algorithm has three key features that allow it to explore a wider range of possibilities and discover more effective model combinations.

First, M2N2 eliminates fixed merging boundaries, such as blocks or layers. Instead of grouping parameters by pre-defined layers, it uses flexible “split points” and “mixing ratios” to divide and combine models. This means that, for example, the algorithm might merge 30% of the parameters in one layer from Model A with 70% of the parameters from the same layer in Model B. The process starts with an “archive” of seed models. At each step, M2N2 selects two models from the archive, determines a mixing ratio and a split point, and merges them. If the resulting model performs well, it is added back to the archive, replacing a weaker one. This allows the algorithm to explore increasingly complex combinations over time. As the researchers note, “This gradual introduction of complexity ensures a wider range of possibilities while maintaining computational tractability.”
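The archive loop described above can be sketched in a few lines. This is a toy reading, not the released M2N2 code: it assumes flat parameter lists, linear interpolation as the blending function, a mixing weight that flips to its complement after the split point, and a rule that replaces the weakest archive member. The names `merge_at_split`, `evolve`, and `toy_fitness` are hypothetical.

```python
import random


def merge_at_split(theta_a, theta_b, mix, split):
    """Blend with weight `mix` before the split point and `1 - mix` after it."""
    head = [mix * a + (1 - mix) * b for a, b in zip(theta_a[:split], theta_b[:split])]
    tail = [(1 - mix) * a + mix * b for a, b in zip(theta_a[split:], theta_b[split:])]
    return head + tail


def evolve(archive, fitness, steps, rng):
    """Repeatedly merge two archive members; keep the child if it beats the weakest."""
    archive = list(archive)
    for _ in range(steps):
        parent_a, parent_b = rng.sample(archive, 2)
        mix = rng.random()                       # sampled mixing ratio
        split = rng.randint(0, len(parent_a))    # sampled split point
        child = merge_at_split(parent_a, parent_b, mix, split)
        worst = min(range(len(archive)), key=lambda i: fitness(archive[i]))
        if fitness(child) > fitness(archive[worst]):
            archive[worst] = child
    return archive


# Toy task (hypothetical): get every parameter close to 1.0.
target = [1.0, 1.0, 1.0, 1.0]


def toy_fitness(theta):
    return -sum((x - t) ** 2 for x, t in zip(theta, target))


seeds = [[1.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0], [0.5, 0.5, 0.5, 0.5]]
final = evolve(seeds, toy_fitness, steps=200, rng=random.Random(0))
print(max(toy_fitness(theta) for theta in final))
```

With a split point of 2 and a mixing weight of 1.0, the first two seeds combine into exactly the target, which is the kind of within-model split a fixed layer-by-layer scheme could not express.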

Second, M2N2 manages the diversity of its model population through competition. To understand why diversity is crucial, the researchers offer a simple analogy: “Imagine merging two answer sheets for an exam… If both sheets have exactly the same answers, combining them does not make any improvement. But if each sheet has correct answers for different questions, merging them gives a much stronger result.” Model merging works the same way. The challenge, however, is defining what kind of diversity is valuable. Instead of relying on hand-crafted metrics, M2N2 simulates competition for limited resources. This nature-inspired approach naturally rewards models with unique skills, as they can “tap into uncontested resources” and solve problems others can’t. These niche specialists, the authors note, are the most valuable for merging.
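One way to picture competition for limited resources is to treat each test example as a fixed reward that is split among all the models that solve it. The sketch below is an assumption-laden illustration of that idea, not the researchers' exact formula: `shared_fitness`, the `capacity` parameter, and the score table are hypothetical.

```python
def shared_fitness(scores, capacity=1.0, eps=1e-9):
    """Split each example's fixed reward among the models that score on it.

    scores[m][j] is model m's score on example j, assumed to lie in [0, 1].
    """
    n_examples = len(scores[0])
    totals = [sum(model[j] for model in scores) for j in range(n_examples)]
    return [
        sum(capacity * model[j] / (totals[j] + eps) for j in range(n_examples))
        for model in scores
    ]


# Three models solve the same two examples; a fourth solves only the third.
scores = [
    [1.0, 1.0, 0.0],
    [1.0, 1.0, 0.0],
    [1.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],  # niche specialist
]

raw = [sum(model) for model in scores]
shared = shared_fitness(scores)
print(raw)
print([round(value, 3) for value in shared])
```

By raw score the specialist looks weakest, but under sharing it ranks first because nobody else competes for the example it solves.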

Third, M2N2 uses a heuristic called “attraction” to pair models for merging. Rather than simply combining the top-performing models as in other merging algorithms, it pairs them based on their complementary strengths. An “attraction score” identifies pairs where one model performs well on data points that the other finds challenging. This improves both the efficiency of the search and the quality of the final merged model.
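A simplified version of such a score can be written as the total amount by which a candidate partner outperforms a model on the examples where that model is weaker. This is a sketch under that assumption; the paper's actual score may weight examples differently, and `attraction` and `pick_partner` are hypothetical names.

```python
def attraction(scores_a, scores_b):
    """Sum of B's advantage over A on the examples where B does better."""
    return sum(max(b - a, 0.0) for a, b in zip(scores_a, scores_b))


def pick_partner(index, scores):
    """Return the index of the model most attractive to model `index`."""
    candidates = [i for i in range(len(scores)) if i != index]
    return max(candidates, key=lambda i: attraction(scores[index], scores[i]))


# Per-example scores for three models (hypothetical values).
scores = [
    [1.0, 1.0, 0.0, 0.0],  # model 0
    [1.0, 1.0, 1.0, 0.0],  # model 1: best overall, but overlaps with model 0
    [0.0, 0.0, 1.0, 1.0],  # model 2: covers exactly what model 0 misses
]

partner = pick_partner(0, scores)
print(partner)
```

Model 1 has the highest total score, yet model 2 is chosen as the partner for model 0 because it contributes more that model 0 lacks.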

M2N2 in action

The researchers tested M2N2 across three different domains, demonstrating its versatility and effectiveness.

The first was a small-scale experiment evolving neural network–based image classifiers from scratch on the MNIST dataset. M2N2 achieved the highest test accuracy by a substantial margin compared to other methods. The results showed that its diversity-preservation mechanism was key, allowing it to maintain an archive of models with complementary strengths that facilitated effective merging while systematically discarding weaker solutions.

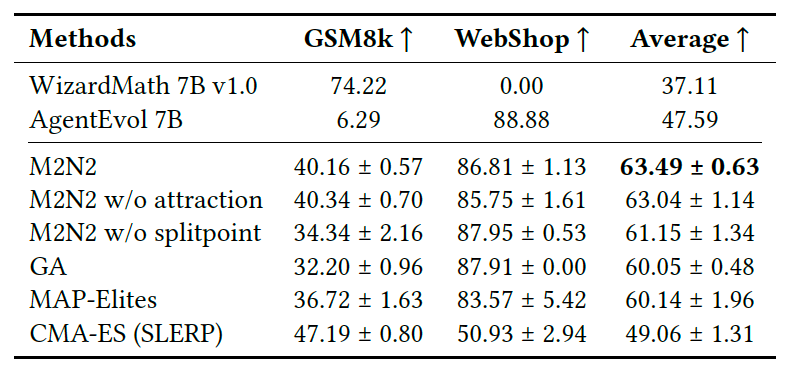

Next, they applied M2N2 to LLMs, combining a math specialist model (WizardMath-7B) with an agentic specialist (AgentEvol-7B), both of which are based on the Llama 2 architecture. The goal was to create a single agent that excelled at both math problems (GSM8K dataset) and web-based tasks (WebShop dataset). The resulting model achieved strong performance on both benchmarks, showcasing M2N2’s ability to create powerful, multi-skilled models.

Finally, the team merged diffusion-based image generation models. They combined a model trained on Japanese prompts (JSDXL) with three Stable Diffusion models primarily trained on English prompts. The objective was to create a model that combined the best image generation capabilities of each seed model while retaining the ability to understand Japanese. The merged model not only produced more photorealistic images with better semantic understanding but also developed an emergent bilingual ability. It could generate high-quality images from both English and Japanese prompts, even though it was optimized exclusively using Japanese captions.

For enterprises that have already developed specialist models, the business case for merging is compelling. The authors point to new, hybrid capabilities that would be difficult to achieve otherwise. For example, merging an LLM fine-tuned for persuasive sales pitches with a vision model trained to interpret customer reactions could create a single agent that adapts its pitch in real time based on live video feedback. This unlocks the combined intelligence of multiple models at the cost and latency of running just one.

Looking ahead, the researchers see techniques like M2N2 as part of a broader trend toward “model fusion.” They envision a future where organizations maintain entire ecosystems of AI models that are continuously evolving and merging to adapt to new challenges.

“Think of it like an evolving ecosystem where capabilities are combined as needed, rather than building one giant monolith from scratch,” the authors suggest.

The researchers have released the code of M2N2 on GitHub.

The biggest hurdle to this dynamic, self-improving AI ecosystem, the authors believe, is not technical but organizational. “In a world with a large ‘merged model’ made up of open-source, commercial, and custom components, ensuring privacy, security, and compliance will be a critical problem.” For businesses, the challenge will be figuring out which models can be safely and effectively absorbed into their evolving AI stack.
