AI Research

Despite the immense promise of artificial intelligence in urban infrastructure, most Indian cities remain far from fully integrating it.

According to Raghunath K., senior consultant at the Texas Department of Transportation, “Urban departments often operate in silos with fragmented data systems,” severely limiting AI’s predictive power. With 80% of Indian cities lacking integrated data frameworks and only 20% of municipal leaders confident in using AI, real progress remains slow.

Prajjwal Misra, director at Rudrabhishek Infosystem (RIPL), adds that the hesitation isn’t just technological—it’s cultural and economic. “AI is helping us automate a lot of manual work… it’s doing the exact opposite [of replacing jobs],” he noted. High upfront costs and public mistrust around data privacy remain strong deterrents, while most government projects still fail to scale beyond pilot phases. “For every 500 AI pilot projects, only two or three are fully implemented,” Misra revealed.

Even so, AI is quietly at work. From satellite imagery detecting property encroachments to digital twins in public transport, small wins are emerging. Koilakonda points out that AI-based tools could reduce procurement waste by up to 20% and cut downtime by 30% in predictive maintenance scenarios. “If you’ve ever gotten a traffic ticket for overspeeding in India, that’s AI,” Misra remarked.

Yet the path forward requires more than isolated efforts. Misra envisions AI as part of a multi-tech ecosystem, while Koilakonda urges investment in data governance and capacity-building. Until then, AI may remain an invisible assistant rather than a true planner of India’s urban future.

How London Stock Exchange Group is detecting market abuse with their AI-powered Surveillance Guide on Amazon Bedrock

London Stock Exchange Group (LSEG) is a global provider of financial markets data and infrastructure. It operates the London Stock Exchange and manages international equity, fixed income, and derivative markets. The group also develops capital markets software, offers real-time and reference data products, and provides extensive post-trade services. This post was co-authored with Charles Kellaway and Rasika Withanawasam of LSEG.

Financial markets are remarkably complex, hosting increasingly dynamic investment strategies across new asset classes and interconnected venues. Accordingly, regulators place great emphasis on the ability of market surveillance teams to keep pace with evolving risk profiles. However, the landscape is vast; London Stock Exchange alone facilitates the trading and reporting of over £1 trillion of securities by 400 members annually. Effective monitoring must cover all MiFID asset classes, markets and jurisdictions to detect market abuse, while also giving weight to participant relationships, and market surveillance systems must scale with volumes and volatility. As a result, many systems are outdated and unsatisfactory for regulatory expectations, requiring manual and time-consuming work.

To address these challenges, London Stock Exchange Group (LSEG) has developed an innovative solution using Amazon Bedrock, a fully managed service that offers a choice of high-performing foundation models from leading AI companies, to automate and enhance their market surveillance capabilities. LSEG’s AI-powered Surveillance Guide helps analysts efficiently review trades flagged for potential market abuse by automatically analyzing news sensitivity and its impact on market behavior.

In this post, we explore how LSEG used Amazon Bedrock and Anthropic’s Claude foundation models to build an automated system that significantly improves the efficiency and accuracy of market surveillance operations.

The challenge

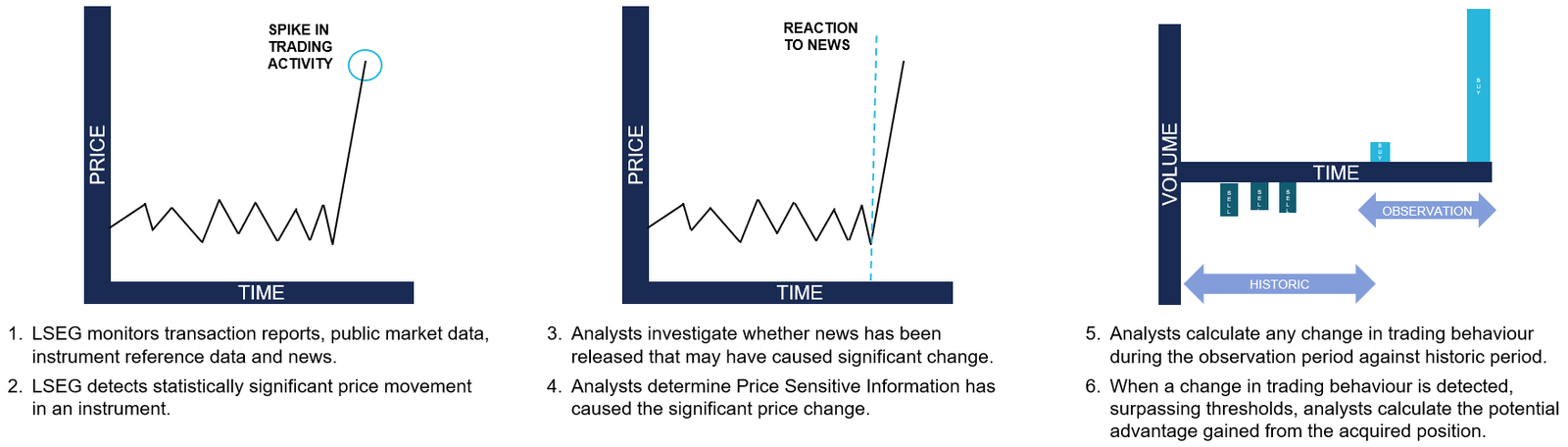

Currently, LSEG’s surveillance monitoring systems generate automated, customized alerts to flag suspicious trading activity to the Market Supervision team. Analysts then conduct initial triage assessments to determine whether the activity warrants further investigation, which might require undertaking differing levels of qualitative analysis. This could involve manual collation of all and any evidence that might be applicable when methodically corroborating regulation, news, sentiment and trading activity. For example, during an insider dealing investigation, analysts are alerted to statistically significant price movements. The analyst must then conduct an initial assessment of related news during the observation period to determine if the highlighted price move has been caused by specific news and its likely price sensitivity, as shown in the following figure. This initial step in assessing the presence, or absence, of price sensitive news guides the subsequent actions an analyst will take with a possible case of market abuse.

Initial triaging can be a time-consuming and resource-intensive process, and it can still necessitate a full investigation if the identified behavior remains potentially suspicious or abusive.

Moreover, the dynamic nature of financial markets and evolving tactics and sophistication of bad actors demand that market facilitators revisit automated rules-based surveillance systems. The increasing frequency of alerts and high number of false positives adversely impact an analyst’s ability to devote quality time to the most meaningful cases, and such heightened emphasis on resources could result in operational delays.

Solution overview

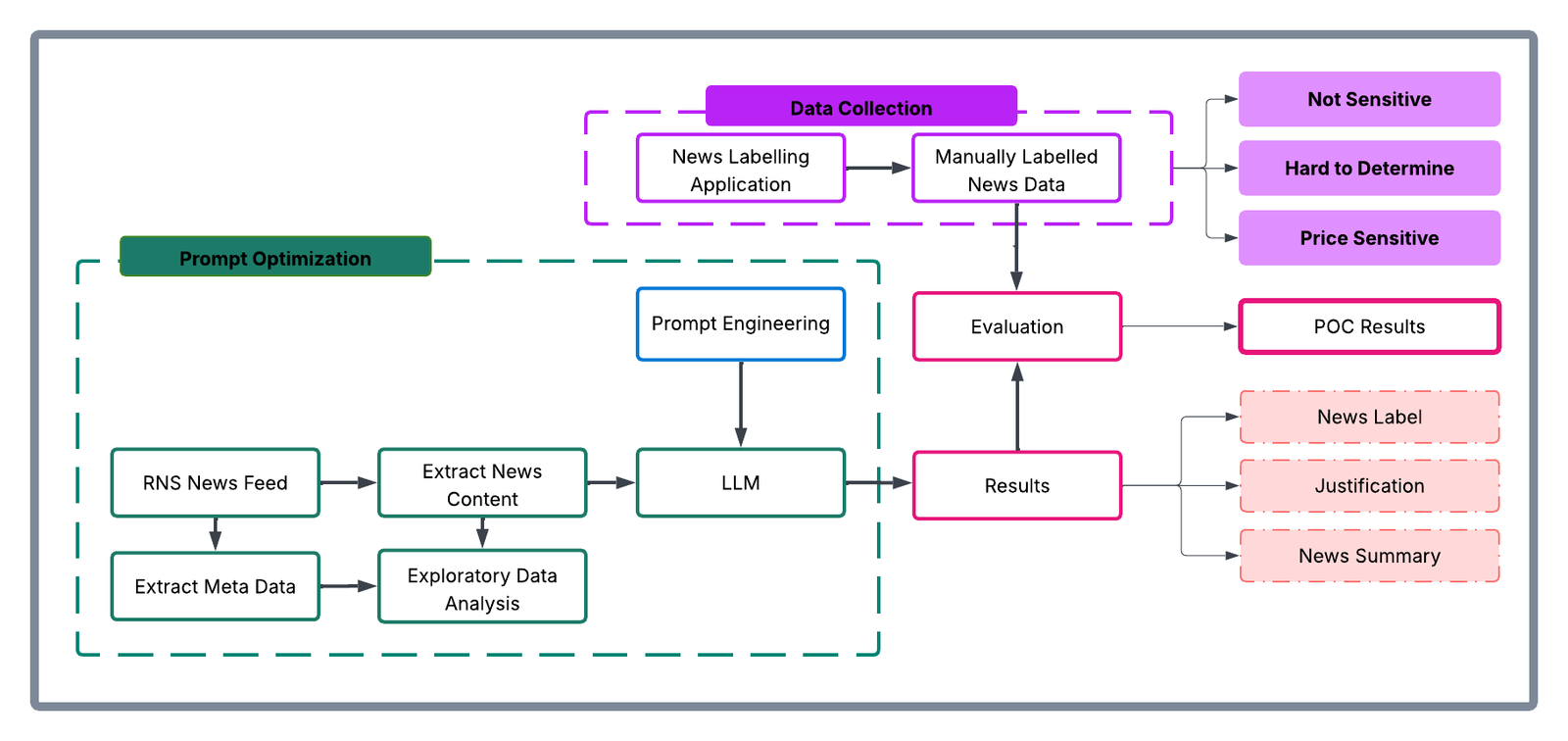

To address these challenges, LSEG collaborated with AWS to improve insider dealing detection, developing a generative AI prototype that automatically predicts the probability of news articles being price sensitive. The system employs Anthropic’s Claude Sonnet 3.5 model (the most price-performant model at the time) through Amazon Bedrock to analyze news content from LSEG’s Regulatory News Service (RNS) and classify articles based on their potential market impact. The results help analysts determine more quickly whether highlighted trading activity can be mitigated during the observation period.

The architecture consists of three main components:

- A data ingestion and preprocessing pipeline for RNS articles

- Amazon Bedrock integration for news analysis using Claude Sonnet 3.5

- Inference application for visualising results and predictions

The following diagram illustrates the conceptual approach:

The workflow processes news articles through the following steps:

- Ingest raw RNS news documents in HTML format

- Preprocess and extract clean news text

- Fill the classification prompt template with text from the news documents

- Prompt Anthropic’s Claude Sonnet 3.5 through Amazon Bedrock

- Receive and process model predictions and justifications

- Present results through the visualization interface developed using Streamlit
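The first three steps of this workflow can be sketched in a few lines of Python. This is a minimal illustration rather than LSEG's pipeline: the `PROMPT_TEMPLATE` wording, the `extract_text` and `build_prompt` helpers, and the sample HTML are all hypothetical, and the model call through Amazon Bedrock is deliberately left out.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects visible text and skips <script> and <style> content."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is outside script/style blocks.
        if self._skip_depth == 0 and data.strip():
            self._chunks.append(data.strip())

    def text(self):
        return " ".join(self._chunks)


def extract_text(html: str) -> str:
    """Step 2: strip markup from a raw news document."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return parser.text()


# Hypothetical template; the prompt used in the project is not reproduced here.
PROMPT_TEMPLATE = (
    "You are assisting a market surveillance analyst.\n"
    "Summarise the news article below, classify its price sensitivity, "
    "and explain your reasoning.\n\n"
    "<article>\n{article}\n</article>"
)


def build_prompt(html: str) -> str:
    """Step 3: fill the classification prompt with the cleaned article text."""
    return PROMPT_TEMPLATE.format(article=extract_text(html))


# Made-up sample document, used only to exercise the helpers.
sample_html = (
    "<html><head><style>p {color: red}</style></head>"
    "<body><h1>Trading update</h1><p>Revenue rose in the first half.</p></body></html>"
)
prompt = build_prompt(sample_html)
```

The resulting `prompt` string is what would then be sent to the model; keeping extraction and templating separate makes each step easy to test on its own.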

Methodology

The team collated a comprehensive dataset of approximately 250,000 RNS articles spanning 6 consecutive months of trading activity in 2023. The raw data (HTML documents from RNS) were first preprocessed within the AWS environment: extraneous HTML elements were removed and the documents were formatted to extract clean textual content. Having isolated the substantive news content, the team then carried out exploratory data analysis to understand distribution patterns within the RNS corpus, focusing on three dimensions:

- News categories: Distribution of articles across different regulatory categories

- Instruments: Financial instruments referenced in the news articles

- Article length: Statistical distribution of document sizes

Exploration provided contextual understanding of the news landscape and informed the sampling strategy in creating a representative evaluation dataset. 110 articles were selected to cover major news categories, and this curated subset was presented to market surveillance analysts who, as domain experts, evaluated each article’s price sensitivity on a nine-point scale, as shown in the following image:

- 1–3: PRICE_NOT_SENSITIVE – Low probability of price sensitivity

- 4–6: HARD_TO_DETERMINE – Uncertain price sensitivity

- 7–9: PRICE_SENSITIVE – High probability of price sensitivity
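The banding above amounts to a simple lookup from an analyst's score to a label. A minimal sketch follows, assuming integer scores; the function name `label_from_score` is hypothetical.

```python
def label_from_score(score: int) -> str:
    """Map a 1-9 analyst score to the three bands listed above."""
    if not 1 <= score <= 9:
        raise ValueError(f"score must be between 1 and 9, got {score}")
    if score <= 3:
        return "PRICE_NOT_SENSITIVE"
    if score <= 6:
        return "HARD_TO_DETERMINE"
    return "PRICE_SENSITIVE"
```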

The experiment was executed within Amazon SageMaker using Jupyter Notebooks as the development environment. The technical stack consisted of:

- Instructor library: Provided integration capabilities with Anthropic’s Claude Sonnet 3.5 model in Amazon Bedrock

- Amazon Bedrock: Served as the API infrastructure for model access

- Custom data processing pipelines (Python): For data ingestion and preprocessing

This infrastructure enabled systematic experimentation with various algorithmic approaches, including traditional supervised learning methods, prompt engineering with foundation models, and fine-tuning scenarios.

The evaluation framework established specific technical success metrics:

- Data pipeline implementation: Successful ingestion and preprocessing of RNS data

- Metric definition: Clear articulation of precision, recall, and F1 metrics

- Workflow completion: Execution of comprehensive exploratory data analysis (EDA) and experimental workflows

The analytical approach was a two-step classification process, as shown in the following figure:

- Step 1: Classify news articles as potentially price sensitive or other

- Step 2: Classify news articles as potentially price not sensitive or other

This multi-stage architecture was designed to maximize classification accuracy by allowing analysts to focus on specific aspects of price sensitivity at each stage. The results from each step were then merged to produce the final output, which was compared with the human-labeled dataset to generate quantitative results.

To consolidate the results from both classification steps, the data merging rules followed were:

| Step 1 Classification | Step 2 Classification | Final Classification |
|---|---|---|
| Sensitive | Other | Sensitive |
| Other | Non-sensitive | Non-sensitive |
| Other | Other | Ambiguous: requires manual review (Hard to Determine) |
| Sensitive | Non-sensitive | Ambiguous: requires manual review (Hard to Determine) |
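The merging rules translate directly into a lookup table. The sketch below is one way to write them down; the constant and function names are hypothetical, and any combination outside the four rows is treated as an error rather than guessed at.

```python
SENSITIVE = "Sensitive"
NON_SENSITIVE = "Non-sensitive"
OTHER = "Other"
HARD_TO_DETERMINE = "Hard to Determine"

# (step 1 result, step 2 result) -> final classification, one entry per table row.
MERGE_RULES = {
    (SENSITIVE, OTHER): SENSITIVE,
    (OTHER, NON_SENSITIVE): NON_SENSITIVE,
    (OTHER, OTHER): HARD_TO_DETERMINE,
    (SENSITIVE, NON_SENSITIVE): HARD_TO_DETERMINE,
}


def merge_classifications(step1: str, step2: str) -> str:
    """Combine the two binary classification results into the final label."""
    try:
        return MERGE_RULES[(step1, step2)]
    except KeyError:
        raise ValueError(f"unexpected combination: {step1!r}, {step2!r}") from None
```

Note that conflicting answers (sensitive in step 1, non-sensitive in step 2) fall back to manual review instead of favouring either step.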

Based on the insights gathered, prompts were optimized. The prompt templates elicited three key components from the model:

- A concise summary of the news article

- A price sensitivity classification

- A chain-of-thought explanation justifying the classification decision
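One way to hold these three components is a small typed record that rejects malformed model output. The sketch below uses only the standard library and assumes the model is asked to reply in JSON; the field names, the `ALLOWED_LABELS` set, and the `parse_assessment` helper are hypothetical and do not reflect the project's actual schema or its use of the Instructor library.

```python
import json
from dataclasses import dataclass

# Hypothetical label set, borrowed from the analysts' three bands.
ALLOWED_LABELS = {"PRICE_SENSITIVE", "HARD_TO_DETERMINE", "PRICE_NOT_SENSITIVE"}
REQUIRED_FIELDS = {"summary", "classification", "justification"}


@dataclass(frozen=True)
class NewsAssessment:
    summary: str          # concise summary of the article
    classification: str   # one of ALLOWED_LABELS
    justification: str    # the model's reasoning for the label


def parse_assessment(raw: str) -> NewsAssessment:
    """Parse a JSON reply and check that all three components are present."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    if data["classification"] not in ALLOWED_LABELS:
        raise ValueError(f"unknown classification: {data['classification']!r}")
    return NewsAssessment(
        summary=data["summary"],
        classification=data["classification"],
        justification=data["justification"],
    )
```

Validating the reply before it reaches the interface means a malformed response fails loudly instead of surfacing as a silent misclassification.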

The following is an example prompt:

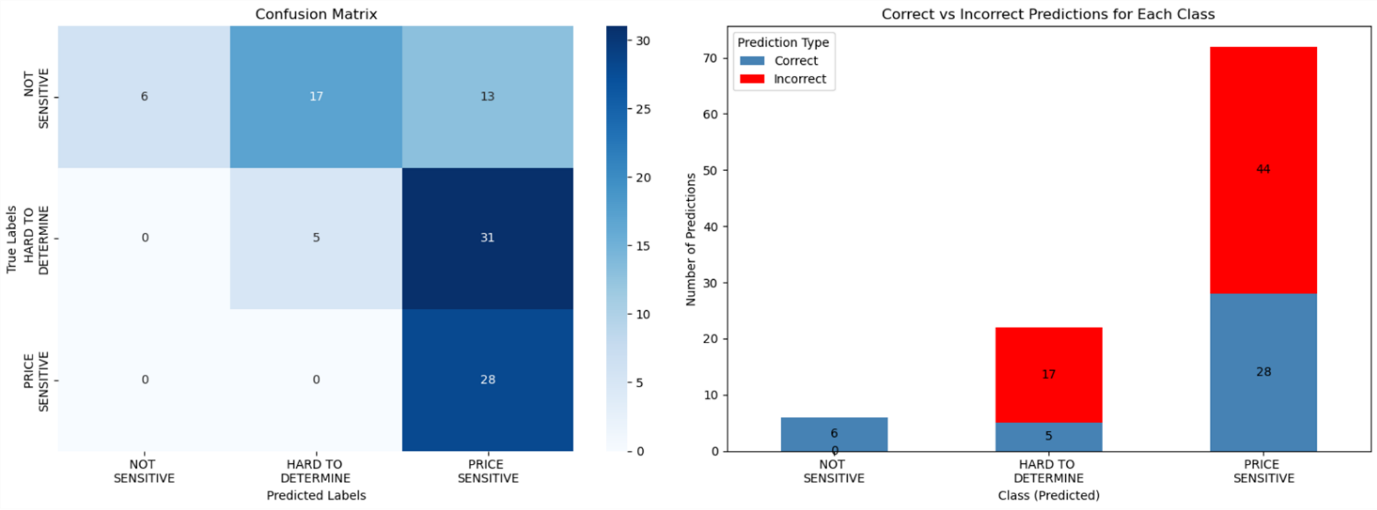

As shown in the following figure, the solution was optimized to maximize:

- Precision for the NOT SENSITIVE class

- Recall for the PRICE SENSITIVE class

This optimization strategy was deliberate, facilitating high confidence in non-sensitive classifications to reduce unnecessary escalations to human analysts (in other words, to reduce false positives). Through this methodical approach, prompts were iteratively refined while maintaining rigorous evaluation standards through comparison against the expert-annotated baseline data.
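The two target metrics can be computed per class with a few lines of Python. This sketch uses the standard definitions of precision and recall; the label strings and the six-article example are made-up toy data, not results from the project.

```python
def precision(y_true, y_pred, label):
    """Share of articles predicted as `label` that analysts also gave `label`."""
    predicted = [t for t, p in zip(y_true, y_pred) if p == label]
    if not predicted:
        return None  # undefined when the class is never predicted
    return sum(t == label for t in predicted) / len(predicted)


def recall(y_true, y_pred, label):
    """Share of articles analysts gave `label` that the model also gave `label`."""
    actual = [p for t, p in zip(y_true, y_pred) if t == label]
    if not actual:
        return None  # undefined when the class never occurs
    return sum(p == label for p in actual) / len(actual)


# Toy data for illustration only.
NOT, HARD, SENS = "NOT_SENSITIVE", "HARD_TO_DETERMINE", "PRICE_SENSITIVE"
analyst_labels = [NOT, NOT, HARD, SENS, SENS, NOT]
model_labels = [NOT, HARD, HARD, SENS, SENS, HARD]

not_sensitive_precision = precision(analyst_labels, model_labels, NOT)
price_sensitive_recall = recall(analyst_labels, model_labels, SENS)
not_sensitive_recall = recall(analyst_labels, model_labels, NOT)
```

In this toy example both target metrics come out at 1.0 while recall for the non-sensitive class is only one in three, which is the trade-off such a strategy accepts: the model labels an article non-sensitive only when it is confident, and sends the rest to an analyst.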

Key benefits and results

Over a 6-week period, Surveillance Guide demonstrated remarkable accuracy when evaluated on a representative sample dataset. Key achievements include the following:

- 100% precision in identifying non-sensitive news, allocating 6 articles to this category that analysts confirmed were non price sensitive

- 100% recall in detecting price-sensitive content, allocating 36 hard to determine and 28 price sensitive articles labelled by analysts into one of these two categories (never misclassifying price sensitive content)

- Automated analysis of complex financial news

- Detailed justifications for classification decisions

- Effective triaging of results by sensitivity level

In this implementation, LSEG has employed Amazon Bedrock so that they can use secure, scalable access to foundation models through a unified API, minimizing the need for direct model management and reducing operational complexity. Because of the serverless architecture of Amazon Bedrock, LSEG can take advantage of dynamic scaling of model inference capacity based on news volume, while maintaining consistent performance during market-critical periods. Its built-in monitoring and governance features support reliable model performance and maintain audit trails for regulatory compliance.

Impact on market surveillance

This AI-powered solution transforms market surveillance operations by:

- Reducing manual review time for analysts

- Improving consistency in price-sensitivity assessment

- Providing detailed audit trails through automated justifications

- Enabling faster response to potential market abuse cases

- Scaling surveillance capabilities without proportional resource increases

The system’s ability to process news articles instantly and provide detailed justifications helps analysts focus their attention on the most critical cases while maintaining comprehensive market oversight.

Proposed next steps

LSEG plans to first enhance the solution, for internal use, by:

- Integrating additional data sources, including company financials and market data

- Implementing few-shot prompting and fine-tuning capabilities

- Expanding the evaluation dataset for continued accuracy improvements

- Deploying in live environments alongside manual processes for validation

- Adapting to additional market abuse typologies

Conclusion

LSEG’s Surveillance Guide demonstrates how generative AI can transform market surveillance operations. Powered by Amazon Bedrock, the solution improves efficiency and enhances the quality and consistency of market abuse detection.

As financial markets continue to evolve, AI-powered solutions architected along similar lines will become increasingly important for maintaining integrity and compliance. AWS and LSEG are intent on being at the forefront of this change.

The selection of Amazon Bedrock as the foundation model service provides LSEG with the flexibility to iterate on their solution while maintaining enterprise-grade security and scalability. To learn more about building similar solutions with Amazon Bedrock, visit the Amazon Bedrock documentation or explore other financial services use cases in the AWS Financial Services Blog.

About the authors

Charles Kellaway is a Senior Manager in the Equities Trading team at LSE plc, based in London. With a background spanning both Equity and Insurance markets, Charles specialises in deep market research and business strategy, with a focus on deploying technology to unlock liquidity and drive operational efficiency. His work bridges the gap between finance and engineering, and he always brings a cross-functional perspective to solving complex challenges.

Rasika Withanawasam is a seasoned technology leader with over two decades of experience architecting and developing mission-critical, scalable, low-latency software solutions. Rasika’s core expertise lies in big data and machine learning applications, focusing intently on FinTech and RegTech sectors. He has held several pivotal roles at LSEG, including Chief Product Architect for the flagship Millennium Surveillance and Millennium Analytics platforms, and currently serves as Manager of the Quantitative Surveillance & Technology team, where he leads AI/ML solution development.

Richard Chester is a Principal Solutions Architect at AWS, advising large Financial Services organisations. He has 25+ years’ experience across the Financial Services Industry where he has held leadership roles in transformation programs, DevOps engineering, and Development Tooling. Since moving across to AWS from being a customer, Richard is now focused on driving the execution of strategic initiatives, mitigating risks and tackling complex technical challenges for AWS customers.

CMU Research Uses AI to Better Predict Kidney Failure

Carnegie Mellon University researchers have created new AI models that do a better job of predicting which patients with chronic kidney disease (CKD) will go on to develop end-stage renal disease (ESRD). By combining medical records and insurance data, their approach gives doctors a better chance of catching the disease earlier and planning care more effectively, and it helps reduce health gaps for people living with kidney disease.

“Our study presents a robust framework for predicting ESRD outcomes, improving clinical decision-making through integrated multisourced data and advanced analytics,” explained Rema Padman, Trustees Professor of Management Science and Healthcare Informatics at Carnegie Mellon’s Heinz College of Information Systems and Public Policy, who led the study. “Future research will expand data integration and extend this framework to other chronic diseases.”

CKD is a long-term condition where kidney function slowly declines, sometimes leading to ESRD, when kidneys lose nearly all function and patients need dialysis or a transplant to survive. Globally, CKD affects between 8% and 16% of people, with about 5% to 10% eventually developing ESRD.

The disease is expensive: in the U.S., Medicare spends a disproportionate amount on CKD patients, especially those who reach ESRD. Many ESRD patients are also readmitted to the hospital within a month of discharge, underscoring the urgent need for earlier detection and better management.

For this study, the researchers looked at data from more than 10,000 CKD patients collected between 2009 and 2018. They tested several statistical, machine learning and deep learning models, using different time windows to see how early predictions could be made accurately. The models that combined both clinical and claims data performed better than those using only one type of information.

They found that a 24-month observation window offered the best balance between early detection and prediction accuracy.

“Our work bridges a critical gap by developing a framework that uses integrated clinical and claims data rather than isolated data sources,” noted Yubo Li, a Ph.D. student at Heinz who co-authored the study. “By minimizing the observation window needed for accurate predictions, our approach balances clinical relevance with patient-centered practicality; this integration enhances both predictive accuracy and clinical utility, enabling more informed decision-making to improve patient outcomes.”

Among the study’s limitations, the authors say their reliance on data from one institution may limit the generalizability of their model to other care settings. In addition, their use of data from electronic health records can introduce observational bias, incomplete records and underrepresentation of certain patient groups, which can undermine both accuracy and fairness.

The research was funded by the Center for Machine Learning and Health at Carnegie Mellon University.

UMD Researchers Leverage AI to Enhance Confidence in HPV Vaccination

Human papillomavirus (HPV) vaccination represents a critical breakthrough in cancer prevention, yet its uptake among adolescents remains disappointingly low. Despite overwhelming evidence supporting the vaccine’s safety and efficacy against multiple types of cancer, including cervical, anal, and oropharyngeal cancers, only about 61% of teenagers aged 13 to 17 in the United States have received the recommended doses. More concerning still, vaccination rates are lower among younger children, starting from age nine, when the vaccine is first suggested. Addressing this paradox between scientific consensus and public hesitancy has become a focal point for an innovative research project spearheaded by communication expert Professor Xiaoli Nan at the University of Maryland (UMD).

The project’s core ambition involves harnessing artificial intelligence (AI) to transform the way vaccine information is communicated to parents, aiming to dismantle the barriers that fuel hesitancy. With a robust $2.8 million grant from the National Cancer Institute, part of the National Institutes of Health, Nan and her interdisciplinary team are developing a personalized, AI-driven chatbot. This technology is engineered not only to provide accurate health information but to adapt dynamically to parents’ individual concerns, beliefs, and communication preferences in real time—offering a tailored conversational experience that traditional brochures and websites simply cannot match.

HPV vaccination has long struggled with public misconceptions, stigma, and misinformation that discourage uptake. A significant factor behind the reluctance is tied to the vaccine’s association with a sexually transmitted infection, which prompts some parents to believe their children are too young for the vaccine or that vaccination might imply premature engagement with sexual activity. This misconception, alongside a lack of tailored communication strategies, has contributed to persistent disparities in vaccination rates. These disparities are especially pronounced among men, individuals with lower educational attainment, and those with limited access to healthcare, as Professor Cheryl Knott, a public health behavioral specialist at UMD, highlights.

Unlike generic informational campaigns, the AI chatbot leverages cutting-edge natural language processing (NLP) to simulate nuanced human dialogue. However, it does so without succumbing to the pitfalls of generative AI models, such as ChatGPT, which can sometimes produce inaccurate or misleading answers. Instead, the system draws on large language models to generate a comprehensive array of possible responses. These are then rigorously curated and vetted by domain experts before deployment, ensuring that the chatbot’s replies remain factual, reliable, and sensitive to users’ needs. When interacting live, the chatbot analyzes parents’ input in real time, selecting the most appropriate response from this trusted set, thereby balancing flexibility with accuracy.
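The select-from-a-vetted-set idea can be illustrated with a toy retrieval step. The article does not describe how the UMD system matches a parent's message to a reply, so the word-overlap (Jaccard) scoring below is an assumption chosen for simplicity, and the example concerns, placeholder replies, threshold, and function names are all hypothetical.

```python
import re

# Each entry pairs an example parent concern with a placeholder for the
# reply that domain experts would have written and approved in advance.
VETTED = [
    ("is the vaccine safe for my child", "[expert-vetted reply on safety]"),
    ("my child is too young for this vaccine", "[expert-vetted reply on recommended age]"),
]
FALLBACK = "[expert-vetted reply offering to connect the parent with a clinician]"


def tokens(text: str) -> set:
    """Lowercase the text and split it into a set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def jaccard(a: set, b: set) -> float:
    """Overlap between two word sets, from 0.0 (disjoint) to 1.0 (identical)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def select_reply(message: str, threshold: float = 0.2) -> str:
    """Return the vetted reply whose example concern best matches the message."""
    words = tokens(message)
    best_score, best_reply = max(
        (jaccard(words, tokens(concern)), reply) for concern, reply in VETTED
    )
    # Never generate free text: below the threshold, use a safe fallback.
    return best_reply if best_score >= threshold else FALLBACK
```

Whatever matching method is used, the property this design relies on is that the function can only ever return text from the approved set.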

This “middle ground” model, as described by Philip Resnik, an MPower Professor affiliated with UMD’s Department of Linguistics and Institute for Advanced Computer Studies, preserves the flexibility of conversational AI while instituting “guardrails” to maintain scientific integrity. The approach avoids the rigidity of scripted chatbots that deliver canned, predictable replies; simultaneously, it steers clear of the “wild west” environment of fully generative chatbots, where the lack of control can lead to misinformation. Instead, it offers an adaptive yet responsible communication tool, capable of engaging parents on their terms while preserving public health objectives.

The first phase of this ambitious experiment emphasizes iterative refinement of the chatbot via a user-centered design process. This involves collecting extensive feedback from parents, healthcare providers, and community stakeholders to optimize the chatbot’s effectiveness and cultural sensitivity. Once this foundational work is complete, the team plans to conduct two rigorous randomized controlled trials. The first trial, conducted online with a nationally representative sample of U.S. parents, will compare the chatbot’s impact against traditional CDC pamphlets and measure differences in vaccine acceptance. The second trial will take place in clinical environments in Baltimore, including pediatric offices, to observe how the chatbot influences decision-making in real-world healthcare settings.

Min Qi Wang, a behavioral health professor participating in the project, emphasizes that “tailored, timely, and actionable communication” facilitated by AI signals a paradigm shift in public health strategies. This shift extends beyond HPV vaccination, as such advanced communication systems possess the adaptability to address other complex public health challenges. By delivering personalized guidance directly aligned with users’ expressed concerns, AI can foster a more inclusive health dialogue that values empathy and relevance, which traditional mass communication methods often lack.

Beyond increasing HPV vaccination rates, the research team envisions broader implications for public health infrastructure. In an era where misinformation can spread rapidly and fear often undermines scientific recommendations, AI-powered tools offer a scalable, responsive mechanism to disseminate trustworthy information quickly. During future pandemics or emergent health crises, such chatbots could serve as critical channels for delivering customized, real-time guidance to diverse populations, helping to flatten the curve of misinformation while respecting individual differences.

The integration of AI chatbots into health communication represents a fusion of technological innovation with behavioral science, opening new horizons for personalized medicine and health education. By engaging users empathetically and responsively, these systems can build trust and facilitate informed decision-making, critical components of successful public health interventions. Professor Nan highlights the profound potential of this marriage between AI and public health communication by posing the fundamental question: “Can we do a better job with public health communication—with speed, scale, and empathy?” Project outcomes thus far suggest an affirmative answer.

As the chatbot advances through its pilot phases and into clinical trials, the research team remains committed to maintaining a rigorous scientific approach, ensuring that the tool’s recommendations align with the highest standards of evidence-based medicine. This careful balance between innovation and reliability is essential to maximize public trust and the chatbot’s ultimate impact on vaccine uptake. Should these trials demonstrate efficacy, the model could serve as a blueprint for deploying AI-driven communication tools across various domains of health behavior change.

Moreover, the collaborative nature of this project—bringing together communication experts, behavioral scientists, linguists, and medical professionals—illustrates the importance of interdisciplinary efforts in addressing complex health challenges. Each field contributes unique insights: linguistic analysis enables nuanced conversation design, behavioral science guides motivation and persuasion strategies, and medical expertise ensures factual accuracy and clinical relevance. This holistic framework strengthens the chatbot’s ability to resonate with diverse parent populations and to overcome entrenched hesitancy.

In conclusion, while HPV vaccines represent a major advancement in cancer prevention, their potential remains underutilized due to deeply embedded hesitancy fueled by stigma and misinformation. Leveraging AI-driven, personalized communication stands as a promising strategy to bridge this gap. The University of Maryland’s innovative chatbot project underscores the use of responsible artificial intelligence to meet parents where they are, addressing their unique concerns with empathy and scientific rigor. This initiative not only aspires to improve HPV vaccine uptake but also to pave the way for AI’s transformative role in future public health communication efforts.

Subject of Research: Artificial intelligence-enhanced communication to improve HPV vaccine uptake among parents.

Article Title: Transforming Vaccine Communication: AI Chatbots Target HPV Vaccine Hesitancy in Parents

Web References:

https://sph.umd.edu/people/cheryl-knott

https://sph.umd.edu/people/min-qi-wang

Image Credits: University of Maryland (UMD)

Keywords: Vaccine research, Science communication

Tags: adolescent vaccination rates, AI-driven health communication, cancer prevention strategies, chatbot technology in healthcare, evidence-based vaccine education, HPV vaccination awareness, innovative communication strategies for parents, National Cancer Institute funding, overcoming vaccine hesitancy, parental engagement in vaccination, personalized health information, University of Maryland research
