The Dawn of Human–AI Synergy

In every era of human civilization, science and technology have acted as the fuel of progress. From the invention of the wheel to the discovery of electricity, and from the first printing press to the age of the internet, technology has always pushed society forward. Yet, in the 21st century, we find ourselves at the edge of something even more profound—a future where human intelligence and artificial intelligence converge to reshape how we live, work, and even think.

This is not a story of distant centuries or futuristic fantasy. It is unfolding now, in real time, around us. Artificial Intelligence (AI), biotechnology, robotics, space exploration, and quantum computing are no longer dreams on paper; they are living realities with the potential to redefine what it means to be human.

AI: From Tools to Partners

Only a few decades ago, computers were seen as sophisticated calculators. Today, AI systems are generating music, diagnosing diseases, writing novels, and even driving cars. What makes this revolutionary is not just the speed of computation, but the ability of machines to *learn* and *adapt*.

Consider healthcare: in some studies, AI systems have matched or outperformed specialists at spotting certain cancers in medical images at an early stage. In agriculture, AI-guided drones survey fields, and their data is combined with soil and weather patterns to help farmers plant crops more efficiently. In creative industries, algorithms are designing clothes, generating artwork, and even composing film scores.

The line between human and machine is slowly fading. The most successful innovations do not replace humans; they are the ones where AI works *with* us, not against us. This partnership opens the door to a future where tasks once thought impossible become routine.

Biotechnology: Editing Life Itself

Perhaps the most striking frontier of science today is biotechnology. With CRISPR gene-editing technology, scientists are rewriting the code of life. Genetic disorders that once doomed generations—like sickle-cell anemia or Huntington’s disease—may one day vanish from humanity’s story.

But beyond curing illness, biotechnology raises deeper ethical and philosophical questions. If we can design stronger, smarter, or more resilient humans, should we? Where is the line between medicine and enhancement?

At the same time, biotechnology is revolutionizing food production. Lab-grown meat and genetically engineered crops promise to feed billions sustainably, without exhausting our planet’s resources. The same tools that can design cures for rare diseases might also prevent global hunger.

Space Exploration: Humanity Beyond Earth

For centuries, the night sky has been a canvas for human imagination. Today, it is becoming our next great frontier. Private companies like SpaceX and Blue Origin are competing with national space agencies to make space travel more affordable and routine. Mars is no longer just a dream in science fiction novels; it is a stated goal for crewed missions and, in the most ambitious plans, for settlement within the next few decades.

Space exploration is not merely about adventure. It is about survival. With climate change, overpopulation, and natural resource depletion threatening our planet, looking beyond Earth may one day be essential. Mining asteroids, building lunar bases, and developing interplanetary habitats could secure the future of our species.

And yet, the universe is not only a resource but a mystery. The search for extraterrestrial life, the study of black holes, and the pursuit of understanding dark matter remind us that science is not just about solving problems—it is about expanding our horizons.

Quantum Computing: The New Revolution

If AI is about intelligence and biotechnology about life, then quantum computing is about the very fabric of reality. Unlike traditional computers that process information in bits (each either 0 or 1), quantum computers use *qubits*, which can exist in a superposition of 0 and 1 and settle on a single value only when measured.

This gives quantum computers the potential to solve certain problems that would take classical supercomputers millions of years. From modeling new medicines to simulating climate systems and breaking widely used encryption schemes, quantum technology could transform many industries.
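The idea of a qubit holding 0 and 1 at once can be made concrete with a small sketch. The Python below is an ordinary classical simulation, not a quantum program and not any library's API; the function name `measure` and the amplitude parameters `amp0` and `amp1` are illustrative assumptions. It models one qubit as a pair of amplitudes and shows that a measurement returns 0 or 1 with probability equal to the squared magnitude of each amplitude.

```python
import math
import random


def measure(amp0: complex, amp1: complex, rng: random.Random) -> int:
    """Simulate one measurement of a single qubit (illustrative helper).

    amp0 and amp1 are the amplitudes of the 0 and 1 states. The chance
    of reading each value is the squared magnitude of its amplitude.
    """
    p0 = abs(amp0) ** 2
    p1 = abs(amp1) ** 2
    if not math.isclose(p0 + p1, 1.0, rel_tol=1e-9):
        raise ValueError("amplitudes must be normalized")
    return 0 if rng.random() < p0 else 1


# An equal superposition: both amplitudes are 1/sqrt(2),
# so each outcome has probability 1/2.
amp = 1 / math.sqrt(2)
rng = random.Random(0)  # fixed seed so repeated runs give the same counts

counts = [0, 0]
for _ in range(10_000):
    counts[measure(amp, amp, rng)] += 1

print("measured 0:", counts[0], "measured 1:", counts[1])
```

Each run of the loop prepares the same superposition and measures it, and the two counts come out roughly equal. A classical bit, by contrast, corresponds to amplitudes of exactly (1, 0) or (0, 1), which always give the same reading.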

Still in its infancy, quantum computing is like electricity in the 19th century—full of promise, waiting for its Edison or Tesla moment.

Challenges and Responsibilities

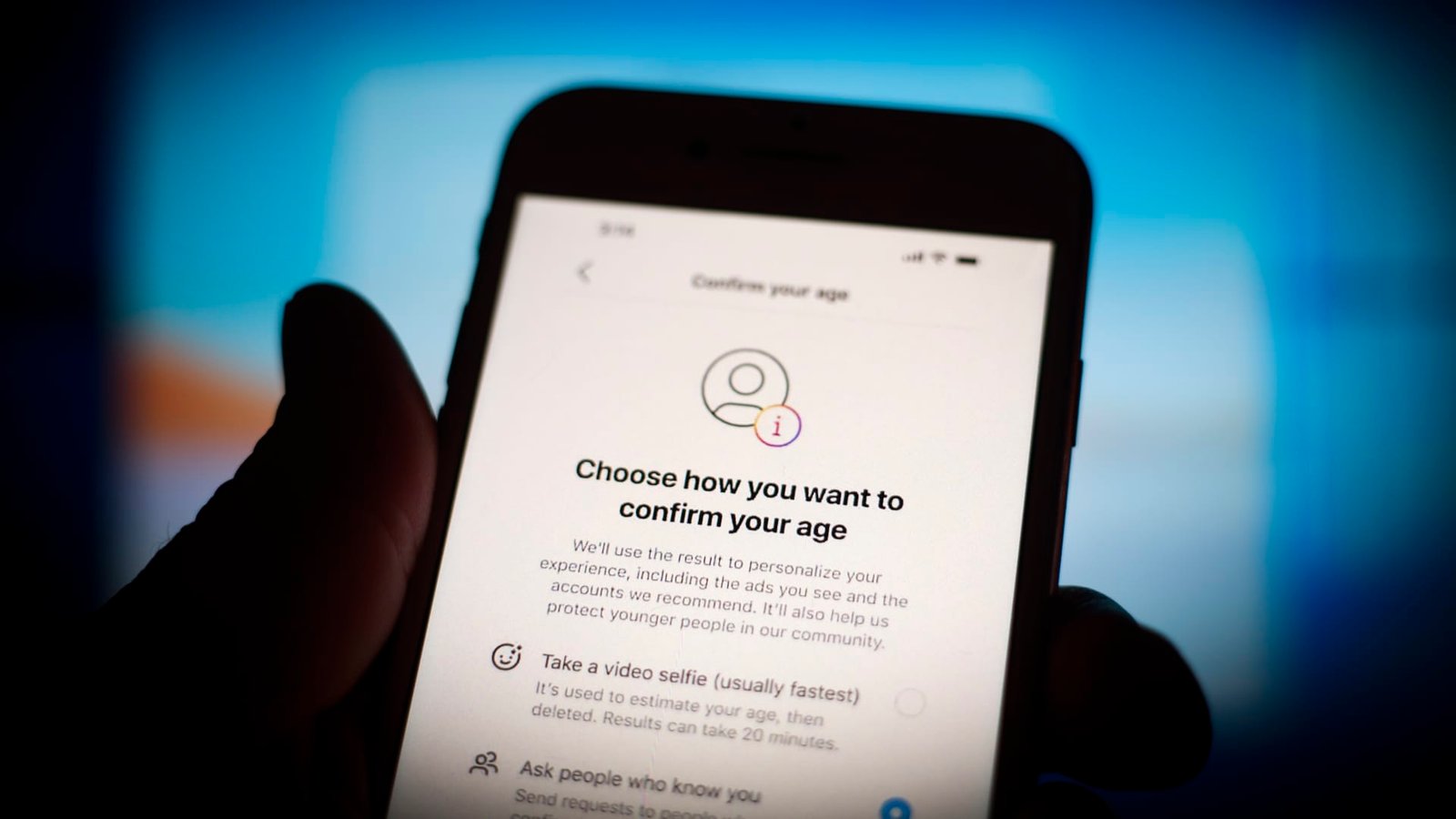

With every leap in technology comes responsibility. AI raises questions about privacy, job displacement, and bias. Biotechnology forces us to confront moral dilemmas about altering human life. Space exploration challenges us to unite globally for missions larger than any one nation. Quantum computing threatens the encryption that much of today's cybersecurity depends on.

The danger is not the technology itself, but how humanity chooses to use it. Fire can warm a home or burn it down. Nuclear fission can power cities or destroy them. Likewise, the tools of the future will test our wisdom as much as our creativity.

Conclusion: A Shared Future

Science and technology are no longer separate subjects confined to laboratories. They are becoming the foundation of everyday life and the blueprint of tomorrow. What we build today, from our machines and medicines to our code and our ethics, will echo for generations.

The future will not be defined by whether humans or machines are smarter, but by how we choose to collaborate. The dawn of human–AI synergy is here. It is not about replacing humanity but about enhancing it, pushing us toward possibilities our ancestors could only dream of.

In this new age, the most important invention will not be a machine, a rocket, or a genome. It will be wisdom—the wisdom to use our tools not just to survive, but to thrive, to explore, and to create a future worthy of the human spirit.