Large language models (LLMs) go through several stages of training on mixed datasets with different distributions, stages that include pretraining, instruction tuning, and reinforcement learning from...

As climate change intensifies, our ability to predict and respond to cascading and compounding disasters grows increasingly critical. Floods, droughts, wildfires, and extreme storms are no...

At Amazon, responsible AI development includes partnering with leading universities to foster breakthrough research. Recognizing that many academic institutions lack the resources for large-scale studies, we’re...

Quantum computing (QC) is a new computational paradigm that promises significant speedup over classical computing on some problems. Quantum computations are often represented as complex circuits...

Today we are happy to announce Ocelot, our first-generation quantum chip. Ocelot represents Amazon Web Services’ pioneering effort to develop, from the ground up, a hardware...

Code generation — automatically translating natural-language specifications into computer code — is one of the most promising applications of large language models (LLMs). But the more...

Relational databases (RDBs) store vast amounts of structured data across multiple interconnected tables. This rich relational information has immense potential for predictive machine learning. However, the...

One of the most important features of today’s generative models is their ability to take unstructured, partially unstructured, or poorly structured inputs and convert them into...

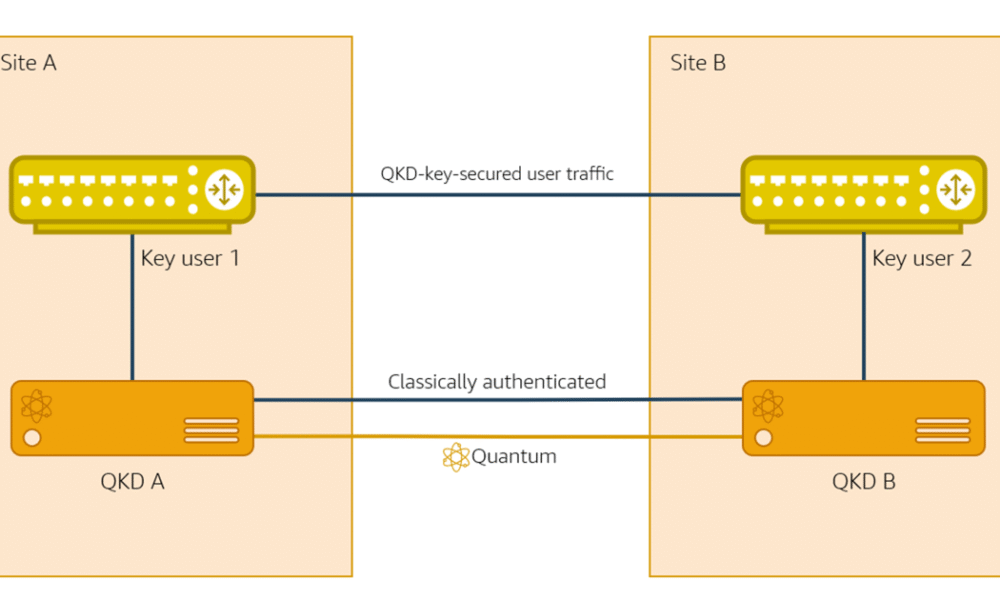

Quantum key distribution (QKD) is a technology that leverages the laws of quantum physics to securely share secret information between distant communicating parties. With QKD, quantum-mechanical...

Database research and development is heavily influenced by benchmarks, such as the industry-standard TPC-H and TPC-DS for analytical systems. However, these 20-year-old benchmarks capture neither how...