From virtual counselors that can hear depression creeping into a person’s voice to smart watches that can detect stress, artificial intelligence is poised to revolutionize mental health care for patients with breast cancer.

In a new paper, UVA Cancer Center’s J. Kim Penberthy, PhD, and colleagues detail the many ways artificial intelligence could help ensure patients receive the support they need. AI, they say, can identify patients at risk for mental-health struggles, get them treatment earlier, provide continuous psychological monitoring and even perform personalized interventions tailored to the individual.

But that’s just the tip of the iceberg. AI, the researchers say, can overcome some of the biggest barriers patients face to getting mental-health support by expanding options beyond clinic walls and delivering care exactly where and when it’s needed – even to rural areas where patients lack local mental-health treatment options.

Soon, doctors may combine multiple AI technologies to provide patients a “holistic, interactive treatment experience” that ensures the mental-health support is every bit as good as the care for the cancer itself, the UVA researchers write.

“AI can help us notice when a patient is struggling and get them the right support faster,” said Penberthy, a clinical psychologist at UVA Health and UVA Cancer Center. “This technology is moving quickly, and it’s exciting to see how soon it could make a real difference in people’s lives.”

Breast cancer and mental health

Breast cancer is the most common cancer in women, with 2.3 million new diagnoses each year. Up to half of those patients will go on to experience anxiety, depression or post-traumatic stress disorder (PTSD). While there have been great advances in how we treat breast cancer, mental-health support for these patients has lagged behind, the UVA researchers note.

“Mental health care is a lifeline for women with breast cancer,” Penberthy said. “Up to half experience anxiety or depression, and without support, treatment and quality of life can suffer. AI can help spot distress early and connect women to the care they need.”

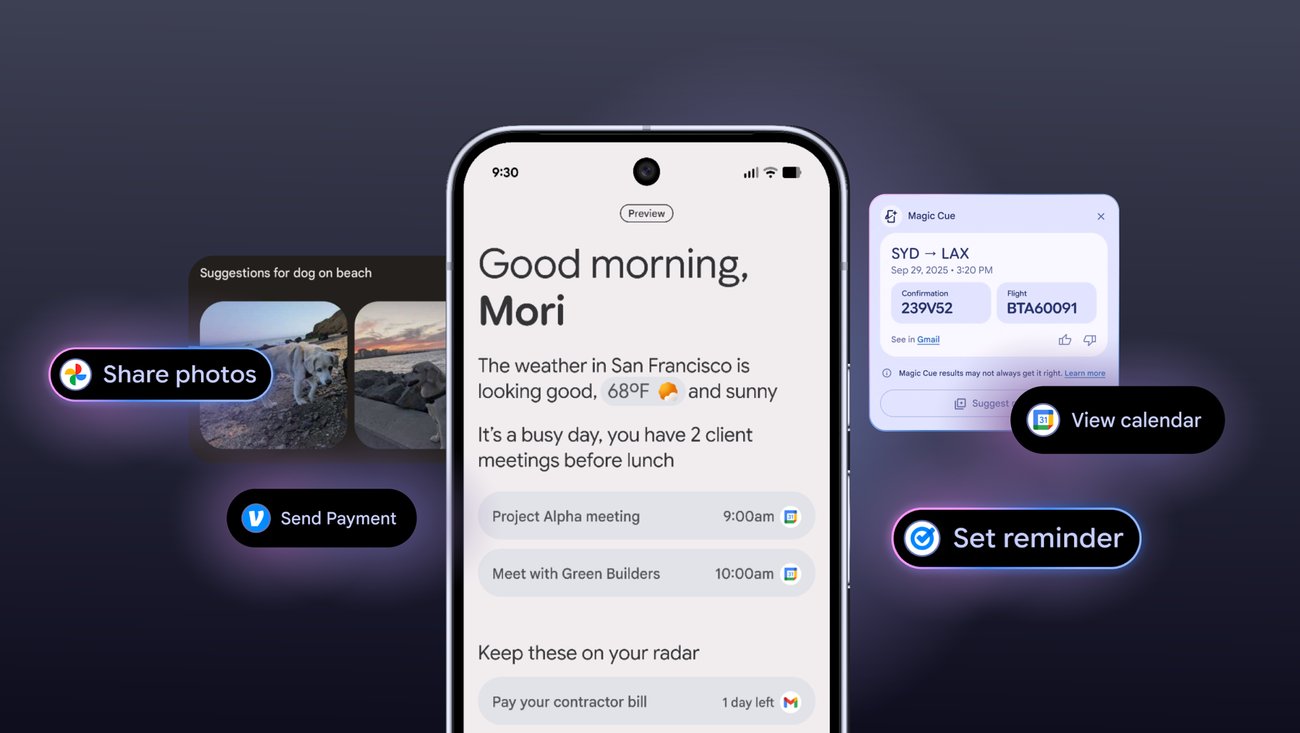

The co-authors envision a future – a near future – where AI plays a vast and crucial role in supporting patients’ mental health. The technology, they say, should not replace clinicians and care providers but should instead extend their reach and presence. By monitoring patients in real time, for example, AI could alert doctors that a patient may be struggling or slipping into depression.

Similarly, AI-powered chatbots and telepsychiatry platforms offer “scalable, cost-effective solutions” to increase access to psychological care, the researchers write. These advanced AI chatbots go far beyond the simple conversations often associated with their ilk. Instead of just responding to straightforward questions, the electronic entities can provide on-demand emotional support, suggest coping mechanisms, detail relaxation techniques and offer continuous psychological support even when therapists are unavailable.

AI, the researchers write, has tremendous potential to improve “accessibility, personalization, efficiency and cost-effectiveness” of mental health care for patients with breast cancer. But they caution that the technology also brings challenges and ethical considerations. For example, AI can be a powerful tool to analyze mental health data, but this requires strict safeguards to protect patient privacy. Similarly, studies have shown that AI can “underperform” for patients from minority or underrepresented backgrounds, potentially contributing to care disparities, the authors write.

Those are the types of things that doctors and researchers will have to keep in mind as they explore the potential of AI, Penberthy and her collaborators say. But they are excited for what the future holds, noting that AI has “immense” potential for improving mental health support for patients with breast cancer.

“We’re just beginning to scratch the surface of AI’s potential in health care and the positive impact AI will have in our lives. I’m incredibly optimistic about what the future will bring!”

David Penberthy, MD, MBA, co-author

UVA’s cutting-edge cancer research

Finding new ways to improve patient care is a core mission of both UVA Cancer Center and UVA’s Paul and Diane Manning Institute of Biotechnology. UVA Cancer Center is one of only 57 cancer centers in the country designated “comprehensive” by the National Cancer Institute in recognition of their exceptional patient care and cutting-edge cancer research.

The Manning Institute, meanwhile, has been launched to accelerate the development of new treatments and cures for the most challenging diseases. This will be complemented by a statewide clinical trials network that expands access to potential new treatments as they are developed and tested.

AI paper published

The Penberthys and their co-author – Jennifer Bires, MSW, LCSW, OSW-C – have published their paper in the scientific journal AI in Precision Oncology.

Journal reference:

Penberthy, J. K., et al. (2025) A Narrative Review of the Role of Artificial Intelligence in Supporting the Mental Health of Patients with Breast Cancer. AI in Precision Oncology. https://doi.org/10.1177/2993091X251361147.