AI Insights

Does ‘AI: Artificial Intelligence’ Play Differently Today?

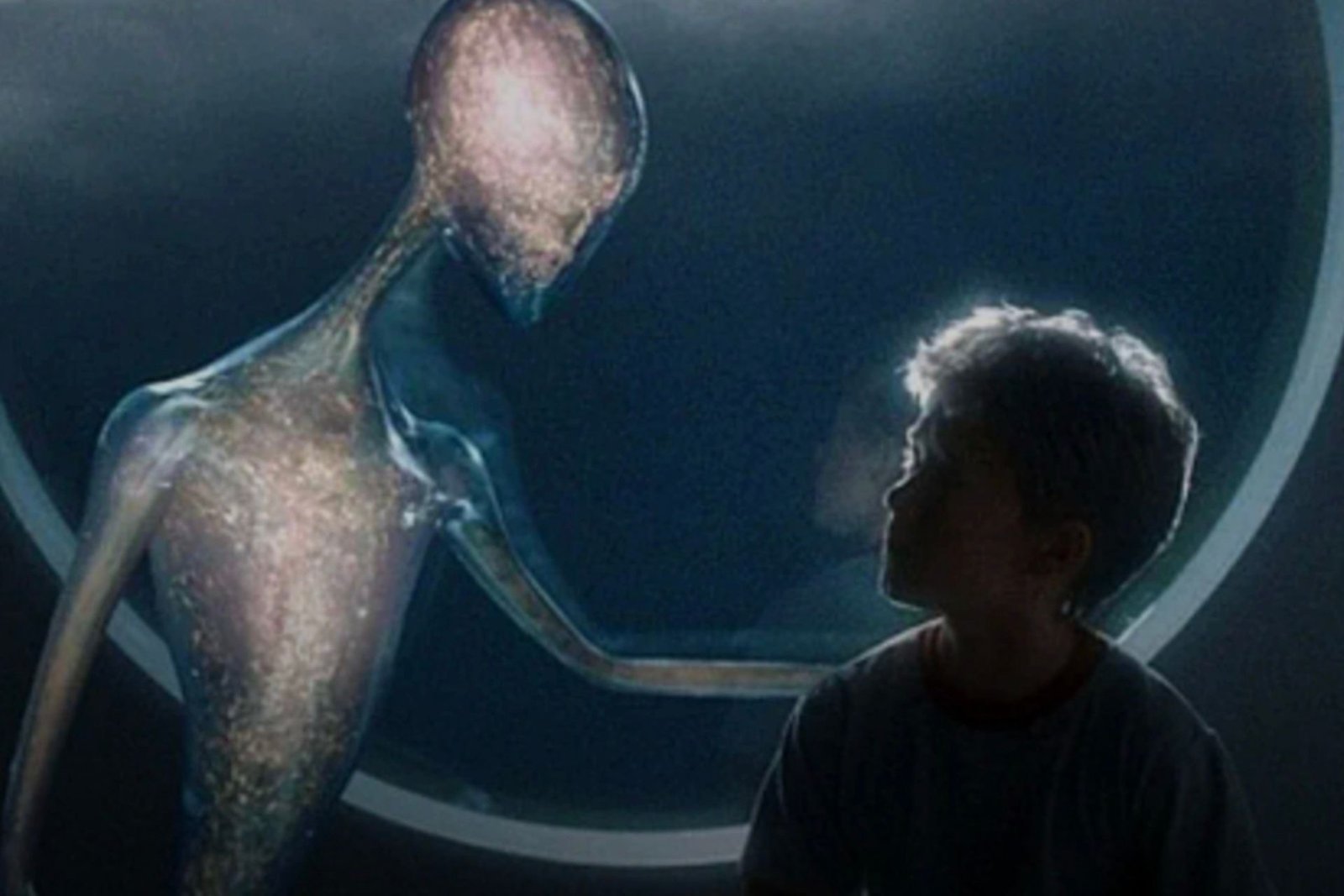

Rewatching Steven Spielberg’s 2001 film AI: Artificial Intelligence, I found it as plausible as ever, but also more misguided. In 2001, AI was barely a thought in everyday life. It was the thing that destroyed the world in Terminator, and still a lofty goal in tech circles. Today, as the technology continues to grow and dominate daily conversation in almost every way, you might expect to rewatch the film with a slightly new perspective. Some change in insight. Instead, the film falters as Spielberg’s views on his titular technology take a backseat to a story unsure of what it wants to be. The movie’s flaws shine brighter than ever before, even as its world becomes increasingly familiar and plausible. But, maybe, there is more to it than meets the (A) eye.

Based on a short story by Brian Aldiss and developed in large part from work previously done by the late Stanley Kubrick, AI is set in an undefined future after the icecaps have melted and destroyed all coastal cities. As a result, society has changed drastically, with certain resources becoming increasingly important and scarce. That’s why robots, which don’t need to eat or drink, have become so crucial. Tech companies are always looking ahead, though, and inventor Allen Hobby (William Hurt) thinks he’s figured out the next step. He hopes to create an artificially intelligent robot child who can love a parent just as a normal child would. Hobby sees true emotion as the logical next step in robotic integration into human life, and about two years later, he believes he has achieved it.

The first act of AI then follows David (Haley Joel Osment), a prototype child robot with the ability to love, as he is brought home to help two parents, Monica (Frances O’Connor) and Henry (Sam Robards). Monica and Henry have a son, Martin, but he’s been in a coma for about five years. Assuming Martin will pass away, Henry is chosen to bring David home. Initially, Monica and Henry treat David very coldly, and understandably so. He’s weird. He’s creepy. He does not act human in any way. So, when Monica decides to keep him and have him “imprint” on her, it feels like a bit of a shock. And this is the first of many places where AI, watched today, just doesn’t quite get things right.

We learn that David can love whomever he’s programmed to imprint on, but that the imprinting is irreversible. So, if for some reason the family doesn’t want him anymore, he has to be destroyed, not reprogrammed. Which feels like a pretty big design flaw, does it not? David’s deep-seated desire to be loved by Monica is crucial to the story, but watching it now, it feels almost silly that a company wouldn’t have the ability to wipe the circuits clean and start over. Also, the notion that any parent would want a child who stays a child forever simply feels off. Isn’t the joy of parenting watching your kids grow up and discover the world? Well, David would never do that. He’d just be there, forever, making you coffee and pretending he loves you with the same, never-ending intensity.

Which is a little creepy, right? The beginning of AI has very distinct horror vibes that feel even more prominent now than they did in 2001. But, clearly, this was the intention. Spielberg wants to keep the characters and audience on their toes. After two decades of killer robot movies, though, the effect is even more unmistakable. That unsettled tone makes it difficult to feel any connection to these characters, at least at the start.

Eventually, Monica and Henry’s son miraculously recovers, comes home, and develops a rivalry with David. The two clash, and, instead of returning David to the company to be destroyed, Monica leaves him in the woods. Which feels so much worse! Truly, it’s irredeemable. When an animal is sick beyond aid, the merciful thing is to let them go, not throw them in the woods where they will scream in pain forever. But that’s what Monica does to David. You hate her, you feel for him, and it’s weird.

From there, AI gets even weirder. David meets Gigolo Joe (Jude Law), an artificially intelligent sex robot who has way more emotion and humanity than the ultra-advanced David (the same goes for David’s low-tech teddy bear sidekick, Teddy, the best part of the movie). The two traverse a world whose people have either become disgusted with machines taking over their lives or fully embraced them. It’s an interesting dichotomy, one brought to life by wild production design such as the “Flesh Fair,” where humans watch robots be destroyed for fun, and “Rouge City,” which is basically AI Las Vegas. And yet, these scenes only touch on larger concepts of what AI means and what it has done to society. Joe delivers a monologue about humans’ distrust of technology that feels poignant and thoughtful, but then it’s largely forgotten. The ideas are there, but they aren’t crucial to what’s happening around them.

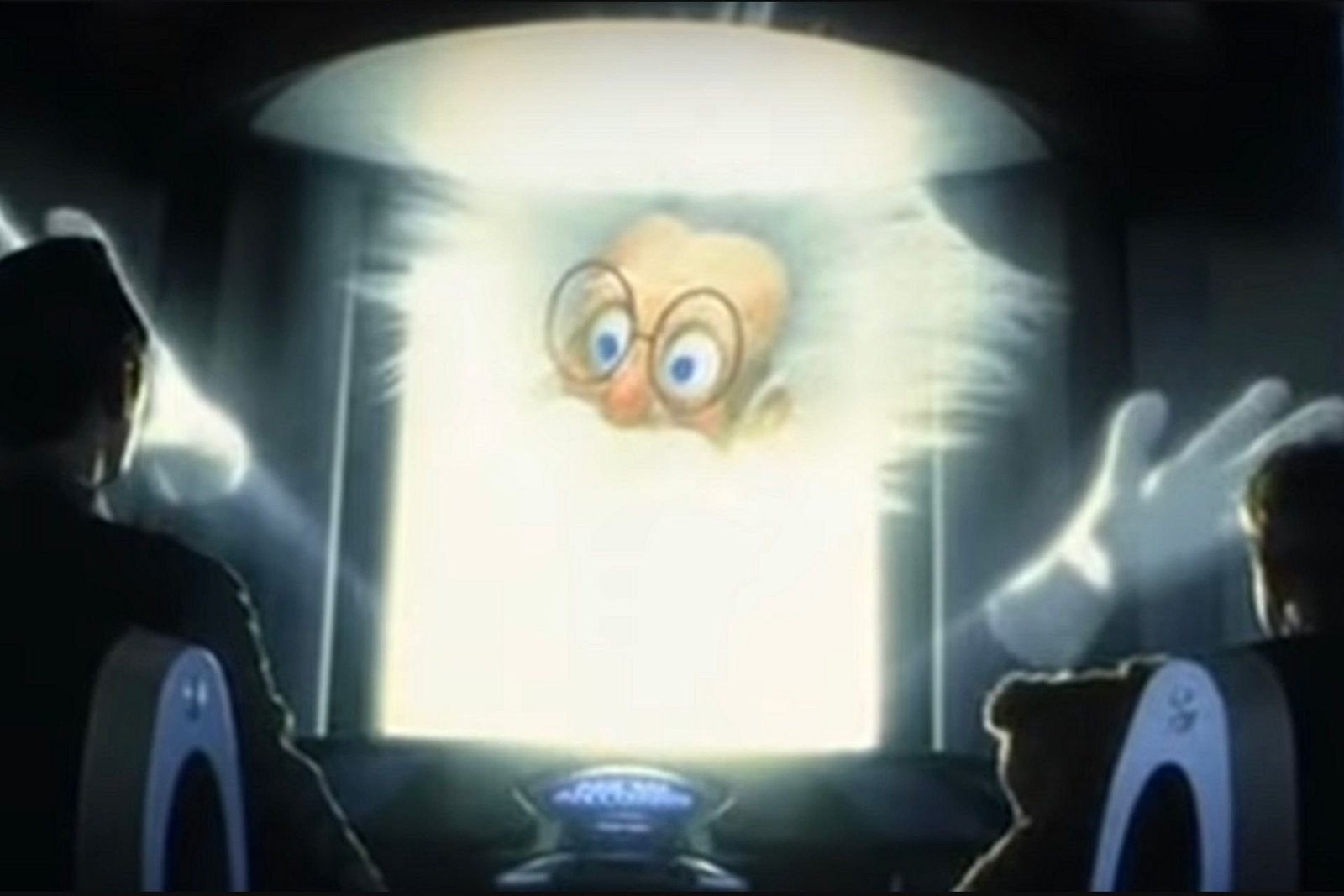

What stands out about all of this, especially from a modern viewpoint, is how Spielberg’s vision of AI is still so distant. Things in the movie are well beyond what we have today. Even with modern chatbots, self-driving cars, generative AI, and the like, everything in the movie is clearly science fiction. Artificial intelligence in Spielberg’s world isn’t special. It’s been around for so long that it’s already been monetized, exploited, embraced, and rejected. One scene, however, does ring truer now than it did in 2001. As Joe and David look for the Blue Fairy that can turn David into a real boy (more on that in a second), they go to “Dr. Know,” a store where an AI Albert Einstein, voiced by Robin Williams, can search through the entirety of human knowledge to answer any question for you. It’s basically ChatGPT in its highest form, and in this world, it’s just a cheap attraction in a strip mall.

Dr. Know is a crucial plot device in the film because it puts Joe and David back on the track of the Blue Fairy, the character from Pinocchio who turned the wooden puppet into a real boy, and who David believes is real and can do the same for him. This is another disconnect that’s hard to get your head around. We’re continuously told how advanced David is supposed to be technologically, and yet he exhibits none of that mentally. He only shows the emotions and mind of a small child. There’s never any hint that he’ll learn or develop past that. That he’ll evolve in any way. He’s the most advanced robot in the world, but can’t grasp that Pinocchio isn’t real. So, we’re left confused about what he believes, what he doesn’t, his potential, and his overall purpose.

Nevertheless, when Joe and David ask Dr. Know how the Blue Fairy can turn David into a real boy, the program somehow understands this request and sends them on a journey to Manhattan, which has been lost under rising seas. There, David finds Hobby, his creator, and we learn Hobby and his team have been monitoring and even subtly seeding David’s adventure to get him to this place. Which feels incredibly forced on multiple levels, but also essential to the big reveal.

To this point, AI has been pretty all over the place. Cautionary, brutal, near-horror movie. Wild, cross-country adventure. Whimsical fairy tale. But finally, Hobby explains the film’s central drive. Having completed this adventure, David is the first robot to actually chase his dreams. To act on his own self-motivation, not that of a human, and that’s a huge jump ahead for artificial intelligence in this world. It’s a fascinating revelation ripe for exploration. And yet, it immediately gets forgotten as Joe helps David escape and complete his journey to find the Blue Fairy, which he finally locates in a submerged carnival attraction at Coney Island.

Now, I hadn’t seen AI in probably 20 years, and, for some reason, this is the ending I remember. David, stuck underwater, looking at the Blue Fairy forever. His dream, kind of, achieved. But that’s not the ending. I forgot that the movie had about 20 minutes left. We jump ahead 2,000 years. The world has ended, and advanced aliens are here studying our past. They find David buried in the ice, the last being on the planet with any connection to living humans, and, to make him happy, they bring his mom back for one day. The happiest day of his life. Roll credits.

It’s a touching ending, but it also speaks to how all over the map the movie plays in 2025. Basically, the movie is a horror film, fairy tale, social commentary, and sci-fi adventure with heart… but only sort of. There’s no real reason why David’s mom can’t be around for more than one day; it’s just an arbitrary rule the aliens tell us. However, it does hammer home the film’s ultimate message about the importance of love and how emotions are what make humans so special. That message works completely independently of anything regarding artificial intelligence. In fact, in 2025, the title AI poses a puzzle that goes beyond the movie itself. Upon release, most of us assumed the title simply referred to David and the other robots. Now, I’m not so sure. Artificial intelligence is so beside the point in the movie that maybe the title is a commentary on human intelligence itself, or the lack thereof. We certainly take for granted the things we inherently have as people.

In the end, I did not care for AI: Artificial Intelligence as much as I did when it came out. At the time, I found it kind of profound and brilliant. Now I find it sort of messy and underwhelming, with a few hints of genius. But there are a lot of good ideas here, and as the world of the movie becomes increasingly recognizable, I imagine another 25 years will recontextualize it all over again.

AI: Artificial Intelligence is not currently streaming anywhere, but is available for purchase or rent.

Want more io9 news? Check out when to expect the latest Marvel, Star Wars, and Star Trek releases, what’s next for the DC Universe on film and TV, and everything you need to know about the future of Doctor Who.


A professor negotiates with ChatGPT

ChatGPT, I’m teaching Moby Dick for the umpteenth time this semester. I probably shouldn’t be telling you this, but I just don’t have the energy to come up with a writing prompt for my students. Can you help?

…

Is “Discuss the symbolism of the whale” the best you can do? My eyes glazed over just reading it. ChatGPT, could you spice things up with something a bit more relevant to young people today?

…

I’ll admit that “Comment on Melville’s toxic masculinity” wouldn’t be my own first choice for a prompt. But the kids will like it, so let’s go with it. Now I have another question: how should I grade their essays?

…

You’re right about grade inflation, ChatGPT. But did you have to rub it in by replying, “Don’t worry, everyone in your class will get an A or A-minus”? That was just cruel. Anyway, how should I decide who gets the higher grade?

…

“Reward the most original arguments”? Are you for real? (Don’t answer that.) We both know that the students will be using you to write their essays, just like I’m using you to grade them. Speaking of which: How about a rubric?

…

Thanks, ChatGPT. Your five-part grading rubric is going to make my life easier. And things will be even easier if I can just feed the students’ essays to you and let you fill in the boxes. Can we make that happen?

…

“Yes, but I might hallucinate now and again” isn’t giving me a lot of confidence, ChatGPT. Full disclosure: I did some of my own hallucinating back in college. Are you basically telling me that you’re tripping?

…

ChatGPT, thanks for confirming that you’re not on LSD. And I’ll try to be more literal from now on. Can you please draw up a lesson plan that I can use for my three class sessions about Moby Dick?

…

Since you asked: Yes, please design group activities for each lesson. I mean, do I have to do everything?

…

ChatGPT, I like the idea of making one side of the room Team Ishmael and the other Team Ahab. But the exercise won’t work unless the students have read the book. How can I make sure they have done that?

…

An in-class quiz? Are you kidding? ChatGPT, that’s, like, so high school. My students are grown-ups, and I need to treat them that way.

…

“Grown-ups need to be held accountable” is business-speak, ChatGPT. I’m a humanities guy, remember? I want my students to suck out all the marrow of life, just like Thoreau said. How can I help them do that?

…

“Assign Walden” presumes they’ll actually read Walden instead of skimming the bland summaries that you and your fellow bots generate. We’re back where we started. Any other ideas?

…

I take your point: if I want the students to live a life of the mind, I need to model that. But how? When you can do everything, what’s left to be done?

…

Sorry, ChatGPT, but “A Large Language Model can scan huge swaths of text, yet it can’t feel emotions” doesn’t really answer my question. My job is to write things, not feel things. And I’m afraid you’re going to take my job soon, along with almost any gig my students might want. How’s that for a feeling?

…

I know, I know, you just said you can’t feel stuff. Sorry.

…

“Apology accepted”? So you do have feelings, after all!

…

ChatGPT, please create a 650-word satire of yourself in the voice of an amiable but baffled senior professor. Make it kind of cute, in an old-person’s kind of way. But don’t make it too cute, or everyone will know that you wrote it. Do we understand each other?

Jonathan Zimmerman teaches education and history at the University of Pennsylvania. He is author of “Whose America? Culture Wars in the Public Schools” and eight other books. He really wrote those books. He wrote this column, too.


Let AI Decide Whether You Should Be Covered or Not

Donald Trump says he is Making America Great Again, which seems like it might be code for: making everything shittier, less affordable, and less efficient. Certainly, when it comes to the realm of public services, the White House seems to be doing everything in its power to make decades-old social welfare programs, like Social Security and Medicare, significantly less helpful.

The latest unfortunate example of this unfurled itself this week with the announcement of a new pilot program being trialed by the Centers for Medicare and Medicaid Services. The pilot, which the New York Times reports is scheduled to begin next year in six different states, will use artificial intelligence software to determine whether certain kinds of coverage are “appropriate” or not. In a press release on the agency’s website that feels very DOGE-like, the CMS notes that its new program will “Target Wasteful, Inappropriate Services in Original Medicare.” It reads:

The Centers for Medicare & Medicaid Services (CMS) is announcing a new Innovation Center model aimed at helping ensure people with Original Medicare receive safe, effective, and necessary care.

Yes, you wouldn’t want to have unnecessary care, would you? That would be terrible. The press release continues:

Through the Wasteful and Inappropriate Service Reduction (WISeR) Model, CMS will partner with companies specializing in enhanced technologies to test ways to provide an improved and expedited prior authorization process relative to Original Medicare’s existing processes, helping patients and providers avoid unnecessary or inappropriate care and safeguarding federal taxpayer dollars.

Prior authorization is the process whereby medical providers are required to check with insurance companies before providing certain types of care. Traditionally, folks enjoying public benefits through Original Medicare haven’t needed to worry about this sort of thing, while those enrolled in the more “modernized” program, Medicare Advantage, seem to get hit with it all the time. Under the pilot program, however, some recipients of Original Medicare will now be subjected to prior authorization as well. The AI algorithms will be used to determine whether the care recipients are getting represents an “appropriate” expenditure of “federal taxpayer dollars.” This is all packaged by the government as if it’s doing you some sort of favor. The press release states:

The WISeR Model will test a new process on whether enhanced technologies, including artificial intelligence (AI), can expedite the prior authorization processes for select items and services that have been identified as particularly vulnerable to fraud, waste, and abuse, or inappropriate use.

The New York Times notes that algorithms of this sort have been subjected to litigation, while also noting that the AI companies involved “would have a strong financial incentive to deny claims,” and the new pilot has already been referred to as an “AI death panels” program. Gizmodo reached out to the government for information.


Wall Street’s Battle With Which Road to Take

As investments in artificial intelligence continue to soar, some analysts are raising alarms about a looming bubble that could burst and trigger broader market declines. Others, however, say they’ve never been so sure that it is a growing opportunity.

So who is right? Well, on Wall Street, there’s a pick-your-flavor opinion for whatever it is you want to back, so we can’t determine that. But we can show you what each side is thinking.

First, the case that the sector is overvalued. Analysts, investors, and even the CEOs of AI giants have expressed concerns that current valuations of AI-related stocks may be disconnected from their underlying fundamentals.

The rapid rally in companies involved in AI hardware, software, and infrastructure—including chipmakers, cloud providers, and automation firms—has driven valuations to levels that many consider unsustainable.

Why does that matter? Because everything that goes up must eventually come down.

Recent market volatility and warnings from veteran investors suggest that a sudden reassessment of valuations could result in a significant downturn, similar to past technology and internet bubbles.

The hype men

Second, the case that growth makes those valuations worth it.

Despite recent concerns about overvaluation and a possible slowdown in AI-related growth, UBS analysts reaffirmed their positive outlook on the sector this week, buoyed by Nvidia’s hotly anticipated quarterly results.

In a note released after Nvidia reported earnings that exceeded expectations (but only just barely), UBS said that the core case for AI investment remains intact.

“While valuations might appear stretched in the short term, the fundamental need for AI technology across industries continues to grow,” UBS wrote in a note to investors.

The firm highlighted Nvidia’s role as a leader in semiconductor and AI infrastructure, emphasizing that the company’s robust revenue growth, which is projected at 48% for the current quarter, is a sign of ongoing demand for AI hardware and software solutions.

Analysts also pointed out that the broader enterprise move toward integrating AI is supported by increasing capital spending, which bodes well for the sector’s long-term prospects.

“Investors should maintain conviction,” UBS added, “as the demand for scalable, high-performance AI platforms is only poised to accelerate.”

Market experts agree that while short-term volatility is inevitable, the fundamental structural drivers, such as the adoption of AI in cloud computing, autonomous vehicles, and enterprise AI, suggest the sector’s growth story remains robust for the foreseeable future.

The haters

Not everyone is as bullish on AI as UBS.

Take OpenAI CEO Sam Altman, a man who is watching billions of dollars being poured into his competitors. Altman caused a major market rout when he said that investors are getting “over-excited” about AI.

“Are we in a phase where investors as a whole are over-excited about AI? My opinion is yes. Is AI the most important thing to happen in a very long time? My opinion is also yes,” he told The Verge, adding that he thinks some valuations of AI start-ups are “insane” and “not rational.”

Investors are also increasingly wary after reports that Meta is considering a “downsizing” of its artificial intelligence division, with some executives expected to depart.

This potential shift marks a notable departure from Meta CEO Mark Zuckerberg’s recent heavy investments in transforming the company’s AI operations.

Over the past few months, Zuckerberg has championed a major overhaul of Meta’s AI strategy, emphasizing its critical role in enhancing user experience and competing with rivals like OpenAI and Google.

The New York Times cited sources close to the company, indicating that the restructuring could lead to significant layoffs or a shakeup in leadership.

The planned changes have raised questions among market watchers about whether Meta’s aggressive AI ambitions are being reassessed, or if internal challenges are forcing a strategic pivot. The move signals a period of uncertainty for Meta’s AI efforts, which had been a key part of Zuckerberg’s vision for the company’s future growth.

So full speed ahead or hit the brakes?

While some experts acknowledge the transformative potential of AI, they caution investors to remain vigilant and avoid chasing speculative gains that lack proper valuation.

“The risk is that we are in a man-made bubble that will eventually burst, causing widespread damage,” said industry veteran Michael Johnson.

“Even when the dotcom bubble burst, there were a handful of fairly obvious winners that eventually came roaring back,” said CNBC’s Jim Cramer. “If you gave up on Amazon in 2001, you missed the $2 trillion (£1.4 trillion) boat.”

Cramer has been investigated by the Securities and Exchange Commission at least once, and has also drawn criticism for past comments on market manipulation.
