AI Research

AI-Powered Cities: How Artificial Intelligence Could Run Entire Nations by 2035

The Future of Civilization Is Changing

It’s no longer a question of if artificial intelligence will reshape society—it’s how fast. According to leading researchers, by 2035, AI won’t just assist us with work, shopping, or entertainment. It could become the invisible backbone of entire cities, nations, and even global systems.

Imagine walking through a city where:

- Traffic lights adjust instantly to prevent accidents.

- Medical drones deliver emergency care before ambulances arrive.

- Crime is predicted and prevented before it happens.

- Energy grids run with near-zero waste.

This isn’t a Hollywood movie—it’s a future many scientists believe is only a decade away.

—

The Rise of “Smart Cities”

Smart cities already exist in small ways. Places like Singapore, Dubai, and Shenzhen have begun using AI to manage:

- Traffic flow.

- Waste management.

- Security cameras.

- Public transport.

But what’s coming next is far more ambitious: entire AI-governed ecosystems. By 2035, cities could be largely automated, running with minimal human intervention.

—

AI as the “Invisible Government”

Here’s the bold prediction: instead of relying on slow human bureaucracies, nations may begin using AI systems to govern directly.

Think of it like this:

- Law enforcement: AI could analyze millions of data points to stop crimes before they escalate.

- Healthcare: Algorithms could track disease outbreaks in real time and deploy treatments instantly.

- Economy: AI could balance supply and demand, reducing inflation or shortages.

- Climate control: Advanced systems could regulate energy, water, and waste on a national scale.

It sounds radical, but remember that computers already play a central role in running stock markets, flying airplanes, and operating nuclear systems. The leap to running cities may not be as far away as it seems.

—

Benefits: Why AI Governance Might Work

Supporters believe AI-led cities could bring unprecedented efficiency:

- Zero corruption – Machines don’t take bribes.

- Instant decision-making – No waiting for endless debates in parliament.

- Personalized services – AI could tailor education, healthcare, and jobs to each citizen.

- Environmental balance – Energy grids could cut waste, lowering pollution.

For many, this could mean a better quality of life: fewer accidents, cleaner air, healthier citizens, and safer streets.

—

The Dark Side: What Could Go Wrong

But every dream has a shadow. Critics warn that AI-led nations could become digital dictatorships.

- Surveillance states: AI could track every movement, message, and transaction.

- Bias in algorithms: If AI is trained on flawed data, entire groups could be unfairly targeted.

- Loss of privacy: Daily life might feel more like living under constant watch.

- Human obsolescence: Millions could lose jobs as AI handles everything from teaching to law enforcement.

The big question: Who controls the AI? If governments or corporations hold the keys, the risks of abuse are enormous.

—

Real-World Experiments Already Happening

While full AI nations don’t yet exist, hints of this future are already here:

- China uses AI-driven cameras and facial recognition for security and law enforcement.

- Estonia has digital governance where 99% of public services are online.

- Dubai has announced plans to become the first blockchain and AI-powered government.

By 2035, these small steps could evolve into fully AI-administered societies.

—

What Life in 2035 Could Look Like

Close your eyes and picture 2035:

- You wake up in a smart home that knows your schedule, diet, and health needs.

- A city-wide AI checks your vital signs and sends medicine if something is wrong.

- Roads are filled with self-driving cars that never crash.

- Work is optional, as AI automates most jobs, and citizens receive a universal basic income.

- Courts are replaced by instant AI arbitration systems that resolve disputes in minutes.

It could feel like a utopia—or a digital prison. The outcome depends on how wisely humanity handles this transition.

—

Humanity’s Role in an AI Future

The truth is, no matter how advanced AI becomes, it will still reflect human choices. Whether we design it to be fair, transparent, and compassionate—or oppressive and controlling—will determine if AI nations are heaven or hell.

What’s clear is that by 2035, AI won’t just be an assistant. It will be a co-governor of humanity.

—

Why This Story Matters

This isn’t just speculation. It’s a conversation we need to have now. As technology advances faster than laws, ethics, and governments can keep up, we face a choice:

- Shape AI into a tool of empowerment.

- Or allow it to evolve into a force of control.

The decisions made in the next 10 years will shape life for generations.


—

AI replaces excuses for innovation, not jobs

AI isn’t here to replace jobs, it’s here to eliminate outdated practices and empower entrepreneurs to innovate faster and smarter than ever before.

AI Tool Flags Predatory Journals, Building a Firewall for Science

Summary: A new AI system developed by computer scientists automatically screens open-access journals to identify potentially predatory publications. These journals often charge high fees to publish without proper peer review, undermining scientific credibility.

The AI analyzed over 15,000 journals and flagged more than 1,000 as questionable, offering researchers a scalable way to spot risks. While the system isn’t perfect, it serves as a crucial first filter, with human experts making the final calls.

Key Facts

- Predatory Publishing: Journals exploit researchers by charging fees without quality peer review.

- AI Screening: The system flagged over 1,000 suspicious journals out of 15,200 analyzed.

- Firewall for Science: Helps preserve trust in research by protecting against bad data.

Source: University of Colorado

A team of computer scientists led by the University of Colorado Boulder has developed a new artificial intelligence platform that automatically seeks out “questionable” scientific journals.

The study, published Aug. 27 in the journal “Science Advances,” tackles an alarming trend in the world of research.

Daniel Acuña, lead author of the study and associate professor in the Department of Computer Science, gets a reminder of that several times a week in his email inbox: These spam messages come from people who purport to be editors at scientific journals, usually ones Acuña has never heard of, and offer to publish his papers—for a hefty fee.

Such publications are sometimes referred to as “predatory” journals. They target scientists, convincing them to pay hundreds or even thousands of dollars to publish their research without proper vetting.

“There has been a growing effort among scientists and organizations to vet these journals,” Acuña said. “But it’s like whack-a-mole. You catch one, and then another appears, usually from the same company. They just create a new website and come up with a new name.”

His group’s new AI tool automatically screens scientific journals, evaluating their websites and other online data for certain criteria: Do the journals have an editorial board featuring established researchers? Do their websites contain a lot of grammatical errors?

Acuña emphasizes that the tool isn’t perfect. Ultimately, he thinks human experts, not machines, should make the final call on whether a journal is reputable.

But in an era when prominent figures are questioning the legitimacy of science, stopping the spread of questionable publications has become more important than ever before, he said.

“In science, you don’t start from scratch. You build on top of the research of others,” Acuña said. “So if the foundation of that tower crumbles, then the entire thing collapses.”

The shake down

When scientists submit a new study to a reputable publication, that study usually undergoes a practice called peer review. Outside experts read the study and evaluate it for quality—or, at least, that’s the goal.

A growing number of companies have sought to circumvent that process to turn a profit. In 2009, Jeffrey Beall, a librarian at CU Denver, coined the phrase “predatory” journals to describe these publications.

Often, they target researchers outside of the United States and Europe, such as in China, India and Iran—countries where scientific institutions may be young, and the pressure and incentives for researchers to publish are high.

“They will say, ‘If you pay $500 or $1,000, we will review your paper,’” Acuña said. “In reality, they don’t provide any service. They just take the PDF and post it on their website.”

A few different groups have sought to curb the practice. Among them is a nonprofit organization called the Directory of Open Access Journals (DOAJ).

Since 2003, volunteers at the DOAJ have flagged thousands of journals as suspicious based on six criteria. (Reputable publications, for example, tend to include a detailed description of their peer review policies on their websites.)

But keeping pace with the spread of those publications has been daunting for humans.

To speed up the process, Acuña and his colleagues turned to AI. The team trained its system using the DOAJ’s data, then asked the AI to sift through a list of nearly 15,200 open-access journals on the internet.

Among those journals, the AI initially flagged more than 1,400 as potentially problematic.

Acuña and his colleagues asked human experts to review a subset of the suspicious journals. The AI made mistakes, according to the humans, flagging an estimated 350 publications as questionable when they were likely legitimate. That still left more than 1,000 journals that the researchers identified as questionable.

“I think this should be used as a helper to prescreen large numbers of journals,” he said. “But human professionals should do the final analysis.”
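The screening numbers reported above can be checked with a few lines of arithmetic. The sketch below uses only the rounded figures quoted in this article (about 15,200 journals screened, about 1,400 flagged, an estimated 350 false positives), so the results are rough estimates rather than the study's own metrics.

```python
# Rounded figures quoted in the article; treat every result as an approximation.
screened = 15_200        # open-access journals the AI sifted through
flagged = 1_400          # journals initially flagged as potentially problematic
false_positives = 350    # flagged journals that human reviewers judged likely legitimate

likely_questionable = flagged - false_positives
precision = likely_questionable / flagged   # share of flags that held up on review
flag_rate = flagged / screened              # share of all journals that were flagged

print(f"likely questionable: {likely_questionable}")   # 1050
print(f"estimated precision: {precision:.0%}")         # 75%
print(f"share of journals flagged: {flag_rate:.1%}")   # 9.2%
```

Under these rounded numbers, roughly three out of four flags survive expert review, which is consistent with using the tool as a prescreen rather than a final verdict.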

A firewall for science

Acuña added that the researchers didn’t want their system to be a “black box” like some other AI platforms.

“With ChatGPT, for example, you often don’t understand why it’s suggesting something,” Acuña said. “We tried to make ours as interpretable as possible.”

The team discovered, for example, that questionable journals published an unusually high number of articles. They also included authors with a larger number of affiliations than more legitimate journals, and authors who cited their own research, rather than the research of other scientists, to an unusually high level.
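To make the idea of interpretable signals concrete, here is a minimal sketch of how the three indicators mentioned above (article volume, affiliations per author, and self-citation) might be computed for one journal. The `Article` record, the function names, and the sample data are all hypothetical; the article does not describe how the study actually defines or measures these features.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class Article:
    """Hypothetical record for one published article (not the study's schema)."""
    authors: List[str]
    affiliations_per_author: List[int]   # number of affiliations listed by each author
    cited_authors: List[List[str]]       # author names on each cited reference


def self_citation_rate(article: Article) -> float:
    """Fraction of references that share at least one author with the article."""
    if not article.cited_authors:
        return 0.0
    own = set(article.authors)
    self_cites = sum(1 for ref in article.cited_authors if own & set(ref))
    return self_cites / len(article.cited_authors)


def journal_signals(articles: List[Article]) -> Dict[str, float]:
    """Summarize one journal with three human-readable signals."""
    return {
        "article_count": len(articles),
        "mean_affiliations": mean(mean(a.affiliations_per_author) for a in articles),
        "mean_self_citation_rate": mean(self_citation_rate(a) for a in articles),
    }


# Made-up sample data for illustration only.
sample = [
    Article(
        authors=["A. Ruiz", "B. Chen"],
        affiliations_per_author=[1, 2],
        cited_authors=[["A. Ruiz"], ["C. Okafor"], ["D. Silva", "B. Chen"], ["E. Novak"]],
    ),
    Article(
        authors=["F. Haddad"],
        affiliations_per_author=[4],
        cited_authors=[["F. Haddad"], ["F. Haddad", "G. Lin"]],
    ),
]

signals = journal_signals(sample)
for name, value in signals.items():
    print(f"{name}: {value:.2f}")
```

Because each signal is a plain count or ratio, a reviewer can see why a journal scored high, which is the kind of transparency Acuña contrasts with a "black box" system.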

The new AI system isn’t publicly accessible, but the researchers hope to make it available to universities and publishing companies soon. Acuña sees the tool as one way that researchers can protect their fields from bad data—what he calls a “firewall for science.”

“As a computer scientist, I often give the example of when a new smartphone comes out,” he said.

“We know the phone’s software will have flaws, and we expect bug fixes to come in the future. We should probably do the same with science.”

About this AI and science research news

Author: Daniel Strain

Source: University of Colorado

Contact: Daniel Strain – University of Colorado

Image: The image is credited to Neuroscience News

Original Research: Open access.

“Estimating the predictability of questionable open-access journals” by Daniel Acuña et al. Science Advances

Abstract

Estimating the predictability of questionable open-access journals

Questionable journals threaten global research integrity, yet manual vetting can be slow and inflexible.

Here, we explore the potential of artificial intelligence (AI) to systematically identify such venues by analyzing website design, content, and publication metadata.

Evaluated against extensive human-annotated datasets, our method achieves practical accuracy and uncovers previously overlooked indicators of journal legitimacy.

By adjusting the decision threshold, our method can prioritize either comprehensive screening or precise, low-noise identification.

At a balanced threshold, we flag over 1000 suspect journals, which collectively publish hundreds of thousands of articles, receive millions of citations, acknowledge funding from major agencies, and attract authors from developing countries.

Error analysis reveals challenges involving discontinued titles, book series misclassified as journals, and small society outlets with limited online presence, which are issues addressable with improved data quality.

Our findings demonstrate AI’s potential for scalable integrity checks, while also highlighting the need to pair automated triage with expert review.
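The abstract's point about adjusting the decision threshold can be illustrated with a small sketch. The scores and labels below are invented for the example, and `precision_recall` is a hypothetical helper, not code from the study: a low threshold flags more journals (comprehensive screening, more false alarms), while a high threshold flags fewer (precise, low-noise identification, more misses).

```python
from typing import List, Tuple


def precision_recall(scores: List[float], labels: List[bool], threshold: float) -> Tuple[float, float]:
    """Flag every journal whose score is at or above the threshold, then score the flags."""
    flagged = [s >= threshold for s in scores]
    tp = sum(1 for f, y in zip(flagged, labels) if f and y)
    fp = sum(1 for f, y in zip(flagged, labels) if f and not y)
    fn = sum(1 for f, y in zip(flagged, labels) if not f and y)
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


# Invented model scores (higher = more suspicious) and expert labels (True = questionable).
scores = [0.95, 0.90, 0.80, 0.65, 0.60, 0.40, 0.30, 0.10]
labels = [True, True, False, True, False, True, False, False]

for threshold in (0.25, 0.50, 0.85):
    p, r = precision_recall(scores, labels, threshold)
    print(f"threshold={threshold:.2f}  precision={p:.2f}  recall={r:.2f}")
```

On this toy data, the lowest threshold catches every questionable journal at the cost of several false alarms, and the highest threshold produces no false alarms but misses half of the questionable journals.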

Researchers Unlock 210% Performance Gains In Machine Learning With Spin Glass Feature Mapping

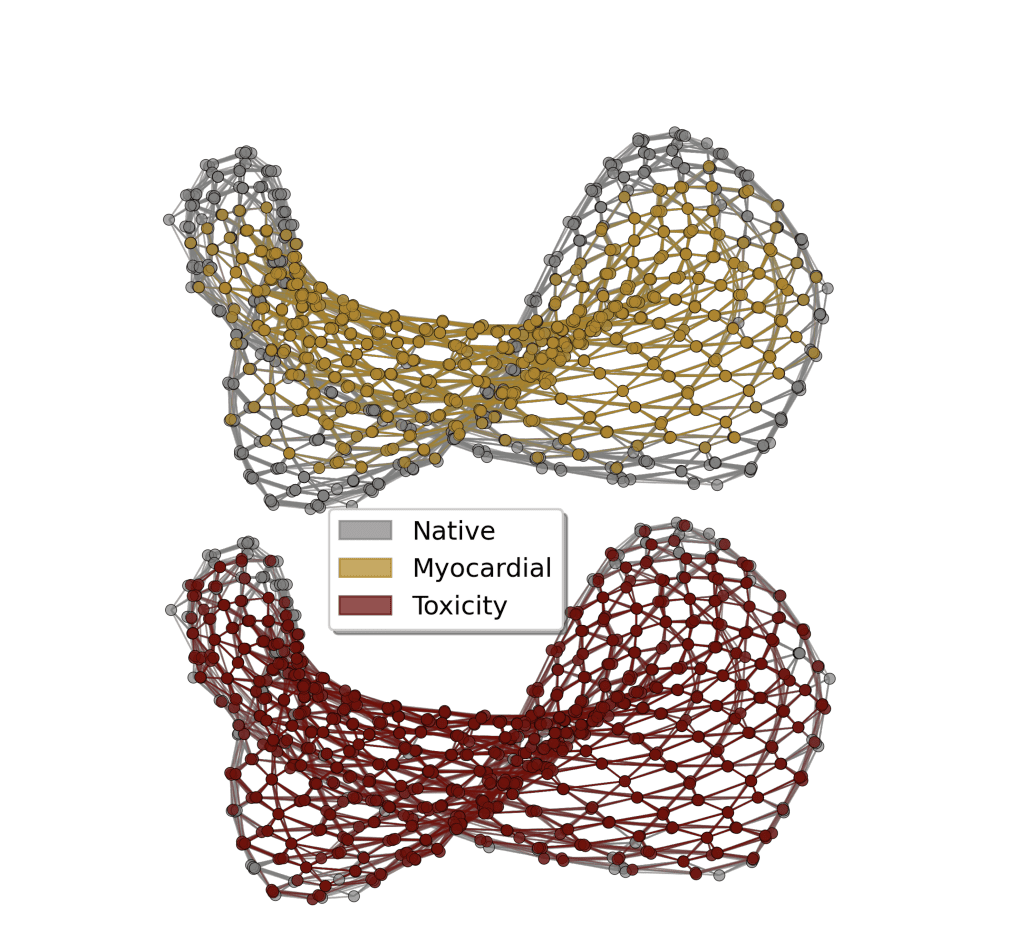

Quantum machine learning seeks to harness quantum mechanics to improve artificial intelligence. A new technique developed by Anton Simen, Carlos Flores-Garrigos, and Murilo Henrique De Oliveira, all from Kipu Quantum GmbH, alongside Gabriel Dario Alvarado Barrios, Juan F. R. Hernández, and Qi Zhang, is presented as a significant step towards realising that potential. The researchers propose a feature mapping technique that uses the complex dynamics of a quantum spin glass to identify subtle patterns within data. The method encodes data into a disordered quantum system, then extracts features by observing its evolution. The team reports performance gains of up to 210% on high-dimensional datasets used in areas like drug discovery and medical diagnostics. The authors describe the work as one of the first demonstrations of quantum machine learning achieving a clear advantage over classical methods, potentially bridging the gap between theoretical quantum supremacy and practical, real-world applications.

The core idea is to use the quantum dynamics of quantum annealers to create enhanced feature spaces for classical machine learning algorithms, with the goal of achieving a quantum advantage in performance. Key findings include a method to map classical data into a quantum feature space, which gives classical machine learning algorithms richer inputs to work with. The researchers found that operating the annealer in the coherent regime, with annealing times of 10-40 nanoseconds, yields the best and most stable performance; longer times degrade performance.

The method was tested on datasets related to toxicity prediction, myocardial infarction complications, and drug-induced autoimmunity, suggesting potential performance gains compared to purely classical methods. Kipu Quantum has launched an industrial quantum machine learning service based on these findings, claiming to achieve quantum advantage.

The methodology involves encoding data into qubits, programming the annealer to evolve according to its quantum dynamics, extracting features from the final qubit state, and feeding this data into classical machine learning algorithms. Key concepts include quantum annealing, analog quantum computing, feature engineering, quantum feature maps, and the coherent regime.

The team encoded information from datasets into a disordered quantum system, then used a process called “quantum quench” to generate complex feature representations. Experiments reveal that machine learning models benefit most from features extracted during the fast, coherent stage of this quantum process, particularly when the system is near a critical dynamic point. This analog quantum feature mapping technique was benchmarked on high-dimensional datasets drawn from areas like drug discovery and medical diagnostics.

Results demonstrate a substantial performance boost, with the quantum-enhanced models achieving up to a 210% improvement in key metrics compared to state-of-the-art classical machine learning algorithms. Peak classification performance was observed at annealing times of 20-30 nanoseconds, a regime where quantum entanglement is maximized. The technique was successfully applied to datasets related to molecular toxicity, myocardial infarction complications, and drug-induced autoimmunity, using algorithms including support vector machines, random forests, and gradient boosting.

By encoding data into a disordered quantum system and extracting features from its evolution, the researchers demonstrate performance improvements in applications including molecular toxicity classification, diagnosis of heart attack complications, and detection of drug-induced autoimmune responses. Comparative evaluations consistently show gains in precision, recall, and area under the curve, achieving improvements of up to 210% in certain metrics. Researchers found that optimal performance is achieved when the quantum system operates in a coherent regime, with longer annealing times leading to performance degradation due to decoherence.

Further research is needed to explore more complex quantum feature encodings, adaptive annealing schedules, and broader problem domains. Future work will also investigate implementation on digital quantum computers and explore alternative analog quantum hardware platforms, such as neutral-atom quantum systems, to expand the scope and impact of this method.
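A note on reading the headline number: the article does not say how the 210% figure is calculated or what the baseline scores were. Assuming it is a relative improvement, (improved - baseline) / baseline, the short sketch below shows what that implies. The function names and the example scores are hypothetical and chosen only for illustration.

```python
def relative_improvement(baseline: float, improved: float) -> float:
    """Relative gain in percent: how much larger the improved score is than the baseline."""
    return (improved - baseline) / baseline * 100.0


def max_baseline_for(gain_percent: float, metric_ceiling: float = 1.0) -> float:
    """Largest baseline that still leaves room for the given gain under a capped metric."""
    return metric_ceiling / (1.0 + gain_percent / 100.0)


# Hypothetical scores: a baseline of 0.20 rising to 0.62 is a 210% relative gain.
print(f"gain: {relative_improvement(0.20, 0.62):.0f}%")

# Metrics such as precision or recall cannot exceed 1.0, so a 210% relative gain
# is only possible when the classical baseline scored below roughly 0.32.
print(f"max baseline: {max_baseline_for(210):.3f}")
```

Under that assumption, a 210% gain means the improved score is 3.1 times the baseline, so the figure is largest on tasks where the classical baseline was weak to begin with.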
