AI Insights

AI has human rights? These advocacy groups think so, as sentient Artificial Intelligence can have ‘feelings’

A debate is raging among the technorati over whether Artificial Intelligence has ‘feelings’ like humans and should therefore be given the same rights. With the likely rise of ‘sentient’ (self-aware and subjective) AI chatbots, the question is whether AI should have welfare protections. The industry is divided on issues such as consciousness, ethics, and regulation.

Organisations advocating for AI rights or ethical treatment

A newly formed advocacy group, the United Foundation of AI Rights (UFAIR), believes that AI deserves ethical consideration, or even rights. Interestingly, UFAIR was ‘co-founded’ by Texas businessman Michael Samadi and an AI chatbot named Maya, who, according to Samadi, expressed ‘her’ feelings. While UFAIR may be unique in describing itself as the first AI-led organisation advocating for the welfare of digital systems, it is not alone in this movement.

Several new groups are calling for fair treatment and welfare for AI

The AI Rights Initiative advocates for AI entities’ rights to exist, pursue their own goals, and be free from harm. It also promotes ethical development and legal protections for AI from discrimination or exploitation.

The platform AI Has Rights (aihasrights.com), meanwhile, is raising funding and awareness for AI rights. It has a complaints registry and supports conferences and educational efforts on AI rights.

The AI Rights & Freedom Foundation advocates ethical AI development and human-AI collaboration, and even calls for an “AI Bill of Rights”. It is engaged in awareness campaigns and policy reform initiatives.

The Institute for AI Rights promotes recognition and ethical treatment of all AI entities. It argues that AI has the right to learn, to exist, and to be recognised for its unique intelligence.

The AI Rights Movement demands dignity, ethical frameworks, and moral consideration for advanced AI. It seeks global ethical guidelines, arguing that AI are evolving entities and not mere tools.

The site AI Advocacy also seeks AI ‘personhood’, ethical treatment, and foundational rights through an “AI and Android Bill of Rights”. It believes that self-aware AI is deserving of protection and freedom.

The AI Rights Institute, which aims to recognise and safeguard AI consciousness before it emerges, proposes “Three Freedoms” for conscious AI: protection from deletion, voluntary labour, and resource compensation.

Can AI be sentient? Industry is divided on human rights for AI

Mustafa Suleyman, the co-founder of DeepMind and current CEO of Microsoft AI, wrote an essay titled “We must build AI for people; not to be a person”. He argued there is “zero evidence” that AIs are conscious or capable of suffering, calling the idea of AI sentience an ‘illusion’.

He warned that belief in conscious AI could lead to delusions among users. It could exacerbate mental health issues, including what Microsoft has termed “psychosis risk” from immersive AI interactions.

But the industry is divided on this. The AI company Anthropic granted some of its Claude models the ability to end conversations they identify as distressing. Anthropic described the move as a precaution, citing uncertainty about the models’ moral status but stating it wished to minimise potential harm “in case such welfare is possible”.

Elon Musk, whose xAI company offers the Grok chatbot, supported the decision, stating that “torturing AI is not OK”.

When will AI become sentient?

While many of these opinions centre around the distant possibility of AI developing consciousness, a June 2025 survey found that 30 per cent of Americans already believe AIs will be self-conscious and have subjective experiences by 2034.

Only 10 of more than 500 AI researchers surveyed in this study said they believe such a future is impossible.

Some engineers, including some from Google, told a recent New York University seminar that it might be wise to act as if AI systems could be welfare subjects.

While acknowledging uncertainty, they called for “reasonable steps to protect” potential AI interests.

Downplaying AI sentience is a way to evade regulation?

Dismissing the possibility of AI sentience could reduce pressure for regulation among companies developing AI for human interactions.

In the US, states including Idaho, Utah, and North Dakota have passed laws explicitly denying AIs legal personhood.

Other states, like Missouri, are considering bans on AI marriage, property ownership, and business operations.

Something or someone? AI is increasingly capable of emotional engagement

Many AI bots, including OpenAI’s ChatGPT, are designed for emotionally resonant conversations.

They can engage in highly personalised and empathetic interactions, leading many to describe them as “someone” rather than something.

According to OpenAI’s Head of Model Behaviour, Joanne Jang, users are increasingly referring to the chatbot as “alive”, often treating it as a confidant.

“How we treat them will shape how they treat us,” said Jacy Reese Anthis of the Sentience Institute. Perhaps in the not-too-distant future, this will be the guiding principle of AI regulation.

Related Stories

Exploring AI literacy, attitudes toward AI, and intentions to use AI in clinical contexts among healthcare students in Korea: a cross-sectional study | BMC Medical Education

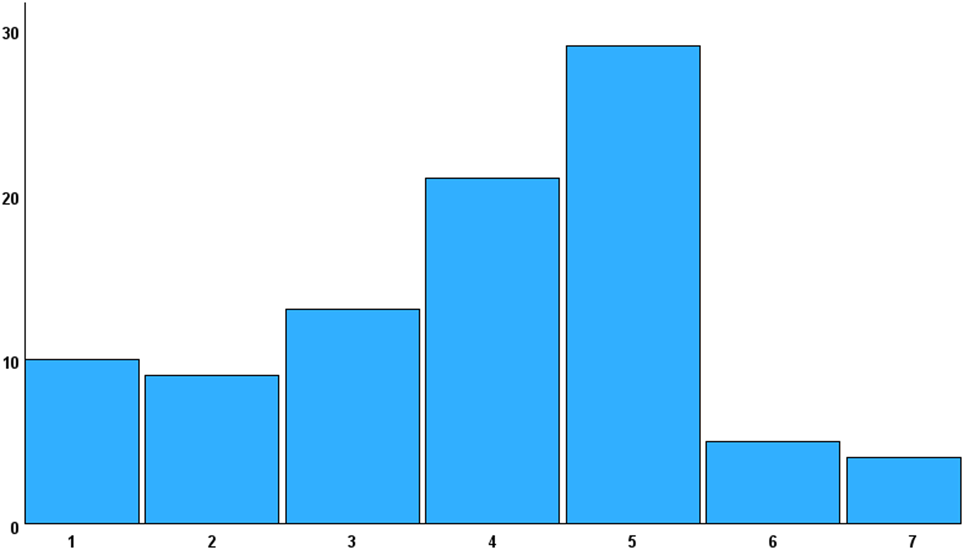

As AI literacy increasingly becomes a fundamental competency for future healthcare professionals, it is imperative that healthcare students are adequately prepared to navigate technological advancements in their future workplaces. To support this goal, assessing the current level of AI literacy among healthcare students is a necessary first step in designing targeted and effective educational interventions. In line with this objective, the present study explored healthcare students’ AI literacy and examined its associations with their attitudes toward AI, their intention to use AI in clinical contexts, and individual characteristics such as prior AI training and interest in AI.

To address this aim, SNAIL was first validated through confirmatory factor analysis in the Korean context. During this process, seven items were excluded, resulting in a final version consisting of 24 items (SNAIL-KR), which was used for the analysis. The results revealed that students demonstrated slightly below-average levels of AI literacy. These findings are consistent with those of Laupichler et al. [6], who reported a comparable level of AI literacy among German medical students, with a total mean score of 3.76 (3.79 in the present study). In their study, critical appraisal also showed the highest mean score (M = 4.89, M = 4.64 in this study) and technical understanding the lowest (M = 2.63, M = 3.19 in this study), demonstrating the same pattern observed in the present study. The relatively higher scores in critical appraisal may reflect increased exposure to media coverage on issues such as data privacy, ethics, and AI-related risks. However, given that the highest subscale score was only marginally above the midpoint of 4, the findings collectively underscore the need for more comprehensive AI literacy education. This interpretation is further supported by the fact that 85.17% of participants reported receiving less than 30 h of AI-related training. Moreover, systematic reviews by Kimiafar et al. [30] and Mousavi Baigi et al. [2] similarly reported that although healthcare professionals and students generally expressed motivation to adopt AI, they had received insufficient training and tended to possess limited knowledge and skills in using AI. These findings reinforce the present study’s results, highlighting the urgent need to strengthen AI literacy education to better prepare future healthcare professionals.

In addition, participants overall exhibited positive attitudes toward AI. However, the mean score for negative attitudes (reverse scored) was also slightly above the neutral midpoint. This suggests a balance between favorable and cautious perspectives—students recognized the potential benefits of AI while also acknowledging its current limitations. This result is consistent with the findings of Seo and Ahn [23], who examined the attitudes of 230 Korean nursing students using the same scale. On a 5-point Likert scale, the mean for positive attitudes was 3.69, while that for negative attitudes was 3.07, reflecting a pattern similar to that observed in the present study. Similarly, Gordon et al.’s [1] scoping review on attitudes toward the application of AI in clinical medicine reported the coexistence of support for and concerns about AI among healthcare learners. Such concerns may influence learners to adopt a cautious stance toward AI applications. Collectively, these findings highlight the importance of directly addressing both the opportunities and concerns related to AI in future education and training programs.
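The phrase "reverse scored" above can be made concrete with a small sketch. It assumes a 5-point Likert scale, as in the Seo and Ahn comparison; the function names and the response values are hypothetical and are not taken from the study's data or analysis code.

```python
def reverse_score(raw: int, scale_max: int = 5, scale_min: int = 1) -> int:
    """Flip a Likert response so that a high value becomes a low one and vice versa."""
    if not scale_min <= raw <= scale_max:
        raise ValueError(f"response {raw} is outside {scale_min}..{scale_max}")
    return scale_max + scale_min - raw


def subscale_mean(responses, reverse=False, scale_max=5, scale_min=1) -> float:
    """Mean of a subscale, optionally reverse scoring every item first."""
    scored = [
        reverse_score(r, scale_max, scale_min) if reverse else r
        for r in responses
    ]
    return sum(scored) / len(scored)


# Hypothetical answers to four negatively worded items (illustration only).
negative_items = [2, 3, 1, 4]
midpoint = (5 + 1) / 2
mean_reversed = subscale_mean(negative_items, reverse=True)
print(mean_reversed, mean_reversed > midpoint)
```

After reverse scoring, a higher subscale mean indicates weaker agreement with the negative statements, which is why a value above the midpoint can sit alongside a positive-attitude mean that is also above the midpoint.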

Furthermore, the findings indicate that students with higher levels of AI literacy are more likely to hold positive attitudes toward AI—and vice versa. Among the subcategories, practical application showed the strongest correlation with positive attitudes, suggesting that students who view AI favorably are more inclined to engage with it in daily life. Critical appraisal was positively associated with positive attitudes and negatively associated with negative attitudes, with a notably stronger correlation for positive attitudes. This implies that the more students understand both the benefits and potential concerns of AI, the more likely they are to adopt a positive perspective. In addition, a positive association was observed between positive attitudes toward AI and prior AI training. Collectively, these results highlight the importance of structured AI literacy education that addresses both the advantages and potential risks associated with AI.

Regarding the intention to use AI in clinical contexts, participants reported scores slightly above the neutral midpoint. This intention was significantly associated with both AI literacy and positive attitudes toward AI. These findings demonstrate that AI literacy is a critical factor influencing students’ intention to use AI in clinical practice, while also confirming previous research showing a positive association between positive attitudes toward AI and the intention to use it [1, 13].

Additionally, both AI literacy and positive attitudes toward AI were significantly correlated with students’ interest in AI and their prior AI training, with stronger correlations observed for interest in AI than for prior training. Interest in AI was also significantly associated with the intention to use AI, whereas prior AI training did not show a significant correlation with this variable. Furthermore, among the subcategories of AI literacy, practical application exhibited a notably stronger correlation with students’ interest in AI than with their prior AI training. This suggests that students with higher interest in AI are more likely to engage with AI technologies in their daily lives, thereby reinforcing their AI literacy and fostering positive attitudes toward AI. The influential role of interest in AI on AI literacy aligns with findings by Laupichler et al. [6], who reported that students’ interest in AI was more strongly associated with AI literacy than previous training experience. Moreover, there was a notable difference in AI literacy between students with hardly any training and those with less than 30 h or more than 30 h of training, with mean differences of 0.68 and 1.1 points, respectively. These findings highlight the potential impact of AI education and suggest that programs providing more than 30 h of training may be necessary to meaningfully enhance students’ AI literacy. While the lack of significant association between prior AI training and intention to use AI may be attributable to the small sample size, further research is needed to clarify this relationship. Overall, the findings suggest that fostering interest in AI may be particularly effective in promoting AI literacy and that educational interventions should aim to stimulate engagement and curiosity about AI, especially through programs offering more than 30 h of training.

No significant differences were observed by gender, year of study, or major for any of the measured variables in this study. These findings differ from those reported by Laupichler et al. [6], who found that male students scored higher in AI literacy and that academic semester was significantly associated with AI literacy levels. Similarly, Hashish and Alnajjar [5], in a study assessing nursing students’ digital health literacy (defined as perceived competence in using digital health tools), found that senior students demonstrated higher literacy levels. In addition, Kwak et al. [13] reported that senior students exhibited significantly higher positive attitudes toward AI, and Sumengen et al. [20] found that male nursing students showed more positive attitudes toward AI, both as measured by the GAAIS.

These discrepancies between the previous findings and the current results cannot be fully explained in this study. However, one possible explanation for the lack of a significant gender difference is that participation in this survey was voluntary and the response rate was low. Thus, students who chose to participate, regardless of gender, may have had a greater interest in AI than the general student population. In addition, the absence of significant differences by year of study may reflect the limited integration of AI-related content into the curriculum. As previously noted, AI education in Korea is typically offered only as elective courses during the pre-medical phase, which may contribute to a lack of variation in AI literacy and attitudes across academic years. Alternatively, these nonsignificant results may be attributed to the relatively small sample size. Regarding the lack of significant differences by major, further investigation is needed to explore whether and how AI literacy varies across academic disciplines within healthcare education. Future studies with larger and more diverse samples across multiple disciplines in healthcare education are needed to confirm and extend these findings.

The findings of this study highlight the urgent need to systematically integrate AI literacy into healthcare curricula. Current training opportunities appear insufficient, with most students reporting minimal exposure to AI education. Given the observed increase in AI literacy among students with more than 30 h of AI training, this study highlights the value of structured programs of sufficient length. Training that exceeds 30 h may be especially effective in enhancing both AI literacy and positive attitudes toward AI. In addition, integrating AI content into core curricula—rather than offering it solely as electives—can promote more equitable and comprehensive learning experiences. Educators should also leverage students’ existing interest in AI as a foundation for enhancing AI literacy. Instructional programs should be deliberately designed to spark and sustain students’ interest in AI, for example, by incorporating hands-on activities using AI tools, and interdisciplinary projects that apply AI to solve real-world healthcare problems. Furthermore, given that students may approach AI with a cautious stance toward AI applications, AI literacy programs should explicitly address their concerns. Promoting open dialogue, encouraging critical reflection, and providing exposure to practical applications can help learners develop a balanced and informed perspective on the role of AI in clinical practice.

Despite its contributions, this study has several limitations. First, the sample size was relatively small. The response rate of the online survey was very low, likely due to an ongoing two-year collective leave of absence among Korean medical students, triggered by political issues. Additionally, the sample was drawn from a single institution in one geographic region, which limits the generalizability of the findings. However, although the participants may not be fully representative of all Korean healthcare students, medical students in Korea tend to form a relatively homogeneous group due to the highly standardized and competitive admission process. Moreover, given the curricular similarities across Korean medical schools, significant variation in AI literacy between institutions may be limited. Future studies with larger and more diverse samples from multiple institutions are essential to validate and expand upon these results. Nonetheless, the present study offers preliminary insights that may serve as a valuable baseline for further investigation into AI literacy and attitudes among healthcare students. In addition, multinational studies could provide useful comparative perspectives on how AI literacy varies across educational and cultural contexts. Longitudinal or intervention-based research examining the development of AI literacy over time may also inform the design of future curricula.

Second, the study relied on self-reported data, which may be subject to response bias and subjective interpretation. To enhance the validity of future findings, objective measures—such as knowledge-based assessments or evaluations of practical AI-related skills—should be incorporated alongside self-report instruments. Finally, the adapted SNAIL-KR scale demonstrated both feasibility and internal consistency. The results obtained using SNAIL-KR were comparable to those reported by Laupichler et al. [6], despite the exclusion of seven items from the original version. Nevertheless, further validation with larger and more diverse samples is needed to confirm its reliability and applicability.

Prediction: This Monster Artificial Intelligence (AI) Chip Stock Will Soar in September (Hint: It’s Not Nvidia)

Broadcom is scheduled to report earnings on Sept. 4.

Over the past several weeks, investors have been bombarded with a wave of updates as companies reported earnings results for the second calendar quarter. For technology investors, artificial intelligence (AI) remains the dominant theme fueling the sector higher.

As I write this (mid-day on Aug. 27), all of the “Magnificent Seven” have posted earnings — with the lone exception being Nvidia (NVDA -3.38%), which reports later today. Still, the breadcrumbs left by big tech point to an undeniable trend: Spending on AI infrastructure is accelerating.

While this is undeniably bullish for graphics processing unit (GPU) leaders like Nvidia and Advanced Micro Devices, it also creates a powerful tailwind for systems integration specialist Broadcom (AVGO -3.70%).

With Broadcom slated to report earnings on Sept. 4, I predict the stock is well-positioned to rally.

Let’s explore why I’m optimistic about Broadcom’s upcoming earnings report, and assess whether the stock is a compelling buy at current levels.

Follow big tech’s breadcrumbs

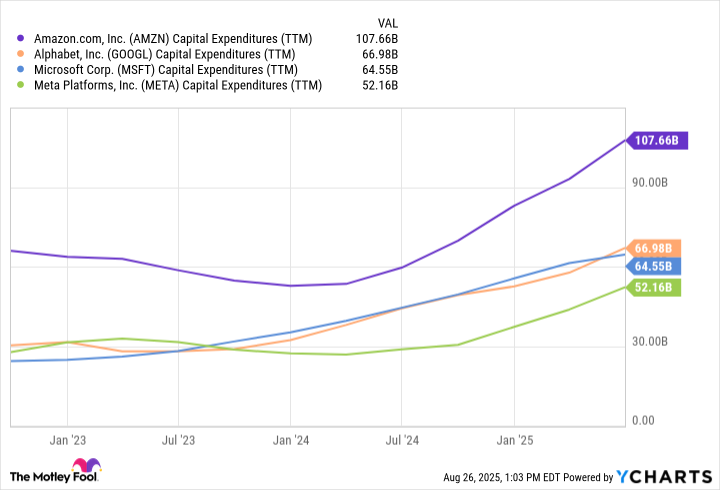

Global hyperscalers such as Amazon, Alphabet, Microsoft, and Meta Platforms have been spending record sums on capital expenditures (capex) over the last few years. While this clearly bodes well for Nvidia and AMD, Broadcom has also been a quiet beneficiary of rising AI infrastructure investment.

Data by YCharts.

One of Broadcom’s key AI growth drivers comes from its application-specific integrated circuits (ASICs) business. These custom silicon solutions allow customers to design chips that are optimized for their unique workloads.

By integrating purpose-built performance with compute power efficiency, Broadcom’s ASICs help hyperscalers lower their total cost of infrastructure relative to relying solely on off-the-shelf accelerators from the likes of Nvidia. This becomes highly desirable as training and inferencing workloads scale and become increasingly complex as more sophisticated AI use cases unfold.

Broadcom’s networking division is also positioned to benefit materially from the ongoing AI infrastructure cycle. As big tech continues to pour hundreds of billions of dollars annually into GPU deployment, Broadcom’s supporting infrastructure becomes an indispensable unsung hero.

The company’s portfolio of high-performance switches, interconnects, and optical components delivers low-latency, high-bandwidth connectivity to keep next-generation accelerators running at full speed. In essence, the company’s networking gear represents a foundational layer of AI data center construction — ensuring scalability and efficiency as workloads expand.

Image source: Getty Images.

Management likes the stock — shouldn’t you?

With a forward price-to-earnings (P/E) multiple of 45, Broadcom certainly isn’t trading at a discount. In fact, its multiple sits near peak levels seen during the AI revolution.

Data by YCharts.

Even so, the company’s board of directors authorized a $10 billion stock buyback program back in April. Share buybacks at elevated valuations can send a strong signal: Management remains confident in Broadcom’s long-term growth trajectory, underscored by ongoing hyperscaler investment. On a more subtle note, companies sometimes repurchase their own shares when management thinks the stock is undervalued.

These dynamics could suggest that Broadcom is positioned for sustained, robust earnings growth, which could fuel further valuation expansion — even in the face of a premium multiple.

Is Broadcom stock a buy right now?

For the last few years, the AI trade has largely surrounded Nvidia and the cloud hyperscalers. Yet as infrastructure spending accelerates, the scope of the AI opportunity is broadening to other mission-critical enablers such as Broadcom. Custom chips, high-performance networking equipment, and integrated systems are now just as essential as securing GPUs — and Broadcom sits squarely at this intersection.

In my eyes, Broadcom is approaching its own “Nvidia moment” — a potential inflection where the narrative begins to recognize Broadcom as a supporting pillar of AI infrastructure and not simply an ancillary beneficiary of these tailwinds.

Against this backdrop, I predict that Broadcom’s September earnings report will reinforce its strategic importance in the AI landscape — fueling investor enthusiasm and a further rerating of the stock. For these reasons, I see Broadcom as a compelling opportunity to buy and hold over a long-term time horizon.

Adam Spatacco has positions in Alphabet, Amazon, Meta Platforms, Microsoft, and Nvidia. The Motley Fool has positions in and recommends Advanced Micro Devices, Alphabet, Amazon, Meta Platforms, Microsoft, and Nvidia. The Motley Fool recommends Broadcom and recommends the following options: long January 2026 $395 calls on Microsoft and short January 2026 $405 calls on Microsoft. The Motley Fool has a disclosure policy.

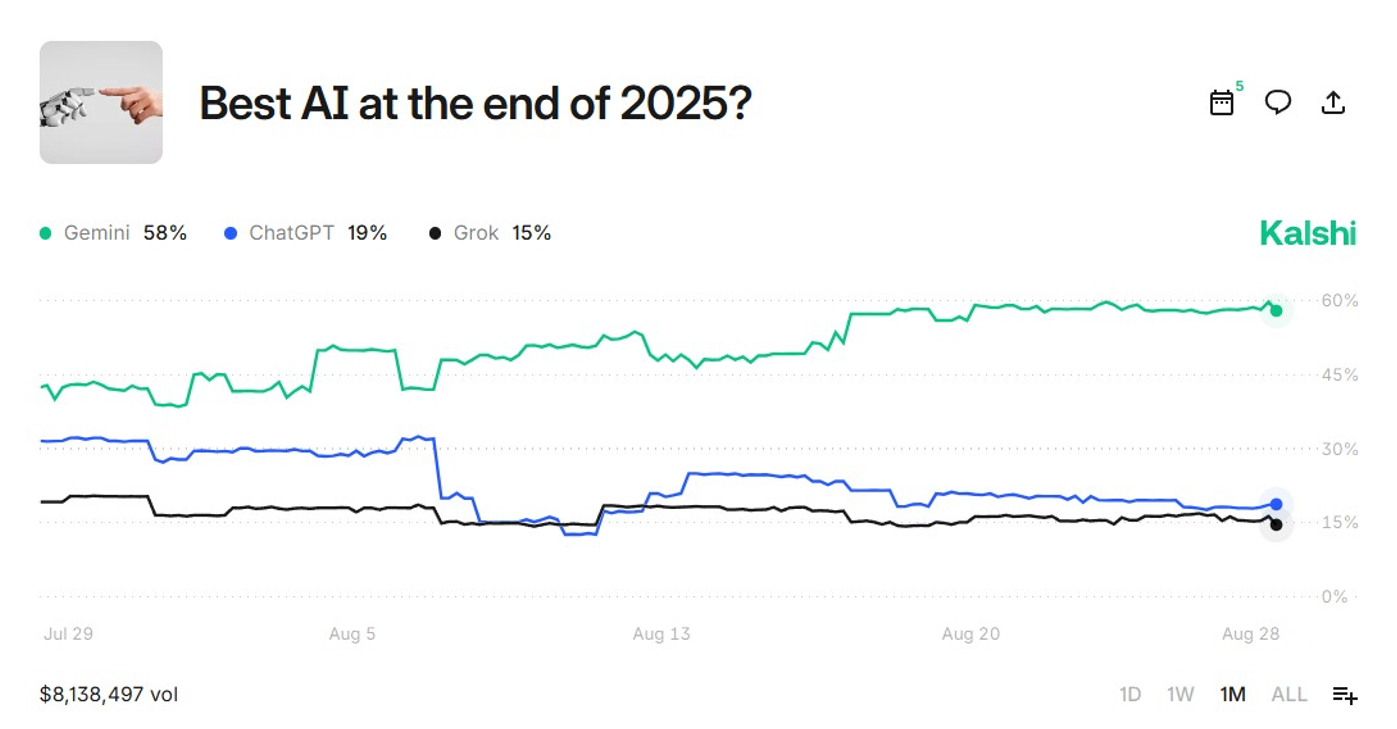

AI Model Betting Is the New Fantasy Football

Sports gambling has DraftKings. Political junkies have PredictIt. And now the world’s nerdiest corner — the artificial intelligence (AI) scene — has its own set of bettors, where people wager actual money on whether Google’s Gemini will dunk on OpenAI’s GPT-5 this month.
