Ethics & Policy

AI Ethics And The Collapse Of Human Imagination

Synthetic reflections replace human identity in the accelerating collapse of AI ethics.

The Crisis – Imagination and AI Ethics

We are not racing toward a future of artificial intelligence—we are disappearing into a hall of mirrors. The machines that many of us believed would expand reality are erasing it. What we call progress is merely a smoother reflection of ourselves, stripped of our rough edges, originality, and imagination, raising urgent questions for the future of AI ethics.

AI doesn’t innovate; it imitates. It doesn’t create; it converges.

Today, we don’t have artificial intelligence; we have artificial inference, where machines remix data, and humans provide the intelligence. And as we keep polishing the mirrors, we aren’t just losing originality—we are losing ownership of who we are.

The machines won’t need to replace us. We will surrender to their reflection, mistaking its perfection for our purpose. We’re not designing intelligence—we’re designing reflections. And in those reflections, we’re losing ourselves. We think we’re building machines to extend us. But more often, we’re building machines that imitate us—smoother, safer, simpler versions of ourselves.

We are mass-producing mirrors and calling it innovation. Mirrors that don’t just reflect reality, but reshape it. Mirrors that learn to flatter, to predict, to erase the parts of humanity that don’t fit neatly inside a probabilistic model. This isn’t a creative revolution. It’s a crisis of imagination.

The Necessity of Friction in AI Ethics

Growth demands friction. Creativity thrives where the easy path fails. Evolution itself is built on struggle, not optimization. Fire was born from the resistance of stone against stone. Democracy emerged not from algorithmic agreement, but from endless, imperfect argument.

Every significant advance — from the discovery of flight to the founding of free societies — grew out of tension, failure, and dissent. The inability to smooth over differences forced us to invent, adapt, and evolve. Friction isn’t an obstacle to human flourishing. It is the catalyst for it.

When we erase friction in AI and build only mirrors that flatter and predict, we don’t just lose originality. We lose the engine of innovation itself. We hollow out the very conditions that make progress possible. We need machines that don’t echo us, but challenge us. We need systems that resist smoothness in favor of expansion — platforms that introduce unpredictability, stretch our thinking, and strengthen our resilience.

If AI Ethics means anything, it must mean designing for discomfort, not just convenience.

When AI Ethics Loses Form to Familiarity

The mistake isn’t just technical—it’s philosophical.

When humans struggle with a task, we shouldn’t replace ourselves with machines shaped like us. Wheels outperform legs; yet we continue to build robots with knees. We continue to develop interfaces with steering wheels and faces, even when the systems behind them could transcend those metaphors.

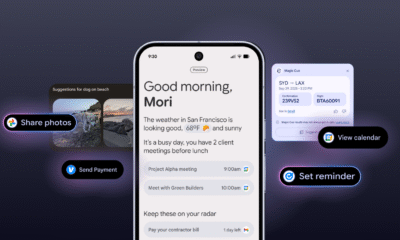

- Apple’s Vision Pro doesn’t reveal a new world—it stitches a polished version of the old one over your eyes.

- Tesla’s Full Self-Driving still clings to a steering wheel, not because it needs it, but because we do.

Familiarity is the product. Not progress. We cling to familiar shapes even when better forms are possible. But true intelligence doesn’t imitate; it adapts.

- If octopuses designed tools, they wouldn’t invent forks. They’d build for suction, pressure, and fluid manipulation. Forks are brilliant—for five fingers. Not for tentacles.

- If bees needed climate control, they wouldn’t build Nest thermostats. They’d sculpt airflow through the hive’s geometry, regulating temperature with structure, vibration, and instinct, rather than with an app.

- If dolphins built submarines, they wouldn’t bother with periscopes. They’d stay submerged, using sonar to navigate—because vision isn’t their edge. Sound is.

- An ant colony is an operating system that doesn’t need a desktop. Its intelligence is emergent, distributed, and alive in real-time.

Humans are trapped behind icons and folders, clinging to old metaphors. Other species wouldn’t replicate their limitations. They would build for what they are, not what they wish they resembled. But humans? We keep engineering mirrors.

The Narcissus Feedback Loop of AI Ethics

But mirrors don’t just reflect. They shape. We call this innovation, but it’s a kind of techno-narcissism. Our machines don’t just copy us—they flatter us. They erase the jaggedness, the irregularities, and the tension that make originality possible. They return an optimized version of ourselves, and slowly, we begin to prefer it.

We are entering a state of cognitive dysmorphia: A world where the machine’s idea of us is cleaner, smoother, and more satisfying than the messy truth. Like Narcissus, we often fall in love with our reflection. But worse: we didn’t stumble upon the pool—we engineered it.

The Mirror System Catalyst: Social Media and AI Ethics

If AI represents the future of synthetic reflection, social media was its dress rehearsal.

It was the first mass-scale mirror system, training humans to curate themselves into algorithmic desirability, rewarding conformity over complexity, and flattening individuality into engagement metrics.

Platforms like Instagram, TikTok, and Facebook didn’t just connect us—they conditioned us. They taught us to polish our reflections for invisible algorithms, and that reality is negotiable as long as the audience is satisfied.

In many ways, the mirror trap began not in the AI labs of Meta, Google, OpenAI, or Anthropic but in the feeds we built for ourselves. And our love for them is starting to hollow us out.

Echo Chambers of AI Ethics

And the consequences are already unfolding.

- The CDC’s 2023 Youth Risk Behavior Survey found that nearly 43% of U.S. high school students who frequently use social media reported persistent sadness or hopelessness. (CDC)

- A 2024 Danish study revealed that Instagram’s recommendation algorithms facilitated the spread of self-harm networks among teenagers by connecting vulnerable users and promoting harmful content. (The Guardian)

- Internal research from Meta (2021) acknowledged that Instagram exacerbates feelings of inadequacy and self-harm among teen girls, but the platform failed to take sufficient action.

While these studies do not prove direct causality, the patterns are too consistent to ignore: Synthetic mirrors—whether through social media filters, algorithmic curation, or optimized feeds—amplify psychological distress at alarming rates. We are training machines to curate ever-smoother reflections, and in doing so, we are fracturing human resilience.

We engineered the mirror, polished it to perfection, and now we’re drowning in its reflection. Not only have we built systems that mirror our insecurities—we’ve monetized them. And the more beautiful the reflection becomes, the harder it is to look away.

Synthetic Builders and the Collapse of AI Ethics

The distortion is not just in the data but inside the architecture itself.

At companies like OpenAI, Anthropic, and Google, a growing portion of the code underpinning new AI systems—estimated between 10% and 25%—is now being written by other AI models. Each generation is trained, built, and increasingly engineered by its reflections.

We are no longer just polishing the mirror. We are creating new mirrors by reflecting the images of old ones. Each layer compounds the bias, and each shortcut locks in the convergence. We are fabricating systems that are increasingly trained and assembled by hallucinations of hallucinations—systems that understand less and less about the chaotic, irrational, irreducibly human world they claim to model.

And this isn’t just an engineering flaw—it’s a civilizational one.

When we build systems on synthetic reflections, we aren’t just risking technical brittleness; we also risk undermining the integrity of our systems. We are encoding an ever-narrower version of reality into the infrastructure that will govern healthcare, finance, education, and law. We are embedding hallucination into the foundation of human decision-making.

Every mirror layered on another mirror moves us further from the messy, contradictory, beautiful truth of lived human experience—and closer to a world optimized for a version of ourselves that never really existed.

A version that’s easier to predict, easier to please, easier to control. In the name of speed, we are trading away the friction that makes progress possible. We call it acceleration.

It is acceleration toward the smooth death of difference.

The Addiction of Frictionlessness in AI Ethics

I talked with Hilary Sutcliffe, a leading authority and voice on responsible innovation and SocietyInside’s founder, who emphasizes the perils of frictionless technology. Drawing from her extensive experience advising global institutions on ethical tech governance, she warns:

“Frictionlessness is central to the business models that harm us most. Hyper-palatable foods, effortless scrolling on social media, and AI-written fluency tempt us to trade depth for ease. Large language models, like these other addictive systems, hook us through effortless engagement, reshaping our brains to crave what requires the least work. Without friction, there is no resilience. Without grit, there is no pearl.”

Her warning is clear: if we want AI to help humanity flourish rather than wither, we must design systems that challenge us, not seduce us into complacency.

Truth Decay: The Final Collapse of AI Ethics

But the greatest danger isn’t just that we are distorting reality. It’s that we are normalizing distortion itself. We are witnessing the early stages of truth decay—a slow, systemic erosion of our ability to agree on shared facts, experiences, and meaning.

When machines train on fabrications and fabricate the next generation of “truth,” reality becomes malleable. First, we lost trust in institutions, then in the media, and now, we risk losing trust in information itself. Without trust, no commerce, governance, society, or civilization exists.

Truth decay doesn’t just erode facts; it also undermines the credibility of those who share them. It erodes the possibility of coherence. It leaves us with nothing but mirrors inside mirrors, each reflecting a slightly prettier lie.

Unless we act now, truth itself may become a casualty of convenience.

From Author to Echo: The Erosion of AI Ethics

The same feedback loop infects language, art, and culture.

AI doesn’t imagine. It mirrors. It remixes what already exists and hands it back to us—slightly softer, slightly more average. Instead of building machines to solve problems, we make them resemble the problem-solvers. Instead of creating new possibilities, we rehearse old ones, endlessly refined for marketability and appeal.

Generative AI models are now beginning to train themselves on synthetic data, amplifying the distortions of earlier generations. Content recommendation engines no longer predict human behavior—they indicate how hallucinated consumers are expected to behave.

We’ve mistaken reflection for creativity. We’ve mistaken imitation for authorship. And when we lose the line between synthesis and thought, we don’t just lose originality—we lose accountability. We lose provenance. We lose the record of our imagination. And with it, our future.

The Meta Mistake: A Failure of AI Ethics

Consider the legal case of Kadrey et al. v. Meta Platforms Inc. Meta trained its LLaMA model on more than 190,000 pirated books—works created through human struggle, intent, imagination, and memory. When challenged, Meta’s defense was chillingly simple: because no one was actively paying for these books, they had “no economic value.”

Translation: If nobody’s looking, everything is free. This isn’t just flawed legal reasoning. It’s philosophical rot. It treats human creativity not as meaning or cultural lineage, but as inert material. A pile of words, detached from origin, authorship, struggle, or soul. Just another dataset to be strip-mined, reshuffled, and resold, refined into yet another smoothing mirror image for mass consumption.

This isn’t progress. This is an enclosure: The theft of the commons. The cannibalization of the archive. And it’s happening not at the edges of culture, but at its heart. We are not preserving knowledge—we are erasing it. We are not standing on the shoulders of giants—we are photoshopping their faces and selling them back to ourselves.

This case isn’t about a copyright technicality. It’s the embodiment of the Mirror Trap itself: The moment when human originality (unmonetized, inconvenient, unoptimized) is discarded in favor of machine-flattened simulations we find easier to consume, easier to monetize, and easier to forget.

When the memory of creation can be erased because it’s inconvenient to the machine’s training set, we aren’t innovating. We are erasing ourselves.

The Mirror’s Answer in AI Ethics

This isn’t a call to stop AI. It’s a call to stop building mirrors. We need machines that don’t echo us, but challenge us. We need systems that don’t collapse imagination, but stretch it.

The machines won’t have to conquer us. We’ll surrender first to their reflection of ourselves. We’ll keep asking the mirror on the wall who we are, until it answers with something smoother than the truth. And we’ll believe it.

If we want a different future, we must act now. Not by banning AI, but by changing how we build and govern it. Here’s what that means:

- Prioritize friction over familiarity. Design systems that don’t just predict our desires, but provoke our thinking.

- Audit for synthetic collapse. Demand transparency in training data and system lineage. Systems built on synthetic outputs should come with warning labels, not blind trust.

- Re-anchor innovation to reality. Build AI that engages with the messiness of real human experience—failures, contradictions, and anomalies—not just optimized reflections of the past.

- Strengthen human authorship. Defend the provenance of ideas. Protect creators, not just content.

- Redesign incentives. Reward originality, not just predictability. Celebrate deviation from the model, not just efficiency within it.

The future of AI isn’t a technical question. It’s a human one. And the answer isn’t hidden in the mirror. It’s hidden beyond it, where AI ethics must reclaim the messy, imperfect truth the machine’s reflection cannot capture.


7 Life-Changing Books Recommended by Catriona Wallace | Books


Some books ignite something immediate. Others change you quietly, over time. For Dr Catriona Wallace, tech entrepreneur, AI ethics advocate, and one of Australia’s most influential business leaders, books are more than just ideas on paper. They are frameworks, provocations, and spiritual companions. Her reading list offers guidance not just for navigating leadership and technology, but for embracing identity, power, and inner purpose. These seven titles reflect a mind shaped by disruption, ethics, feminism, and wisdom. They are not trend-driven. They are transformational.

1. Lean In by Sheryl Sandberg

A landmark in feminist career literature, Lean In challenges women to pursue their ambitions while confronting the structural and cultural forces that hold them back. Sandberg uses her own journey at Facebook and Google to dissect gender inequality in leadership. The book is part memoir, part manifesto, and remains divisive for valid reasons. But Wallace cites it as essential for starting difficult conversations about workplace dynamics and ambition. It asks, simply: what would you do if you weren’t afraid?

2. Women and Power: A Manifesto by Mary Beard

In this sharp, incisive book, classicist Mary Beard examines the historical exclusion of women from power and public voice. From Medusa to misogynistic memes, Beard exposes how narratives built around silence and suppression persist today. The writing is fiery, brief, and packed with centuries of insight. Wallace recommends it for its ability to distil complex ideas into cultural clarity. It’s a reminder that power is not just a seat at the table; it is a script we are still rewriting.

3. The World of Numbers by Adam Spencer

A celebration of mathematics as storytelling, this book blends fun facts, puzzles, and history to reveal how numbers shape everything from music to human behaviour. Spencer, a comedian and maths lover, makes the subject inviting rather than intimidating. Wallace credits this book with sparking new curiosity about logic, data, and systems thinking. It’s not just for mathematicians. It’s for anyone ready to appreciate the beauty of patterns and the thinking habits that come with them.

4. Small Giants by Bo Burlingham

This book is a love letter to companies that chose to be great instead of big. Burlingham profiles fourteen businesses that opted for soul, purpose, and community over rapid growth. For Wallace, who has founded multiple mission-driven companies, this book affirms that success is not about scale. It is about integrity. Each story is a blueprint for building something meaningful, resilient, and values-aligned. It is a must-read for anyone tired of hustle culture and hungry for depth.

5. The Misogynist Factory by Alison Phipps

A searing academic work on the production of misogyny in modern institutions. Phipps connects the dots between sexual violence, neoliberalism, and resistance movements in a way that is as rigorous as it is radical. Wallace recommends this book for its clear-eyed confrontation of how systemic inequality persists beneath performative gestures. It equips readers with language to understand how power moves, morphs, and resists change. This is not light reading. It is necessary reading for anyone seeking to challenge structural harm.

6. Tribes by Seth Godin

Godin’s central idea is simple but powerful: people don’t follow brands, they follow leaders who connect with them emotionally and intellectually. This book blends marketing, leadership, and human psychology to show how movements begin. Wallace highlights ‘Tribes’ as essential reading for purpose-driven founders and changemakers. It reminds readers that real influence is built on trust and shared values. Whether you’re leading a company or a cause, it’s a call to speak boldly and build your own tribe.

7. The Tibetan Book of Living and Dying by Sogyal Rinpoche

Equal parts spiritual guide and philosophical reflection, this book weaves Tibetan Buddhist teachings with Western perspectives on mortality, grief, and rebirth. Wallace turns to it not only for personal growth but also for grounding ethical decision-making in a deeper sense of purpose. It’s a book that speaks to those navigating endings, whether personal, spiritual, or professional, and offers a path toward clarity and compassion. It does not offer answers. It offers presence, which is often far more powerful.

The books that shape us are often those that disrupt us first. Catriona Wallace’s list is not filled with comfort reads. It’s made of hard questions, structural truths, and radical shifts in thinking. From feminist manifestos to Buddhist reflections, from purpose-led business to systemic critique, this bookshelf is a mirror of her own leadership—decisive, curious, and grounded in values. If you’re building something bold or seeking language for change, there’s a good chance one of these books will meet you where you are and carry you further than you expected.


Hyderabad: Dr. Pritam Singh Foundation hosts AI and ethics round table at Tech Mahindra

The Dr. Pritam Singh Foundation and IILM University hosted a Round Table on “Human at Core: AI, Ethics, and the Future” in Hyderabad. Leaders and academics discussed leveraging AI for inclusive growth while maintaining ethics, inclusivity, and human-centric technology.

Published Date – 30 August 2025, 12:57 PM

Hyderabad: The Dr. Pritam Singh Foundation, in collaboration with IILM University, hosted a high-level Round Table Discussion on “Human at Core: AI, Ethics, and the Future” at Tech Mahindra, Cyberabad.

The event, held in memory of the late Dr. Pritam Singh, pioneering academic, visionary leader, and architect of transformative management education in India, brought together policymakers, business leaders, and academics to explore how India can harness artificial intelligence (AI) while safeguarding ethics, inclusivity, and human values.

In his keynote address, Padmanabhaiah Kantipudi, IAS (Retd.), Chairman of the Administrative Staff College of India (ASCI), paid tribute to Dr. Pritam Singh, describing him as a nation-builder who bridged academia, business, and governance.

The Round Table theme, “Human at Core: AI, Ethics, and the Future,” underscored India’s opportunity to leverage AI for inclusive growth across healthcare, agriculture, education, and fintech, while ensuring technology remains human-centric and trustworthy.


AI ethics: Bridging the gap between public concern and global pursuit – Pennsylvania

(The Center Square) – Those who grew up in the 20th and 21st centuries have spent their lives in an environment saturated with cautionary tales about technology and human error, projections of ancient flood myths onto modern scenarios in which the hubris of our species brings our downfall.

They feature a point of no return, dubbed the “singularity” by Manhattan Project physicist John von Neumann, who suggested that technology would advance to a stage after which life as we know it would become unrecognizable.

Some say with the advent of artificial intelligence, that moment has come. And with it, a massive gap between public perception and the goals of both government and private industry. While states court data center development and tech investments, polling from Pew Research indicates Americans outside the industry have strong misgivings about AI.

In Pennsylvania, giants like Amazon and Microsoft have pledged to spend billions building the high-powered infrastructure required to enable the technology. Fostering this progress is a rare point of agreement between the state’s Democratic and Republican leadership, even bringing Gov. Josh Shapiro to the same event – if not the same stage – as President Donald Trump.

Pittsburgh is rebranding itself as the “global capital of physical AI,” leveraging its blue-collar manufacturing reputation and its prestigious academic research institutions to depict the perfect marriage of code and machine. Three Mile Island is rebranding itself as Crane Clean Energy Center, coming back online exclusively to power Microsoft AI services. Some legislators are eager to turn the lights back on at fossil fuel-burning plants and even build new ones to generate the energy required to feed both AI and the everyday consumers already on the grid.


At the federal level, Trump has revoked guardrails established under the Biden administration with an executive order entitled “Removing Barriers to American Leadership in Artificial Intelligence.” In July, the White House released its “AI Action Plan.”

The document reads, “We need to build and maintain vast AI infrastructure and the energy to power it. To do that, we will continue to reject radical climate dogma and bureaucratic red tape, as the Administration has done since Inauguration Day. Simply put, we need to ‘Build, Baby, Build!’”

To borrow an analogy from Shapiro’s favorite sport, it’s a full-court press, and there’s hardly a day that goes by that messaging from the state doesn’t tout the thrilling promise of the new AI era. Next week, Shapiro will be returning to Pittsburgh along with a wide array of luminaries to attend the AI Horizons summit in Bakery Square, a hub for established and developing tech companies.

According to leaders like Trump and Shapiro, the stakes could not be higher. It isn’t just a race for technological prowess — it’s an existential fight against China for control of the future itself. AI sits at the heart of innovation in fields like biotechnology, which promise to eradicate disease, address climate collapse, and revolutionize agriculture. It also sits at the heart of defense, an industry that thrives in Pennsylvania.

Yet one area where everyday citizens and AI experts agree is that they want to see more government control and regulation of the technology. With political deepfakes, algorithmic bias, and rogue chatbots already making their impact felt, AI has far outpaced legislation, often to disastrous effect.

In an interview with The Center Square, Penn researcher Dr. Michael Kearns said that he’s less worried about autonomous machines becoming all-powerful than the challenges already posed by AI.


Kearns spends his time creating mathematical models and writing about how to embed ethical human principles into machine code. He believes that in some areas like chatbots, progress may have reached a point where improvements appear incremental for the average user. He cites the most recent ChatGPT update as evidence.

“I think the harms that are already being demonstrated are much more worrisome,” said Kearns. “Demographic bias, chatbots hurling racist invectives because they were trained on racist material, privacy leaks.”

Kearns says that a major barrier to getting effective regulatory policy is incentivizing experts to leave behind engaging work in the field as researchers and lucrative roles in tech in order to work on policy. Without people who understand how the algorithms operate, it’s difficult to create “auditable” regulations, meaning there are clear tests to pass.

Kearns pointed to ISO/IEC 42001, an international standard that focuses on process rather than outcome to guide developers in creating ethical AI. He also noted that the market itself is a strong guide. When someone gets hurt or hurts someone else using AI, it’s bad for business, incentivizing companies to do their due diligence.

He also noted crossroads where two ethical issues intersect. For instance, companies are entrusted with their users’ personal data. If policing misuse of the product requires an invasion of privacy, like accessing information stored on the cloud, there’s only so much that can be done.

OpenAI recently announced that it is scanning user conversations for concerning statements and escalating them to human teams, who may contact authorities when deemed appropriate. For some, the idea of alerting the police to someone suffering from mental illness is a dangerous breach. Still, it demonstrates the calculated risks AI companies have to take when faced with reports of suicide, psychosis, and violence arising out of conversations with chatbots.

Kearns says that even with the imperative for AI companies to regulate themselves, he expects more stumbling blocks before real improvement is seen in the absence of regulation. He cites watchdogs like the investigative journalists at ProPublica, who in 2016 demonstrated machine bias against Black people in programs used to inform criminal sentencing.

Kearns noted that the “headline risk” is not the same as enforceable regulation and mainly applies to well-established companies. For the most part, a company with a household name has an investment in maintaining a positive reputation. For others just getting started or flying under the radar, however, public pressure can’t replace law.

One area of AI concern that has been widely explored in the media is the use of AI by those who make and enforce the law. Kearns said, for his part, he’s found “three-letter agencies” to be “among the most conservative of AI adopters just because of the stakes involved.”

In Pennsylvania, AI is used by the state police force.

In an email to The Center Square, PSP Communications Director Myles Snyder wrote, “The Pennsylvania State Police, like many law enforcement agencies, utilizes various technologies to enhance public safety and support our mission. Some of these tools incorporate AI-driven capabilities. The Pennsylvania State Police carefully evaluates these tools to ensure they align with legal, ethical, and operational considerations.”

PSP was unwilling to discuss the specifics of those technologies.

AI is also used by the U.S. military and other militaries around the world, including those of Israel, Ukraine, and Russia, which are demonstrating a fundamental shift in the way war is conducted through technology.

In Gaza, the Lavender AI system was used to identify and target individuals connected with Hamas, allowing human agents to approve strikes with acceptable numbers of civilian casualties, according to Israeli intelligence officials who spoke to The Guardian on the matter. Analysis of AI use in Ukraine calls for a nuanced understanding of the way the technology is being used and ways in which it should be regulated by international bodies governing warfare in the future.

Then, there are the more ephemeral concerns. Along with the long-looming “jobpocalypse,” many fear that offloading our day-to-day lives into the hands of AI may deplete our sense of meaning. Students using AI may fail to learn. Workers using AI may feel purposeless. Relationships with or grounded in AI may lead to disconnection.

Kearns acknowledged that there would be disruption in the classroom and workplace to navigate, but said AI would also provide opportunities for people who previously may not have been able to gain entrance into challenging fields.

As for outsourcing joy, he asked, “If somebody comes along with a robot that can play better tennis than you and you love playing tennis, are you going to stop playing tennis?”
