AI Insights

NCCN holds AI policy summit

The National Comprehensive Cancer Network (NCCN) hosted a policy summit in Washington, DC, on September 9 to explore the role of AI in cancer care both now and in the future.

Participants, including patients and patient advocates, clinicians, and policymakers, discussed AI’s emerging success in improving oncology care, as well as areas of possible concern.

Topics included implementation and integration across different platforms, oversight (both internal and governmental), and avoiding disparities while increasing access to AI-based software.

Participants also highlighted collaboration between medical and technology organizations in creating and implementing AI tools for oncology, noting that many of the challenges faced by diagnostic AI software have also arisen in other fields that use AI applications.

The speed at which AI models are evolving was a common theme among panelists, NCCN said, with some comparing AI’s potential to earlier advances in care that represented major technological shifts, such as the transition to electronic medical records.

AI and cancer care was also the topic of a plenary session during the NCCN 2025 Annual Conference. Sessions are available for viewing at the NCCN Continuing Education Portal.

NCCN will be hosting a Patient Advocacy Summit on December 9 on the cancer care needs of veterans and first responders.

To ChatGPT or not to ChatGPT: Professors grapple with AI in the classroom

As shopping period settles, students may notice a new addition to many syllabi: an artificial intelligence policy. As one of his first initiatives as associate provost for artificial intelligence, Michael Littman PhD’96 encouraged professors to implement guidelines for the use of AI.

In an Aug. 20 letter to faculty, Littman also recommended that professors “discuss (their) expectations in class” and “think about (their) stance around the use of AI.” But professors on campus have applied this advice in different ways, reflecting a range of attitudes toward AI.

In her nonfiction classes, Associate Teaching Professor of English Kate Schapira MFA’06 prohibits AI usage entirely.

“I teach nonfiction because evidence … clarity and specificity are important to me,” she said. In her view, AI threatens these principles at a time “when they are especially culturally devalued” nationally.

She added that the risks of overreliance on AI extend beyond the classroom. “It can get someone fired. It can screw up someone’s medication dosage. It can cause someone to believe that they have justification to harm themselves or another person,” she said.

Nancy Khalek, an associate professor of religious studies and history, said she is intentionally designing assignments that are not suitable for AI usage. Instead, she wants students “to engage in reflective assignments, for which things like ChatGPT and the like are not particularly useful or appropriate.”

Khalek said she considers herself an “AI skeptic” — while she acknowledged the tool’s potential, she expressed opposition to “the anti-human aspects of some of these technologies.”

But AI policies vary within and across departments.

Professors “are really struggling with how to create good AI policies, knowing that AI is here to stay, but also valuing some of the intermediate steps that it takes for a student to gain knowledge,” said Aisling Dugan PhD’07, associate teaching professor of biology.

In her class, BIOL 0530: “Principles of Immunology,” Dugan said she lets students choose whether to use artificial intelligence on some assignments but requires them to critique their own AI-generated work.

She said this reflection “is a skill that I think we’ll be using more and more of.”

Dugan added that she thinks AI can serve as a “study buddy” for students. She has been working with her teaching assistants to develop an AI chatbot for her classes, which she hopes will eventually answer student questions and supplement the study videos made by her TAs.

Still, Dugan shared concerns about AI in classrooms. “It kind of misses the mark sometimes,” she said, “so it’s not as good as talking to a scientist.”

For some assignments, like primary literature readings, she has a firm no-AI policy, noting that comprehending primary literature is “a major pedagogical tool in upper-level biology courses.”

“There’s just some things that you have to do yourself,” Dugan said. “It (would be) like trying to learn how to ride a bike from AI.”

Assistant Professor of the Practice of Computer Science Eric Ewing PhD’24 is also trying to balance the ways AI can both support and inhibit student learning.

This semester, his courses, CSCI 0410: “Foundations of AI and Machine Learning” and CSCI 1470: “Deep Learning,” heavily focus on artificial intelligence. He said assignments are no longer “measuring the same things,” since “we know students are using AI.”

While he does not allow students to use AI on homework, his classes offer projects that allow them “full rein” use of AI. This way, he said, “students are hopefully still getting exposure to these tools, but also meeting our learning objectives.”

Ewing added that the skills required of graduates are shifting, as the growing presence of AI in the professional world demands a different toolkit.

He believes students in upper-level computer science classes should be allowed to use AI in their coding assignments. “If you don’t use AI at the moment, you’re behind everybody else who’s using it,” he said.

Ewing said he identifies AI policy violations through code similarity. Last semester, he found that 25 students had similarly structured code; ultimately, 22 of those 25 admitted to using AI.

Littman also gave professors guidance on how to identify dishonest use of AI, pointing to various detection tools.

“I personally don’t trust any of these tools,” Littman said. In his introductory letter, he also advised faculty not to be “overly reliant on automated detection tools.”

Although she does not use detection tools, Schapira lays out specific reasons in her syllabi not to use AI, in an effort to persuade students to comply with her policy.

“If you’re in this class because you want to get better at writing — whatever “better” means to you — those tools won’t help you learn that,” her syllabus reads. “It wastes water and energy, pollutes heavily, is vulnerable to inaccuracies and amplifies bias.”

Beyond environmental costs, Dugan was also concerned about the ethical implications of AI technology.

Khalek also expressed her concerns “about the increasingly documented mental health effects of tools like ChatGPT and other LLM-based apps.” In her course, she discussed with students how engaging with AI can “resonate emotionally and linguistically, and thus impact our sense of self in a profound way.”

Students in Schapira’s class can also present “collective demands” if they find the structure of her course overwhelming. “The solution to the problem of too much to do is not to use an AI tool. That means you’re doing nothing. It’s to change your conditions and situations with the people around you,” she said.

“There are ways to not need (AI),” Schapira continued. “Because of the flaws that (it has) and because of the damage (it) can do, I think finding those ways is worth it.”

This Artificial Intelligence (AI) Stock Could Outperform Nvidia by 2030

When investors think about artificial intelligence (AI) and the chips powering this technology, one company tends to dominate the conversation: Nvidia (NASDAQ: NVDA). It has become an undisputed barometer for AI adoption, riding the wave with its industry-leading GPUs and the sticky ecosystem of its CUDA software, which keeps developers in its orbit. Since the launch of ChatGPT about three years ago, Nvidia stock has surged nearly tenfold.

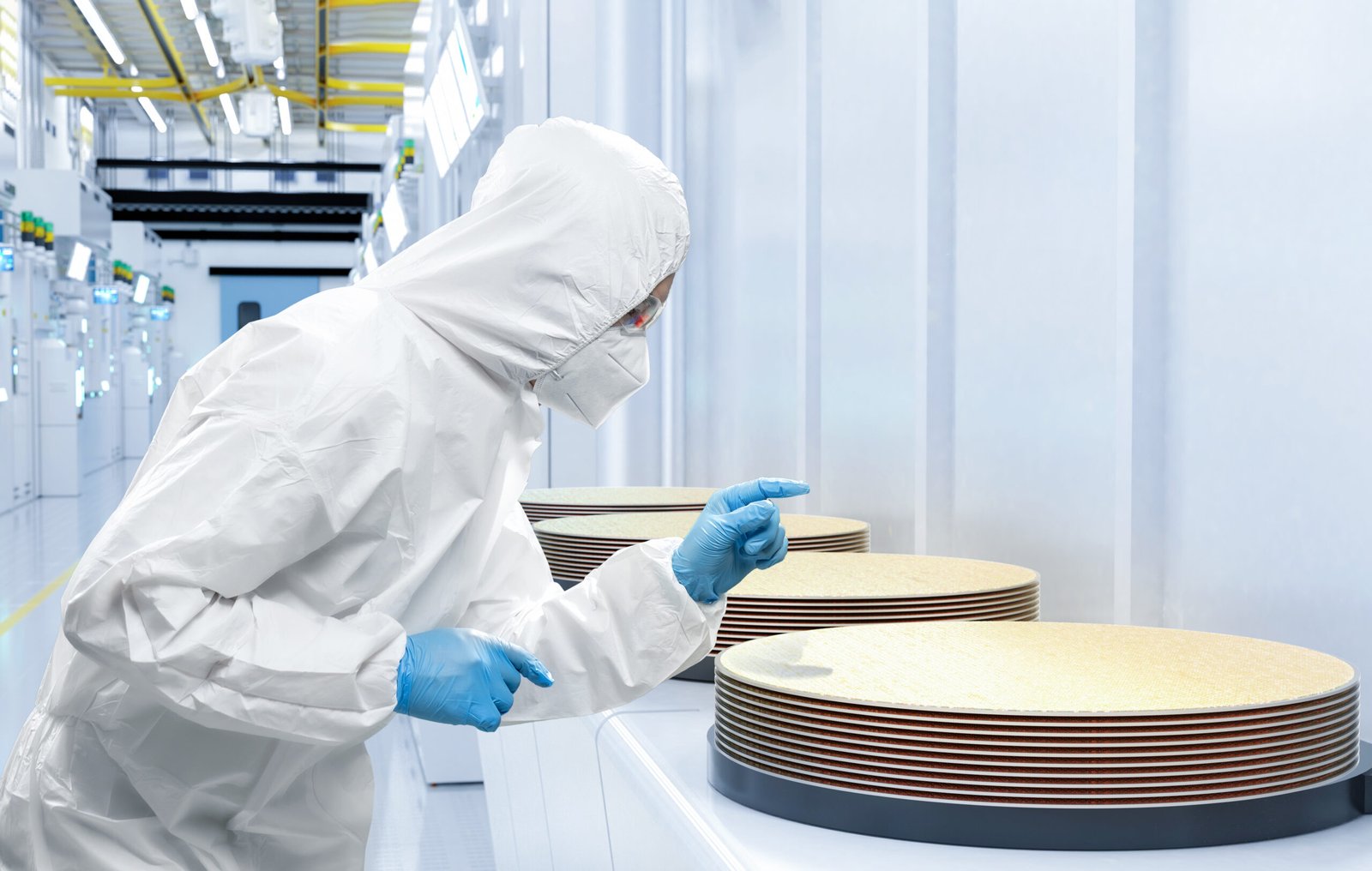

Here’s the twist: While Nvidia commands the spotlight today, it may be Taiwan Semiconductor Manufacturing (NYSE: TSM) that holds the real keys to growth as we look toward the next decade. Below, I’ll unpack why Taiwan Semi — or TSMC, as it’s often called — isn’t just riding the AI wave, but rather is building the foundation that brings the industry to life.

What makes Taiwan Semi so critical is its role as the backbone of the semiconductor ecosystem. Its foundry operations serve as the lifeblood of the industry, transforming complex chip designs into the physical processors that power myriad generative AI applications.

Source: Fool.com

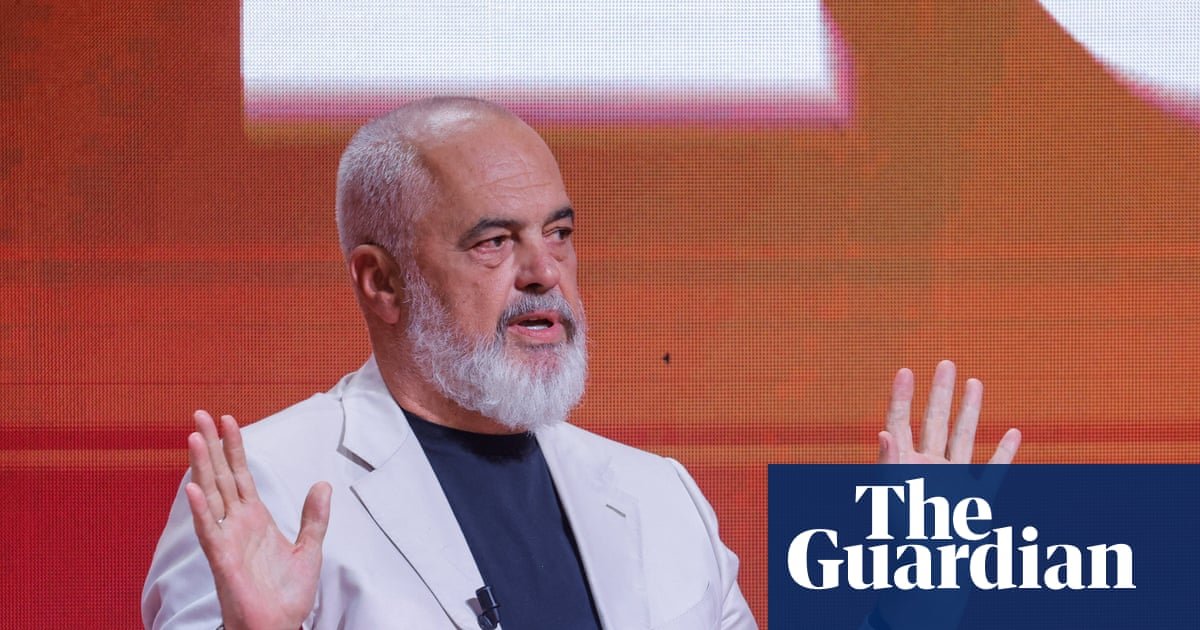

Albania puts AI-created ‘minister’ in charge of public procurement

A digital assistant that helps people navigate government services online has become the first “virtually created” AI cabinet minister and has been put in charge of public procurement in an attempt to cut down on corruption, the Albanian prime minister has said.

Diella, which means “sun” in Albanian, has been advising users on the state’s e-Albania portal since January, using voice commands to help them with the full range of bureaucratic tasks needed to access about 95% of citizen services digitally.

“Diella, the first cabinet member who is not physically present, but has been virtually created by AI”, would help make Albania “a country where public tenders are 100% free of corruption”, Edi Rama said on Thursday.

Announcing the makeup of his fourth consecutive government at the ruling Socialist party conference in Tirana, Rama said Diella, who on the e-Albania portal is dressed in traditional Albanian costume, would become “the servant of public procurement”.

Responsibility for deciding the winners of public tenders would be removed from government ministries in a “step-by-step” process and handled by artificial intelligence to ensure “all public spending in the tender process is 100% clear”, he said.

Diella would examine every tender in which the government contracts private companies and objectively assess the merits of each, said Rama, who was re-elected in May and has previously said he sees AI as a potentially effective anti-corruption tool that would eliminate bribes, threats and conflicts of interest.

Public tenders have long been a source of corruption scandals in Albania, which experts say is a hub for international gangs seeking to launder money from trafficking drugs and weapons and where graft has extended into the upper reaches of government.

Albanian media praised the move as “a major transformation in the way the Albanian government conceives and exercises administrative power, introducing technology not only as a tool, but also as an active participant in governance”.

Not everyone was convinced, however. “In Albania, even Diella will be corrupted,” commented one Facebook user.
