AI Insights

Increased artificial intelligence usage prompts lawmakers to push for regulation

When Catherine Hanaway stepped forward after being announced as Missouri’s next attorney general, she mentioned that her office would see how artificial intelligence programs could make the office run more efficiently.

Hanaway pointed out that attorneys can now use AI programs to quickly organize information in a manner that could have taken hours, or even days, of work.

There’s no question that artificial intelligence programs like ChatGPT, Google Gemini and Grok have enormous potential to change the way people consume and organize information, as well as create informative and entertaining content. But many officials are calling for regulation.

During an episode of the Politically Speaking Hour on St. Louis on the Air, Oliver Roberts of the WashU Law AI Collaborative discussed why lawmakers want to place statutory guardrails around artificial intelligence. That includes U.S. Sen. Josh Hawley, who introduced legislation allowing people to sue technology companies or individuals who feed their data into AI programs without consent.

Roberts said lawmakers wanting to protect certain information, like email addresses or login information, from being fed into AI systems makes sense and likely provides assurances to people using certain programs.

But he added that there is ambiguity around whether placing copyrighted work into AI systems without explicit permission of the creator are protected by what’s known as Fair Use. That’s the legal standard that allows people to use copyrighted material legally.

“This is an ongoing debate, and it’s currently making its way through the courts. We now have over 48 copyright lawsuits, and they all have different iterations,” Roberts said. “All these cases do have their own nuances, and what really complicates it is the affirmative defense that’s being used by these AI companies is Fair Use. And the application of those Fair Use factors, which creates an exception to copyright law, those in themselves are often more amorphous, and they’re not always applied consistently, and they could be fact specific.

“So this is kind of a gray area of the law that the courts are currently making their way through the courts,” he added.

Kate Grumke / St. Louis Public Radio

/

St. Louis Public Radio

The environmental cost

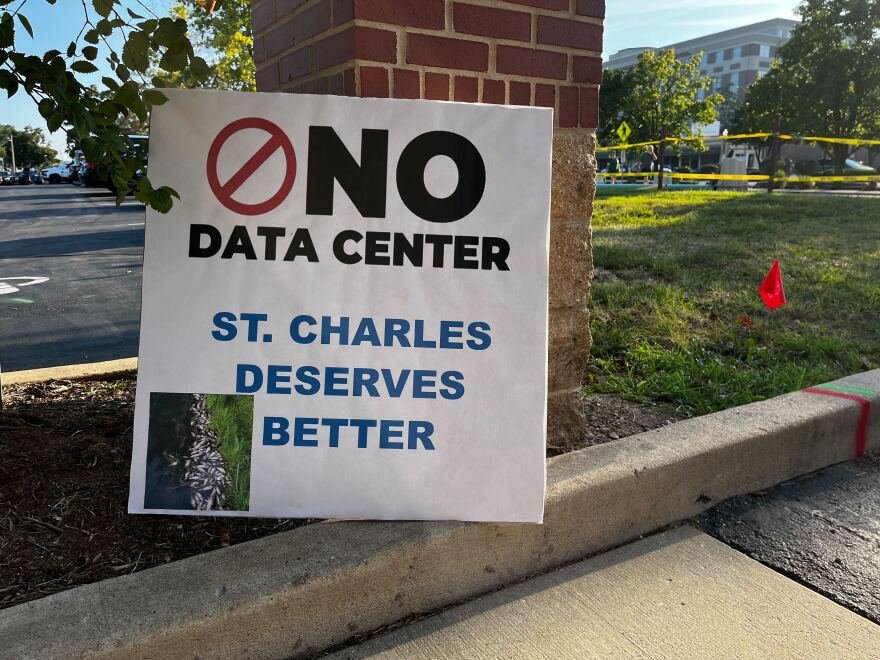

During the Politically Speaking Hour on St. Louis on the Air conversation, St. Louis Public Radio’s Kate Grumke detailed the immense backlash over a now-scuttled data center in St. Charles County.

In particular, she talked about the widespread fears from environmentalists about how the infrastructure needed to run artificial intelligence programs could consume massive amounts of power and water.

“To put this in perspective, last year, Ameren’s largest customer had a peak electricity load of 32 megawatts in Kansas City. There’s a Google data center that’s being built that is going to be around 400 megawatts,” Grumke said. “So these are just humongous increases in the amount of electricity that might be needed. And then on top of that, these systems get pretty hot, and so they also use water to cool them.”

Missouri lawmakers, such as state Rep. Collin Wellenkamp, R-St. Charles County, expect robust debate in the General Assembly about whether to place statutory regulations on artificial intelligence.

That comes after a provision that would have severely restricted states from passing laws regulating AI failed to make it into President Donald Trump’s One Big Beautiful Bill, which Roberts said was prompted by the release of the Chinese-made DeepSeek AI program.

“So the AI debate then became a matter of national security, became a geopolitical issue, and that’s when these AI companies started pushing for federal preemption,” Roberts said. “Because in all these different states right now, there’s over 1,000 pending AI regulations, and these companies are going to navigate potentially 50 different frameworks of regulation. And these AI companies say that’s going to kill our ability to expand and grow and compete with China.”

“St. Louis on the Air” brings you the stories of St. Louis and the people who live, work and create in our region. The show is produced by Miya Norfleet, Emily Woodbury, Danny Wicentowski, Elaine Cha and Alex Heuer. Darrious Varner is our production assistant. The audio engineer is Aaron Doerr.

Copyright 2025 St. Louis Public Radio

AI Insights

Bitcoin Proxy’s Chief Seeks Funding Fix as ‘Flywheel’ Falters

Simon Gerovich, who turned a struggling Japanese hotelier into a Bitcoin stockpiler and investor darling, is feeling the heat.

Source link

AI Insights

Anthropic Settles Landmark Artificial Intelligence Copyright Case

Anthropic’s settlement came after a mixed ruling on the “fair use” where it potentially faced massive piracy damages for downloading millions of books illegally. The settlement seems to clarify an important principle: how AI companies acquire data matters as much as what they do with it.

After warning both the district court and an appeals court that the potential pursuit of hundreds of billions of dollars in statutory damages created a “death knell” situation that would force an unfair settlement, Anthropic has settled its closely watched copyright lawsuit with authors whose books were allegedly pirated for use in Anthropic’s training data. Anthropic’s settlement this week in a landmark copyright case may signal how the industry will navigate the dozens of similar lawsuits pending nationwide. While settlement details remain confidential pending court approval, the timing reveals essential lessons for AI development and intellectual property law.

The settlement follows Judge William Alsup’s nuanced ruling that using copyrighted materials to train AI models constitutes transformative fair use (essentially, using copyrighted material in a new way that doesn’t compete with the original) — a victory for AI developers. The court held that AI models are “like any reader aspiring to be a writer” who trains upon works “not to race ahead and replicate or supplant them — but to turn a hard corner and create something different.”

(For readers unfamiliar with copyright law, “fair use” is a legal doctrine that allows limited use of copyrighted material without permission for purposes like criticism, comment, or — as courts are now determining — AI training. A key test is whether the new use “transforms” the original work by adding something new or serving a different purpose, rather than simply copying it. Think of it as the difference between a critic quoting a novel to review it versus someone photocopying the entire book to avoid buying it.)

After ruling in Anthropic’s favor on this issue, Judge Alsup drew a bright line at acquisition methods. Anthropic’s downloading of over seven million books from pirate sites like LibGen constituted infringement, the judge ruled, rejecting Anthropic’s “research purpose” defense: “You can’t just bless yourself by saying I have a research purpose and, therefore, go and take any textbook you want.”

The settlement’s timing suggests a pragmatic approach to risk management. While Anthropic could claim vindication on training methodology, defending its acquisition methods before a jury posed substantial financial exposure. Statutory damages for willful infringement can reach $150,000 per work, creating potential liability for Anthropic totaling in the billions.

Anthropic is still facing copyright suits from music publishers, including Universal Music Corp. and Concord Music Group Inc., as well as Reddit. The settlement with authors removes one of Anthropic’s many legal challenges. Lawyers for the plaintiffs said, “[t]his historic settlement will benefit all class members,” promising to announce details in the coming weeks.

This settlement solidifies the principles established in Judge Alsup’s prior ruling: how AI companies acquire training data matters as much as what they do with it. The court’s framework permits AI systems to learn from human cultural output, but only through legitimate channels.

For practitioners advising AI projects and companies, the lesson is straightforward: document data sources meticulously and ensure the legitimate acquisition of data. AI companies that previously relied on scraped or pirated content face strong incentives to negotiate licensing agreements or develop alternative training approaches. Publishers and authors gain leverage to demand compensation, even as the fair use doctrine limits their ability to block AI training entirely.

The Anthropic settlement marks neither a total victory nor a defeat for either side, but rather a recognition of the complex realities governing AI and intellectual property. It also remains to be seen what impact it will have on similar pending cases, including whether this will create a pattern of AI companies settling when facing potential class actions. In this new landscape, the legitimacy of the process matters as much as the innovation of the outcome. That balance will define the next chapter of AI development. Under Anthropic, it is apparent that to maximize chances of AI models constituting fair use, developers should use a bookstore, not a pirate’s flag.

AI Insights

AI-powered stethoscopes can detect 3 types of heart conditions within seconds, say researchers – Anadolu Ajansı

-

Tools & Platforms3 weeks ago

Building Trust in Military AI Starts with Opening the Black Box – War on the Rocks

-

Ethics & Policy1 month ago

Ethics & Policy1 month agoSDAIA Supports Saudi Arabia’s Leadership in Shaping Global AI Ethics, Policy, and Research – وكالة الأنباء السعودية

-

Events & Conferences3 months ago

Events & Conferences3 months agoJourney to 1000 models: Scaling Instagram’s recommendation system

-

Jobs & Careers2 months ago

Jobs & Careers2 months agoMumbai-based Perplexity Alternative Has 60k+ Users Without Funding

-

Business1 day ago

Business1 day agoThe Guardian view on Trump and the Fed: independence is no substitute for accountability | Editorial

-

Funding & Business2 months ago

Funding & Business2 months agoKayak and Expedia race to build AI travel agents that turn social posts into itineraries

-

Education2 months ago

Education2 months agoVEX Robotics launches AI-powered classroom robotics system

-

Podcasts & Talks2 months ago

Podcasts & Talks2 months agoHappy 4th of July! 🎆 Made with Veo 3 in Gemini

-

Podcasts & Talks2 months ago

Podcasts & Talks2 months agoOpenAI 🤝 @teamganassi

-

Jobs & Careers2 months ago

Jobs & Careers2 months agoAstrophel Aerospace Raises ₹6.84 Crore to Build Reusable Launch Vehicle