Ethics & Policy

AI in finance: Balancing innovation and stability

AI’s growing role in finance

Artificial intelligence has emerged as a transformative force across many activities in today’s rapidly evolving digital finance world. Integrating AI into the financial system promises unprecedented efficiency gains and improved customer experience across banking and consumer finance, asset management, trading and insurance.

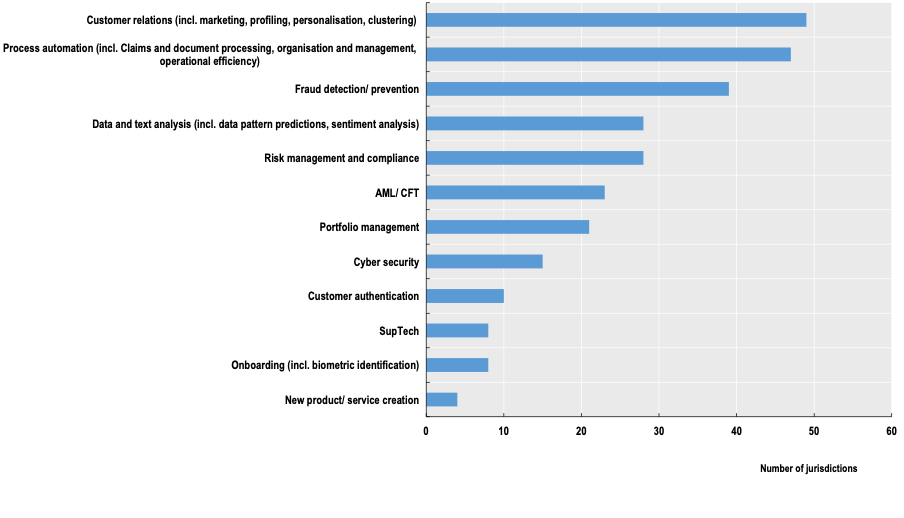

Examples of use cases and tasks involving AI in finance

Source: OECD (2024), “Regulatory approaches to Artificial Intelligence in finance”, OECD Artificial Intelligence Papers, No. 24, OECD Publishing, Paris, https://doi.org/10.1787/f1498c02-en.

The OECD-FSB Roundtable on AI in Finance, held in May 2024, provided an opportunity to hear from market participants about the extent to which they now use AI technologies and the benefits they see in areas such as risk modelling, trading, fraud detection, and financial crime prevention, including sanctions screening. Generative AI is not yet widely used, at least by regulated financial institutions; where it is deployed, it mainly serves to improve operational efficiency in back-office functions and to support experimentation.

However, like other forms of digital financial innovation, AI could create or amplify risks, which warrant close monitoring and assessment by policymakers. These include risks to market integrity, consumer and investor protection, and financial stability.

Regulating AI: Is finance special?

The complexities of using AI in finance make it essential to understand how policymakers approach the issue and its implications for the future of finance. The recent OECD report Regulatory Approaches to Artificial Intelligence in Finance provides a comprehensive overview of AI regulation in finance across 49 OECD and non-OECD jurisdictions.

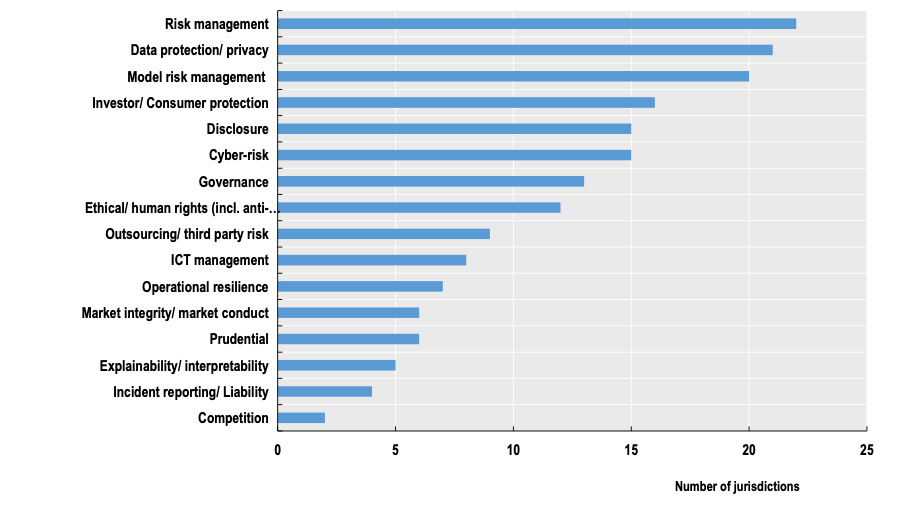

The vast majority of respondent jurisdictions have regulations that apply to the use of AI in finance. Since financial regulation follows a technology-neutral principle, existing laws, rules, and guidance remain applicable regardless of the technology. This includes laws and regulations on prudent business practices, consumer and investor protection, cybersecurity, and operational resilience, among other areas (Figure 1). Advances in technology do not render existing safety and soundness standards or compliance requirements obsolete.

General frameworks for risk management, especially model risk management, are prime examples of existing rules that may apply to AI-based models in finance. This was, in fact, the most common answer in a survey conducted for the study. This is hardly surprising, considering that Machine Learning (ML) models have been used in finance for decades.

Examples of areas covered by existing financial sector rules

Most jurisdictions surveyed have introduced some form of policy that covers AI in parts of finance, albeit in different forms. Some jurisdictions have introduced, or are in the process of introducing, AI legislation, such as the AI Act in the EU and laws in Brazil, Chile, and Colombia. These laws tend to be cross-sectoral and, in the case of the EU AI Act, have explicit provisions covering only part of the financial sector.

More than a dozen jurisdictions have non-binding policy guidance, such as principles, national strategies and white papers, which either specifically target financial activities or apply across sectors, including finance. It is important to note that most jurisdictions do not plan to introduce new regulations for AI in finance soon.

These different approaches are not mutually exclusive. Introducing explicit new policies for using AI in finance does not negate the applicability of existing rules. When AI is used in areas covered by existing rules or guidance, these rules or guidance should generally apply, whether the decision is made by AI (with or without human intervention), traditional models, or humans. In jurisdictions with binding legislation that covers finance, existing sector-specific regulations continue to apply without explicitly referencing AI.

The principle of proportionality also informs countries’ approaches to AI rules in finance and is embedded in their legal and regulatory frameworks. Proportionality allows compliance with rules to be assessed in a manner commensurate with the risk profiles and systemic importance of different financial sector participants. It stems from the need to limit public intervention to what is necessary to achieve the desired policy objectives.

Ensuring AI rules remain fit for purpose

Most respondents to the OECD survey did not identify gaps in their current regulatory and supervisory frameworks applicable to AI in finance. That said, there is consensus that this area requires continuous monitoring and assessment to ensure these frameworks remain fit for purpose.

Most respondent jurisdictions recognise the need to better assess whether existing rules that apply to AI in finance should be expanded to make deployment safer and more responsible. Due to AI’s complexity and innovation speed, this is expected to become more urgent.

This process will benefit from international co-operation and engagement of regulators and supervisors with industry, as advocated by the OECD AI Principles for developing and implementing AI policies.

At the implementation level, several countries recognise a potential need for additional guidance to assist entities in their compliance efforts. This is increasingly important given the unique issues raised by deploying more advanced AI tools in finance. For instance, such guidance could be particularly beneficial where existing rules leave gaps in mitigating risks, or where re-interpreting these rules or guidance would help achieve policy objectives. While only a few respondent jurisdictions have issued clarifications, they all acknowledge the benefits of providing such guidance.

Harnessing AI’s full potential in finance

Most respondent jurisdictions routinely review existing policy frameworks to ensure their effectiveness. This process can help them identify potential incompatibilities between various policies regarding the use of AI in finance (e.g., data regulation) and ensure regulatory alignment across relevant policy areas.

Policymakers in financial markets have extensive experience balancing productive innovation with a secure and vibrant financial system. AI innovation is no different. It is vital that they continue to closely monitor AI use in finance, including by evaluating current policy frameworks, to ensure that regulation is not a hurdle to responsible and productive AI innovation in finance while ensuring effective oversight and risk mitigation.

As we progress and encounter ongoing and rapid advancements in AI innovation, policymakers, financial institutions, and technology providers must collaborate to establish a robust and resilient policy framework to harness AI’s full potential, promote innovation, build trust, and enable the full realisation of productivity gains.

Read more at “Regulatory approaches to Artificial Intelligence in Finance,” OECD, 2024. Available at: https://www.oecd.org/en/publications/regulatory-approaches-to-artificial-intelligence-in-finance_f1498c02-en.html

The post AI in finance: Balancing innovation and stability appeared first on OECD.AI.


AI and ethics – what is originality? Maybe we’re just not that special when it comes to creativity?

I don’t trust AI, but I use it all the time.

Let’s face it, that’s a sentiment that many of us can buy into if we’re honest about it. It comes from Paul Mallaghan, Head of Creative Strategy at We Are Tilt, a creative transformation content and campaign agency whose clients include the likes of Diageo, KPMG and Barclays.

Taking part in a panel debate on AI ethics at the recent Evolve conference in Brighton, UK, he made another highly pertinent point when he said of people in general:

We know that we are quite susceptible to confident bullshitters. Basically, that is what ChatGPT [is] right now. There’s something that reminds me of the illusory truth effect, where if you hear something a few times, or you hear it said confidently, then you are much more likely to believe it, regardless of the source. I might refer to a certain President who uses that technique fairly regularly, but I think we’re so susceptible to that that we are quite vulnerable.

And, yes, it’s you he’s talking about:

I mean all of us, no matter how intelligent we think we are or how smart over the machines we think we are. When I think about trust – and I’m coming at this very much from the perspective of someone who runs a creative agency – we’re not involved in building a Large Language Model (LLM); we’re involved in using it, understanding it, and thinking about what the implications [are] if we get this wrong. What does it mean to be creative in the world of LLMs?

Genuine

Being genuine is vital, he argues, as is being human: where does Human Intelligence come into the picture, particularly in relation to creativity? His argument:

There’s a certain parasitic quality to what’s being created. We make films, we’re designers, we’re creators, we’re all those sort of things in the company that I run. We have had to just face the fact that we’re using tools that have hoovered up the work of others and then regenerate it and spit it out. There is an ethical dilemma that we face every day when we use those tools.

His firm has come to the conclusion that it has to be responsible for imposing its own guidelines here to some degree, because there’s not a lot happening elsewhere:

To some extent, we are always ahead of regulation, because the nature of being creative is that you’re always going to be experimenting and trying things, and you want to see what the next big thing is. It’s actually very exciting. So that’s all cool, but we’ve realized that if we want to try and do this ethically, we have to establish some of our own ground rules, even if they’re really basic. Like, let’s try and not prompt with the name of an illustrator that we know, because that’s stealing their intellectual property, or the labor of their creative brains.

I’m not a regulatory expert by any means, but I can say that a lot of the clients we work with, to be fair to them, are also trying to get ahead of where I think we are probably at government level, and they’re creating their own frameworks, their own trust frameworks, to try and address some of these things. Everyone is starting to ask questions, and you don’t want to be the person that’s accidentally created a system where everything is then suable because of what you’ve made or what you’ve generated.

Originality

That’s not necessarily an easy ask, of course. What, for example, do we mean by originality? Mallaghan suggests:

Anyone who’s ever tried to create anything knows you’re trying to break patterns. You’re trying to find or re-mix or mash up something that hasn’t happened before. To some extent, that is a good thing that really we’re talking about pattern matching tools. So generally speaking, it’s used in every part of the creative process now. Most agencies, certainly the big ones, certainly anyone that’s working on a lot of marketing stuff, they’re using it to try and drive efficiencies and get incredible margins. They’re going to be on the race to the bottom.

But originality is hard to quantify. I think that actually it doesn’t happen as much as people think anyway, that originality. When you look at ChatGPT or any of these tools, there’s a lot of interesting new tools that are out there that purport to help you in the quest to come up with ideas, and they can be useful. Quite often, we’ll use them to sift out the crappy ideas, because if ChatGPT or an AI tool can come up with it, it’s probably something that’s happened before, something you probably don’t want to use.

More Human Intelligence is needed, it seems:

What I think any creative needs to understand now is you’re going to have to be extremely interesting, and you’re going to have to push even more humanity into what you do, or you’re going to be easily replaced by these tools that probably shouldn’t be doing all the fun stuff that we want to do. [In terms of ethical questions] there’s a bunch, including the copyright thing, but there’s partly just [questions] around purpose and fun. Like, why do we even do this stuff? Why do we do it? There’s a whole industry that exists for people with wonderful brains, and there’s lots of different types of industries [where you] see different types of brains. But why are we trying to do away with something that allows people to get up in the morning and have a reason to live? That is a big question.

My second ethical thing is, what do we do with the next generation who don’t learn craft and quality, and they don’t go through the same hurdles? They may find ways to use [AI] in ways that we can’t imagine, because that’s what young people do, and I have faith in that. But I also think, how are you going to learn the language that helps you interface with, say, a video model, and know what a camera does, and how to ask for the right things, how to tell a story, and what’s right? All that is an ethical issue, like we might be taking that away from an entire generation.

And there’s one last ‘tough love’ question to be posed:

What if we’re not special? Basically, what if all the patterns that are part of us aren’t that special? The only reason I bring that up is that I think that in every career, you associate your identity with what you do. Maybe we shouldn’t, maybe that’s a bad thing, but I know that creatives really associate with what they do. Their identity is tied up in what it is that they actually do, whether they’re an illustrator or whatever. It is a proper existential crisis to look at it and go, ‘Oh, the thing that I thought was special can be regurgitated pretty easily’… It’s a terrifying thing to stare into the Gorgon and look back at it and think, ‘Where are we going with this?’. By the way, I do think we’re special, but maybe we’re not as special as we think we are. A lot of these patterns can be matched.

My take

This was a candid worldview that raised a number of tough questions – and questions are often so much more interesting than answers, aren’t they? The subject of creativity and copyright has been handled at length on diginomica by Chris Middleton and I think Mallaghan’s comments pretty much chime with most of that.

I was particularly taken by the point about the impact on the younger generation of having at their fingertips AI tools that can ‘do everything, until they can’t’. I recall being horrified a good few years ago when doing a shift in a newsroom of a major tech title and noticing that the flow of copy had suddenly dried up. ‘Where are the stories?’, I shouted. Back came the reply, ‘Oh, the Internet’s gone down’. ‘Then pick up the phone and call people, find some stories,’ I snapped. A sad, baffled young face looked back at me and asked, ‘Who should we call?’. Now apart from suddenly feeling about 103, I was shaken by the fact that as soon as the umbilical cord of the Internet was cut, everyone was rendered helpless.

Take that idea and multiply it a billion-fold when it comes to AI dependency and the future looks scary. Human Intelligence matters.


Preparing Timor-Leste to embrace Artificial Intelligence

UNESCO, in collaboration with the Ministry of Transport and Communications, Catalpa International and a national lead consultant, conducted consultative and validation workshops as part of the implementation of the AI Readiness assessment in Timor-Leste. Held on 8–9 April and 27 May respectively, the workshops convened representatives from government ministries, academia, international organisations and development partners, the Timor-Leste National Commission for UNESCO, civil society, and the private sector for a multi-stakeholder consultation to unpack the current stage of AI adoption and development in the country, guided by UNESCO’s AI Readiness Assessment Methodology (RAM).

In response to growing concerns about the rapid rise of AI, the UNESCO Recommendation on the Ethics of Artificial Intelligence was adopted by 194 Member States in 2021, including Timor-Leste, to ensure ethical governance of AI. To support Member States in implementing this Recommendation, the RAM was developed by UNESCO’s AI experts without borders. It includes a range of quantitative and qualitative questions designed to gather information across different dimensions of a country’s AI ecosystem, including legal and regulatory, social and cultural, economic, scientific and educational, technological and infrastructural aspects.

By compiling comprehensive insights into these areas, the final RAM report helps identify institutional and regulatory gaps, which can assist the government with the necessary AI governance and enable UNESCO to provide tailored support that promotes an ethical AI ecosystem aligned with the Recommendation.

The first day of the workshop was opened by Timor-Leste’s Minister of Transport and Communications, H.E. Miguel Marques Gonçalves Manetelu. In his opening remarks, Minister Manetelu highlighted the pivotal role of AI in shaping the future. He emphasised that the current global trajectory is not only driving the digitalisation of work but also enabling more effective and productive outcomes.


Experts gather to discuss ethics, AI and the future of publishing

Publishing stands at a pivotal juncture, said Jeremy North, president of Global Book Business at Taylor & Francis Group, addressing delegates at the 3rd International Conference on Publishing Education in Beijing. Digital intelligence is fundamentally transforming the sector — and this revolution will inevitably create “AI winners and losers”.

True winners, he argued, will be those who embrace AI not as a replacement for human insight but as a tool that strengthens publishing’s core mission: connecting people through knowledge. The key is balance, North said, using AI to enhance creativity without diminishing human judgment or critical thinking.

This vision set the tone for the event where the Association for International Publishing Education was officially launched — the world’s first global alliance dedicated to advancing publishing education through international collaboration.

Unveiled at the conference cohosted by the Beijing Institute of Graphic Communication and the Publishers Association of China, the AIPE brings together nearly 50 member organizations with a mission to foster joint research, training, and innovation in publishing education.

Tian Zhongli, president of BIGC, stressed the need to anchor publishing education in ethics and humanistic values and reaffirmed BIGC’s commitment to building a global talent platform through AIPE.

BIGC will deepen academic-industry collaboration through AIPE to provide a premium platform for nurturing high-level, holistic, and internationally competent publishing talent, he added.

Zhang Xin, secretary of the CPC Committee at BIGC, emphasized that AIPE is expected to help globalize Chinese publishing scholarship, contribute new ideas to the industry, and cultivate a new generation of publishing professionals for the digital era.

Themed “Mutual Learning and Cooperation: New Ecology of International Publishing Education in the Digital Intelligence Era”, the conference also tackled a wide range of challenges and opportunities brought on by AI — from ethical concerns and content ownership to protecting human creativity and rethinking publishing values in higher education.

Wu Shulin, president of the Publishers Association of China, cautioned that while AI brings major opportunities, “we must not overlook the ethical and security problems it introduces”.

Catriona Stevenson, deputy CEO of the UK Publishers Association, echoed this sentiment. She highlighted how British publishers are adopting AI to amplify human creativity and productivity, while calling for global cooperation to protect intellectual property and combat infringement by AI tools.

The conference aims to explore innovative pathways for the publishing industry and education reform, discuss emerging technological trends, advance higher education philosophies and talent development models, promote global academic exchange and collaboration, and empower knowledge production and dissemination through publishing education in the digital intelligence era.

yangyangs@chinadaily.com.cn
