Events & Conferences

Economics Nobelist on causal inference

Since 2013, Amazon has held an annual internal conference, the Amazon Machine Learning Conference (AMLC), where machine learning practitioners from around the company come together to share their work, teach and learn new techniques, and discuss best practices.

At the third AMLC, in 2015, Guido Imbens, a professor of economics at the Stanford University Graduate School of Business, gave a popular tutorial on causality and machine learning. Nine years and one Nobel Prize for economics later, Imbens — now in his tenth year as an Amazon academic research consultant — was one of the keynote speakers at the 2024 AMLC, held in October.

In his talk, Imbens discussed causal inference, a mainstay of his research for more than 30 years and the topic that the Nobel committee highlighted in its prize citation. In particular, he considered so-called panel data, in which multiple units — say, products, customers, or geographic regions — and outcomes — say, sales or clicks — are observed at discrete points in time.

Over particular time spans, some units receive a treatment — say, a special product promotion or new environmental regulation — whose effects are reflected in the outcome measurements. Causal inference is the process of determining how much of the change in outcomes over time can be attributed to the treatment. This means adjusting for spurious correlations that result from general trends in the data, which can be inferred from trends among the untreated (control) units.

Imbens began by discussing the value of his work at Amazon. “I started working with people here at Amazon in 2014, and it’s been a real pleasure and a real source of inspiration for my research, interacting with the people here and seeing what kind of problems they’re working on, what kind of questions they have,” he said. “I’ve always found it very useful in my econometric, in my statistics, in my methodological research to talk to people who are using these methods in practice, who are actually working with these things on the ground. So it’s been a real privilege for the last 10 years doing that with the people here at Amazon.”

Panel data

Then, with no further ado, he launched into the substance of his talk. Panel data, he explained, is generally represented by a pair of matrices, whose rows represent units and whose columns represent points in time. In one matrix, the entries represent measurements made on particular units at particular times; the other matrix takes only binary values, which represent whether a given unit was subject to treatment during the corresponding time span.

Ideally, for a given unit and a given time span, we would run an experiment in which the unit went untreated; then we would back time up and run the experiment again, with the treatment. But of course, time can’t be backed up. So instead, for each treated cell in the matrix, we estimate what the relevant measurement would have been if the treatment hadn’t been applied, and we base that estimate on the outcomes for other units and time periods.

For ease of explanation, Imbens said, he considered the case in which only one unit was treated, for only one time interval: “Once I have methods that work effectively for that case, the particular methods I’m going to suggest extend very naturally to the more-general assignment mechanism,” he said. “This is a very common setup.”

Control estimates

Imbens described five standard methods for estimating what would have been the outcome if a treated unit had been untreated during the same time period. The first method, which is very common in empirical work in economics, is known as difference of differences. It involves a regression analysis of all the untreated data up to the treatment period; the regression function can then be used to estimate the outcome for the treated unit if it hadn’t been treated.
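For the single-treated-cell case, a difference-of-differences counterfactual can be worked out by hand. The sketch below is illustrative only: it assumes a simple additive model (a unit effect plus a time effect) on a balanced panel, and the function name `did_counterfactual` and the toy numbers are made up for this example rather than taken from the talk.

```python
from statistics import mean


def did_counterfactual(outcomes, treated_unit, treated_period):
    """Estimate the untreated outcome for one treated cell.

    Assumes an additive unit-plus-time model fitted on a balanced panel in
    which only outcomes[treated_unit][treated_period] is treated and the
    treated period is the last column. Under those assumptions the
    least-squares prediction is:
      treated unit's pre-treatment mean
      + control units' mean in the treated period
      - control units' pre-treatment mean.
    """
    controls = [row for i, row in enumerate(outcomes) if i != treated_unit]
    pre_periods = range(treated_period)

    treated_pre = mean(outcomes[treated_unit][t] for t in pre_periods)
    controls_post = mean(row[treated_period] for row in controls)
    controls_pre = mean(row[t] for row in controls for t in pre_periods)
    return treated_pre + controls_post - controls_pre


# Toy panel (made-up numbers): rows are units, columns are time periods.
# The last unit is treated in the last period only.
panel = [
    [1.0, 2.0, 3.0],   # control unit
    [2.0, 3.0, 4.0],   # control unit
    [5.0, 6.0, 10.0],  # treated unit; 10.0 is the observed treated outcome
]
counterfactual = did_counterfactual(panel, treated_unit=2, treated_period=2)
effect = panel[2][2] - counterfactual
```

In this toy panel the controls rise by one unit per period, so the treated unit's counterfactual continues that trend and the remainder of the observed outcome is attributed to the treatment.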

The second method is called synthetic control, in which a control version of the treated unit is synthesized as a weighted average of the other control units.

“One of the canonical examples is one where he [Alberto Abadie, an Amazon Scholar, pioneer of synthetic control, and long-time collaborator of Imbens] is interested in estimating the effect of an anti-smoking regulation in California that went into effect in 1989,” Imbens explained. “So he tries to find the convex combination of the other states such that smoking rates for that convex combination match the actual smoking rates in California prior to 1989 — say, 40% Arizona, 30% Utah, 10% Washington and 20% New York. Once he has those weights, he then estimates the counterfactual smoking rate in California.”
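To make the idea of a convex combination concrete, here is a minimal sketch of fitting synthetic-control weights. It is not Abadie's implementation: the optimizer (exponentiated-gradient descent) was picked only because it keeps the weights non-negative and summing to one without a constrained solver, and the function name and all numbers are hypothetical.

```python
import math


def synthetic_control_weights(control_pre, treated_pre, steps=500, lr=0.1):
    """Fit simplex weights so that the weighted average of the control
    units' pre-treatment outcomes tracks the treated unit's outcomes.

    Minimizes squared error with exponentiated-gradient updates, then
    renormalizes so the weights stay non-negative and sum to 1.
    """
    n, periods = len(control_pre), len(treated_pre)
    weights = [1.0 / n] * n
    for _ in range(steps):
        residuals = [
            sum(weights[j] * control_pre[j][t] for j in range(n)) - treated_pre[t]
            for t in range(periods)
        ]
        gradient = [
            2.0 * sum(residuals[t] * control_pre[j][t] for t in range(periods))
            for j in range(n)
        ]
        weights = [w * math.exp(-lr * g) for w, g in zip(weights, gradient)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return weights


# Made-up pre-treatment series. The treated unit was constructed as 25% of
# the first control plus 75% of the second, so the fit should recover that.
control_pre = [[1.0, 2.0, 3.0], [3.0, 2.0, 1.0]]
treated_pre = [2.5, 2.0, 1.5]
weights = synthetic_control_weights(control_pre, treated_pre)

# The counterfactual for the treated period is the same weighted average,
# applied to the controls' outcomes in that period (illustrative values).
control_post = [4.0, 0.0]
counterfactual = sum(w * y for w, y in zip(weights, control_post))
```

Once the weights are fixed on pre-treatment data, estimating the counterfactual is a single weighted sum, which is what makes the method easy to explain.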

The third method, which Imbens and a colleague had proposed in 2016, adds an intercept to the synthetic-control equation; that is, it specifies an output value for the function when all the unit measurements are zero.

The final two methods were variations on difference of differences that added another term to the function to be optimized: a low-rank matrix, which approximates the results of the outcomes matrix at a lower resolution. The first of these variations — the matrix completion method — simply adds the matrix, with a weighting factor, to the standard difference-of-differences function.

The second variation — synthetic difference of differences — weights the distances between the unit-time measurements and the regression curve according to the control units’ similarities to the unit that received the intervention.

“In the context of the smoking example,” Imbens said, “you assign more weight to units that are similar to California, that match California better. So rather than pretending that Delaware or Alaska is very similar to California — other than in their level — you only put weight on states that are very similar to California.”

Drawbacks

Having presented these five methods, Imbens went on to explain what he found wrong with them. The first problem, he said, is that they treat the outcome and treatment matrices as both row (units) and column (points in time) exchangeable. That is, the methods produce the same results whatever the ordering of rows and columns in the matrices.

“The unit exchangeability here seems very reasonable,” Imbens said. “We may have some other covariates, but in principle, there’s nothing that distinguishes these units or suggests treating them in a way that’s different from exchangeable.

“But for the time dimension, it’s different. You would think that if we’re trying to predict outcomes in 2020, having outcomes measured in 2019 is going to be much more useful than having outcomes measured in 1983. We think that there’s going to be correlation over time that makes predictions based on values from 2019 much more likely to be accurate than predictions based on values from 1983.”

The second problem, Imbens said, is that while the methods work well in the special case he considered, where only a single unit-time pair is treated — and indeed, they work well under any conditions in which the treatment assignments have a clearly discernible structure — they struggle in cases where the treatment assignments are more random. That’s because, with random assignment, units drop in and out of the control group from one time period to the next, making accurate regression analysis difficult.

A new estimator

So Imbens proposed a new estimator, one based on the matrix completion method, but with additional terms that apply two sets of weights to each control unit’s contribution to the regression analysis. The first weight reduces the contribution of a unit measurement according to its distance in time from the measurement of the treated unit — that is, it privileges more recent measurements.

The second weight reduces the contributions of control unit measurements according to their absolute distance from the measurement of the treated unit. There, the idea is to limit the influence of outliers in sparse datasets — that is, datasets that control units are constantly dropping in and out of.
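The talk summary does not give the functional form of these weights, so the following is only a sketch of what such weights could look like. The exponential kernels, the `decay` and `bandwidth` parameters, and the choice to multiply the two weights are all assumptions made for illustration.

```python
import math


def time_weight(period, treated_period, decay=0.1):
    """Shrink an observation's weight as it gets further in time from the
    treated period (assumed exponential decay)."""
    return math.exp(-decay * abs(treated_period - period))


def similarity_weight(control_value, treated_value, bandwidth=1.0):
    """Shrink a control observation's weight as its value gets further
    from the treated unit's value (assumed exponential kernel)."""
    return math.exp(-abs(control_value - treated_value) / bandwidth)


def combined_weight(period, treated_period, control_value, treated_value):
    """Combine both weights by multiplication (an assumption)."""
    return time_weight(period, treated_period) * similarity_weight(
        control_value, treated_value
    )


# With the treated period set to 2020, a 2019 observation counts for far
# more than a 1983 observation.
recent = time_weight(2019, 2020)
distant = time_weight(1983, 2020)
```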

Imbens then compared the performance of his new estimator to those of the other five, on nine existing datasets that had been chosen to test the accuracy of prior estimators. On eight of the nine datasets, Imbens’s estimator outperformed all five of its predecessors, sometimes by a large margin; on the ninth dataset, it finished a close second to the difference-of-differences approach — which, however, was the last-place finisher on several other datasets.

“I don’t want to push this as a particular estimator that you should use in all settings,” Imbens explained. “I want to mainly show that even simple changes to existing classes of estimators can actually do substantially better than the previous estimators by incorporating the time dimension in a more satisfactory way.”

For purposes of causal inference, however, the accuracy of an estimator is not the only consideration. The reliability of the estimator — its power, in the statistical sense — also depends on its variance, the degree to which its margin of error deviates from the mean in particular instances. The lower the variance, the more likely the estimator is to provide accurate estimates.

Variance of variance

For the rest of his talk, Imbens discussed methods of estimating the variance of counterfactual estimators. Here things get a little confusing, because the variance estimators themselves display variance. Imbens advocated the use of conditional variance estimators, which hold some variables fixed — in the case of panel data, unit, time, or both — and estimate the variance of the free variables. Counterintuitively, higher-variance variance estimators, Imbens said, offer more power.

“In general, you should prefer the conditional variance because it adapts more to the particular dataset you’re analyzing,” Imbens explained. “It’s going to give you more power to find the treatment effects. Whereas the marginal variance” — an alternative and widely used method for estimating variance — “has the lowest variance itself, and it’s going to have the lowest power in general for detecting treatment effects.”

Imbens then presented some experimental results using synthetic panel data that indicated that, indeed, in cases where data is heteroskedastic — meaning that the variance of one variable increases with increasing values of the other — variance estimators that themselves use conditional variance have greater statistical power than other estimators.

“There’s clearly more to be done, both in terms of estimation, despite all the work that’s been done in the last couple of years in this area, and in terms of variance estimation,” Imbens concluded. “And where I think the future lies for these models is a combination of the outcome modeling by having something flexible in terms of both factor models as well as weights that ensure that you’re doing the estimation only locally. And we need to do more on variance estimation, keeping in mind both power and validity, with some key role for modeling some of the heteroskedasticity.”


Revolutionizing warehouse automation with scientific simulation

Modern warehouses rely on complex networks of sensors to enable safe and efficient operations. These sensors must detect everything from packages and containers to robots and vehicles, often in changing environments with varying lighting conditions. More important for Amazon, we need to be able to detect barcodes in an efficient way.

The Amazon Robotics ID (ARID) team focuses on solving this problem. When we first started working on it, we faced a significant bottleneck: optimizing sensor placement required weeks or months of physical prototyping and real-world testing, severely limiting our ability to explore innovative solutions.

To transform this process, we developed Sensor Workbench (SWB), a sensor simulation platform built on NVIDIA’s Isaac Sim that combines parallel processing, physics-based sensor modeling, and high-fidelity 3-D environments. By providing virtual testing environments that mirror real-world conditions with unprecedented accuracy, SWB allows our teams to explore hundreds of configurations in the same amount of time it previously took to test just a few physical setups.

Camera and target selection/positioning

Sensor Workbench users can select different cameras and targets and position them in 3-D space to receive real-time feedback on barcode decodability.

Three key innovations enabled SWB: a specialized parallel-computing architecture that performs simulation tasks across the GPU; a custom CAD-to-OpenUSD (Universal Scene Description) pipeline; and the use of OpenUSD as the ground truth throughout the simulation process.

Parallel-computing architecture

Our parallel-processing pipeline leverages NVIDIA’s Warp library with custom computation kernels to maximize GPU utilization. By maintaining 3-D objects persistently in GPU memory and updating transforms only when objects move, we eliminate redundant data transfers. We also perform computations only when needed — when, for instance, a sensor parameter changes, or something moves. By these means, we achieve real-time performance.
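The "compute only when something changed" idea can be shown with a small dirty-check cache. This is a plain-Python sketch, not Warp or Isaac Sim code; the class `CachedMetric`, the function `toy_metric`, and the parameter names are hypothetical stand-ins for an expensive GPU computation.

```python
class CachedMetric:
    """Recompute a metric only when one of its inputs has changed."""

    def __init__(self, compute):
        self._compute = compute
        self._inputs = None
        self._value = None
        self.evaluations = 0  # how many times the expensive function ran

    def get(self, position, focal_length):
        inputs = (position, focal_length)
        if inputs != self._inputs:
            # An object moved or a sensor parameter changed: recompute.
            self._value = self._compute(position, focal_length)
            self._inputs = inputs
            self.evaluations += 1
        return self._value


def toy_metric(position, focal_length):
    # Placeholder for an expensive computation; the formula is made up.
    x, y, z = position
    return focal_length / (1.0 + x * x + y * y + z * z)


metric = CachedMetric(toy_metric)
first = metric.get((0.0, 0.0, 1.0), 4.0)   # computed
second = metric.get((0.0, 0.0, 1.0), 4.0)  # unchanged inputs: served from cache
third = metric.get((1.0, 0.0, 1.0), 4.0)   # object moved: recomputed
```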

Visualization methods

Sensor Workbench users can pick sphere- or plane-based visualizations, to see how the positions and rotations of individual barcodes affect performance.

This architecture allows us to perform complex calculations for multiple sensors simultaneously, enabling instant feedback in the form of immersive 3-D visuals. As teams adjust sensor positions and parameters in the environment, those visuals represent the metrics that barcode-detection machine-learning models need in order to work.

CAD to USD

Our second innovation involved developing a custom CAD-to-OpenUSD pipeline that automatically converts detailed warehouse models into optimized 3-D assets. Our CAD-to-USD conversion pipeline replicates the structure and content of models created in the modeling program SolidWorks with a 1:1 mapping. We start by extracting essential data — including world transforms, mesh geometry, material properties, and joint information — from the CAD file. The full assembly-and-part hierarchy is preserved so that the resulting USD stage mirrors the CAD tree structure exactly.

To ensure modularity and maintainability, we organize the data into separate USD layers covering mesh, materials, joints, and transforms. This layered approach ensures that the converted USD file faithfully retains the asset structure, geometry, and visual fidelity of the original CAD model, enabling accurate and scalable integration for real-time visualization, simulation, and collaboration.

OpenUSD as ground truth

The third important factor was our novel approach to using OpenUSD as the ground truth throughout the entire simulation process. We developed custom schemas that extend beyond basic 3-D-asset information to include enriched environment descriptions and simulation parameters. Our system continuously records all scene activities — from sensor positions and orientations to object movements and parameter changes — directly into the USD stage in real time. We even maintain user interface elements and their states within USD, enabling us to restore not just the simulation configuration but the complete user interface state as well.

This architecture ensures that when USD initial configurations change, the simulation automatically adapts without requiring modifications to the core software. By maintaining this live synchronization between the simulation state and the USD representation, we create a reliable source of truth that captures the complete state of the simulation environment, allowing users to save and re-create simulation configurations exactly as needed. The interfaces simply reflect the state of the world, creating a flexible and maintainable system that can evolve with our needs.

Application

With SWB, our teams can now rapidly evaluate sensor mounting positions and verify overall concepts in a fraction of the time previously required. More importantly, SWB has become a powerful platform for cross-functional collaboration, allowing engineers, scientists, and operational teams to work together in real time, visualizing and adjusting sensor configurations while immediately seeing the impact of their changes and sharing their results with each other.

New perspectives

In projection mode, an explicit target is not needed. Instead, Sensor Workbench uses the whole environment as a target, projecting rays from the camera to identify locations for barcode placement. Users can also switch between a comprehensive three-quarters view and the perspectives of individual cameras.

Due to the initial success in simulating barcode-reading scenarios, we have expanded SWB’s capabilities to incorporate high-fidelity lighting simulations. This allows teams to iterate on new baffle and light designs, further optimizing the conditions for reliable barcode detection, while ensuring that lighting conditions are safe for human eyes, too. Teams can now explore various lighting conditions, target positions, and sensor configurations simultaneously, gleaning insights that would take months to accumulate through traditional testing methods.

Looking ahead, we are working on several exciting enhancements to the system. Our current focus is on integrating more-advanced sensor simulations that combine analytical models with real-world measurement feedback from the ARID team, further increasing the system’s accuracy and practical utility. We are also exploring the use of AI to suggest optimal sensor placements for new station designs, which could potentially identify novel configurations that users of the tool might not consider.

Additionally, we are looking to expand the system to serve as a comprehensive synthetic-data generation platform. This will go beyond just simulating barcode-detection scenarios, providing a full digital environment for testing sensors and algorithms. This capability will let teams validate and train their systems using diverse, automatically generated datasets that capture the full range of conditions they might encounter in real-world operations.

By combining advanced scientific computing with practical industrial applications, SWB represents a significant step forward in warehouse automation development. The platform demonstrates how sophisticated simulation tools can dramatically accelerate innovation in complex industrial systems. As we continue to enhance the system with new capabilities, we are excited about its potential to further transform and set new standards for warehouse automation.


Enabling Kotlin Incremental Compilation on Buck2

The Kotlin incremental compiler has been a true gem for developers chasing faster compilation since its introduction in build tools. Now, we’re excited to bring its benefits to Buck2 – Meta’s build system – to unlock even more speed and efficiency for Kotlin developers.

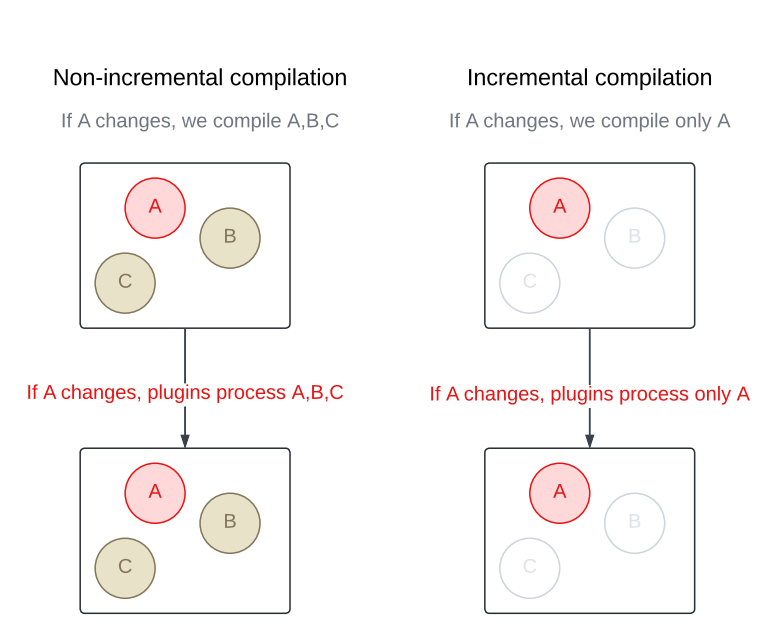

Unlike a traditional compiler that recompiles an entire module every time, an incremental compiler focuses only on what was changed. This cuts down compilation time in a big way, especially when modules contain a large number of source files.

Buck2 promotes small modules as a key strategy for achieving fast build times. Our codebase followed that principle closely, and for a long time, it worked well. With only a handful of files in each module, and Buck2’s support for fast incremental builds and parallel execution, incremental compilation didn’t seem like something we needed.

But, let’s be real: Codebases grow, teams change, and reality sometimes drifts away from the original plan. Over time, some modules started getting bigger – either from legacy or just organic growth. And while big modules were still the exception, they started having quite an impact on build times.

So we gave the Kotlin incremental compiler a closer look – and we’re glad we did. The results? Some critical modules now build up to 3x faster. That’s a big win for developer productivity and overall build happiness.

Curious about how we made it all work in Buck2? Keep reading. We’ll walk you through the steps we took to bring the Kotlin incremental compiler to life in our Android toolchain.

Step 1: Integrating Kotlin’s Build Tools API

As of Kotlin 2.2.0, the only guaranteed public contract to use the compiler is through the command-line interface (CLI). But since the CLI doesn’t support incremental compilation (at least for now), it didn’t meet our needs. Alternatively, we could integrate the Kotlin incremental compiler directly via the internal compiler’s components – APIs that are technically accessible but not intended for public use. However, relying on them would’ve made our toolchain fragile and likely to break with every Kotlin update since there’s no guarantee of backward compatibility. That didn’t seem like the right path either.

Then we came across the Build Tools API (KEEP), introduced in Kotlin 1.9.20 as the official integration point for the compiler – including support for incremental compilation. Although the API was still marked as experimental, we decided to give it a try. We knew it would eventually stabilize, and saw it as a great opportunity to get in early, provide feedback, and help shape its direction. Compared to using internal components, it offered a far more sustainable and future-proof approach to integration.

⚠️ Depending on kotlin-compiler? Watch out!

In the Java world, a shaded library is a modified version of the library where the class and package names are changed. This process – called shading – is a handy way to avoid classpath conflicts, prevent version clashes between libraries, and keep internal details from leaking out.

Here’s a quick example:

- Unshaded (original) class: com.intellij.util.io.DataExternalizer

- Shaded class: org.jetbrains.kotlin.com.intellij.util.io.DataExternalizer

The Build Tools API depends on the shaded version of the Kotlin compiler (kotlin-compiler-embeddable). But our Android toolchain was historically built with the unshaded one (kotlin-compiler). That mismatch led to java.lang.NoClassDefFoundError crashes when testing the integration because the shaded classes simply weren’t on the classpath.

Replacing the unshaded compiler across the entire Android toolchain would’ve been a big effort. So to keep moving forward, we went with a quick workaround: We unshaded the Build Tools API instead. 🙈 Using the jarjar library, we stripped the org.jetbrains.kotlin prefix from class names and rebuilt the library.

Don’t worry, once we had a working prototype and confirmed everything behaved as expected, we circled back and did it right – fully migrating our toolchain to use the shaded Kotlin compiler. That brought us back in line with the API’s expectations and gave us a more stable setup for the future.
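The renaming that shading performs, and that the unshading workaround reversed, can be sketched in a few lines. This is only an illustration of the name mapping, not how jarjar works internally; the `RELOCATED_PACKAGES` list is an assumption that contains just the package from the example above.

```python
SHADE_PREFIX = "org.jetbrains.kotlin."
# Packages assumed to be relocated under the prefix (illustrative list).
RELOCATED_PACKAGES = ("com.intellij.",)


def shade(class_name):
    """Prepend the shading prefix to classes from relocated packages."""
    if class_name.startswith(RELOCATED_PACKAGES):
        return SHADE_PREFIX + class_name
    return class_name


def unshade(class_name):
    """Strip the shading prefix, but only from relocated packages, so that
    classes that natively live under the prefix keep their names."""
    if class_name.startswith(SHADE_PREFIX):
        remainder = class_name[len(SHADE_PREFIX):]
        if remainder.startswith(RELOCATED_PACKAGES):
            return remainder
    return class_name


original = "com.intellij.util.io.DataExternalizer"
shaded = shade(original)
```

A class compiled against the shaded name cannot be resolved when only the unshaded name is on the classpath, which is the mismatch behind the `NoClassDefFoundError` described above.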

Step 2: Keeping previous output around for the incremental compiler

To compile incrementally, the Kotlin compiler needs access to the output from the previous build. Simple enough, but Buck2 deletes that output by default before rebuilding a module.

With incremental actions, you can configure Buck2 to skip the automatic cleanup of previous outputs. This gives your build actions access to everything from the last run. The tradeoff is that it’s now up to you to figure out what’s still useful and manually clean up the rest. It’s a bit more work, but it’s exactly what we needed to make incremental compilation possible.

Step 3: Making the incremental compiler cache relocatable

At first, this might not seem like a big deal. You’re not planning to move your codebase around, so why worry about making the cache relocatable, right?

Well… that’s until you realize you’re no longer in a tiny team, and you’re definitely not the only one building the project. Suddenly, it does matter.

Buck2 supports distributed builds, which means your builds don’t have to run only on your local machine. They can be executed elsewhere, with the results sent back to you. And if your compiler cache isn’t relocatable, this setup can quickly lead to trouble – from conflicting overloads to strange ambiguity errors caused by mismatched paths in cached data.

So we made sure to configure the root project directory and the build directory explicitly in the incremental compilation settings. This keeps the compiler cache stable and reliable, no matter who runs the build or where it happens.
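To see why the root directory matters, consider cache entries keyed by file path. The sketch below is not the Build Tools API; it only illustrates, with hypothetical paths and helper names, how storing paths relative to a configured project root makes the same cache usable on a different machine.

```python
from pathlib import PurePosixPath


def to_relocatable(absolute_path, project_root):
    """Store a path relative to the project root so the cache entry does
    not depend on where the checkout lives."""
    return PurePosixPath(absolute_path).relative_to(project_root).as_posix()


def from_relocatable(relative_path, project_root):
    """Resolve a cached relative path against the current project root."""
    return (PurePosixPath(project_root) / relative_path).as_posix()


# The same source file as seen on a developer machine and on a remote worker.
local_key = to_relocatable("/home/alice/repo/app/Main.kt", "/home/alice/repo")
remote_key = to_relocatable("/mnt/worker7/repo/app/Main.kt", "/mnt/worker7/repo")
restored = from_relocatable(local_key, "/mnt/worker7/repo")
```

With absolute paths the two machines would produce different keys for the same file, and cached data from one would not match the other.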

Step 4: Configuring the incremental compiler

In a nutshell, to decide what needs to be recompiled, the Kotlin incremental compiler looks for changes in two places:

- Files within the module being rebuilt.

- The module’s dependencies.

Once the changes are found, the compiler figures out which files in the module are affected – whether by direct edits or through updated dependencies – and recompiles only those.

To get this process rolling, the compiler needs just a little nudge to understand how much work it really has to do.

So let’s give it that nudge!

Tracking changes inside the module

When it comes to tracking changes, you’ve got two options: You can either let the compiler do its magic and detect changes automatically, or you can give it a hand by passing a list of modified files yourself. The first option is great if you don’t know which files have changed or if you just want to get something working quickly (like we did during prototyping). However, if you’re on a Kotlin version earlier than 2.1.20, you have to provide this information yourself. Automatic source change detection via the Build Tools API isn’t available prior to that. Even with newer versions, if the build tool already has the change list before compilation, it’s still worth using it to optimize the process.

This is where Buck’s incremental actions come in handy again! Not only can we preserve the output from the previous run, but we also get hash digests for every action input. By comparing those hashes with the ones from the last build, we can generate a list of changed files. From there, we pass that list to the compiler to kick off incremental compilation right away – no need for the compiler to do any change detection on its own.
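The digest comparison described above amounts to a dictionary diff. Here is a minimal sketch, assuming each build records a mapping from input path to content digest; the helper names and file contents are hypothetical, and the real metadata format that Buck2 provides is not shown here.

```python
import hashlib


def digest(content):
    """Content hash standing in for the digest recorded for an input."""
    return hashlib.sha256(content).hexdigest()


def diff_inputs(previous, current):
    """Compare path-to-digest maps from two builds and classify the changes."""
    modified = sorted(
        path for path in current
        if path in previous and previous[path] != current[path]
    )
    added = sorted(path for path in current if path not in previous)
    removed = sorted(path for path in previous if path not in current)
    return {"modified": modified, "added": added, "removed": removed}


previous = {
    "A.kt": digest(b"class A"),
    "B.kt": digest(b"class B"),
    "C.kt": digest(b"class C"),
}
current = {
    "A.kt": digest(b"class A"),
    "B.kt": digest(b"class B2"),  # edited
    "D.kt": digest(b"class D"),   # new file; C.kt was deleted
}
changes = diff_inputs(previous, current)
```

The resulting lists are what a build tool could hand to the compiler in place of letting it detect changes itself.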

Tracking changes in dependencies

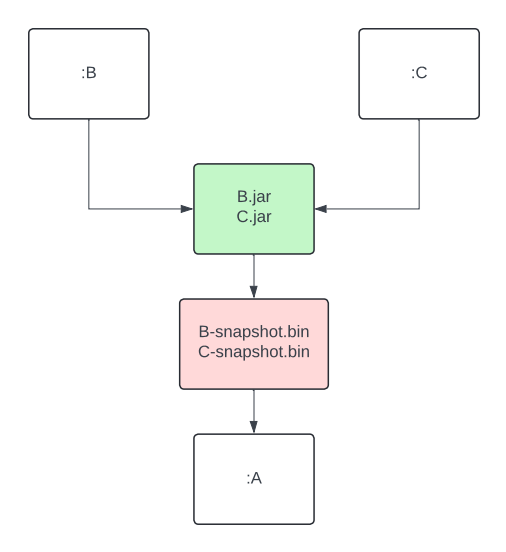

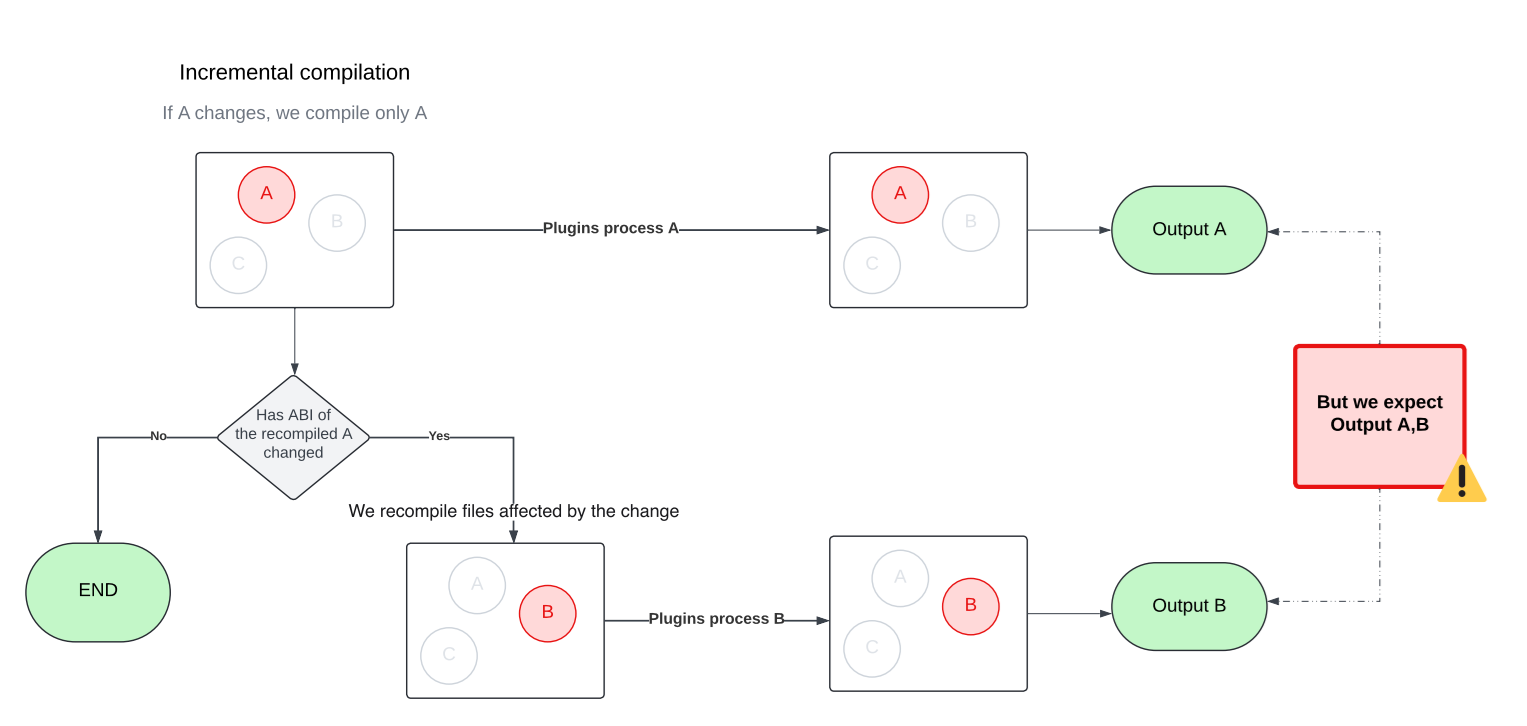

Sometimes it’s not the module itself that changes, it’s something the module depends on. In these cases, the compiler relies on classpath snapshots. These snapshots capture the Application Binary Interface (ABI) of a library. By comparing the current snapshots to the previous ones, the compiler can detect changes in dependencies and figure out which files in your module are affected. This adds an extra layer of filtering on top of standard compilation avoidance.
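As a rough mental model, a snapshot can be thought of as a map from class name to a hash of that class's ABI. The sketch below uses that simplified model, which is an assumption and not the compiler's real snapshot format, together with a hypothetical usage map from source files to the dependency classes they reference.

```python
def changed_classes(previous_snapshot, current_snapshot):
    """Classes whose ABI hash was added, removed, or changed."""
    all_classes = previous_snapshot.keys() | current_snapshot.keys()
    return {
        name for name in all_classes
        if previous_snapshot.get(name) != current_snapshot.get(name)
    }


def affected_sources(changed, usages):
    """Source files that reference at least one changed class."""
    return sorted(src for src, used in usages.items() if changed & set(used))


# Hypothetical snapshots: only lib.Parser changed its ABI between builds.
previous_snapshot = {"lib.Parser": "abi-1", "lib.Lexer": "abi-7"}
current_snapshot = {"lib.Parser": "abi-2", "lib.Lexer": "abi-7"}
usages = {"Main.kt": ["lib.Parser"], "Util.kt": ["lib.Lexer"], "Other.kt": []}

changed = changed_classes(previous_snapshot, current_snapshot)
to_recompile = affected_sources(changed, usages)
```

A dependency change that leaves every ABI hash untouched, such as an edit inside a method body, produces an empty set here, so nothing in the consuming module needs to be recompiled.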

In Buck2, we added a dedicated action to generate classpath snapshots from library outputs. This artifact is then passed as an input to the consuming module, right alongside the library’s compiled output. The best part? Since it’s a separate action, it can be run remotely or be pulled from cache, so your machine doesn’t have to do the heavy lifting of extracting ABI at this step.

If only your module changes and your dependencies do not, the API also lets you skip the snapshot comparison entirely, provided your build tool handles the dependency analysis on its own. Since we already had the necessary data from Buck2’s incremental actions, adding this optimization was almost free.

Step 5: Making compiler plugins work with the incremental compiler

One of the biggest challenges we faced when integrating the incremental compiler was making it play nicely with our custom compiler plugins, many of which are important to our build optimization strategy. This step was necessary for unlocking the full performance benefits of incremental compilation, but it came with two major issues we needed to solve.

🚨 Problem 1: Incomplete results

As we already know, the input to the incremental compiler does not have to include all Kotlin source files. Our plugins weren’t designed for this and ended up producing incomplete results when run on just a subset of files. We had to make them incremental as well so they could handle partial inputs correctly.

🚨 Problem 2: Multiple rounds of compilation

The Kotlin incremental compiler doesn’t just recompile the files that changed in a module. It may also need to recompile other files in the same module that are affected by those changes. Figuring out the exact set of affected files is tricky, especially when circular dependencies come into play. To handle this, the incremental compiler approximates the affected set by compiling in multiple rounds within a single build.

💡 Curious how that works under the hood? The Kotlin blog on fast compilation has a great deep dive that’s worth checking out.

This behavior comes with a side effect, though. Since the compiler may run in multiple rounds with different sets of files, compiler plugins can also be triggered multiple times, each time with a different input. That can be problematic, as later plugin runs may override outputs produced by earlier ones. To avoid this, we updated our plugins to accumulate their results across rounds rather than replacing them.
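The difference between replacing and accumulating can be shown with a toy model in which each compilation round hands a plugin a different subset of files. The class name, file names, and generated entries below are hypothetical; the actual plugins are not described in enough detail to reproduce.

```python
class AccumulatedOutput:
    """Collects plugin results across compilation rounds, keyed by source
    file, so that a later round updates entries instead of replacing all."""

    def __init__(self):
        self._by_source = {}

    def record_round(self, round_results):
        # A file recompiled in a later round overwrites only its own entry.
        self._by_source.update(round_results)

    def merged(self):
        return dict(self._by_source)


round_1 = {"A.kt": ["A_Generated"]}
round_2 = {"B.kt": ["B_Generated"], "C.kt": ["C_Generated"]}

# Naive behavior: the last round's output replaces everything before it.
replaced = dict(round_2)

# Accumulating behavior: results from every round survive.
output = AccumulatedOutput()
output.record_round(round_1)
output.record_round(round_2)
accumulated = output.merged()
```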

Step 6: Verifying the functionality of annotation processors

Most of our annotation processors use Kotlin Symbol Processing (KSP2), which made this step pretty smooth. KSP2 is designed as a standalone tool that uses the Kotlin Analysis API to analyze source code. Unlike compiler plugins, it runs independently from the standard compilation flow. Thanks to this setup, we were able to continue using KSP2 without any changes.

💡 Bonus: KSP2 comes with its own built-in incremental processing support. It’s fully self-contained and doesn’t depend on the incremental compiler at all.

Before we adopted KSP2 (or when we were using an older version of the Kotlin Annotation Processing Tool (KAPT), which operates as a plugin), our annotation processors ran in a separate step dedicated solely to annotation processing. That step ran before the main compilation and was always non-incremental.

Step 7: Enabling compilation against ABI

To maximize cache hits, Buck2 builds Android modules against the class ABI instead of the full JAR. For Kotlin targets, we use the jvm-abi-gen compiler plugin to generate class ABI during compilation.

But once we turned on incremental compilation, a couple of new challenges popped up:

- The jvm-abi-gen plugin currently lacks direct support for incremental compilation, which ties back to the issues we mentioned earlier with compiler plugins.

- ABI extraction now happens twice – once during compilation via jvm-abi-gen, and again when the incremental compiler creates classpath snapshots.

In theory, both problems could be solved by switching to full JAR compilation and relying on classpath snapshots to maintain cache hits. While that could work in principle, it would mean giving up some of the build optimizations we’ve already got in place – a trade-off that needs careful evaluation before making any changes.

For now, we’ve implemented a custom (yet suboptimal) solution that merges the newly generated ABI with the previous result. It gets the job done, but we’re still actively exploring better long-term alternatives.

Ideally, we’d be able to reuse the information already collected for classpath snapshots or, even better, have this kind of support built directly into the Kotlin compiler. There’s an open ticket for that: KT-62881. Fingers crossed!

Step 8: Testing

Measuring the impact of build changes is not an easy task. Benchmarking is great for getting a sense of a feature’s potential, but it doesn’t always reflect how things perform in “the real world.” Pre/post testing can help with that, but it’s tough to isolate the impact of a single change, especially when you’re not the only one pushing code.

We set up A/B testing to overcome these obstacles and measure the true impact of the Kotlin incremental compiler on Meta’s codebase with high confidence. It took a bit of extra work to keep the cache healthy across variants, but it gave us a clean, isolated view of how much difference the incremental compiler really made at scale.
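One common way to set up this kind of experiment, shown here only as a sketch, is to assign each developer to a variant deterministically and to include the variant in the build cache key so that artifacts from one variant never satisfy lookups from the other. The names `assign_variant`, `cache_key`, and the experiment label `kotlin-ic` are assumptions made for illustration; the post does not describe how the assignment or cache separation was actually implemented.

```python
import hashlib

VARIANTS = ("control", "incremental")


def assign_variant(user_id: str, experiment: str = "kotlin-ic") -> str:
    """Deterministically map a user to a variant by hashing (hypothetical scheme)."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    return VARIANTS[digest[0] % len(VARIANTS)]


def cache_key(target: str, input_hash: str, variant: str) -> str:
    """Build a cache key that is distinct per variant for the same inputs."""
    payload = f"{variant}|{target}|{input_hash}".encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


# The same user always lands in the same variant across builds.
variant = assign_variant("dev-123")
key = cache_key("//app:lib", "abc123", variant)
```

Because the assignment depends only on the user and experiment name, a developer stays in one variant for the whole test, which keeps the per-variant build-time measurements comparable.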

We started with the largest modules – the ones we already knew were slowing builds the most. Given their size and known impact, we expected to see benefits quickly. And sure enough, we did.

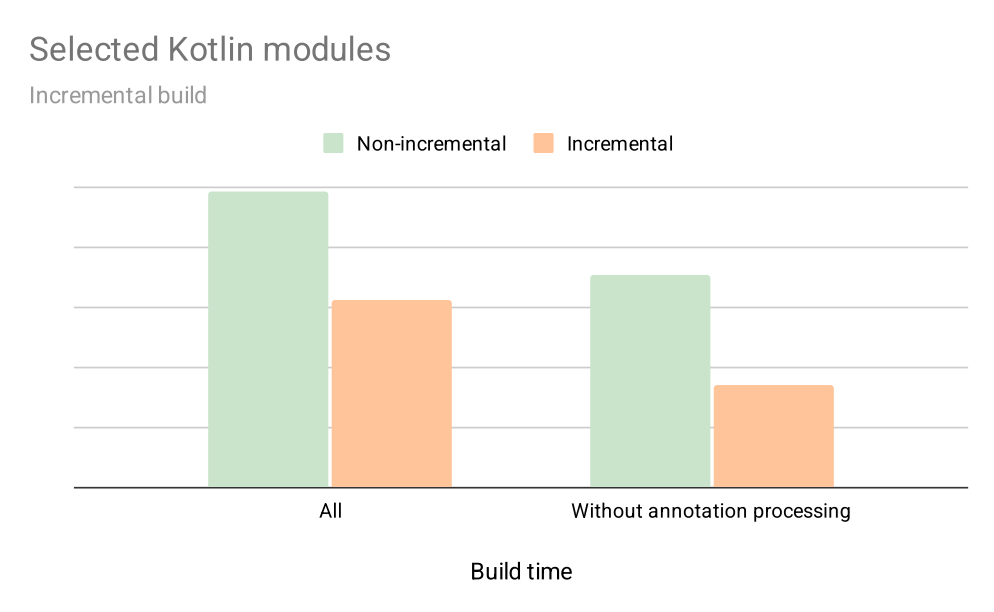

The impact of incremental compilation

The graph below shows early results on how enabling incremental compilation for selected targets impacts their local build times during incremental builds over a 4-week period. This includes not just compilation, but also annotation processing, and a few other optimisations we’ve added along the way.

With incremental compilation, we’ve seen about a 30% improvement for the average developer. And for modules without annotation processing, the speed nearly doubled. That was more than enough to convince us that the incremental compiler is here to stay.

What’s next

Kotlin incremental compilation is now supported in Buck2, and we’re actively rolling it out across our codebase! For now, it’s available for internal use only, but we’re working on bringing it to the recently introduced open source toolchain as well.

But that’s not all! We’re also exploring ways to expand incrementality across the entire Android toolchain, including tools like Kosabi (the Kotlin counterpart to Jasabi), to deliver even faster build times and even better developer experience.

A decade of database innovation: The Amazon Aurora story

When Andy Jassy, then head of Amazon Web Services, announced Amazon Aurora in 2014, the pitch was bold but metered: Aurora would be a relational database built for the cloud. As such, it would provide access to cost-effective, fast, and scalable computing infrastructure.

In essence, he explained, Aurora would combine the cost effectiveness and simplicity of MySQL with the speed and availability of high-end commercial databases, the kind that firms typically managed on their own. In numbers, Aurora promised five times the throughput (e.g., the number of transactions, queries, read/write operations) of MySQL at one-tenth the price of commercial database solutions, all while offloading costly management challenges and maintaining performance and availability.

AWS re:Invent 2014 | Announcing Amazon Aurora for RDS

Aurora launched a year later, in 2015. Significantly, it decoupled computation from storage, a distinct contrast to traditional database architectures where the two are entwined. This fundamental innovation, along with automated backups and replication and other improvements, enabled easy scaling for both computational tasks and storage, while meeting reliability demands.

“Aurora’s design preserves the core transactional consistency strengths of relational databases. It innovates at the storage layer to create a database built for the cloud that can support modern workloads without sacrificing performance,” explained Werner Vogels, Amazon’s CTO, in 2019.

“To start addressing the limitations of relational databases, we reconceptualized the stack by decomposing the system into its fundamental building blocks,” Vogels said. “We recognized that the caching and logging layers were ripe for innovation. We could move these layers into a purpose-built, scale-out, self-healing, multitenant, database-optimized storage service. When we began building the distributed storage system, Amazon Aurora was born.”

Within two years, Aurora became the fastest-growing service in AWS history. Tens of thousands of customers — including financial-services companies, gaming companies, healthcare providers, educational institutions, and startups — turned to Aurora to help carry their workloads.

In the intervening years, Aurora has continued to evolve to suit the needs of a changing digital landscape. Most recently, in 2024, Amazon announced Aurora DSQL. A major step forward, Aurora DSQL is a serverless approach designed for global scale and enhanced adaptability to variable workloads.

Today, International Data Corporation (IDC) research estimates that firms using Aurora see a three-year return on investment of 434 percent and an operational cost reduction of 42 percent compared to other database solutions.

But what lies behind those figures? How did Aurora become so valuable to its users? To understand that, it’s useful to consider what came before.

A time for reinvention

In 2015, as cloud computing was gaining popularity, legacy firms began migrating workloads away from on-premises data centers to save money on capital investments and in-house maintenance. At the same time, mobile and web app startups were calling for remote, highly reliable databases that could scale in an instant. The theme was clear: computing and storage needed to be elastic and reliable. The reality was that, at the time, most databases simply hadn’t adapted to those needs.

That rigidity makes sense considering the origin of databases and the problems they were invented to solve. The 1960s saw one of their earliest uses: NASA engineers had to navigate a complex list of parts, components, and systems as they built spacecraft for moon exploration. That need inspired the creation of the Information Management System, or IMS, a hierarchically structured solution that allowed engineers to more easily locate relevant information, such as the sizes or compatibilities of various parts and components. While IMS was a boon at the time, it was also limited. Finding parts meant engineers had to write batches of specially coded queries that would then move through a tree-like data structure, a relatively slow and specialized process.

In 1970, the idea of relational databases made its public debut when E. F. Codd coined the term. Relational databases organized data according to how it was related: customers and their purchases, for instance, or students in a class. Relational databases meant faster search, since data was stored in structured tables, and queries didn’t require special coding knowledge. With query languages like SQL, relational databases became a dominant model for storing and retrieving structured data.

By the 1990s, however, that approach began to show its limits. Firms that needed more computing capabilities typically had to buy and physically install more on-premises servers. They also needed specialists to manage new capabilities, such as the influx of transactional workloads — as, for instance, when increasing numbers of customers added more and more pet supplies to virtual shopping carts. By the time AWS arrived in 2006, these legacy databases were the most brittle, least elastic component of a company’s IT stack.

The emergence of cloud computing promised a better way forward with more flexibility and remotely managed solutions. Amazon engineers recognized that the cloud could enable virtually unlimited, networked storage and, separately, computation.

The Amazon Relational Database Service (Amazon RDS) debuted in 2009 to help customers set up, operate, and scale a MySQL database in the cloud. And while that service expanded to include Oracle, SQL Server, and PostgreSQL, as Jeff Barr noted in a 2014 blog post, those database engines “were designed to function in a constrained and somewhat simplistic hardware environment.”

AWS researchers challenged themselves to examine those constraints and “quickly realized that they had a unique opportunity to create an efficient, integrated design that encompassed the storage, network, compute, system software, and database software”.

“The central constraint in high-throughput data processing has moved from compute and storage to the network,” wrote the authors of a SIGMOD 2017 paper describing Aurora’s architecture. Aurora researchers addressed that constraint via “a novel, service-oriented architecture,” one that offered significant advantages over traditional approaches. These included “building storage as an independent fault-tolerant and self-healing service across multiple data centers … protecting databases from performance variance and transient or permanent failures at either the networking or storage tiers.”

The serverless era is now

In the years since its debut, Amazon engineers and researchers have ensured Aurora has kept pace with customer needs. In 2018, Aurora Serverless provided an on-demand autoscaling configuration that allowed customers to adjust computational capacity up and down based on their needs. Later versions further optimized that process by automatically scaling based on customer needs. That approach relieves the customer of the need to explicitly manage database capacity; customers need to specify only minimum and maximum levels.

Achieving that sort of “resource elasticity at high levels of efficiency” meant Aurora Serverless had to address several challenges, wrote the authors of a VLDB 2024 paper. “These included policy issues such as how to define ‘heat’ (i.e., resource usage features on which to base decision making)” and how to determine whether remedial action may be required. Aurora Serverless meets those challenges, the authors noted, by adapting and modifying “well-established ideas related to resource oversubscription; reactive control informed by recent measurements; distributed and hierarchical decision making; and innovations in the DB engine, OS, and hypervisor for efficiency.”
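To make the idea of reactive control informed by recent measurements concrete, here is a deliberately simplified sketch. It is not Aurora Serverless's actual policy: the class `ReactiveScaler`, its thresholds, step size, and window length are all assumptions chosen for illustration. The only detail taken from the text is that capacity stays between a customer-specified minimum and maximum.

```python
from collections import deque


class ReactiveScaler:
    """Toy reactive controller: scale on the average of recent utilization."""

    def __init__(self, min_capacity, max_capacity, window=3,
                 high=0.8, low=0.3, step=0.5):
        self.min_capacity = min_capacity
        self.max_capacity = max_capacity
        self.high = high                      # scale up above this average
        self.low = low                        # scale down below this average
        self.step = step                      # capacity change per decision
        self.samples = deque(maxlen=window)   # keeps only recent measurements
        self.capacity = min_capacity

    def observe(self, utilization):
        """Record one utilization sample (0.0 to 1.0) and return new capacity."""
        self.samples.append(utilization)
        average = sum(self.samples) / len(self.samples)
        if average > self.high:
            self.capacity += self.step
        elif average < self.low:
            self.capacity -= self.step
        # Never leave the customer-specified bounds.
        self.capacity = max(self.min_capacity,
                            min(self.max_capacity, self.capacity))
        return self.capacity


scaler = ReactiveScaler(min_capacity=1.0, max_capacity=2.0)
history = [scaler.observe(u) for u in (0.9, 0.9, 0.9, 0.1, 0.1, 0.1)]
```

Averaging over a short window means a single low sample after sustained load does not trigger an immediate scale-down; capacity only drops once the recent measurements as a whole fall below the lower threshold.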

As of May 2025, all of Aurora’s offerings are serverless. Customers no longer need to choose a specific server type or size or worry about the underlying hardware or operating system, patching, or backups; all of that is managed by AWS. “One of the things that we’ve tried to design from the beginning is a database where you don’t have to worry about the internals,” Marc Brooker, AWS vice president and Distinguished Engineer, said at AWS re:Invent in 2024.

These are exactly the capabilities that Arizona State University needs, says John Rome, deputy chief information officer at ASU. Each fall, the university’s data needs explode when classes for its more than 73,000 students are in session across multiple campuses. Aurora lets ASU pay for the computation and storage it uses and helps it to adapt on the fly.

“We see Amazon Aurora Serverless as a next step in our cloud maturity,” Rome says, “to help us improve development agility while reducing costs on infrequently used systems, to further optimize our overall infrastructure operations.”

And what might the next step in maturity look like for the now 10-year-old Aurora service? The authors of that 2024 paper outlined several potential paths. Those include “introducing predictive techniques for live migration,” “exploiting statistical multiplexing opportunities stemming from complementary resource needs,” and “using sophisticated ML/RL-based techniques for workload prediction and decision making.”
