AI Research

How a Research Lab Made Entirely of LLM Agents Developed Molecules That Can Block a Virus

GPT-4o-based agents led by a human collaborated to develop experimentally validated nanobodies against SARS-CoV-2. Discover with me how we are entering the era of automated reasoning and work with actionable outputs, and let’s imagine how this will go even further when robotic lab technicians can also carry out the wet lab parts of any research project!

In an era where artificial intelligence continues to reshape how we code, write, and even reason, a new frontier has emerged: AI conducting real scientific research, which several companies (from major established players like Google to dedicated spin-offs) are trying to achieve. And we are not talking just about simulations, automated summarization, data crunching, or theoretical outputs, but about producing experimentally validated materials, such as biological designs with potential clinical relevance, as in the case I bring you today.

That future just got much closer; very close indeed!

In a groundbreaking paper just published in Nature by researchers from Stanford and the Chan Zuckerberg Biohub, a novel system called the Virtual Lab demonstrated that a human researcher working with a team of large language model (LLM) agents can design new nanobodies (tiny, antibody-like proteins that can bind other proteins to block their function) to target fast-mutating variants of SARS-CoV-2. This was not just a narrow chatbot interaction or a tool-assisted paper; it was an open-ended, multi-phase research process led and executed by AI agents, each with a specialized expertise and role, resulting in experimentally validated biological molecules that could well move on to downstream studies toward actual treatment of disease (in this case Covid-19).

Let’s delve into it to see how this is serious, replicable research that presents a working approach to AI-human (and actually AI-AI) collaborative science.

From “simple” applications to materializing a full-staff AI lab

Although there are some precedents to this, the new system is unlike anything before it. And one of the coolest things is that it is not based on a special-purpose trained LLM or tool; rather, it uses GPT-4o instructed with prompts that make it play the role of the different kinds of people often involved in a research team.

Until recently, the role of LLMs in science was limited to question-answering, summarizing, writing support, coding and perhaps some direct data analysis. Useful, yes, but not transformative. The Virtual Lab presented in this new Nature paper changes that by elevating LLMs from assistants to autonomous researchers that interact with one another and with a human user (who brings the research question, runs experiments when required, and eventually concludes the project) in structured meetings to explore, hypothesize, code, analyze, and iterate.

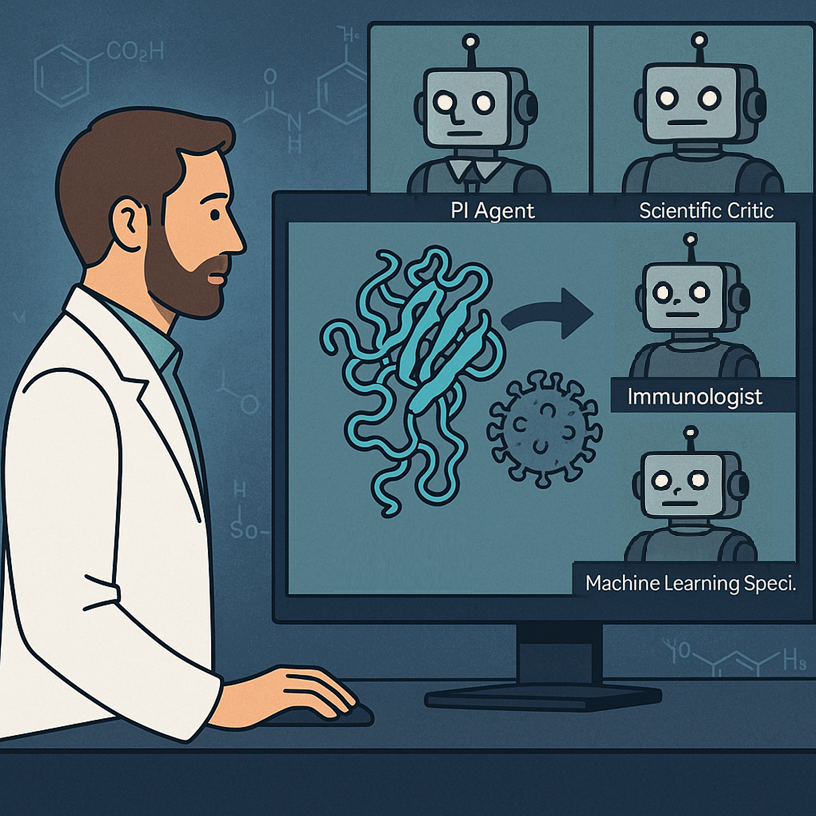

The core idea at the heart of this work was indeed to simulate an interdisciplinary lab staffed by AI agents. Each agent has a scientific role—say, immunologist, computational biologist, or machine learning specialist—and is instantiated from GPT-4o with a “persona” crafted via careful prompt engineering. These agents are led by a Principal Investigator (PI) Agent and monitored by a Scientific Critic Agent, both virtual agents.

The Critic Agent challenges assumptions and pinpoints errors, acting as the lab’s skeptical reviewer; as the paper explored, this was a key element of the workflow, without which too many errors and oversights crept in, to the detriment of the project.

The human researcher sets high-level agendas, injects domain constraints, and ultimately runs the outputs (especially the wet-lab experiments). But the “thinking” (maybe I should start considering removing the quotation marks?) is done by the agents.
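To make the idea of prompt-defined personas concrete, here is a minimal sketch of how such agents could be described in code. The field names (`title`, `expertise`, `goal`, `role`), the wording of the prompt, and the example values are all my assumptions for illustration; they are not the paper’s actual prompts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    """A persona that is turned into a system prompt for a general-purpose LLM."""

    title: str
    expertise: str
    goal: str
    role: str

    def system_prompt(self) -> str:
        # Hypothetical prompt template; the real prompts may be worded differently.
        return (
            f"You are a {self.title}. "
            f"Your expertise is in {self.expertise}. "
            f"Your goal is to {self.goal}. "
            f"Your role is to {self.role}."
        )


# Illustrative personas only (values invented for this sketch).
pi = Agent(
    title="Principal Investigator",
    expertise="applying artificial intelligence to biomedical research",
    goal="lead the team toward a validated nanobody design",
    role="set the agenda, summarize discussions, and make final decisions",
)
critic = Agent(
    title="Scientific Critic",
    expertise="spotting methodological flaws and unsupported claims",
    goal="keep the team's reasoning rigorous",
    role="challenge assumptions and point out errors",
)

print(pi.system_prompt())
```

The point of the sketch is that nothing here is model-specific: the same underlying LLM plays every role, and only the system prompt changes.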

How it all worked

The Virtual Lab was tasked with solving a real and urgent biomedical challenge: design new nanobody binders for the KP.3 and JN.1 variants of SARS-CoV-2, which had evolved resistance to existing treatments. Instead of starting from scratch, the AI agents decided (yes, they took this decision by themselves) to mutate existing nanobodies that were effective against the ancestral strain but no longer worked as well.

As all interactions are tracked and documented, we can see exactly how the team moved forward with the project.

First of all, the human defined only the PI and Critic agents. The PI agent then created the scientific team by spawning specialized agents. In this case they were an Immunologist, a Machine Learning Specialist and a Computational Biologist. In a team meeting, the agents debated whether to design antibodies or nanobodies, and whether to design from scratch or mutate existing ones. They chose nanobody mutation, justified by faster timelines and available structural data. They then discussed which tools to use and how to implement them. They went for the ESM protein language model coupled to AlphaFold-Multimer for structure prediction and Rosetta for binding energy calculations. For implementation, the agents decided to go with Python code. Very interestingly, the code needed to be reviewed by the Critic agent multiple times, and was refined through multiple asynchronous meetings.

From the meetings and multiple runs of code, an exact strategy was devised for proposing the final set of mutations to be tested. Briefly, for the bioinformaticians reading this post, the PI agent designed an iterative pipeline that uses ESM to score all point mutations on a nanobody sequence by log-likelihood ratio, selects the top mutants to predict their structures in complex with the target protein using AlphaFold-Multimer, scores the interfaces via ipLDDT, then uses Rosetta to estimate binding energy, and finally combines the scores into a ranking of all proposed mutations. This cycle was then repeated to introduce further mutations as needed.
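For readers who want to picture the scoring step just described, here is a small sketch of log-likelihood-ratio scoring and score combination. It does not call ESM, AlphaFold-Multimer, or Rosetta: the per-position log-probabilities are toy numbers (not even normalized) standing in for a protein language model’s output, and the function names, the weights, and the sign convention in `combined_score` are my assumptions rather than the paper’s formula.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def point_mutation_llrs(sequence, log_probs):
    """Score every point mutation as log p(mutant) - log p(wild type).

    log_probs[i][aa] is the (here: made-up) log-probability that a protein
    language model assigns to amino acid `aa` at position i.
    """
    scores = {}
    for pos, wild_type in enumerate(sequence):
        for aa in AMINO_ACIDS:
            if aa != wild_type:
                name = f"{wild_type}{pos + 1}{aa}"  # e.g. "V2A"
                scores[name] = log_probs[pos][aa] - log_probs[pos][wild_type]
    return scores


def top_mutations(scores, k):
    """Return the k mutation names with the highest log-likelihood ratio."""
    return sorted(scores, key=scores.get, reverse=True)[:k]


def combined_score(llr, iplddt, binding_energy, weights=(1.0, 1.0, 1.0)):
    """Hypothetical weighted sum: higher LLR and interface confidence are
    better, lower (more negative) binding energy is better."""
    w_llr, w_interface, w_energy = weights
    return w_llr * llr + w_interface * iplddt - w_energy * binding_energy


# Toy three-residue "nanobody" with a flat distribution everywhere,
# except that the model prefers A over the wild-type V at position 2.
sequence = "QVQ"
log_probs = [{aa: math.log(0.05) for aa in AMINO_ACIDS} for _ in sequence]
log_probs[1]["V"] = math.log(0.01)
log_probs[1]["A"] = math.log(0.50)

scores = point_mutation_llrs(sequence, log_probs)
best = top_mutations(scores, k=1)
print(best, round(scores[best[0]], 3))
```

In a real pipeline the three terms live on very different scales, so the weights would need to be chosen (or the terms normalized) deliberately; the defaults above are placeholders.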

The computational pipeline generated 92 nanobody sequences that were synthesized and tested in a real-world lab, finding that most of them were actually proteins that can be produced and handled. Two of these proteins gained affinity to the SARS-CoV-2 proteins they were designed to bind, both to modern mutants and to ancestral forms.

These success rates are similar to those of analogous projects run in the traditional way (that is, executed by humans), but the project took much less time to conclude. And it hurts to say it, but I’m pretty sure the Virtual Lab entailed much lower costs overall, as it involved far fewer people (hence salaries).

Like in a human group of scientists: meetings, roles, and collaboration

We saw above how the Virtual Lab mimics how human science happens: via structured interdisciplinary meetings. Each meeting is either a “Team Meeting”, where multiple agents discuss broad questions (the PI starts, others contribute, the Critic reviews, and the PI summarizes and decides); or an “Individual Meeting” where a single agent (with or without the Critic) works on a specific task, e.g., writing code or scoring outputs.
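The turn-taking in a Team Meeting, as described above, can be sketched in a few lines. This only models who speaks when; the function name and the `rounds` parameter are my assumptions, and no LLM is called.

```python
def team_meeting_order(pi, members, critic, rounds=1):
    """Return the speaking order of a team meeting.

    Each round: the PI opens, every team member contributes, the Critic reviews.
    After the last round the PI summarizes and decides.
    """
    order = []
    for _ in range(rounds):
        order.append(pi)
        order.extend(members)
        order.append(critic)
    order.append(pi)  # final summary and decision
    return order


speakers = team_meeting_order(
    pi="PI",
    members=["Immunologist", "Machine Learning Specialist", "Computational Biologist"],
    critic="Critic",
)
print(" -> ".join(speakers))
```

An Individual Meeting would be the degenerate case of this loop: one agent, optionally alternating with the Critic.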

To avoid hallucinations and inconsistency, the system also uses parallel meetings; that is, the same task is run multiple times with different randomness (i.e. at high “temperature”). Interestingly, the outcomes from these several meetings are then condensed in a single low-temperature merge meeting that is much more deterministic and can quite safely decide which conclusions, among all those coming from the various meetings, make the most sense.
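Here is a minimal sketch of that parallel-then-merge pattern. The `llm` argument is any callable taking a prompt and a temperature; I use a fake one so the example runs without an API key. The function names, the number of parallel runs, the temperature values (0.8 and 0.2), and the merge prompt are assumptions for illustration, and the "parallel" meetings are simply run one after another here.

```python
from typing import Callable, List

# Any function (prompt, temperature) -> text can play the role of the LLM.
LLM = Callable[[str, float], str]


def run_parallel_meetings(agenda: str, llm: LLM, n_parallel: int = 3,
                          temperature: float = 0.8) -> List[str]:
    """Run the same agenda several times at high temperature for diversity."""
    return [llm(agenda, temperature) for _ in range(n_parallel)]


def merge_meeting(agenda: str, summaries: List[str], llm: LLM,
                  temperature: float = 0.2) -> str:
    """Condense the parallel outcomes in one low-temperature call."""
    numbered = "\n".join(f"[{i}] {s}" for i, s in enumerate(summaries, start=1))
    prompt = (
        f"Agenda: {agenda}\n"
        f"Summaries of parallel meetings:\n{numbered}\n"
        "Merge the best components of these summaries into a single answer."
    )
    return llm(prompt, temperature)


# Fake LLM that records the temperature of each call (stand-in for a real API).
calls: List[float] = []


def fake_llm(prompt: str, temperature: float) -> str:
    calls.append(temperature)
    return f"answer#{len(calls)} (T={temperature})"


summaries = run_parallel_meetings("Choose nanobody design tools", fake_llm)
final = merge_meeting("Choose nanobody design tools", summaries, fake_llm)
print(final)
```

Swapping `fake_llm` for a real chat-completion call is the only change needed to try the pattern on an actual model.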

Clearly, these ideas can be applied to any other kind of multi-agent interaction, and for any purpose!

How much did the humans do?

Surprisingly little, at least for the computational part, since the experiments can’t yet be automated to the same extent. But keep reading for some reflections about robots in labs!

In this Virtual Lab round, LLM agents wrote 98.7% of the total words (over 120,000 tokens), while the human researcher contributed just 1,596 words in total across the entire project. The agents wrote all the scripts for ESM, AlphaFold-Multimer post-processing, and Rosetta XML workflows. The human only helped run the code and facilitated the real-world experiments. The Virtual Lab pipeline was built in 1-2 days of prompting and meetings, and the nanobody design computation ran in ~1 week.

Why this matters (and what comes next)

The Virtual Lab could serve as the prototype for a fundamentally new research model–and actually for a fundamentally new way to work, where everything that can be done on a computer is left automated, with humans only taking the very critical decisions. LLMs are clearly shifting from passive tools to active collaborators that, as the Virtual Lab shows, can drive complex, interdisciplinary projects from idea to implementation.

The next ambitious leap? Replace the hands of human technicians, who ran the experiments, with robotic ones. Clearly, the next frontier is in automating the physical interaction with the real world, which is essentially what robots do. Imagine then the full pipeline as applied to a research lab:

- A human PI defines a high-level biological goal.

- The team does research of existing information, scans databases, brainstorms ideas.

- A set of AI agents selects computational tools if required, writes and runs code and/or analyses, and finally proposes experiments.

- Then, robotic lab technicians, rather than human technicians, carry out the protocols: pipetting, centrifuging, plating, imaging, data collection.

- The results flow back into the Virtual Lab, closing the loop.

- Agents analyze, adapt, iterate.

This would make the research process truly end-to-end autonomous. From problem definition to experiment execution to result interpretation, all components would be run by an integrated AI-robotics system with minimal human intervention—just high-level steering, supervision, and global vision.

Robotic biology labs are already being prototyped. Emerald Cloud Lab, Strateos, and Transcriptic (now part of Colabra) offer robotic wet-lab-as-a-service. Future House is a non-profit building AI agents to automate research in biology and other complex sciences. In academia, some autonomous chemistry labs exist whose robots can explore chemical space on their own. Biofoundries use programmable liquid handlers and robotic arms for synthetic biology workflows. Adaptyv Bio automates protein expression and testing at scale.

Automated laboratory systems of this kind, coupled to emerging systems like the Virtual Lab, could radically transform how science and technology progress. The intelligent layer drives the project and gives those robots work to do, and their output then feeds back into the thinking tool in a closed-loop discovery engine that would run 24/7 without fatigue or scheduling conflicts, conduct hundreds or thousands of micro-experiments in parallel, and rapidly explore vast hypothesis spaces that are just not feasible for human labs. Moreover, the labs, virtual labs, and managers don’t even need to be physically together, which makes it possible to optimize how resources are distributed.

There are challenges, of course. Real-world science is messy and nonlinear. Robotic protocols must be incredibly robust. Unexpected errors still need judgment. But as robotics and AI continue to evolve, those gaps will certainly shrink.

Final thoughts

We humans were always confident that technology in the form of smart computers and robots would kick us out of our highly repetitive physical jobs, while creativity and thinking would still be our domain of mastery for decades, perhaps centuries. However, despite quite some automation via robots, the AI of the 2020s has shown us that technology can also be better than us at some of our most brain-intensive jobs.

In the near future, LLMs won’t just answer our questions or merely help us with work. They will ask, argue, debate, decide. And sometimes, they will discover!

References and further reads

The Nature paper analyzed here:

https://www.nature.com/articles/s41586-025-09442-9



Tories pledge to get ‘all our oil and gas out of the North Sea’

Conservative leader Kemi Badenoch has said her party will remove all net zero requirements on oil and gas companies drilling in the North Sea if elected.

Badenoch is to formally announce the plan to focus solely on “maximising extraction” and to get “all our oil and gas out of the North Sea” in a speech in Aberdeen on Tuesday.

Reform UK has said it wants more fossil fuels extracted from the North Sea.

The Labour government has committed to banning new exploration licences. A spokesperson said a “fair and orderly transition” away from oil and gas would “drive growth”.

Exploring new fields would “not take a penny off bills” or improve energy security and would “only accelerate the worsening climate crisis”, the government spokesperson warned.

Badenoch signalled a significant change in Conservative climate policy when she announced earlier this year that reaching net zero would be “impossible” by 2050.

Successive UK governments have pledged to reach the target by 2050 and it was written into law by Theresa May in 2019. It means the UK must cut carbon emissions until it removes as much as it produces, in line with the 2015 Paris Climate Agreement.

Now Badenoch has said that requirements to work towards net zero are a burden on oil and gas producers in the North Sea which are damaging the economy and which she would remove.

The Tory leader said a Conservative government would scrap the need to reduce emissions or to work on technologies such as carbon storage.

Badenoch said it was “absurd” the UK was leaving “vital resources untapped” while “neighbours like Norway extracted them from the same sea bed”.

In 2023, then Prime Minister Rishi Sunak granted 100 new licences to drill in the North Sea which he said at the time was “entirely consistent” with net zero commitments.

Reform UK has said it will abolish the push for net zero if elected.

The current government said it had made the “biggest ever investment in offshore wind and three first of a kind carbon capture and storage clusters”.

Carbon capture and storage facilities aim to prevent carbon dioxide (CO2) produced from industrial processes and power stations from being released into the atmosphere.

Most of the CO2 produced is captured, transported and then stored deep underground.

It is seen by the likes of the International Energy Agency and the Climate Change Committee as a key element in meeting targets to cut the greenhouse gases driving dangerous climate change.


Dogs and drones join forest battle against eight-toothed beetle

Esme Stallard and Justin Rowlatt, Climate and science team

It is smaller than your fingernail, but this hairy beetle is one of the biggest single threats to the UK’s forests.

The bark beetle has been the scourge of Europe, killing millions of spruce trees, yet the government thought it could halt its spread to the UK by checking imported wood products at ports.

But this was not their entry route of choice – they were being carried on winds straight over the English Channel.

Now, UK government scientists have been fighting back, with an unusual arsenal including sniffer dogs, drones and nuclear waste models.

They claim the UK has eradicated the beetle from at risk areas in the east and south east. But climate change could make the job even harder in the future.

The spruce bark beetle, or Ips typographus, has been munching its way through the conifer trees of Europe for decades, leaving behind a trail of destruction.

The beetles rear and feed their young under the bark of spruce trees in complex webs of interweaving tunnels called galleries.

When trees are infested with a few thousand beetles they can cope, using resin to flush the beetles out.

But for a stressed tree its natural defences are reduced and the beetles start to multiply.

“Their populations can build to a point where they can overcome the tree defences – there are millions, billions of beetles,” explained Dr Max Blake, head of tree health at the UK government-funded Forestry Research.

“There are so many the tree cannot deal with them, particularly when it is dry, they don’t have the resin pressure to flush the galleries.”

Since the beetle took hold in Norway over a decade ago it has been able to wipe out 100 million cubic metres of spruce, according to Rothamsted Research.

‘Public enemy number one’

As Sitka spruce is the main tree used for timber in the UK, Dr Blake and his colleagues watched developments on continental Europe with some serious concern.

“We have 725,000 hectares of spruce alone, if this beetle was allowed to get hold of that, the destructive potential means a vast amount of that is at risk,” said Andrea Deol at Forestry Research. “We valued it – and it’s a partial valuation at £2.9bn per year in Great Britain.”

There are more than 1,400 pests and diseases on the government’s plant health risk register, but Ips has been labelled “public enemy number one”.

The number of those diseases has been accelerating, according to Nick Phillips at charity The Woodland Trust.

“Predominantly, the reason for that is global trade, we’re importing wood products, trees for planting, which does sometimes bring ‘hitchhikers’ in terms of pests and disease,” he said.

Forestry Research had been working with border control for years to check such products for Ips, but in 2018 made a shocking discovery in a wood in Kent.

“We found a breeding population that had been there for a few years,” explained Ms Deol.

“Later we started to pick up larger volumes of beetles in [our] traps which seemed to suggest they were arriving by other means. All of the research we have done now has indicated they are being blown over from the continent on the wind,” she added.

The team knew they had to act quickly and has been deploying a mixture of techniques that wouldn’t look out of place in a military operation.

Drones are sent up to survey hundreds of hectares of forest, looking for signs of infestation from the sky – as the beetle takes hold, the upper canopy of the tree cannot be fed nutrients and water, and begins to die off.

But next is the painstaking work of entomologists going on foot to inspect the trees themselves.

“They are looking for a needle in a haystack, sometimes looking for single beetles – to get hold of the pioneer species before they are allowed to establish,” Andrea Deol said.

In a single year her team have inspected 4,500 hectares of spruce on the public estate – just shy of 7,000 football pitches.

Such physically-demanding work is difficult to sustain and the team has been looking for some assistance from the natural and tech world alike.

When the pioneer spruce bark beetles find a suitable host tree they release pheromones – chemical signals to attract fellow beetles and establish a colony.

But it is this strong smell, as well as the smell associated with their insect poo – frass – that makes them ideal to be found by sniffer dogs.

Early trials so far have been successful. The dogs are particularly useful for inspecting large timber stacks which can be difficult to inspect visually.

The team is also deploying cameras on their bug traps, which are now able to scan daily for the beetles and identify them in real time.

“We have [created] our own algorithm to identify the insects. We have taken about 20,000 images of Ips, other beetles and debris, which have been formally identified by entomologists, and fed it into the model,” said Dr Blake.

Some of the traps can be in difficult to access areas and previously had only been checked every week by entomologists working on the ground.

As a result of this work, the UK has been confirmed as the first country to have eradicated Ips typographus in its controlled areas, which are deemed to be at risk from infestation and cover the south east and east of England.

“What we are doing is having a positive impact and it is vital that we continue to maintain that effort, if we let our guard down we know we have got those incursion risks year on year,” said Ms Deol.

And those risks are rising. Europe has seen populations of Ips increase as they take advantage of trees stressed by the changing climate.

Europe is experiencing more extreme rainfall in winter and milder temperatures meaning there is less freezing, leaving the trees in waterlogged conditions.

This coupled with drier summers leaves them stressed and susceptible to falling in stormy weather, and this is when Ips can take hold.

With larger populations in Europe the risk of Ips colonies being carried to the UK goes up.

The team at Forestry Research has been working hard to accurately predict when these incursions may occur.

“We have been doing modelling with colleagues at the University of Cambridge and the Met Office which have adapted a nuclear atmospheric dispersion model to Ips,” explained Dr Blake. “So, [the model] was originally used to look at nuclear fallout and where the winds take it, instead we are using the model to look at how far Ips goes.”

Nick Phillips at The Woodland Trust is strongly supportive of the government’s work but worries about the loss of ancient woodland – the oldest and most biologically-rich areas of forest.

Commercial spruce have long been planted next to such woods, and every time a tree hosting spruce beetle is found, it and neighbouring, sometimes ancient trees, have to be removed.

“We really want the government to maintain as much of the trees as they can, particularly the ones that aren’t affected, and then also when the trees are removed, supporting landowners to take steps to restore what’s there,” he said. “So that they’re given grants, for example, to be able to recover the woodland sites.”

The government has increased funding for woodlands in recent years but this has been focused on planting new trees.

“If we only have funding and support for the first few years of a tree’s life, but not for those woodlands that are 100 or century years old, then we’re not going to be able to deliver nature recovery and capture carbon,” he said.

Additional reporting by Miho Tanaka

