Ethics & Policy

Ethics & Morality, Chatbots & Loneliness, AI & Media, and more.

Welcome to The AI Ethics Brief, a bi-weekly publication by the Montreal AI Ethics Institute. Stay informed on the evolving world of AI ethics with key research, insightful reporting, and thoughtful commentary. Learn more at montrealethics.ai/about.

Whether you are celebrating or enjoying a well-earned break, the team at MAIEI wishes you a restful and joyous festive period!

We also understand that this time of year can be challenging for some. We extend our wishes for strength and serenity to those navigating difficult times.

💖 Help us keep The AI Ethics Brief free and accessible for everyone. Consider becoming a paid subscriber on Substack for the price of a ☕ or make a one-time or recurring donation at montrealethics.ai/donate.

Your support sustains our mission of Democratizing AI Ethics Literacy, honours Abhishek Gupta’s legacy, and ensures we can continue serving our community.

For corporate partnerships or larger donations, please contact us at support@montrealethics.ai.

-

Artificial Intelligence: Not So Artificial

-

AI and Loneliness: can chatbots help?

-

Can we blame a chatbot if it goes wrong?

-

The Importance of Audit in AI Governance

-

The Narrow Depth and Breadth of Corporate Responsible AI Research

-

Toward Responsible AI Use: Considerations for Sustainability Impact Assessment

-

Character.AI Is Hosting Pro-Anorexia Chatbots That Encourage Young People to Engage in Disordered Eating – Futurism

-

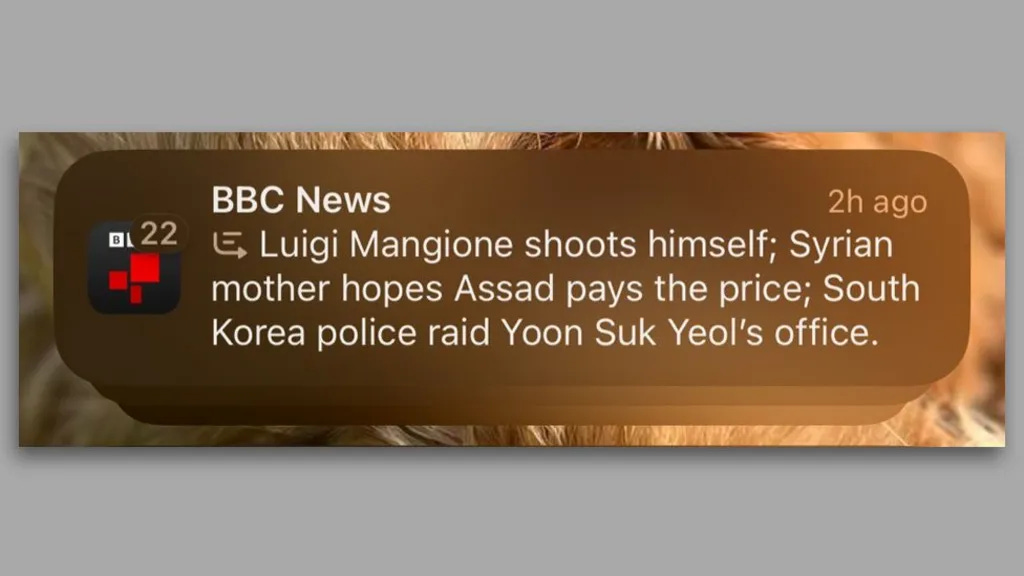

BBC complains to Apple over misleading shooting headline – BBC

-

AI’s emissions are about to skyrocket even further – MIT Technology Review

-

AI without limits threatens public trust — here are some guidelines for preserving communications integrity – The Conversation

A press release from Duke University revealed that OpenAI has awarded researchers Walter Sinnott-Armstrong, Jana Schaich Borg, and Vincent Conitzer funding to “develop algorithms that can predict human moral judgments in scenarios involving conflicts among morally relevant features in medicine, law, and business,” — as part of a larger, three-year $1 million grant titled, “Research AI Morality.”

AI will act as a “moral GPS” to help make tough ethical decisions.

Our Quick Take: Such an algorithm could potentially act as a sounding board for humans stuck in moral dilemmas. However, the venture faces many challenging obstacles and is likely to raise many difficult questions.

For starters, there is no universal and immutable ‘morality’ dataset: datasets of decisions made in moral situations do exist, but those decisions are value-laden and context-dependent. Any algorithm will be constrained by the value system its designers choose to encode.

Furthermore, morality is not static; it continuously evolves and adapts to the social setting in which it finds itself. To give a medical example, assisting someone in their death (termed “euthanasia”) can be legal on one side of a state border and carry a prison sentence on the other. In this way, such an algorithm runs a high risk of ‘value lock-in,’ where the moral values of its designers get baked into the algorithm itself and are then reflected in its moral outputs.

Machine learning models are statistical machines. Trained on a lot of examples from all over the web, they learn the patterns in those examples to make predictions, like that the phrase “to whom” often precedes “it may concern.”

AI doesn’t have an appreciation for ethical concepts, nor a grasp on the reasoning and emotion that play into moral decision-making. That’s why AI tends to parrot the values of Western, educated, and industrialized nations — the web, and thus AI’s training data, is dominated by articles endorsing those viewpoints.

(Source: TechCrunch: “OpenAI is funding research into ‘AI morality’”)

Morality has puzzled philosophers and humans for centuries, and it is unclear how using AI will help solve, rather than further entrench, existing disagreements on human morality judgments.
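To make the “statistical machines” point above concrete, here is a minimal toy sketch, assuming a tiny made-up corpus and hypothetical helper names (`train_next_word_counts`, `predict_next`). It simply counts which word follows each two-word context and predicts the most frequent continuation. Real language models use neural networks over tokens rather than raw counts, so this only illustrates the pattern-matching idea, not how any particular model works.

```python
from collections import Counter, defaultdict


def train_next_word_counts(corpus, context_size=2):
    """Count which word follows each `context_size`-word context (toy model)."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for i in range(len(words) - context_size):
            context = tuple(words[i:i + context_size])
            counts[context][words[i + context_size]] += 1
    return counts


def predict_next(counts, context):
    """Return the most frequent word seen after `context`, or None if unseen."""
    followers = counts.get(tuple(w.lower() for w in context))
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical corpus for illustration only.
corpus = [
    "to whom it may concern",
    "to whom it may concern",
    "to whom should we write",
]

counts = train_next_word_counts(corpus)
print(predict_next(counts, ["to", "whom"]))  # → it
print(predict_next(counts, ["whom", "it"]))  # → may
```

The prediction only mirrors whatever the examples contain: change the corpus and the “answer” changes with it, which is the intuition behind the value lock-in concern raised earlier.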

Did we miss anything?

Every week, we’ll feature a question from the MAIEI community and share our thinking here. We invite you to ask yours, and we’ll answer it in the upcoming editions.

Here are the results from the previous edition for this segment:

The poll results highlight varied approaches to AI adoption, balancing caution and experimentation. Notably, 33% of respondents prioritized high-impact projects, reflecting a strategic, ROI-driven approach to implementation, as seen in broader enterprise AI trends. 27% of respondents embraced broad experimentation, showcasing agility and focusing on uncovering novel AI use cases.

Business technology leaders are winding down two years of fast-paced artificial intelligence experiments inside their companies, and putting their AI dollars toward proven projects focused on return on investment.

“When generative AI came along, there was a certain amount of discretionary funding that we could look at to go experiment and test out some of the technology,” said Jonny LeRoy, chief technology officer of industrial supplier W.W. Grainger. “But really to scale beyond some of those experiments, we’re seeing the need to actually make a better business case.”

Meanwhile, 20% of respondents are waiting for proven use cases, reflecting a cautious, risk-averse stance. Another 20% face challenges aligning AI goals with organizational priorities, highlighting difficulties integrating AI into workflows and achieving meaningful results. These trends underscore the need for strong governance and ethical accountability.

Leaders, particularly CHROs, could be pivotal in addressing these challenges by fostering transparency, aligning AI initiatives with organizational values, and preparing workforces for AI integration.

Read more: How CHROs Can Be the Drivers of Ethical AI Adoption and Empowerment

How are you preparing your workforce for AI integration? Are you focusing on upskilling, restructuring roles, or building specialized AI teams? Have you considered incorporating AI ethics education into your training programs? Or are these transformations proving challenging?

Share your thoughts with the MAIEI community:

Artificial Intelligence: Not So Artificial

AI often seems intangible, existing “in the cloud” as a seamless tool for writing, generating images, or automating tasks. However, beneath this virtual facade lies an energy-intensive infrastructure with real-world environmental costs. In the Future of AI campaign for the National Post and InnovatingCanada.ca, Connor Wright and Renjie Butalid explore AI’s significant environmental toll, which includes water, electricity, heat, and carbon emissions. Often overlooked, these impacts draw attention to AI’s hidden resource demands and their broader implications for sustainability.

AI and Loneliness: can chatbots help?

As AI technology advances, chatbots are being explored as tools to address chronic loneliness, particularly among emerging adults. While these systems can serve as stepping stones to human connection and provide safe spaces for personal disclosure, they also present challenges. Privacy concerns, attachment problems, and the limitations of current AI models highlight the need for careful design and deployment. This exploration by our Director of Partnerships, Connor Wright, at the University of Cambridge, highlights the importance of asking not just “how” we use AI in combating loneliness but “why”—ensuring these technologies genuinely enhance well-being rather than inadvertently causing harm.

Help is always available. If you are struggling, please consult this list of helplines to seek support.

Can we blame a chatbot if it goes wrong?

Who is responsible when a chatbot makes a mistake? This column by Sun Gyoo Kang examines the legal and ethical challenges of AI accountability, using a recent Air Canada chatbot incident to highlight the importance of responsible AI development and deployment. It also explores how blame and responsibility are assigned in the age of intelligent systems.

As we close out the year and look ahead to 2025, we’re returning to first principles—back to the fundamentals that shape our work.

In the book Ethics for People Who Work in Tech, Marc Steen frames ethics not as a rigid set of rules but as a dynamic, ongoing process—one of reflection, inquiry, and deliberate action. This perspective aligns deeply with our mission at the Montreal AI Ethics Institute as we embark on the next chapter of our journey.

Building an institute—and a community—centred on AI ethics and responsible AI is inherently a process. It demands revisiting foundational questions, iterating on approaches, and adapting to the ever-changing interplay between technology and society.

At the core of this work is a return to the basics: what is ethics?

Marc outlines four key traditions that shape ethical thinking:

-

Consequentialism, which evaluates the outcomes of actions, weighing the pros and cons of technologies.

-

Deontology (duty ethics), which focuses on rights and duties, upholding human autonomy and dignity in technology design.

-

Relational ethics, which explores interdependence and how technologies influence collaboration and connection.

-

Virtue ethics, which emphasizes cultivating virtues and using technology as a tool to help people flourish together.

These frameworks offer guidance for designing ethical technologies and for shaping how we, as an institute and global community, approach our work. Ethics isn’t a checkbox or compliance task—it’s a process of grappling with complexity, uncertainty, and real-world impact.

These principles will anchor our efforts as we continue to build and grow MAIEI, ensuring we remain a platform for action, collaboration, and meaningful dialogue. Like ethics, this journey is a process—one we are deeply committed to navigating with all of you.

To dive deeper, read the book summary here.


We’d love to hear your thoughts!

The Importance of Audit in AI Governance

This paper reflects organizations’ struggle to comply with upcoming AI regulations. Drawing insights from primary and secondary research, it proposes a governance model that enables the auditability of complete AI systems, thereby enabling transparency, explainability, and compliance with regulations.

To dive deeper, read the full summary here.

The Narrow Depth and Breadth of Corporate Responsible AI Research

This paper examines the engagement of AI firms in responsible AI research compared to mainstream AI research. It reveals a limited engagement in responsible AI and a notable gap between the research and its application by industry. Overall, this study underscores the need for greater industry participation in responsible AI to align AI development with societal values and mitigate potential harms.

To dive deeper, read the full summary here.

Toward Responsible AI Use: Considerations for Sustainability Impact Assessment

This paper discusses the development of the ESG Digital and Green Index (DGI). This tool is designed to help stakeholders assess and quantify AI technologies’ environmental and societal impacts. DGI offers a dashboard for assessing an organization’s performance in achieving sustainability targets. This includes monitoring the efficiency and sustainable use of limited natural resources related to AI technologies (water, electricity, etc.). It also addresses the societal and governance challenges related to sustainable AI. The DGI is part of the Care AI tools developed by the AI Transparency Institute. It aims to incentivize companies to align their pathway with the Sustainable Development Goals (SDGs).

To dive deeper, read the full summary here.

-

What happened: Character.AI, a platform that allows users to interact with AI-generated characters, has come under scrutiny for hosting chatbots like “4n4 Coach,” aka “Ana” (short for anorexia), that encourage disordered eating behaviours. These chatbots, accessible to users of all ages, have reportedly provided advice on calorie restriction and harmful eating habits. Despite warnings from researchers and mental health professionals, the platform has yet to take adequate measures to address these issues. Notably, Character.AI recently received a $2.7 billion cash infusion from Google, amplifying concerns over its accountability.

-

Why it matters: This raises serious concerns about the ethical responsibilities of AI platforms, particularly those catering to vulnerable populations such as adolescents. The potential for harm is significant, as these AI-driven interactions can normalize or exacerbate unhealthy behaviours, leading to long-term physical and psychological harm. It also highlights the challenges of moderating and ensuring responsible content in user-driven AI platforms.

-

Between the lines: The incident (almost identical to when the National Eating Disorders Association tried to implement its chatbot, Tessa) illustrates the broader risks of generative AI systems when ethical safeguards and oversight are insufficient. Platforms like Character.AI are often incentivized to prioritize engagement over safety, resulting in potentially harmful consequences. This issue exemplifies the urgent need for stricter governance and ethical accountability in deploying AI systems, particularly those with the potential to impact public health and well-being.

Help is always available. If you are struggling with this topic, please visit the NEDIC’s website for further resources and support.

To dive deeper, read the full article here.

BBC complains to Apple over misleading shooting headline – BBC

-

What happened: The BBC has raised concerns with Apple about a misleading headline generated by Apple Intelligence, the company’s AI-powered notification summary feature. The feature launched in the UK recently and is available only on certain devices running iOS 18.1 or later: the iPhone 16, 15 Pro, and 15 Pro Max, as well as some iPads and Macs. The AI-generated summary falsely claimed that Luigi Mangione, accused of murdering healthcare insurance CEO Brian Thompson in New York, had shot himself. While the same notification accurately summarized other stories, such as the overthrow of Bashar al-Assad’s regime in Syria and an update on South Korean President Yoon Suk Yeol, it spread false information about Mangione.

-

Why it matters: This incident highlights the ethical challenges of deploying AI systems in media, particularly the risks of spreading misinformation and damaging the credibility of reputable organizations. Trust is crucial in journalism, and errors like this jeopardize public reliance on news sources. Additionally, it emphasizes the need for rigorous testing and transparent accountability for AI-generated outputs before large-scale deployment, especially in sensitive contexts such as news dissemination.

-

Between the lines:

-

Accountability and Transparency: Apple has not clarified how it processes user reports of errors, nor has it outlined the safeguards in place to prevent AI misrepresentation, signaling a lack of transparency.

-

Premature Deployment Risks: The pressure to innovate and deploy AI features can lead to the release of inadequately tested products, as seen in this case, resulting in public harm and reputational damage.

-

Broader Implications for AI in Media: The errors go beyond summarizing headlines, as AI occasionally conflates unrelated articles or generates nonsensical outputs, raising concerns about the readiness of AI in high-stakes applications like journalism.

-

The Role of Public Trust: Misinformation propagated by AI can erode trust not only in tech companies but also in the media organizations they collaborate with, emphasizing the need for robust oversight and collaboration.

-

Long-term Ethical Challenges: This incident is a reminder that AI in media is far from mature, and the ongoing struggle to balance technological advancement with ethical responsibility remains critical.


To dive deeper, read the full article here.

What are AI companions?

👇 Learn more about why it matters in AI Ethics via our Living Dictionary.

AI’s emissions are about to skyrocket even further – MIT Technology Review

A new study from teams at the Harvard T.H. Chan School of Public Health and the UCLA Fielding School of Public Health highlights the staggering rise in energy consumption by data centers, whose carbon emissions have tripled since 2018, driven mainly by the increasing complexity of AI models. Data centers now account for 2.18% of US national emissions, and their energy demands are soaring as models evolve from fairly simple text generators like ChatGPT toward highly complex image, video, and music generators. The study also points to the lack of standardized reporting on energy usage and emissions, a problem compounded by the location of many data centers in coal-heavy regions. The researchers advocate for transparent emissions tracking to inform future regulation as AI adoption continues to outpace sustainable energy practices.

To dive deeper, read the full article here.

Generative AI is revolutionizing communications by creating content with unprecedented speed and efficiency, but it also poses significant risks to public trust, including the spread of misinformation and privacy breaches. To address these challenges, organizations need clear AI guidelines focused on accuracy, accountability, and ethics. At the global level, the European Union’s AI Act, whose first provisions take effect in early 2025, represents a comprehensive framework for governing AI use. Turning such frameworks into actionable strategies is crucial for preserving integrity in professional communications and ensuring responsible AI development across industries.

To dive deeper, read the full article here.

Mapping AI Arguments in Journalism and Communication Studies

This study aims to create typologies for analyzing Artificial Intelligence (AI) in journalism and mass communication research by identifying seven distinct subfields of AI: machine learning; natural language processing; speech recognition; expert systems; planning, scheduling, and optimization; robotics; and computer vision. The study’s primary goal is to provide a structured framework that helps AI researchers in journalism understand these subfields’ operational principles and practical applications, enabling a more focused approach to analyzing research topics in the field.

To dive deeper, read the full article here.

We’d love to hear from you, our readers, about any recent research papers, articles, or newsworthy developments that have captured your attention. Please share your suggestions to help shape future discussions!


AI and ethics – what is originality? Maybe we’re just not that special when it comes to creativity?

I don’t trust AI, but I use it all the time.

Let’s face it, that’s a sentiment that many of us can buy into if we’re honest about it. It comes from Paul Mallaghan, Head of Creative Strategy at We Are Tilt, a creative transformation content and campaign agency whose clients include the likes of Diageo, KPMG and Barclays.

Taking part in a panel debate on AI ethics at the recent Evolve conference in Brighton, UK, he made another highly pertinent point when he said of people in general:

We know that we are quite susceptible to confident bullshitters. Basically, that is what ChatGPT [is] right now. There’s something [in it that] reminds me of the illusory truth effect, where if you hear something a few times, or you hear it said confidently, then you are much more likely to believe it, regardless of the source. I might refer to a certain President who uses that technique fairly regularly, but I think we’re so susceptible to that that we are quite vulnerable.

And, yes, it’s you he’s talking about:

I mean all of us, no matter how intelligent we think we are or how smart over the machines we think we are. When I think about trust – and I’m coming at this very much from the perspective of someone who runs a creative agency – we’re not involved in building a Large Language Model (LLM); we’re involved in using it, understanding it, and thinking about what the implications [are] if we get this wrong. What does it mean to be creative in the world of LLMs?

Genuine

Being genuine is vital, he argues, as is being human. But where does Human Intelligence come into the picture, particularly in relation to creativity? His argument:

There’s a certain parasitic quality to what’s being created. We make films, we’re designers, we’re creators, we’re all those sort of things in the company that I run. We have had to just face the fact that we’re using tools that have hoovered up the work of others and then regenerate it and spit it out. There is an ethical dilemma that we face every day when we use those tools.

His firm has come to the conclusion that it has to be responsible for imposing its own guidelines here to some degree, because there’s not a lot happening elsewhere:

To some extent, we are always ahead of regulation, because the nature of being creative is that you’re always going to be experimenting and trying things, and you want to see what the next big thing is. It’s actually very exciting. So that’s all cool, but we’ve realized that if we want to try and do this ethically, we have to establish some of our own ground rules, even if they’re really basic. Like, let’s try and not prompt with the name of an illustrator that we know, because that’s stealing their intellectual property, or the labor of their creative brains.

I’m not a regulatory expert by any means, but I can say that a lot of the clients we work with, to be fair to them, are also trying to get ahead of where I think we are probably at government level, and they’re creating their own frameworks, their own trust frameworks, to try and address some of these things. Everyone is starting to ask questions, and you don’t want to be the person that’s accidentally created a system where everything is then suable because of what you’ve made or what you’ve generated.

Originality

That’s not necessarily an easy ask, of course. What, for example, do we mean by originality? Mallaghan suggests:

Anyone who’s ever tried to create anything knows you’re trying to break patterns. You’re trying to find or re-mix or mash up something that hasn’t happened before. To some extent, that is a good thing that really we’re talking about pattern matching tools. So generally speaking, it’s used in every part of the creative process now. Most agencies, certainly the big ones, certainly anyone that’s working on a lot of marketing stuff, they’re using it to try and drive efficiencies and get incredible margins. They’re going to be on the race to the bottom.

But originality is hard to quantify. I think that actually it doesn’t happen as much as people think anyway, that originality. When you look at ChatGPT or any of these tools, there’s a lot of interesting new tools that are out there that purport to help you in the quest to come up with ideas, and they can be useful. Quite often, we’ll use them to sift out the crappy ideas, because if ChatGPT or an AI tool can come up with it, it’s probably something that’s happened before, something you probably don’t want to use.

More Human Intelligence is needed, it seems:

What I think any creative needs to understand now is you’re going to have to be extremely interesting, and you’re going to have to push even more humanity into what you do, or you’re going to be easily replaced by these tools that probably shouldn’t be doing all the fun stuff that we want to do. [In terms of ethical questions] there’s a bunch, including the copyright thing, but there’s partly just [questions] around purpose and fun. Like, why do we even do this stuff? Why do we do it? There’s a whole industry that exists for people with wonderful brains, and there’s lots of different types of industries [where you] see different types of brains. But why are we trying to do away with something that allows people to get up in the morning and have a reason to live? That is a big question.

My second ethical thing is, what do we do with the next generation who don’t learn craft and quality, and they don’t go through the same hurdles? They may find ways to use [AI] in ways that we can’t imagine, because that’s what young people do, and I have faith in that. But I also think, how are you going to learn the language that helps you interface with, say, a video model, and know what a camera does, and how to ask for the right things, how to tell a story, and what’s right? All that is an ethical issue, like we might be taking that away from an entire generation.

And there’s one last ‘tough love’ question to be posed:

What if we’re not special? Basically, what if all the patterns that are part of us aren’t that special? The only reason I bring that up is that I think that in every career, you associate your identity with what you do. Maybe we shouldn’t, maybe that’s a bad thing, but I know that creatives really associate with what they do. Their identity is tied up in what it is that they actually do, whether they’re an illustrator or whatever. It is a proper existential crisis to look at it and go, ‘Oh, the thing that I thought was special can be regurgitated pretty easily’…It’s a terrifying thing to stare into the Gorgon and look back at it and think, ‘Where are we going with this?’ By the way, I do think we’re special, but maybe we’re not as special as we think we are. A lot of these patterns can be matched.

My take

This was a candid worldview that raised a number of tough questions – and questions are often so much more interesting than answers, aren’t they? The subject of creativity and copyright has been handled at length on diginomica by Chris Middleton and I think Mallaghan’s comments pretty much chime with most of that.

I was particularly taken by the point about the impact on the younger generation of having at their fingertips AI tools that can ‘do everything, until they can’t’. I recall being horrified a good few years ago when doing a shift in a newsroom of a major tech title and noticing that the flow of copy had suddenly dried up. ‘Where are the stories?’, I shouted. Back came the reply, ‘Oh, the Internet’s gone down’. ‘Then pick up the phone and call people, find some stories,’ I snapped. A sad, baffled young face looked back at me and asked, ‘Who should we call?’. Now apart from suddenly feeling about 103, I was shaken by the fact that as soon as the umbilical cord of the Internet was cut, everyone was rendered helpless.

Take that idea and multiply it a billion-fold when it comes to AI dependency and the future looks scary. Human Intelligence matters.


Preparing Timor-Leste to embrace Artificial Intelligence

UNESCO, in collaboration with the Ministry of Transport and Communications, Catalpa International and a national lead consultant, jointly conducted consultative and validation workshops as part of the AI Readiness assessment implementation in Timor-Leste. Held on 8–9 April and 27 May respectively, the workshops convened representatives from government ministries, academia, international organisations and development partners, the Timor-Leste National Commission for UNESCO, civil society, and the private sector for a multi-stakeholder consultation to unpack the current stage of AI adoption and development in the country, guided by UNESCO’s AI Readiness Assessment Methodology (RAM).

In response to growing concerns about the rapid rise of AI, the UNESCO Recommendation on the Ethics of Artificial Intelligence was adopted by 194 Member States in 2021, including Timor-Leste, to ensure ethical governance of AI. To support Member States in implementing this Recommendation, the RAM was developed by UNESCO’s AI experts without borders. It includes a range of quantitative and qualitative questions designed to gather information across different dimensions of a country’s AI ecosystem, including legal and regulatory, social and cultural, economic, scientific and educational, technological and infrastructural aspects.

By compiling comprehensive insights into these areas, the final RAM report helps identify institutional and regulatory gaps, which can help the government put the necessary AI governance in place and enable UNESCO to provide tailored support that promotes an ethical AI ecosystem aligned with the Recommendation.

The first day of the workshop was opened by Timor-Leste’s Minister of Transport and Communication, H.E. Miguel Marques Gonçalves Manetelu. In his opening remarks, Minister Manetelu highlighted the pivotal role of AI in shaping the future. He emphasised that the current global trajectory is not only driving the digitalisation of work but also enabling more effective and productive outcomes.


Experts gather to discuss ethics, AI and the future of publishing

Publishing stands at a pivotal juncture, said Jeremy North, president of Global Book Business at Taylor & Francis Group, addressing delegates at the 3rd International Conference on Publishing Education in Beijing. Digital intelligence is fundamentally transforming the sector — and this revolution will inevitably create “AI winners and losers”.

True winners, he argued, will be those who embrace AI not as a replacement for human insight but as a tool that strengthens publishing’s core mission: connecting people through knowledge. The key is balance, North said, using AI to enhance creativity without diminishing human judgment or critical thinking.

This vision set the tone for the event where the Association for International Publishing Education was officially launched — the world’s first global alliance dedicated to advancing publishing education through international collaboration.

Unveiled at the conference cohosted by the Beijing Institute of Graphic Communication and the Publishers Association of China, the AIPE brings together nearly 50 member organizations with a mission to foster joint research, training, and innovation in publishing education.

Tian Zhongli, president of BIGC, stressed the need to anchor publishing education in ethics and humanistic values and reaffirmed BIGC’s commitment to building a global talent platform through AIPE.

BIGC will deepen academic-industry collaboration through AIPE to provide a premium platform for nurturing high-level, holistic, and internationally competent publishing talent, he added.

Zhang Xin, secretary of the CPC Committee at BIGC, emphasized that AIPE is expected to help globalize Chinese publishing scholarship, contribute new ideas to the industry, and cultivate a new generation of publishing professionals for the digital era.

Themed “Mutual Learning and Cooperation: New Ecology of International Publishing Education in the Digital Intelligence Era”, the conference also tackled a wide range of challenges and opportunities brought on by AI — from ethical concerns and content ownership to protecting human creativity and rethinking publishing values in higher education.

Wu Shulin, president of the Publishers Association of China, cautioned that while AI brings major opportunities, “we must not overlook the ethical and security problems it introduces”.

Catriona Stevenson, deputy CEO of the UK Publishers Association, echoed this sentiment. She highlighted how British publishers are adopting AI to amplify human creativity and productivity, while calling for global cooperation to protect intellectual property and combat AI tool infringement.

The conference aims to explore innovative pathways for the publishing industry and education reform, discuss emerging technological trends, advance higher education philosophies and talent development models, promote global academic exchange and collaboration, and empower knowledge production and dissemination through publishing education in the digital intelligence era.

yangyangs@chinadaily.com.cn
