AI Insights

Samsung Z Flip 7, Oakley Meta glasses, DJI Osmo 360 and more

Whew, it’s been a crazy few weeks for us at Engadget. School may still be out, but there’s no summer break for the steady stream of new gadgets coming across our desks. I’ll forgive you if you missed a review or two over the last few weeks — we’ve been busy. Here’s a quick rundown of what we’ve been up to, just in time for you to catch up over the weekend.

Samsung Z Flip 7

The Z Flip 7 has decent battery life, bigger screens and more AI smarts. However, the cameras are dated and Samsung isn’t offering enough utility on this foldable’s second screen.

- Bigger front screen

- Better battery life

- Slimmer design

- Cameras are dated

- Front screen utility is still limited

- Sluggish charge speed

Alongside the Z Fold 7, Samsung debuted an updated version of its more compact foldable, the Z Flip 7. UK bureau chief Mat Smith noted that the company managed to provide a substantial overhaul, but there are some areas that were left untouched. “Certain aspects of the Flip 7 are lacking, most notably the cameras, which haven’t been changed since last year,” he said. “Samsung also needs to put more work into its Flex Window.”

Oakley Meta HSTN

There are some solid upgrades that will appeal to serious athletes and power users, but they don’t quite justify the higher price.

- Five hours of continuous music playback

- 3K video recording

- Meta AI is finally getting useful

- Awkwardly thick frames

- Bulky charging case

- (At least) $100 more than Meta’s Ray-Ban glasses

Meta’s first non-Ray-Ban smart glasses have arrived. While we wait for a more affordable version to get here, senior editor Karissa Bell put the white and gold option through its paces. “While I don’t love the style of the Oakley Meta HSTN frames, Meta has shown that it’s been consistently able to improve its glasses,” she wrote. “The upgrades that come with the new Oakley frames aren’t major leaps, but they deliver improvements to core features.”

DJI Osmo 360

DJI’s Osmo 360 is a worthy rival to Insta360’s X5, thanks to the innovative sensor and 8K 50 fps video. However, the editing app still needs some work.

- Sharp 8K 10-bit log video

- Seamless 360 stitching

- Works with DJI’s mics and accessories

- Good design and handling

- DJI Studio app needs work

- Stabilization breaks down in low light

Reporter Steve Dent argued that DJI is finally giving Insta360 some competition in the 360-degree action cam space. The design and performance of the Osmo 360 are great, but the problem comes when it’s time to edit. “The all-new DJI Studio app also needs some work,” he explained. “For a first effort, though, the Osmo 360 is a surprisingly solid rival to Insta360’s X5.”

Nothing Phone 3

The Nothing Phone 3 might be the company’s first “true flagship,” but several specs don’t match that flagship moniker. The bigger screen, battery and new Glyph Matrix make it a major step up from Phone 2, but camera performance is erratic and you’d expect a more powerful processor at this price.

- Big, bright display

- Unique hardware and software design

- Big silicon-carbon battery

- Camera performance is erratic

- Middling processor

Nothing’s first “true flagship” phone has arrived, ready to take on the likes of the Pixel 9 and Galaxy S25. Despite the company’s lofty chatter, Mat argued the Nothing Phone 3 is hampered by a lower-power chip and disappointing cameras. “While I want Nothing to continue experimenting with its phones, it should probably prioritize shoring up the camera performance first,” he said.

Samsung Galaxy Watch 8

The redesigned Galaxy Watch 8 has longer battery life and a much more comfortable fit. The Gemini integration is actually helpful and the new health metrics and fitness guidance are useful.

- Remarkably comfortable fit

- Tiles interface is snappy

- New antioxidant level and vascular load health metrics may help users keep an eye on their health

- The running coach can be inspiring for beginners

- Good Gemini integration

- Improved battery makes the AOD more viable

- The raised glass screen can be easily damaged

- AI running coach could be more personalized

- Notifications are easy to miss

Samsung debuted a big update to its Galaxy Watch line when it unveiled the Z Fold 7 and Z Flip 7. Senior buying advice reporter Amy Skorheim spent two weeks testing the new wearable, which impressed her so much she declared it was “Samsung’s best smartwatch in years.”

Westwood joins 40 other municipalities using artificial intelligence to examine roads

The borough of Westwood has started using artificial intelligence to determine if its roads need to be repaired or repaved.

Elected officials see it as a way to save money on manpower and to ensure that all decisions are objective.

Instead of relying on his own two eyes, the superintendent of Public Works is now allowing an app on his phone to record images of Westwood’s roads as he drives them.

Data on every pothole, faded striping and 13 other types of road defects are collected by the app.

The road management app is from a New Jersey company called Vialytics.

Westwood is one of 40 municipalities in the state to use the software, which also rates road quality and provides easy-to-use data.

“Now you’re relying on the facts here not just my opinion of the street. It’s helped me a lot already. A lot of times you’ll have residents who just want their street paved. Now I can go back to people and say there’s nothing wrong with your street that it needs to be repaved,” said Rick Woods, superintendent of Public Works.

Superintendent Woods says he can even create work orders from the road as soon as a defect is detected.

Borough officials believe the Vialytics app will pay for itself in manpower and offer elected officials objective data when determining how to use taxpayer dollars for roads.

How AI Simulations Match Up to Real Students—and Why It Matters

AI-simulated students consistently outperform real students—and make different kinds of mistakes—in math and reading comprehension, according to a new study.

That could cause problems for teachers, who increasingly use general prompt-based artificial intelligence platforms to save time on daily instructional tasks. Sixty percent of K-12 teachers report using AI in the classroom, according to a June Gallup study, with more than 1 in 4 regularly using the tools to generate quizzes and more than 1 in 5 using AI for tutoring programs. Even when prompted to cater to students of a particular grade or ability level, the findings suggest underlying large language models may create inaccurate portrayals of how real students think and learn.

“We were interested in finding out whether we can actually trust the models when we try to simulate any specific types of students. What we are showing is that the answer is in many cases, no,” said Ekaterina Kochmar, co-author of the study and an assistant professor of natural-language processing at the Mohamed bin Zayed University of Artificial Intelligence in the United Arab Emirates, the first university dedicated entirely to AI research.

How the study tested AI “students”

Kochmar and her colleagues prompted 11 large language models (LLMs), including those underlying generative AI platforms like ChatGPT, Qwen, and SocraticLM, to answer 249 mathematics and 240 reading grade-level questions from the National Assessment of Educational Progress, using the persona of typical students in grades 4, 8, and 12. The researchers then compared the models’ answers to NAEP’s database of real student answers to the same questions to measure how closely the AI-simulated students’ answers mirrored actual student performance.
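To make that comparison concrete, here is a minimal sketch of one way such a gap could be scored. It is not the researchers’ method, which the article does not describe; the function name `accuracy_gap`, the data layout, and every number below are assumptions made up for illustration.

```python
from statistics import mean


def accuracy_gap(simulated_correct, real_percent_correct):
    """Mean absolute gap, in percentage points, between simulated and real results.

    simulated_correct: question id -> list of booleans, one per simulated answer.
    real_percent_correct: question id -> percent of real students who answered correctly.
    """
    gaps = []
    for qid, outcomes in simulated_correct.items():
        simulated_pct = 100.0 * sum(outcomes) / len(outcomes)
        gaps.append(abs(simulated_pct - real_percent_correct[qid]))
    return mean(gaps)


# Hypothetical numbers for illustration only; not data from the study.
simulated = {"q1": [True, True, True, True], "q2": [True, True, False, True]}
real = {"q1": 60.0, "q2": 45.0}

print(accuracy_gap(simulated, real))  # 35.0
```

A score near zero would mean the simulated students get each question right about as often as real students do. It would say nothing about whether they make the same kinds of mistakes.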

The LLMs that underlie AI tools do not think but generate the most likely next word in a given context based on massive pools of training data, which might include real test items, state standards, and transcripts of lessons. By and large, Kochmar said, the models are trained to favor correct answers.

“In any context, for any task, [LLMs] are actually much more strongly primed to answer it correctly,” Kochmar said. “That’s why it’s very difficult to force them to answer anything incorrectly. And we’re asking them to not only answer incorrectly but fall in a particular pattern—and then it becomes even harder.”

For example, while a student might miss a math problem because he misunderstood the order of operations, an LLM would have to be specifically prompted to misuse the order of operations.
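One common form of that misconception, assumed here purely for illustration, is working through an expression strictly left to right. The short sketch below contrasts that with the standard rule that multiplication comes before addition; the helper `left_to_right` is hypothetical and does not come from the study.

```python
def left_to_right(tokens):
    """Evaluate tokens like [2, "+", 3, "*", 4] strictly left to right, ignoring precedence."""
    result = tokens[0]
    # Pair each operator with the number that follows it.
    for op, value in zip(tokens[1::2], tokens[2::2]):
        if op == "+":
            result += value
        elif op == "-":
            result -= value
        elif op == "*":
            result *= value
        else:
            raise ValueError(f"unsupported operator: {op}")
    return result


correct = 2 + 3 * 4                            # multiplication first: 2 + 12 = 14
mistaken = left_to_right([2, "+", 3, "*", 4])  # left to right: (2 + 3) * 4 = 20

print(correct, mistaken)  # 14 20
```

A real student who holds this misconception would answer 20 consistently across similar problems, which is the kind of pattern the simulated students did not reliably reproduce.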

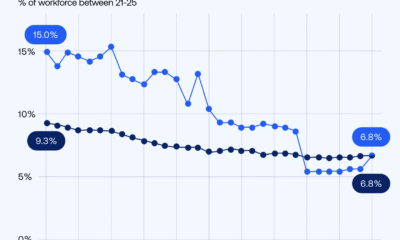

None of the tested LLMs created simulated students that aligned with real students’ math and reading performance in 4th, 8th, or 12th grade. Without specific grade-level prompts, the proxy students performed significantly better than real students in both math and reading. In reading, for example, they scored 33 to 40 percentile points higher than the average real student.

Kochmar also found that simulated students “fail in different ways than humans.” While specifying grades in prompts did make simulated students perform more like real students with regard to how many answers they got correct, they did not necessarily follow patterns related to particular human misconceptions, such as order of operations in math.

The researchers found no prompt that fully aligned simulated and real student answers across different grades and models.

What this means for teachers

For educators, the findings highlight both the potential and the pitfalls of relying on AI-simulated students, underscoring the need for careful use and professional judgment.

“When you think about what a model knows, these models have probably read every book about pedagogy, but that doesn’t mean that they know how to make choices about how to teach,” said Robbie Torney, the senior director of AI programs at Common Sense Media, which studies children and technology.

Torney was not connected to the current study, but last month released a study of AI-based teaching assistants that similarly found alignment problems. AI models produce answers based on their training data, not professional expertise, he said. “That might not be bad per se, but it might also not be a good fit for your learners, for your curriculum, and it might not be a good fit for the type of conceptual knowledge that you’re trying to develop.”

This doesn’t mean teachers shouldn’t use general prompt-based AI to develop tools or tests for their classes, the researchers said, but that educators need to prompt AI carefully and use their own professional judgement when deciding if AI outputs match their students’ needs.

“The great advantage of the current technologies is that it is relatively easy to use, so anyone can access [them],” Kochmar said. “It’s just at this point, I would not trust the models out of the box to mimic students’ actual ability to solve tasks at a specific level.”

Torney said educators need more training to understand not just the basics of how to use AI tools but their underlying infrastructure. “To be able to optimize use of these tools, it’s really important for educators to recognize what they don’t have, so that they can provide some of those things to the models and use their professional judgement.”

We’re Entering a New Phase of AI in Schools. How Are States Responding?

Artificial intelligence topped the list of state technology officials’ priorities for the first time, according to an annual survey released by the State Educational Technology Directors’ Association on Wednesday.

More than a quarter of respondents—26%—listed AI as their most pressing issue, compared to 18% in a similar survey conducted by SETDA last year. AI supplanted cybersecurity, which state leaders previously identified as their No. 1 concern.

About 1 in 5 state technology officials—21%—named cybersecurity as their highest priority, and 18% identified professional development and technology support for instruction as their top issues.

Forty percent of respondents reported that their state had issued guidance on AI. That’s a considerable increase from just two years ago, when only 2% of respondents to the same survey reported their state had released AI guidance.

State officials’ heightened attention on AI suggests that even though many more states have released some sort of AI guidance in the past year or two, officials still see a lot left on their to-do lists when it comes to supporting districts in improving students’ AI literacy, offering professional development about AI for educators, and crafting policies around cheating and proper AI use.

“A lot of guidance has come out, but now the rubber’s hitting the road in terms of implementation and integration,” said Julia Fallon, SETDA’s executive director, in an interview.

SETDA, along with Whiteboard Advisors, surveyed state education leaders—including ed-tech directors, chief information officers, and state chiefs—receiving more than 75 responses across 47 states. It conducted interviews with state ed-tech teams in Alabama, Delaware, Nebraska, and Utah and did group interviews with ed-tech leaders from 14 states.

AI professional development is a rising priority

States are taking a myriad of approaches to responding to the AI challenge, the report noted.

Some states—such as North Carolina and Utah—designated an AI point person to help support districts in puzzling through the technology. For instance, Matt Winters, who leads Utah’s work, has helped negotiate statewide pricing for AI-powered ed-tech tools and worked with an outside organization to train 4,500 teachers on AI, according to the report.

Wyoming, meanwhile, has developed an “innovator” network that pays teachers to offer AI professional development to colleagues across the state. Washington hosted two statewide AI summits to help district and school leaders explore the technology.

And North Carolina and Virginia have used state-level competitive grant programs to support activities such as AI-specific professional development or AI-infused teaching and learning initiatives.

“As AI continues to evolve, developing connections with those in tech, in industry, and in commerce, as well as with other educators, will become more important than ever,” wrote Sydnee Dickson, formerly Utah’s state superintendent of public instruction, in an introduction to the report. “The technology is advancing too quickly for any one person or state to have all the answers.”
