AI Research

Manage multi-tenant Amazon Bedrock costs using application inference profiles

Successful generative AI software as a service (SaaS) systems require a balance between service scalability and cost management. This becomes critical when building a multi-tenant generative AI service designed to serve a large, diverse customer base while maintaining rigorous cost controls and comprehensive usage monitoring.

Traditional cost management approaches for such systems often reveal limitations. Operations teams encounter challenges in accurately attributing costs across individual tenants, particularly when usage patterns demonstrate extreme variability. Enterprise clients might have different consumption behaviors—some experiencing sudden usage spikes during peak periods, whereas others maintain consistent resource consumption patterns.

A robust solution requires a context-driven, multi-tiered alerting system that exceeds conventional monitoring standards. By implementing graduated alert levels—from green (normal operations) to red (critical interventions)—systems can develop intelligent, automated responses that dynamically adapt to evolving usage patterns. This approach enables proactive resource management, precise cost allocation, and rapid, targeted interventions that help prevent potential financial overruns.

The breaking point often comes when you experience significant cost overruns. These overruns aren’t due to a single factor but rather a combination of multiple enterprise tenants increasing their usage while your monitoring systems fail to catch the trend early enough. Your existing alerting system might only provide binary notifications—either everything is fine or there’s a problem—that lack the nuanced, multi-level approach needed for proactive cost management. The situation is further complicated by a tiered pricing model, where different customers have varying SLA commitments and usage quotas. Without a sophisticated alerting system that can differentiate between normal usage spikes and genuine problems, your operations team might find itself constantly taking reactive measures rather than proactive ones.

This post explores how to implement a robust monitoring solution for multi-tenant AI deployments using a feature of Amazon Bedrock called application inference profiles. We demonstrate how to create a system that enables granular usage tracking, accurate cost allocation, and dynamic resource management across complex multi-tenant environments.

What are application inference profiles?

Application inference profiles in Amazon Bedrock enable granular cost tracking across your deployments. You can associate metadata with each inference request, creating a logical separation between different applications, teams, or customers accessing your foundation models (FMs). By implementing a consistent tagging strategy with application inference profiles, you can systematically track which tenant is responsible for each API call and the corresponding consumption.

For example, you can define key-value pair tags such as TenantID, business-unit, or ApplicationID and send these tags with each request to partition your usage data. You can also send the application inference profile ID with your request. When combined with AWS resource tagging, these tag-enabled profiles provide visibility into the utilization of Amazon Bedrock models. This tagging approach introduces accurate chargeback mechanisms to help you allocate costs proportionally based on actual usage rather than arbitrary distribution approaches. To attach tags to the inference profile, see Tagging Amazon Bedrock resources and Organizing and tracking costs using AWS cost allocation tags. Furthermore, you can use application inference profiles to identify optimization opportunities specific to each tenant, helping you implement targeted improvements for the greatest impact to both performance and cost-efficiency.
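As a rough illustration of the tagging idea above, the following sketch builds the arguments for the Amazon Bedrock `CreateInferenceProfile` API and attaches tenant tags to the profile. The profile name, tag values, and model ARN are placeholders chosen for this example, and the sample solution on GitHub may structure this step differently.

```python
def build_profile_request(profile_name, model_arn, tags):
    """Build keyword arguments for the Bedrock CreateInferenceProfile call."""
    return {
        "inferenceProfileName": profile_name,
        # The profile copies its model configuration from a foundation model ARN.
        "modelSource": {"copyFrom": model_arn},
        # Bedrock expects tags as a list of {"key": ..., "value": ...} pairs.
        "tags": [{"key": k, "value": v} for k, v in sorted(tags.items())],
    }


def create_profile(request, region="us-east-1"):
    """Create the profile. Requires boto3 and AWS credentials."""
    import boto3  # imported lazily so the builder above works without the AWS SDK

    bedrock = boto3.client("bedrock", region_name=region)
    response = bedrock.create_inference_profile(**request)
    return response["inferenceProfileArn"]


# Placeholder values for illustration only.
request = build_profile_request(
    profile_name="tenant-a-chatbot",
    model_arn=(
        "arn:aws:bedrock:us-east-1::foundation-model/"
        "anthropic.claude-3-haiku-20240307-v1:0"
    ),
    tags={"TenantID": "tenant-a", "ApplicationID": "chatbot"},
)
```

Calling `create_profile(request)` would return the ARN of the new profile, which can then be passed as the model identifier on inference calls so that usage is attributed to that tenant.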

Solution overview

Imagine a scenario where an organization has multiple tenants, each with their respective generative AI applications using Amazon Bedrock models. To demonstrate multi-tenant cost management, we provide a sample, ready-to-deploy solution on GitHub. It deploys two tenants with two applications, each within a single AWS Region. The solution uses application inference profiles for cost tracking, Amazon Simple Notification Service (Amazon SNS) for notifications, and Amazon CloudWatch to produce tenant-specific dashboards. You can modify the source code of the solution to suit your needs.

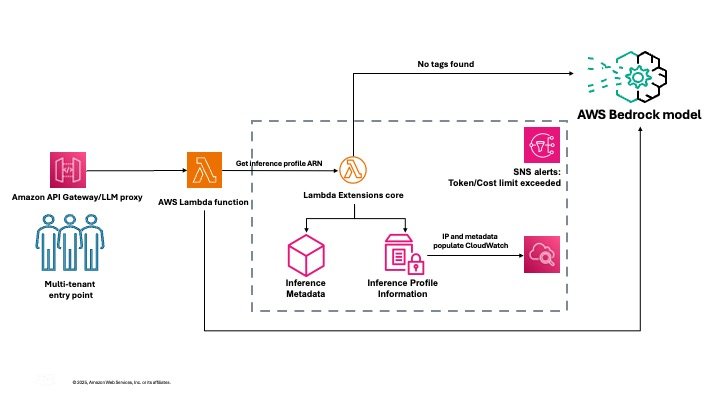

The following diagram illustrates the solution architecture.

The solution handles the complexities of collecting and aggregating usage data across tenants, storing historical metrics for trend analysis, and presenting actionable insights through intuitive dashboards. This solution provides the visibility and control needed to manage your Amazon Bedrock costs while maintaining the flexibility to customize components to match your specific organizational requirements.

In the following sections, we walk through the steps to deploy the solution.

Prerequisites

Before setting up the project, you must have the following prerequisites:

- AWS account – An active AWS account with permissions to create and manage resources such as Lambda functions, API Gateway endpoints, CloudWatch dashboards, and SNS alerts

- Python environment – Python 3.12 or higher installed on your local machine

- Virtual environment – It’s recommended to use a virtual environment to manage project dependencies

Create the virtual environment

The first step is to clone the GitHub repo or copy the code into a new project, and then create the virtual environment.

Update models.json

Review and update the models.json file to reflect the correct input and output token pricing based on your organization’s contract, or use the default settings. Verifying you have the right data at this stage is critical for accurate cost tracking.
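To see why the pricing values matter, here is a minimal sketch of how a per-request cost could be derived from token counts. The JSON layout, the field names (`input_cost_per_1k`, `output_cost_per_1k`), and the prices are assumptions made for this example; check the actual models.json in the repo for its real schema.

```python
import json

# Hypothetical pricing file content: prices in USD per 1,000 tokens.
MODELS_JSON = """
{
  "example-model": {"input_cost_per_1k": 0.003, "output_cost_per_1k": 0.015}
}
"""


def estimate_cost(pricing, model_id, input_tokens, output_tokens):
    """Return the estimated USD cost of one request."""
    price = pricing[model_id]
    input_cost = input_tokens / 1000 * price["input_cost_per_1k"]
    output_cost = output_tokens / 1000 * price["output_cost_per_1k"]
    # Round to avoid floating-point noise in reports.
    return round(input_cost + output_cost, 6)


pricing = json.loads(MODELS_JSON)
cost = estimate_cost(pricing, "example-model", input_tokens=2000, output_tokens=500)
print(cost)
```

Because output tokens are often priced several times higher than input tokens, a wrong output price skews tenant chargeback far more than a wrong input price.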

Update config.json

Modify config.json to define the profiles you want to set up for cost tracking. Each profile can have multiple key-value pairs for tags. For every profile, each tag key must be unique, and each tag key can have only one value. Each incoming request should contain these tags or the profile name as HTTP headers at runtime.
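The matching rule described above can be sketched as follows: a request resolves to a profile either by an explicit profile name or by carrying every tag that the profile defines. The profile layout and the `inference-profile-name` header are hypothetical stand-ins, not the solution's actual schema.

```python
# Hypothetical profile definitions, similar in spirit to config.json.
PROFILES = [
    {"name": "tenant-a-chatbot",
     "tags": {"TenantID": "tenant-a", "ApplicationID": "chatbot"}},
    {"name": "tenant-b-search",
     "tags": {"TenantID": "tenant-b", "ApplicationID": "search"}},
]


def resolve_profile(headers, profiles):
    """Return the name of the profile matching the request headers, or None."""
    # HTTP header names are case-insensitive, so compare in lowercase.
    lowered = {key.lower(): value for key, value in headers.items()}
    requested = lowered.get("inference-profile-name")
    for profile in profiles:
        if requested is not None:
            # An explicit profile name takes priority over tag matching.
            if profile["name"] == requested:
                return profile["name"]
            continue
        tags = profile["tags"]
        if all(lowered.get(k.lower()) == v for k, v in tags.items()):
            return profile["name"]
    return None


by_tags = resolve_profile(
    {"tenantid": "tenant-b", "applicationid": "search"}, PROFILES
)
by_name = resolve_profile({"Inference-Profile-Name": "tenant-a-chatbot"}, PROFILES)
unmatched = resolve_profile({"TenantID": "tenant-c"}, PROFILES)
```

Requiring every tag to match keeps a request with partial tags from being billed to the wrong tenant; such a request resolves to `None` and can be rejected.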

As part of the solution, you also configure a unique Amazon Simple Storage Service (Amazon S3) bucket for saving configuration artifacts and an admin email alias that will receive alerts when a particular threshold is breached.

Create user roles and deploy solution resources

After you modify config.json and models.json, run the following command in the terminal to create the assets, including the user roles:

python setup.py --create-user-roles

Alternatively, you can create the assets without creating user roles by running the following command:

python setup.py

Make sure that you run this command from the project directory. Note that full access policies are not advised for production use cases.

The setup command triggers the process of creating the inference profiles, building a CloudWatch dashboard to capture the metrics for each profile, deploying the inference Lambda function that executes the Amazon Bedrock Converse API and extracts the inference metadata and metrics related to the inference profile, setting up the SNS alerts, and finally creating the API Gateway endpoint to invoke the Lambda function.
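The metadata extraction step might look like the sketch below, which calls the Converse API with an inference profile ARN and pulls token counts and latency from the response. The `usage` and `metrics` fields follow the documented Converse response shape; the function names and the sample response are illustrative and are not taken from the solution's Lambda code.

```python
def extract_usage(response):
    """Pull token counts and latency out of a Converse API response."""
    usage = response.get("usage", {})
    metrics = response.get("metrics", {})
    return {
        "input_tokens": usage.get("inputTokens", 0),
        "output_tokens": usage.get("outputTokens", 0),
        "total_tokens": usage.get("totalTokens", 0),
        "latency_ms": metrics.get("latencyMs", 0),
    }


def invoke(profile_arn, prompt, region="us-east-1"):
    """Call Converse through an inference profile. Requires boto3 and credentials."""
    import boto3  # imported lazily so extract_usage works without the AWS SDK

    runtime = boto3.client("bedrock-runtime", region_name=region)
    response = runtime.converse(
        modelId=profile_arn,  # the profile ARN is passed in place of a model ID
        messages=[{"role": "user", "content": [{"text": prompt}]}],
    )
    return extract_usage(response)


# Trimmed-down example of a Converse response, for illustration only.
sample_response = {
    "usage": {"inputTokens": 120, "outputTokens": 45, "totalTokens": 165},
    "metrics": {"latencyMs": 830},
}
summary = extract_usage(sample_response)
```

The resulting dictionary is the kind of record that can be published as custom CloudWatch metrics with the profile name as a dimension.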

When the setup is complete, you will see the inference profile IDs and API Gateway ID listed in the config.json file. (The API Gateway ID will also be listed in the final part of the output in the terminal.)

When the API is live and inferences are invoked from it, the CloudWatch dashboard will show cost tracking. If you experience significant traffic, the alarms will trigger an SNS alert email.

For a video version of this walkthrough, refer to Track, Allocate, and Manage your Generative AI cost & usage with Amazon Bedrock.

You are now ready to use Amazon Bedrock models with this cost management solution. Make sure that you are using the API Gateway endpoint to consume these models and send the requests with the tags or application inference profile IDs as headers, which you provided in the config.json file. This solution will automatically log the invocations and track costs for your application on a per-tenant basis.
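A client call along these lines could be built with the Python standard library, as in this sketch. The endpoint URL, the header names, and the request body shape are placeholders; use the API Gateway endpoint from your deployment and the tags you defined in config.json.

```python
import json
import urllib.request


def build_request(endpoint_url, prompt, tags):
    """Build a POST request that carries tenant tags as HTTP headers."""
    body = json.dumps({"prompt": prompt}).encode("utf-8")  # hypothetical body shape
    headers = {"Content-Type": "application/json", **tags}
    return urllib.request.Request(
        endpoint_url, data=body, headers=headers, method="POST"
    )


request = build_request(
    "https://example.execute-api.us-east-1.amazonaws.com/prod/invoke",  # placeholder
    "Summarize our Q3 usage report.",
    {"TenantID": "tenant-a", "ApplicationID": "chatbot"},
)

# To send the request against a live deployment:
# with urllib.request.urlopen(request, timeout=30) as response:
#     print(response.read().decode("utf-8"))
```

Keeping the tags in headers rather than in the body lets the backend attribute the call to a tenant before it parses the payload.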

Alarms and dashboards

The solution creates the following alarms and dashboards:

- BedrockTokenCostAlarm-{profile_name} – Alert when total token cost for {profile_name} exceeds {cost_threshold} in 5 minutes

- BedrockTokensPerMinuteAlarm-{profile_name} – Alert when tokens per minute for {profile_name} exceed {tokens_per_min_threshold}

- BedrockRequestsPerMinuteAlarm-{profile_name} – Alert when requests per minute for {profile_name} exceed {requests_per_min_threshold}
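As an illustration of how the first alarm in this list could be defined, the sketch below builds arguments for the CloudWatch `PutMetricAlarm` API. The alarm name pattern and the 5-minute window come from the list above; the namespace, metric name, dimension name, and SNS topic ARN are assumptions made for this example.

```python
def build_cost_alarm(profile_name, cost_threshold, sns_topic_arn):
    """Build keyword arguments for CloudWatch put_metric_alarm."""
    return {
        "AlarmName": f"BedrockTokenCostAlarm-{profile_name}",
        "AlarmDescription": (
            f"Alert when total token cost for {profile_name} "
            f"exceeds {cost_threshold} in 5 minutes"
        ),
        "Namespace": "BedrockUsage",  # hypothetical custom namespace
        "MetricName": "TokenCost",  # hypothetical custom metric
        "Dimensions": [{"Name": "ProfileName", "Value": profile_name}],
        "Statistic": "Sum",
        "Period": 300,  # one 5-minute window, in seconds
        "EvaluationPeriods": 1,
        "Threshold": cost_threshold,
        "ComparisonOperator": "GreaterThanThreshold",
        "AlarmActions": [sns_topic_arn],  # notify the admin SNS topic
    }


def create_alarm(alarm, region="us-east-1"):
    """Create or update the alarm. Requires boto3 and AWS credentials."""
    import boto3  # imported lazily so the builder works without the AWS SDK

    boto3.client("cloudwatch", region_name=region).put_metric_alarm(**alarm)


alarm = build_cost_alarm(
    "tenant-a-chatbot",
    cost_threshold=5.0,
    sns_topic_arn="arn:aws:sns:us-east-1:123456789012:bedrock-cost-alerts",
)
```

The tokens-per-minute and requests-per-minute alarms would follow the same pattern with a 60-second period and their own metric names.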

You can monitor and receive alerts about your AWS resources and applications across multiple Regions.

A metric alarm has the following possible states:

- OK – The metric or expression is within the defined threshold

- ALARM – The metric or expression is outside of the defined threshold

- INSUFFICIENT_DATA – The alarm has just started, the metric is not available, or not enough data is available for the metric to determine the alarm state

After you add an alarm to a dashboard, the alarm turns gray when it’s in the INSUFFICIENT_DATA state and red when it’s in the ALARM state. The alarm is shown with no color when it’s in the OK state.

An alarm invokes actions only when the alarm changes state from OK to ALARM. In this solution, an email is sent through your SNS subscription to the admin specified in your config.json file. You can specify additional actions when the alarm changes state between OK, ALARM, and INSUFFICIENT_DATA.

Considerations

Because the API Gateway maximum integration timeout (30 seconds) is lower than the Lambda timeout (15 minutes), long-running model inference calls might be cut off by API Gateway. Lambda and Amazon Bedrock also enforce strict payload and token size limits, so make sure your requests and responses fit within these boundaries. For example, the maximum payload size is 6 MB for synchronous Lambda invocations, and the combined request line and header values can’t exceed 10,240 bytes for API Gateway requests. If your workload fits within these limits, you can use this solution.
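A client-side pre-flight check for the two size limits mentioned above could look like this sketch. It is an approximation: it treats 6 MB as 6 * 1024 * 1024 bytes and compares only the raw body against the Lambda limit, whereas the event that API Gateway passes to Lambda also includes headers and other metadata.

```python
LAMBDA_SYNC_PAYLOAD_LIMIT = 6 * 1024 * 1024  # 6 MB, read here as 6 MiB (assumption)
API_GATEWAY_HEADER_LIMIT = 10_240  # bytes for request line plus header values


def check_request_size(request_line, headers, body):
    """Return a list of limit violations; an empty list means the request fits."""
    problems = []
    header_bytes = len(request_line.encode("utf-8")) + sum(
        len(value.encode("utf-8")) for value in headers.values()
    )
    if header_bytes > API_GATEWAY_HEADER_LIMIT:
        problems.append(f"request line and headers use {header_bytes} bytes")
    if len(body) > LAMBDA_SYNC_PAYLOAD_LIMIT:
        problems.append(f"body uses {len(body)} bytes")
    return problems


small = check_request_size(
    "POST /prod/invoke HTTP/1.1", {"TenantID": "tenant-a"}, b"{}"
)
large = check_request_size(
    "POST /prod/invoke HTTP/1.1", {"TenantID": "x" * 11_000}, b"{}"
)
```

Rejecting oversized requests on the client avoids paying for a round trip that API Gateway or Lambda would refuse anyway.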

Clean up

To delete your assets, run the following command:

python unsetup.py

Conclusion

In this post, we demonstrated how to implement effective cost monitoring for multi-tenant Amazon Bedrock deployments using application inference profiles, CloudWatch metrics, and custom CloudWatch dashboards. With this solution, you can track model usage, allocate costs accurately, and optimize resource consumption across different tenants. You can customize the solution according to your organization’s specific needs.

This solution provides the framework for building an intelligent system that can understand context—distinguishing between a gradual increase in usage that might indicate healthy business growth and sudden spikes that could signal potential issues. An effective alerting system needs to be sophisticated enough to consider historical patterns, time of day, and customer tier when determining alert levels. Furthermore, these alerts can trigger different types of automated responses based on the alert level: from simple notifications, to automatic customer communications, to immediate rate-limiting actions.

Try out the solution for your own use case, and share your feedback and questions in the comments.

About the authors

Claudio Mazzoni is a Sr Specialist Solutions Architect on the Amazon Bedrock GTM team. Claudio excels at guiding customers through their Gen AI journey. Outside of work, Claudio enjoys spending time with family, working in his garden, and cooking Uruguayan food.

Fahad Ahmed is a Senior Solutions Architect at AWS and assists financial services customers. He has over 17 years of experience building and designing software applications. He recently found a new passion for making AI services accessible to the masses.

Manish Yeladandi is a Solutions Architect at AWS, specializing in AI/ML, containers, and security. Combining deep cloud expertise with business acumen, Manish architects secure, scalable solutions that help organizations optimize their technology investments and achieve transformative business outcomes.

Dhawal Patel is a Principal Machine Learning Architect at AWS. He has worked with organizations ranging from large enterprises to mid-sized startups on problems related to distributed computing and artificial intelligence. He focuses on deep learning, including NLP and computer vision domains. He helps customers achieve high-performance model inference on Amazon SageMaker.

James Park is a Solutions Architect at Amazon Web Services. He works with Amazon.com to design, build, and deploy technology solutions on AWS, and has a particular interest in AI and machine learning. In his spare time he enjoys seeking out new cultures, new experiences, and staying up to date with the latest technology trends. You can find him on LinkedIn.

Abhi Shivaditya is a Senior Solutions Architect at AWS, working with strategic global enterprise organizations to facilitate the adoption of AWS services in areas such as Artificial Intelligence, distributed computing, networking, and storage. His expertise lies in Deep Learning in the domains of Natural Language Processing (NLP) and Computer Vision. Abhi assists customers in deploying high-performance machine learning models efficiently within the AWS ecosystem.

Notre Dame to host summit on AI, faith and human flourishing, introducing new DELTA framework

Artificial intelligence is advancing at a breakneck pace, as governments and industries commit resources to its development at a scale not seen since the Space Race. These technologies have the potential to disrupt every aspect of life, including education, the economy, labor and human relationships.

“As a leading global Catholic research university, Notre Dame is uniquely positioned to help the world confront and understand AI’s benefits and risks to human flourishing,” said John T. McGreevy, the Charles and Jill Fischer Provost. “Technology ethics is a key priority for Notre Dame, and we are fully committed to bringing the wisdom of the global Church to bear on this critical theme.”

In support of this work, the Institute for Ethics and the Common Good and the Notre Dame Ethics Initiative will host the Notre Dame Summit on AI, Faith and Human Flourishing on the University’s campus from Monday, Sept. 22 through Thursday, Sept. 25. This event will draw together a dynamic, ecumenical group of educators, faith leaders, technologists, journalists, policymakers and young people who believe in the enduring relevance of Christian ethical thought in a world of powerful AI.

“As artificial intelligence becomes more powerful, the ‘ethical floor’ of safety, privacy and transparency is simply not enough,” said Meghan Sullivan, the Wilsey Family College Professor of Philosophy and the director of the Institute for Ethics and the Common Good and the Notre Dame Ethics Initiative. “This moment in time demands a response rooted in the Christian tradition — a richer, more holistic perspective that recognizes the nature of the human person as a spiritual, emotional, moral and physical being.”

Sullivan noted that a unified, faith-based response to AI is a priority of newly elected Pope Leo XIV, who has spoken publicly about the new challenges to human dignity, justice and labor posed by these technologies.

The summit will begin at 5:15 p.m. Monday with an opening Mass at the University’s Basilica of the Sacred Heart. His Eminence Cardinal Christophe Pierre, Apostolic Nuncio to the United States, will serve as primary celebrant and homilist with University President Rev. Robert A. Dowd, C.S.C., as concelebrant. All members of the campus community are invited to attend this opening Mass.

Summit speakers include Andy Crouch, Praxis; Alex Hartemink, Duke University; Molly Kinder, Brookings Institution; Andrew Schuman, Veritas Forum; Anne Snyder, Comment Magazine; and Elizabeth Dias, The New York Times. Over the course of the summit, attendees will take part in use case workshops, panels and community of practice sessions focused on public engagement, ministry and education. Executives from Google, Microsoft, Apple and many other organizations are among the 200 invited guests who will attend.

At the summit, Notre Dame will launch DELTA, a new framework for guiding conversations about AI. DELTA — an acronym that stands for Dignity, Embodiment, Love, Transcendence and Agency — will serve as a practical resource across sectors that are experiencing disruption from AI, including homes, schools, churches and workplaces, while also providing a platform for credible, principled voices to promote moral clarity and human dignity in the face of advancing technology.

“Our goal is for DELTA to become a common lens through which to engage AI — a language that reflects the depth of the Christian tradition while remaining accessible to people of all faiths,” Sullivan said. “By bringing together this remarkable group of leaders here at Notre Dame, we’re launching a community that will work passionately to create — as the Vatican puts it — ‘a growth in human responsibility, values and conscience that is proportionate to the advances posed by technology.’”

Although the summit sessions are by invitation only, Sullivan’s keynote on DELTA will be livestreamed at 8:30 a.m. EST on Tuesday, Sept. 23. Those interested are invited to view the livestream and learn more about DELTA at https://ethics.nd.edu/summit-livestream.

The Notre Dame Summit on AI, Faith and Human Flourishing is supported with a grant provided by Lilly Endowment Inc.

Lilly Endowment Inc. is a private foundation created in 1937 by J.K. Lilly Sr. and his sons Eli and J.K. Jr. through gifts of stock in their pharmaceutical business, Eli Lilly and Company. While those gifts remain the financial bedrock of the Endowment, it is a separate entity from the company, with a distinct governing board, staff and location. In keeping with the founders’ wishes, the Endowment supports the causes of community development, education and religion and maintains a special commitment to its hometown, Indianapolis, and home state, Indiana. A principal aim of the Endowment’s religion grantmaking is to deepen and enrich the lives of Christians in the United States, primarily by seeking out and supporting efforts that enhance the vitality of congregations and strengthen the pastoral and lay leadership of Christian communities. The Endowment also seeks to improve public understanding of religious traditions in the United States and across the globe.

Contact: Carrie Gates, associate director of media relations, 574-993-9220, c.gates@nd.edu

New Research Reveals That “Evidence-Based Creativity” Is the Next Must-Have Skill Set for Marketers in the Age of AI

Global report from Contentful and Atlantic Insights finds nearly half of marketers rank data analysis and interpretation as a top skill; marketers must now demonstrate breakthrough creativity and validate with data

DENVER & BERLIN--(BUSINESS WIRE)--Contentful, a leading digital experience platform, in collaboration with Atlantic Insights, the marketing research division of The Atlantic, today released a new study, ‘When Machines Make Marketers More Human,’ which challenges the notion that AI will replace many marketing functions and instead demonstrates how AI can amplify marketers’ effectiveness, creativity, and impact.

The report, based on surveys and interviews with hundreds of senior marketing leaders around the world, finds that “evidence-based creativity” is emerging as the defining capability of modern marketers. Whether on skills – 46% of respondents cited data analysis and interpretation as the top skill needed in the profession today – or on measuring efficacy – 34% of successful marketers define success according to strong performance metrics or ROI – it’s clear that data-driven marketing is only accelerating in the age of AI. The ability to combine human creativity with AI-driven insights is becoming essential to producing, testing, and scaling ideas with measurable impact.

Beyond creativity, the research highlights a broader evolution of the marketing skillset. A new generation of “full-stack marketers” is taking shape. They are fluent in creating AI-enabled workflows, writing effective prompts, navigating diverse technology stacks, and embedding AI tools into daily operations. Nearly half of marketers report using both AI copilots in productivity software (49%) and generative tools for content creation (48%), underscoring how quickly these tools are becoming part of the day-to-day workflow. Combined with growing expertise in digital experience design, personalization strategy, and governance, these capabilities signal a fundamental shift in what it takes to succeed in marketing today.

“There is a growing fear that AI will erase marketing jobs, but that concern is misplaced. The real risk is failing to use AI strategically,” said Elizabeth Maxson, Chief Marketing Officer, Contentful. “When marketers invest in the right tools that support their teams’ daily work and prioritize marketing talent that blends creativity with analytics, that’s when AI stops being hype and starts delivering meaningful results.”

“Marketing is a deeply creative industry, but there is an urgent need for marketers to start thinking more like engineers in order to keep pace with the rise in AI,” said Alice McKown, Publisher, The Atlantic. “Tomorrow’s most valuable marketing leaders won’t be defined as creative or analytical. They’ll be both.”

Key Findings from the Report

Evidence-based creativity is the new marketing superpower — and organizations are investing to get them there.

- The marketing skills that matter most today are data analysis and interpretation (46%) and digital experience design (40%), followed by personalization strategy (37%), and writing for AI tools (37%).

- 33% of marketers rank campaign testing and optimization as a top skill — reflecting a shift toward data-informed creative instincts.

- 45% of organizations are already offering AI training, a clear marker of organizational maturity.

The “Optimism-Execution Gap” reflects the chasm between AI’s potential and reality; ROI is still a work in progress.

- AI investment is a priority for marketers, with 74% investing in the technology and 34% allocating at least $500k toward AI marketing tools or initiatives over the next 12-36 months.

- Despite the investment, two-thirds of marketers say their current marketing technology stack isn’t helping them do more with fewer resources (yet).

- 89% of marketing teams are already using AI tools, but only 18% say it has reduced their reliance on developers or data teams.

AI’s key opportunities and challenges differ by region, with Europeans prioritizing compliance and Americans focusing on rapid experimentation.

- EMEA marketers adopt a methodical, compliance-ready approach, with 58% selectively testing AI tools under a defined plan. Nearly a third (32%) emphasize governance skills such as brand voice, compliance and quality standards.

- U.S. marketers emphasize experimentation and rapid testing, with 37% focusing on campaign optimization (vs. 26% in EMEA). U.S. teams measure success by high content quality (45%) and flexibility (39%), while EMEA teams lean toward operational excellence and speed (43%).

To read the full report, visit: https://www.theatlantic.com/sponsored/contentful-2025/making-marketers-more-human/4024/

About the Report

Research from the report was conducted by Contentful in collaboration with Atlantic Insights, and included three primary components:

- Quantitative survey – 425 marketing decision-makers across industries, company sizes, and regions, executed by Cint.

- Diary studies – Ten-day live user testing with marketing professionals using AI tools in their real workflows, executed by Dscout. Participants completed eight activities, including content creation, campaign optimization, translation/localization, personalization, and A/B testing, while recording their screens and providing commentary.

- Subject matter expert (SME) interviews – In-depth interviews with Contentful executives, team leads, and partner organizations to contextualize quantitative findings and capture emerging best practices.

All survey data was collected and analyzed using advanced statistical methods to ensure reliability and significance of findings.

About Contentful

Contentful is a leading digital experience platform that helps modern businesses meet the growing demand for engaging, personalized content at scale. By blending composability with native AI capabilities, Contentful enables dynamic personalization, automated content delivery, and real-time experimentation, powering next-generation digital experiences across brands, regions, and channels for more than 4,200 organizations worldwide. For more information, visit www.contentful.com. Contentful, the Contentful logo, and other trademarks listed here are registered trademarks of Contentful Inc., Contentful GmbH and/or its affiliates in the United States and other countries. Other names may be trademarks of their respective owners.

About Atlantic Insights

Atlantic Insights is the marketing research division of The Atlantic, with custom and co-branded research experience spanning industries and sectors ranging across finance, luxury, technology, healthcare, and small business.

Study reveals why humans adapt better than AI

Humans adapt to new situations through abstraction, while AI relies on statistical or rule-based methods, limiting flexibility in unfamiliar scenarios.

A new interdisciplinary study from Bielefeld University and other leading institutions explores why humans excel at adapting to new situations while AI systems often struggle. Researchers found humans generalise through abstraction and concepts, while AI relies on statistical or rule-based methods.

The study proposes a framework to align human and AI reasoning, defining generalisation, how it works, and how it can be assessed. Experts say differences in generalisation limit AI flexibility and stress the need for human-centred design in medicine, transport, and decision-making.

Researchers collaborated across more than 20 institutions, including Bielefeld, Bamberg, Amsterdam, and Oxford, under the SAIL project. The initiative aims to develop AI systems that are sustainable, transparent, and better able to support human values and decision-making.

Interdisciplinary insights may guide the responsible use of AI in human-AI teams, ensuring machines complement rather than disrupt human judgement.

The findings underline the importance of bridging cognitive science and AI research to foster more adaptable, trustworthy, and human-aligned AI systems capable of tackling complex, real-world challenges.
